Managing Drive Failures with AN!CDB


| Note: At this time, only LSI-based controllers are supported. Please see this section of the AN!Cluster Tutorial 2 for required node configuration. |

The AN!CDB dashboard supports basic drive management for nodes using LSI-based RAID controllers. This covers all Fujitsu servers with hardware RAID.

This guide will show you how to handle a few common storage management tasks easily and quickly.

Starting the Storage Manager

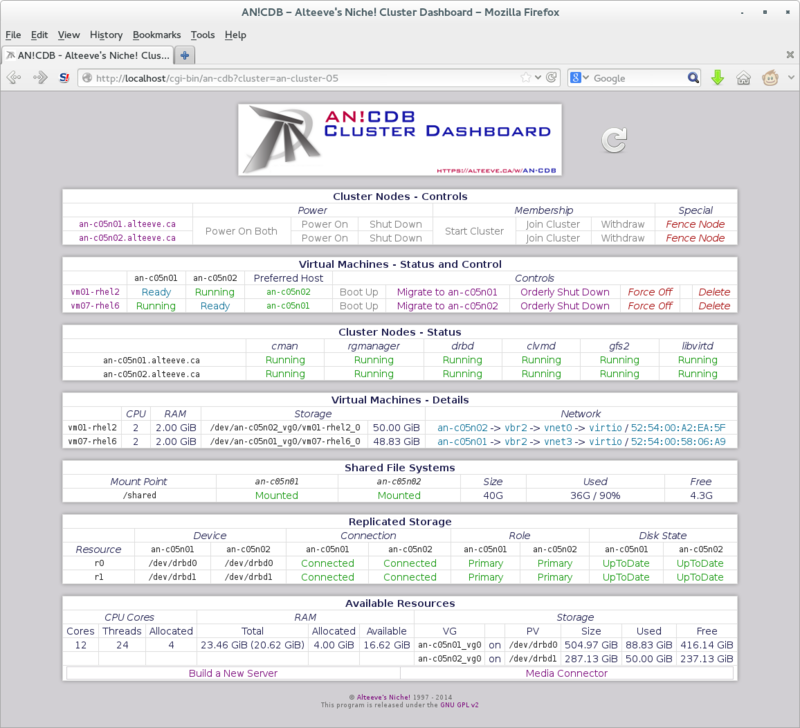

From the main AN!CDB page, under the "Cluster Nodes - Control" window, click on the name of the node you wish to manage.

This will open a new tab (or window) showing the current configuration and state of the node's storage.

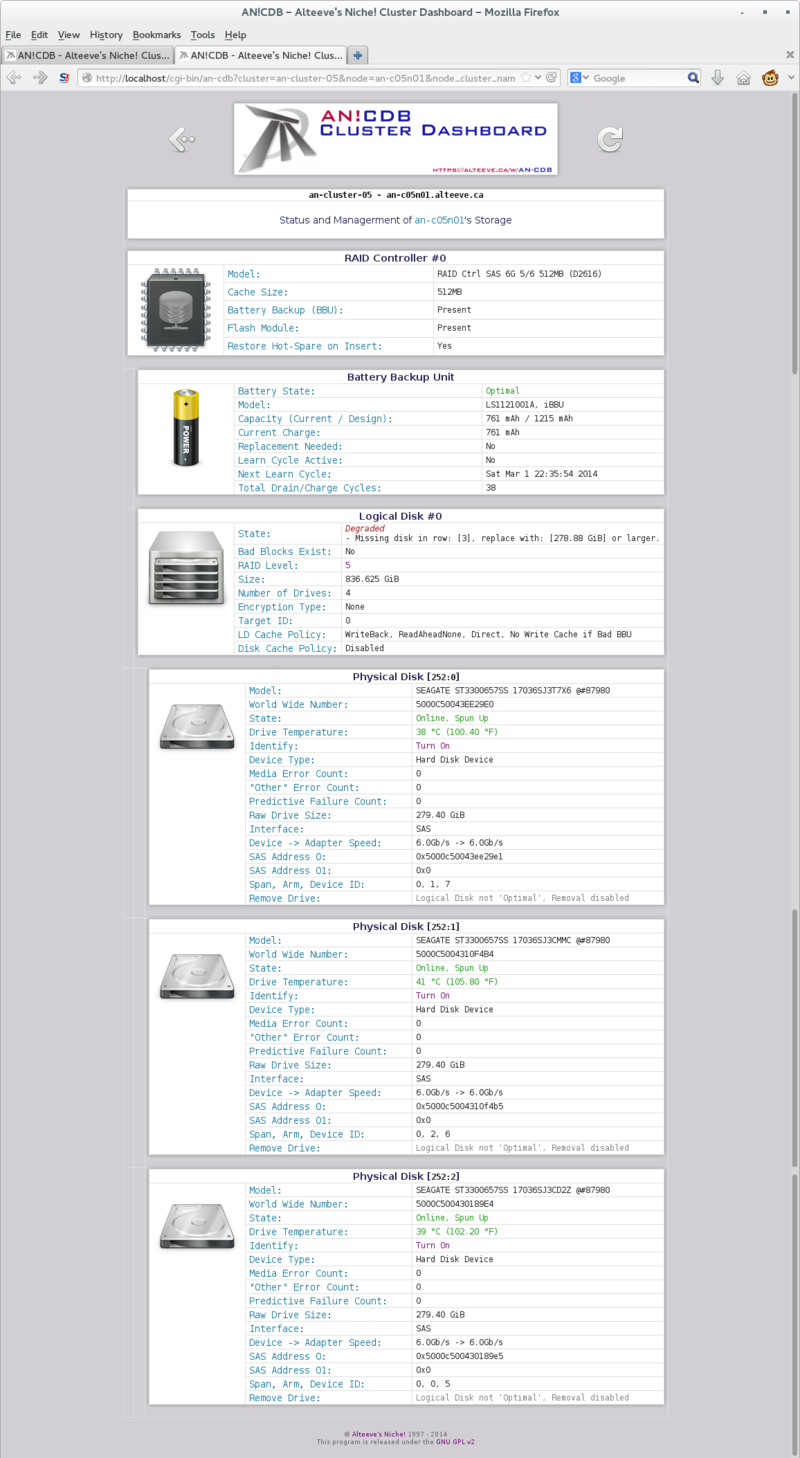

Storage Display Window

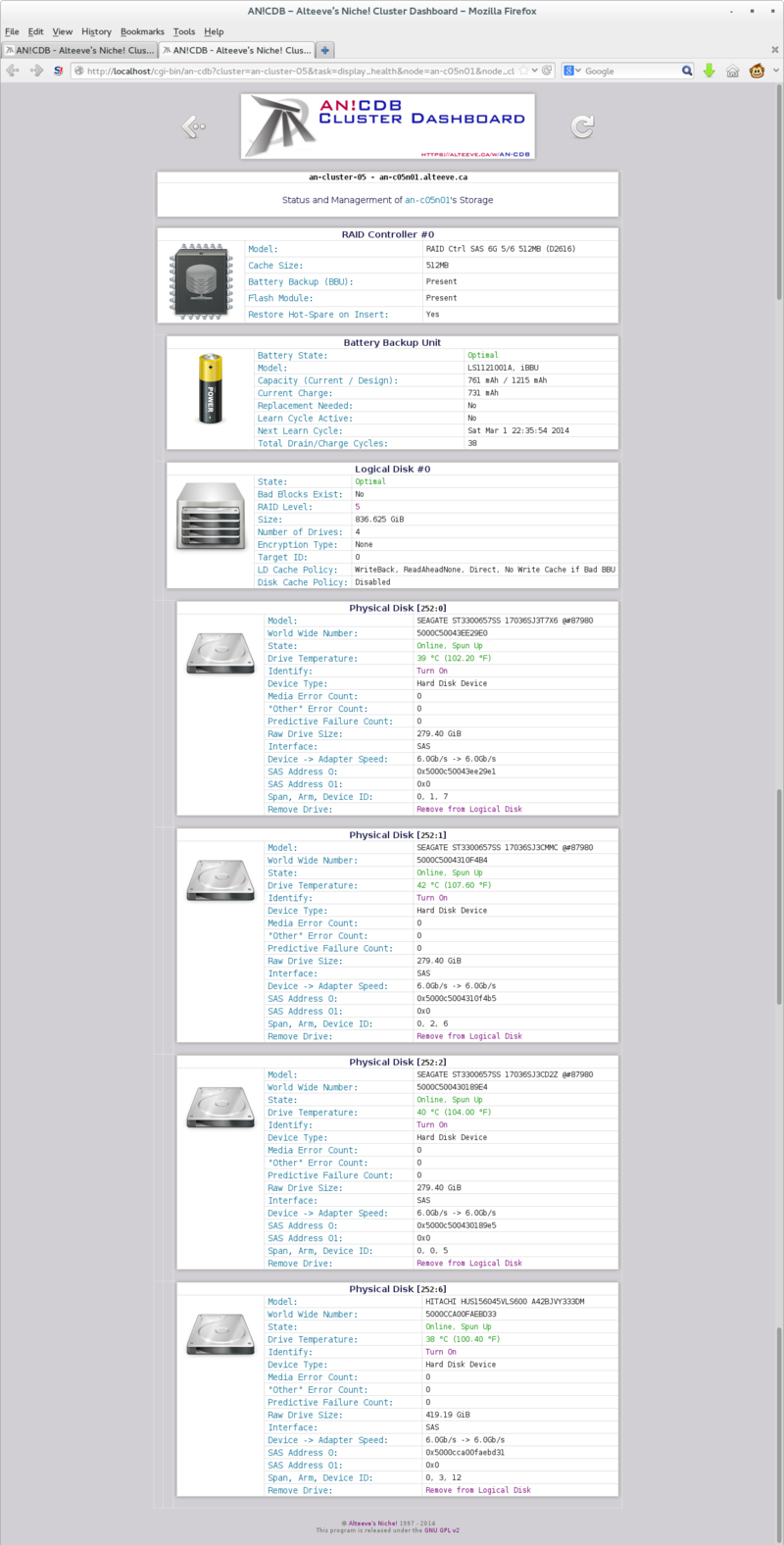

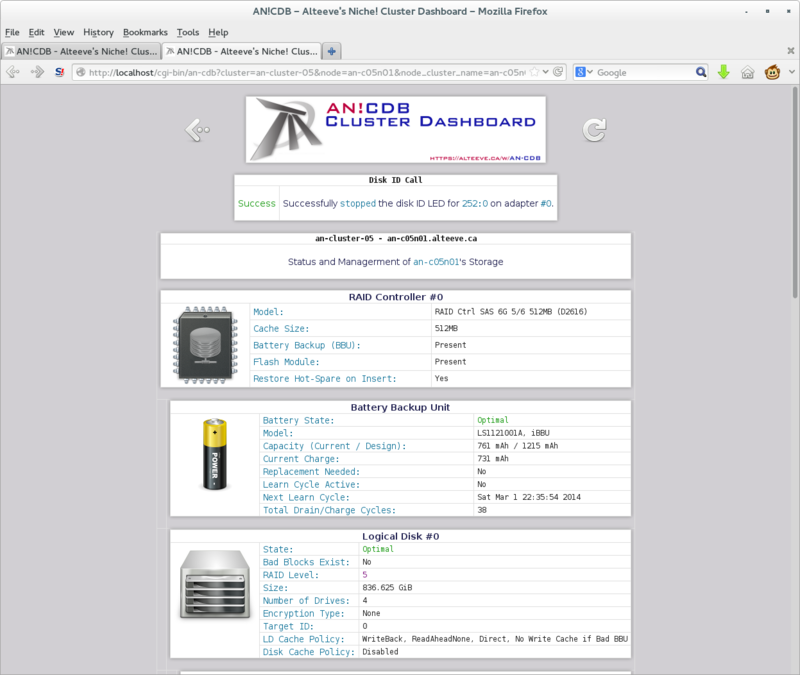

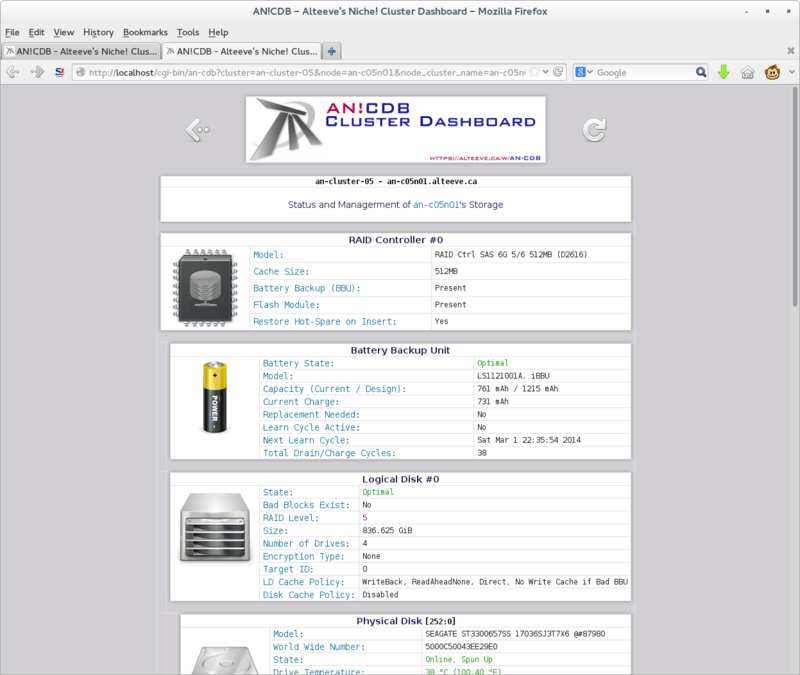

The storage display window shows your storage controller(s), their auxiliary power supply for write-back caching if installed, the logical disk(s) and each logical disk's constituent drives.

The auxiliary power and logical disks will be slightly indented under their parent controller.

The physical disks associated with a given logical disk are further indented to show their association.

In this example, we have only one RAID controller. It has an auxiliary power pack, and a single logical volume has been created.

The logical volume is a RAID level 5 array with four physical disks.
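The parent/child layout described above can be pictured as a small nested data structure. The following is a minimal Python sketch of that idea only; the class names, field names, slot numbers and state strings are hypothetical and are not taken from AN!CDB itself.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PhysicalDisk:
    address: str  # illustrative name, e.g. "252:0"
    state: str    # illustrative state string


@dataclass
class LogicalDisk:
    number: int
    raid_level: int
    state: str    # e.g. "Optimal" or "Degraded"
    disks: List[PhysicalDisk] = field(default_factory=list)


@dataclass
class Controller:
    name: str
    aux_power: Optional[str]  # None when no power pack is installed
    logical_disks: List[LogicalDisk] = field(default_factory=list)


def render(controller: Controller) -> List[str]:
    """Return display lines, indenting each child under its parent."""
    lines = [controller.name]
    if controller.aux_power is not None:
        lines.append("  Auxiliary power: " + controller.aux_power)
    for ld in controller.logical_disks:
        lines.append(f"  Logical Disk #{ld.number}: RAID {ld.raid_level}, {ld.state}")
        for pd in ld.disks:
            lines.append(f"    Physical Disk {pd.address}: {pd.state}")
    return lines


# The example from this guide: one controller, one power pack,
# and one RAID level 5 logical disk made of four physical disks.
example = Controller(
    name="RAID Controller 0",
    aux_power="Present",
    logical_disks=[
        LogicalDisk(
            number=0,
            raid_level=5,
            state="Optimal",
            disks=[PhysicalDisk(f"252:{slot}", "Online, Spun Up") for slot in range(4)],
        )
    ],
)

for line in render(example):
    print(line)
```

Each level of indentation in the printed output corresponds to one level of ownership: controller, then logical disk, then physical disk.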

Controlling the Physical Disk Identification Light

The first task we will explore is using identification lights to match a physical disk listing with a physical drive in a node.

If a drive fails completely, its fault light will light up, making the failed drive easy to find. However, the AN!CDB alert system can notify us of pending failures. In these cases, the drive's fault light will not illuminate. Therefore, it becomes critical to identify the failing drive. Removing the wrong drive, when another drive is unhealthy, may well leave your node non-operational.

That's no fun.

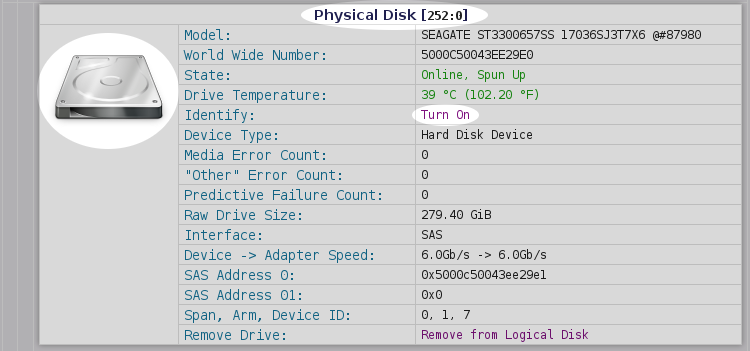

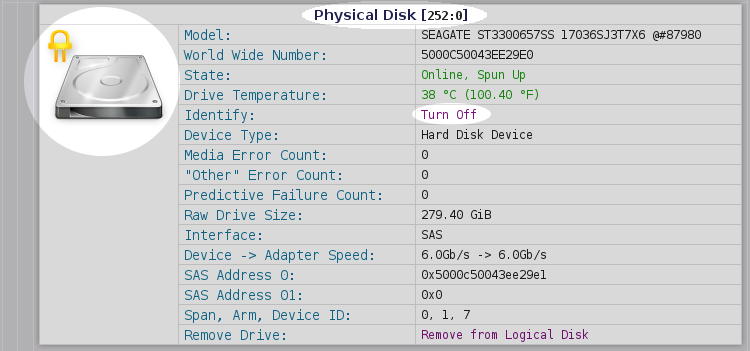

Each physical drive will have a button labelled either Turn On or Turn Off, depending on the current state of the identification LED.

Illuminating a Drive's ID Light

Let's illuminate!

We will identify the drive with the somewhat-cryptic name '252:0'.
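On LSI-based controllers, names of this form are commonly an enclosure ID and a slot number separated by a colon. Assuming that convention applies to the names shown here, the sketch below splits a name into its two parts. The `DriveAddress` type and `parse_drive_address` function are hypothetical helpers, not part of AN!CDB.

```python
from typing import NamedTuple


class DriveAddress(NamedTuple):
    enclosure: int  # assumed meaning of the first number
    slot: int       # assumed meaning of the second number


def parse_drive_address(name: str) -> DriveAddress:
    """Split a name such as '252:0' or '[252:6]' into its two numbers."""
    cleaned = name.strip().strip("[]")
    enclosure, sep, slot = cleaned.partition(":")
    if not sep or not enclosure.isdigit() or not slot.isdigit():
        raise ValueError(f"not a drive address: {name!r}")
    return DriveAddress(int(enclosure), int(slot))


print(parse_drive_address("252:0"))
print(parse_drive_address("[252:6]"))
```

Under this reading, the second number is the one that corresponds to a bay on the front of the node.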

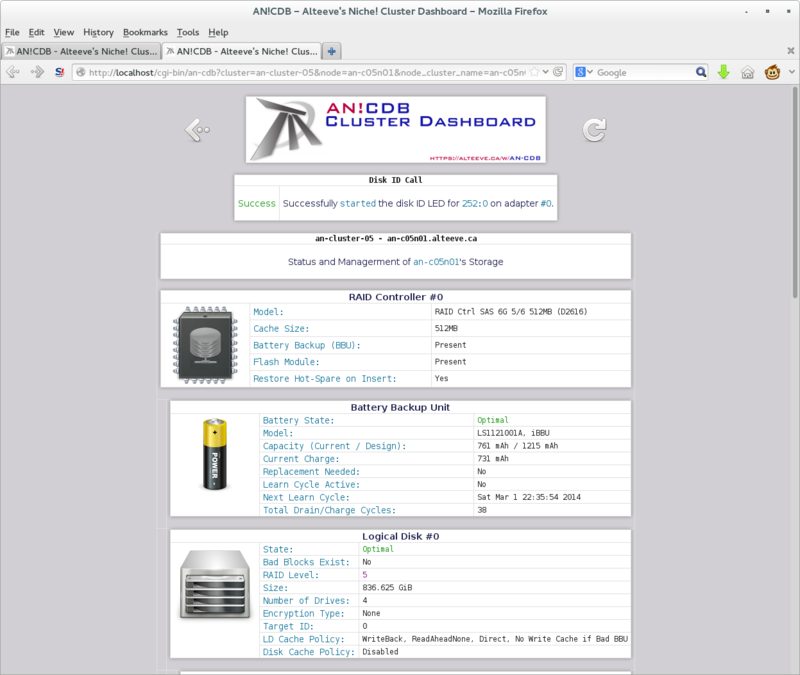

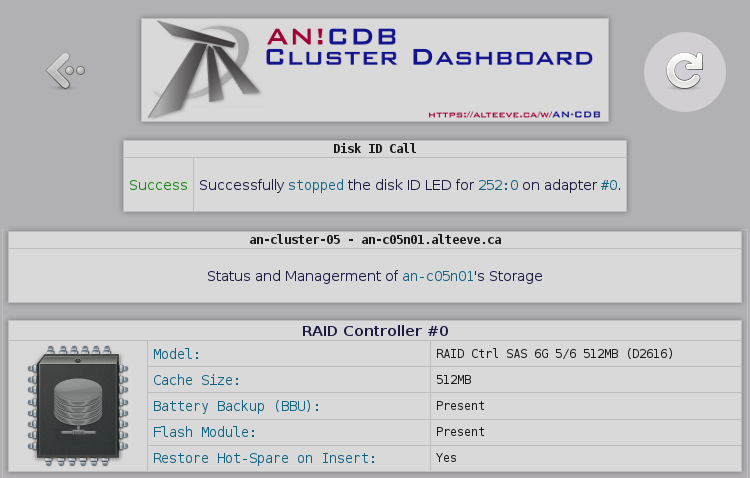

The storage page will reload, indicating whether the command succeeded or not.

If you now look at the front of your node, you should see one of the drives lit up.

Most excellent.

Shutting off a Drive's ID Light

To turn the ID light off, simply click on the drive's Turn Off button.

As before, the success or failure will be reported.

Refreshing the Storage Page

After issuing a command to the storage manager, please do not use your browser's "refresh" function. It is always better to click on the reload icon.

This will reload the page with the most up to date state of the storage in your node.

Failure and Recovery

There are many ways for hard drives to fail.

In this section, we're going to sort-of simulate four failures:

- Drive vanishes entirely

- Drive is failed but still online

- Good drive was ejected by accident, recovering

- Drive has not yet failed, but may soon

The first three will be combined into one exercise. We'll simply eject a drive while it's running, causing it to disappear and the array to degrade. If a drive vanished like this in real life, you would simply remove it and insert a new drive.

For the second case, we'll re-insert the ejected drive. The drive will be listed as failed ("Unconfigured(bad)"). We'll tell the controller to "spin down" the drive, making it safe to remove. In the real world, we would then eject it and install a new drive.

In the third case, we will again eject the drive, and then re-insert it. In this case, we won't spin down the drive, but instead mark it as healthy again.

Lastly, we will discuss predictive failure. In these cases, the drive has not failed yet, but confidence in the drive has been lost, so pre-failure replacement will be done.

Drive Vanishes Entirely

If a drive fails catastrophically, say the controller on the drive fails, you may find that the drive simply no longer appears in the list of physical disks under the logical disk.

Likewise, if the disk is physically ejected (by accident or otherwise), the same result will be seen.

The logical drive will list as 'Degraded', but none of the disks will show errors.

If you look under Logical Disk #X, you will see the number of physical disks in the logical disk beside the 'Number of Drives'. When you count the actual number of disks under the logical drive though, you will see the number of displayed disks is smaller.

In this situation, the best thing to do is locate the failed disk. The 'Failed' LED should be illuminated on the bay with the failed or missing disk. If it is not lit, you may need to light up the ID LED on the remaining good disks and use a simple process of elimination to find the dead drive.
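The count comparison and the process of elimination can be sketched in a few lines of Python. This is only an illustration: `find_missing` is a hypothetical helper, and the bay names are made up for the example.

```python
from typing import List, Tuple


def find_missing(number_of_drives: int,
                 displayed: List[str],
                 populated_bays: List[str]) -> Tuple[int, List[str]]:
    """Compare 'Number of Drives' with the disks actually listed.

    Returns how many disks are missing from the logical disk, plus the
    bays that hold a drive but are not listed (the elimination step).
    """
    missing = max(number_of_drives - len(displayed), 0)
    candidates = sorted(set(populated_bays) - set(displayed))
    return missing, candidates


# 'Number of Drives' says 4, but only three disks are displayed.
displayed = ["252:0", "252:1", "252:3"]
bays = ["252:0", "252:1", "252:2", "252:3"]
print(find_missing(4, displayed, bays))
```

Lighting the ID LED on each displayed disk in turn is the physical equivalent of the set difference: the bay that never lights up holds the dead drive.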

Once you know which disk is dead, remove it and install a replacement disk. If 'Restore Hot-Spare on Insert' is set to 'Yes', then the drive should immediately be added to the logical disk and the rebuild process should start.

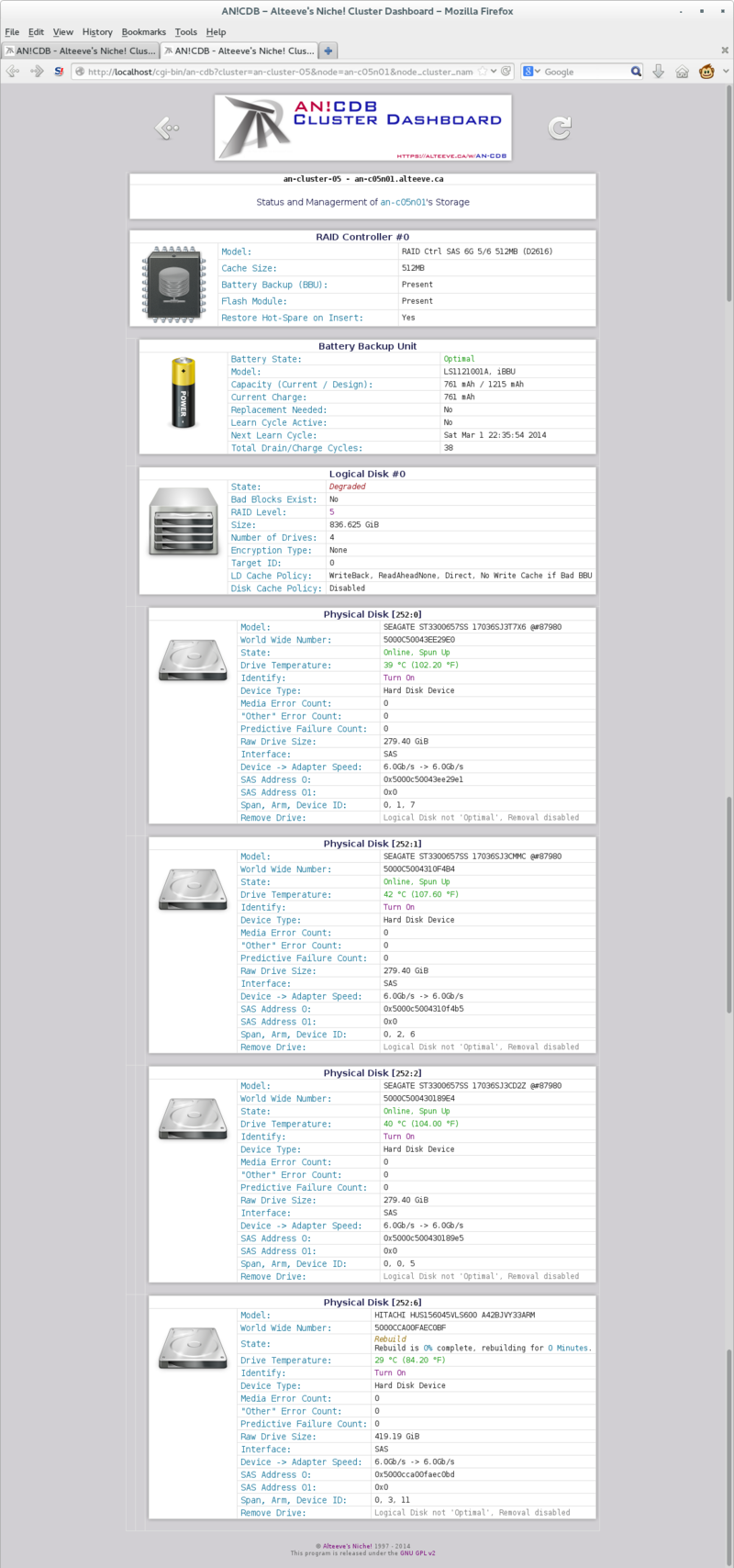

Inserting a Replacement Physical Disk

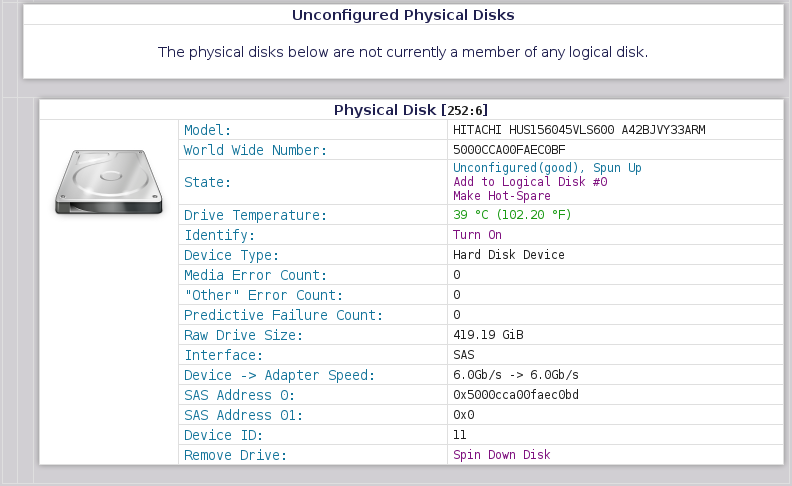

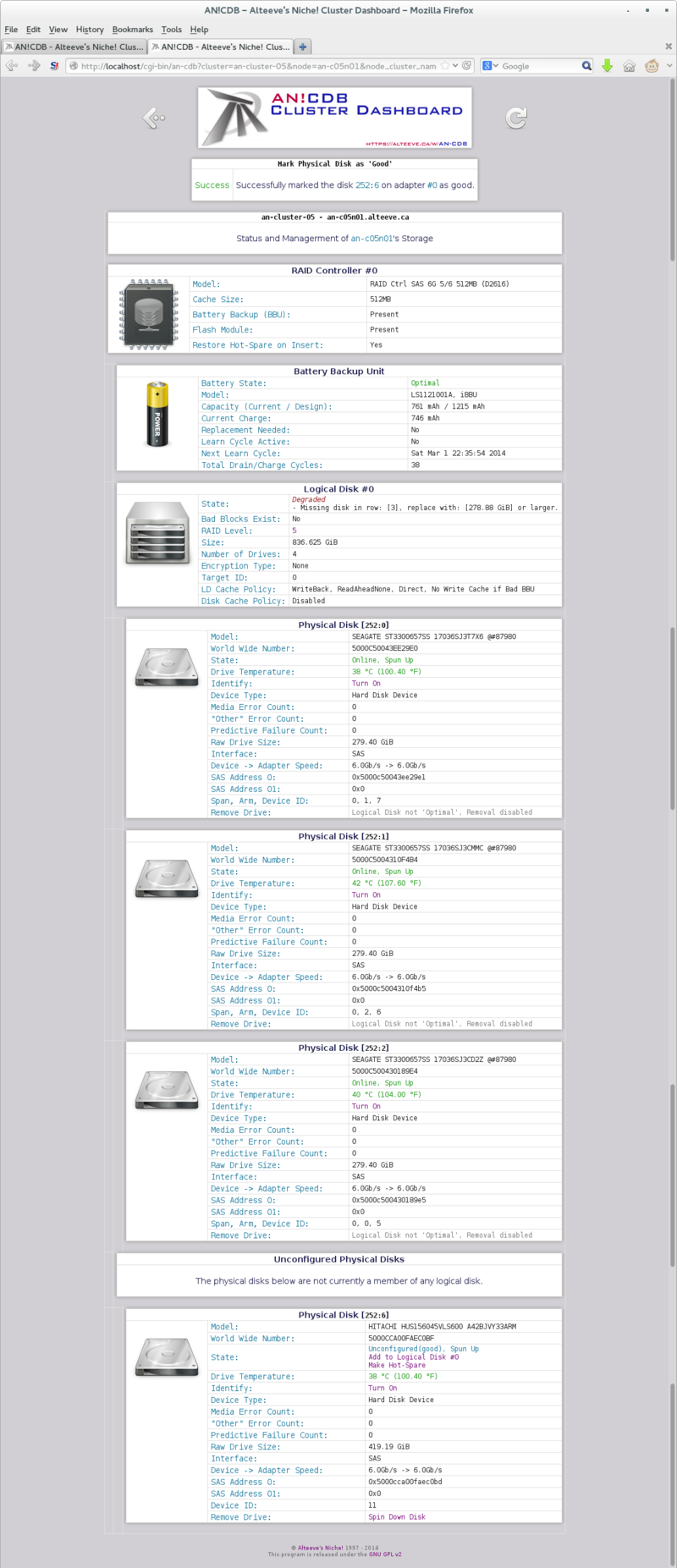

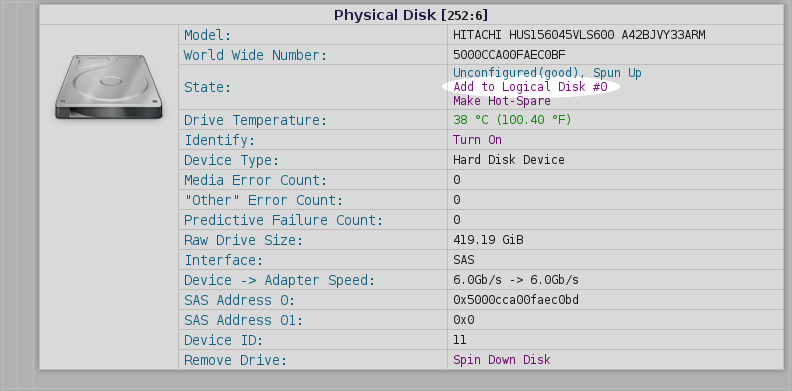

If the replacement drive doesn't automatically get added to the logical disk, you can add the new physical disk to the Degraded logical disk using the storage manager. The new disk will come up as 'Unconfigured(good), Spun Up'. Directly beneath that, you will see the option to 'Add to Logical Disk #X', where X is the logical disk number that is degraded.

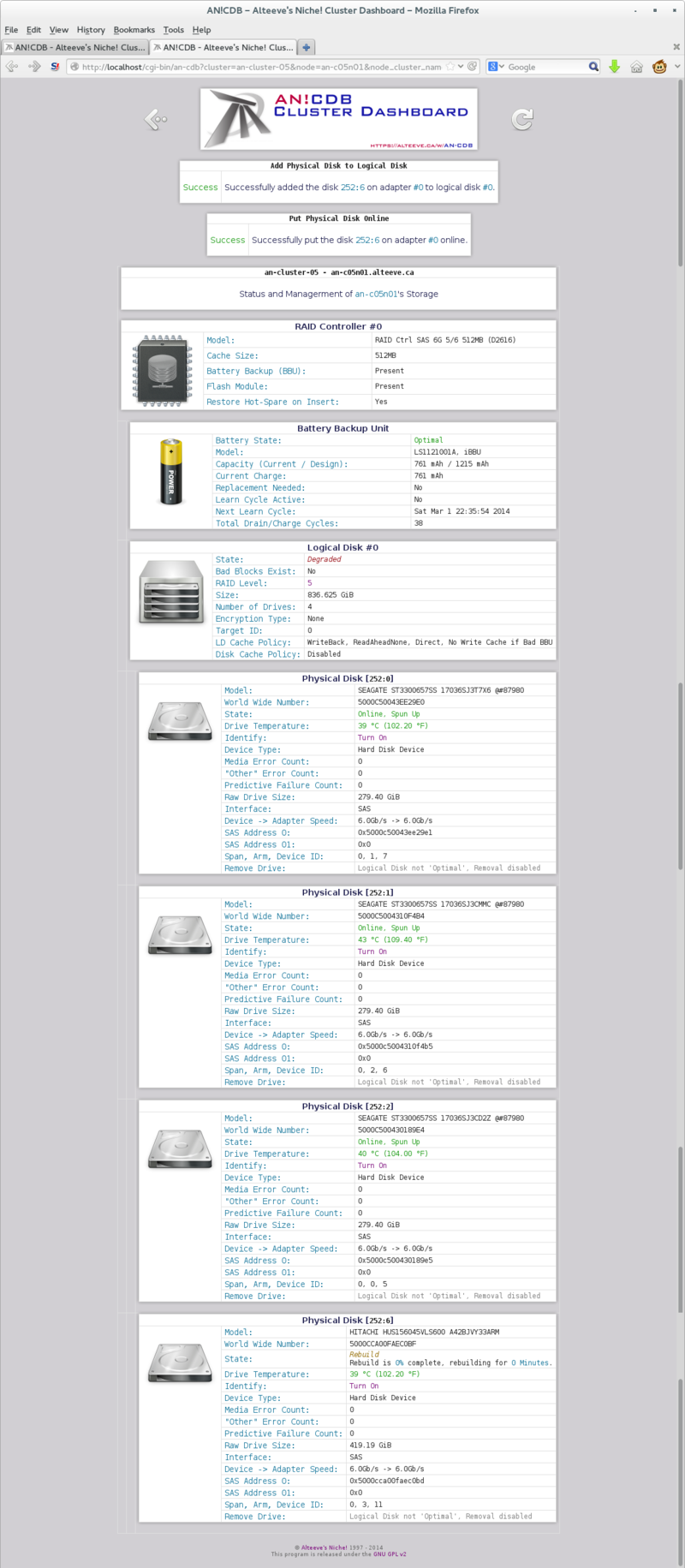

Click on 'Add to Logical Disk #X' and the drive will be added to the logical disk. The rebuild process will start immediately.

Drive is Failed but Still Online

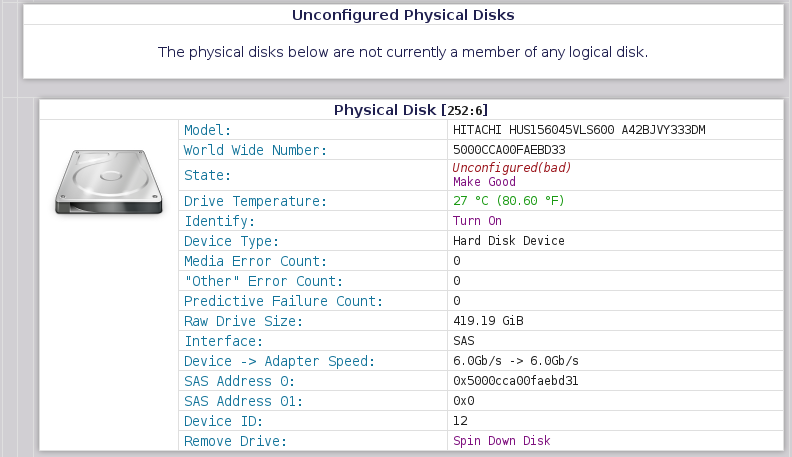

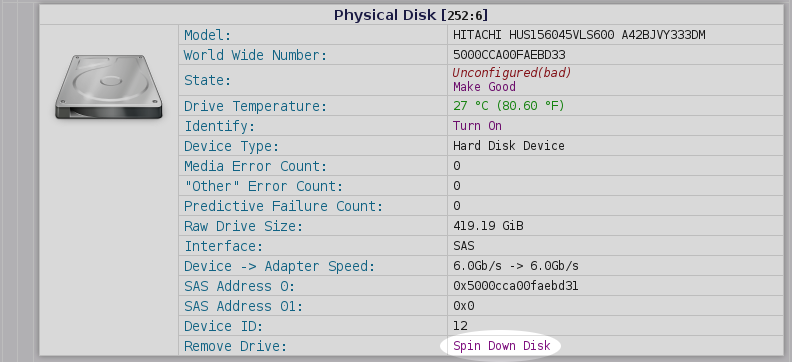

If a drive has failed, but its controller is still working, the drive should appear as 'Unconfigured(bad)'.

This will be one of the most common failures you will have to deal with.

You will generally have two options here.

- Prepare it for removal

- Try to make the drive "good" again

Preparing a Failed Disk for Removal

A failed disk may still be "spun up", meaning that its platters are rotating. Moving the disk before the drive has spun down completely could cause a head-crash. Further, the electrical connection to the drive will still be active, making it possible to cause a short when the drive is mechanically ejected, which could damage the drive's controller, the backplane or the RAID controller itself.

To avoid this risk, before a drive is removed, we must prepare it for removal. This will spin-down the disk and make it safe to eject with minimal risk of electrical shorts.

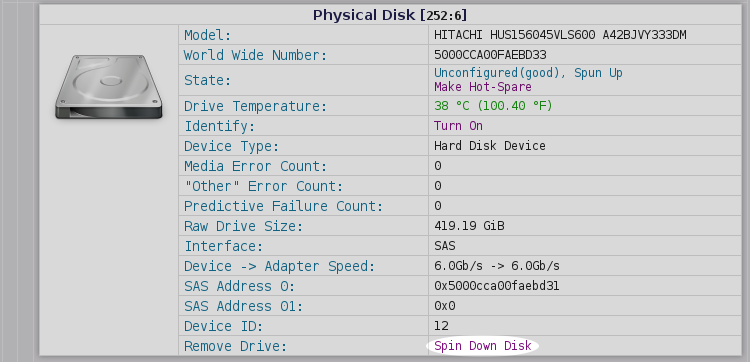

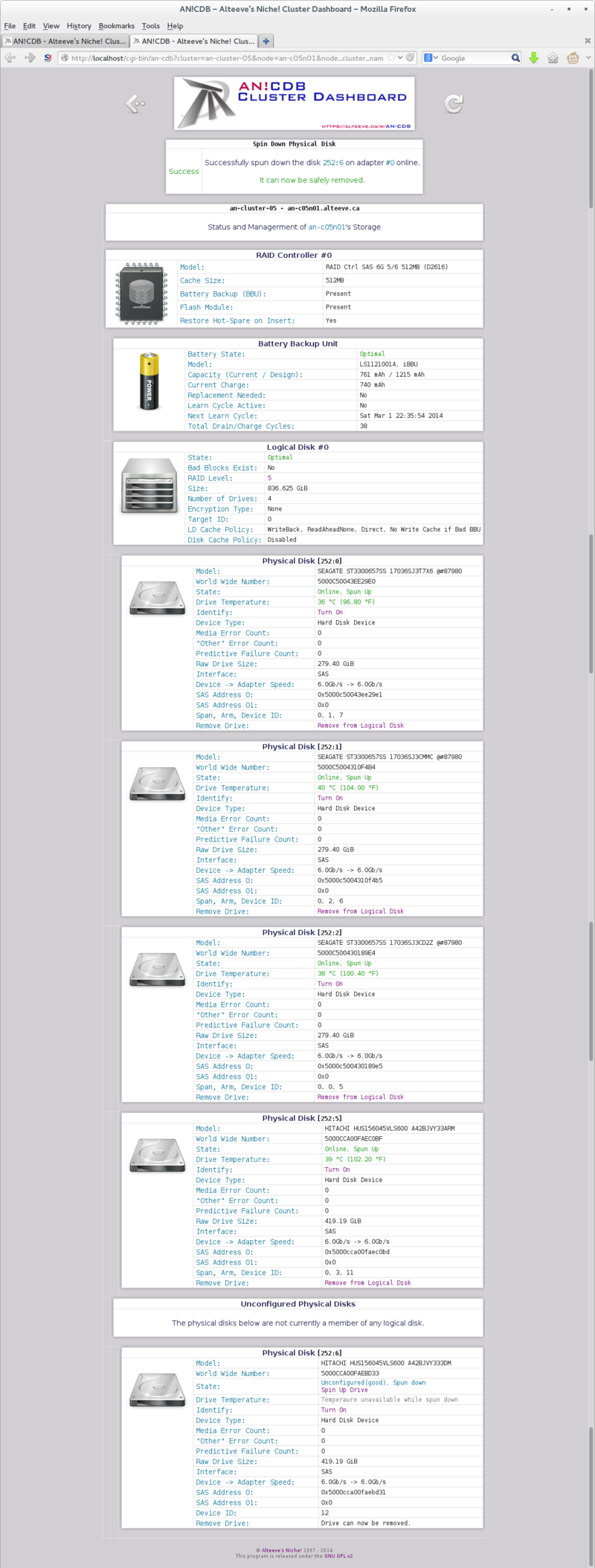

To prepare it for removal, you will click on 'Spin Down Disk'.

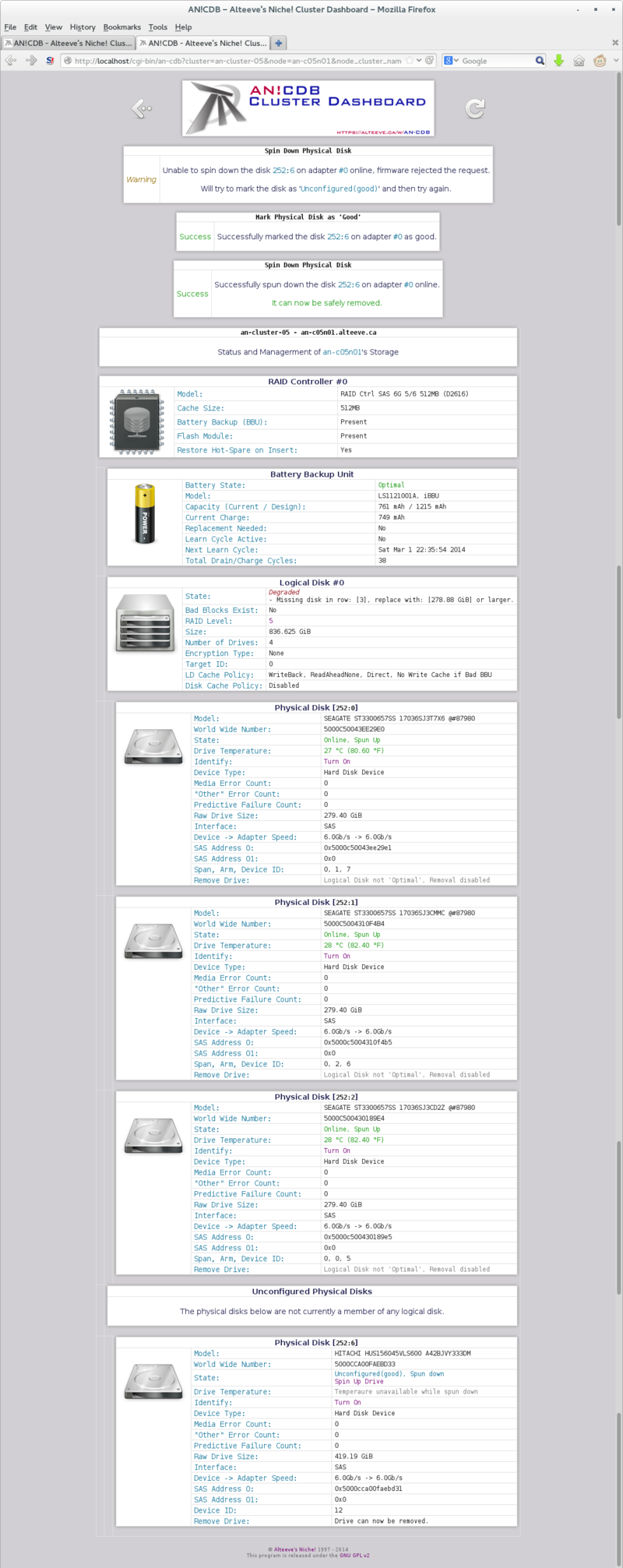

| Note: In the example below, AN!CDB's first attempt to spin down the drive failed. So it attempted, successfully, to mark the disk as 'good' and then tried to spin it down a second time, which worked. Be sure to physically record the disk as failed! |

Once spun down, the physical disk will be shown to be safe to remove.
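The fallback behaviour described in the note (spin down, and if that fails, mark the disk good and try once more) can be sketched as follows. This is not AN!CDB's actual code; `spin_down_with_fallback` and the two callables passed to it are hypothetical stand-ins for whatever really talks to the controller.

```python
from typing import Callable


def spin_down_with_fallback(spin_down: Callable[[], bool],
                            make_good: Callable[[], bool]) -> bool:
    """Try to spin the disk down; on failure, mark it good and retry once."""
    if spin_down():
        return True
    if not make_good():
        # Could not mark the disk good, so a second attempt is pointless.
        return False
    return spin_down()


# Simulate the case in the note: the first attempt fails, the second works.
attempts = iter([False, True])
result = spin_down_with_fallback(lambda: next(attempts), lambda: True)
print(result)
```

Because the disk passes through a "good" state on the way, nothing in the controller remembers that it failed, which is why the note asks you to physically record the disk as failed.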

Once you insert the new disk, it should automatically be added to the logical disk and the rebuild process should start. If it doesn't, please see "Inserting a Replacement Physical Disk" above.

Attempting to Recover a Failed Disk

| Warning: If you do not know why the drive failed, replace it. Even if it appears to recover, it will likely fail again soon. |

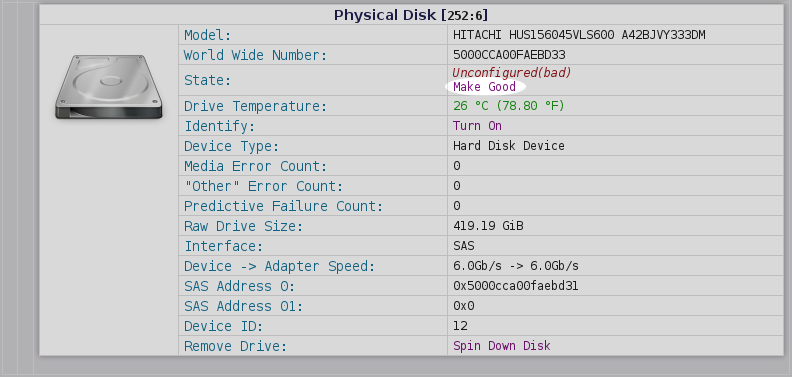

Depending on why the physical disk failed, it may be possible to mark it as "good" again. This should only be done in two cases:

- You know why the disk was flagged as failed and you trust it is actually healthy

- To try and restore redundancy to the logical disk while you wait for the replacement disk to arrive

Underneath the 'Unconfigured(bad)' disk state will be the option 'Make good'.

If this works, the disk state will change to 'Unconfigured(good), Spun Up'. Below this, one of two options will be available:

- If any logical disk is degraded, 'Add to Logical Disk #X' will be available.

- If every logical disk is 'Optimal', you will be able to mark the disk as a 'Hot Spare'.

In this section, we will add the recovered physical disk to the degraded logical disk. Managing hot-spares is covered further below. Note, though, that if you mark a disk as a hot-spare while a logical disk is degraded, it will automatically be added to that degraded logical disk and the rebuild will begin.

If there are multiple degraded logical disks, you will see multiple 'Add to Logical Disk #X' options, one for each degraded logical disk. In our case, there is just one logical disk, so there is just one option.
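The rule for which options appear under a freshly recovered disk can be summarized in a short Python sketch. It covers only the two options described in this section; `options_after_make_good` is a hypothetical function, and the mapping of logical disk numbers to states is an assumed input format.

```python
from typing import Dict, List


def options_after_make_good(logical_disks: Dict[int, str]) -> List[str]:
    """Options shown under an 'Unconfigured(good), Spun Up' disk.

    logical_disks maps a logical disk number to its state string.
    """
    degraded = sorted(n for n, state in logical_disks.items() if state == "Degraded")
    if degraded:
        # One 'Add' option per degraded logical disk.
        return [f"Add to Logical Disk #{n}" for n in degraded]
    # Nothing needs rebuilding, so the disk can only become a spare.
    return ["Make Hot-Spare"]


print(options_after_make_good({0: "Degraded"}))
print(options_after_make_good({0: "Optimal"}))
print(options_after_make_good({0: "Degraded", 1: "Optimal", 2: "Degraded"}))
```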

Once you click on 'Add to Logical Disk #X', the physical disk will appear under the logical disk and rebuild will begin automatically.

Done.

Recovering from Accidental Ejection of Good Drive

There are numerous reasons why a perfectly good drive might get ejected from a node. Despite the risks described above, people often eject a running disk, perhaps not realizing the system is running, or out of a desire to simulate a failure. In any case, it happens and it is important to know how to recover from it.

As we discussed above, ejecting a disk will cause it to vanish from the list of physical disks and the logical disk it was in will become degraded.

Once re-inserted, the disk will be flagged as 'Unconfigured(bad)'. In this case, you know the disk is fine, so marking it as good after reinserting it is fine.

Please see "Attempting to Recover a Failed Disk" above to finish recovering the physical disk.

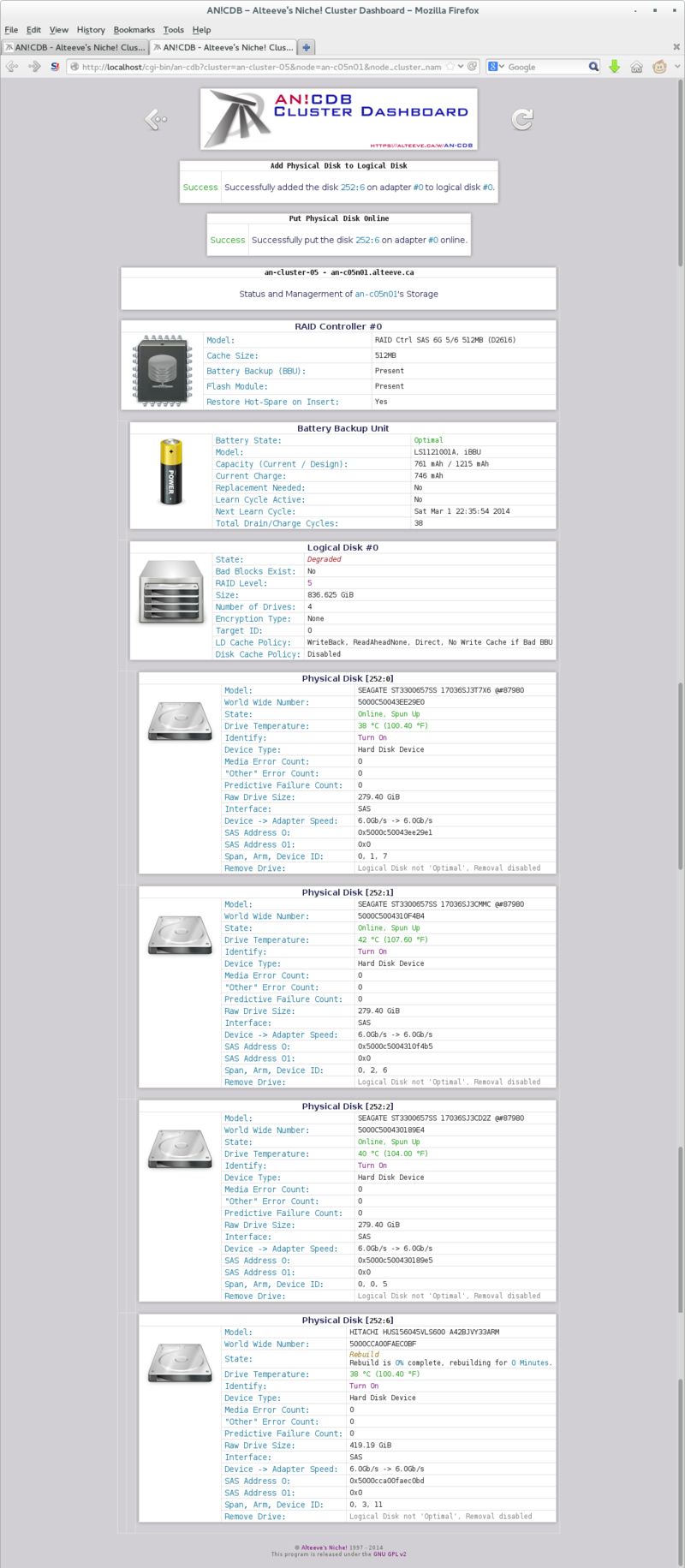

Pre-Failure Drive Replacement

With the AN!CDB monitoring program, you may occasionally get an alert like:

Still healthy drive:

RAID 0's Physical Disk 5's "Other Error Count" has changed!

0 -> 1

This is not always a concern. For example, every drive has an "unrecoverable read error (URE)" rating, usually 1 in every 10^15 bits read for enterprise SAS drives. When the logical disk is healthy, an occasional read error is not a problem, as the read can be recovered from the other disks and the drive itself is still fine.
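To give a feel for how rare such an error is, here is a small worked calculation in Python. It assumes the rating is quoted per bit read (which is how drive vendors usually state it), that errors are independent, and that 1 TB means 10^12 bytes. The function name is hypothetical.

```python
import math


def probability_of_ure(bytes_read: float, bits_per_error: float = 1e15) -> float:
    """Chance of at least one unrecoverable read error while reading.

    Computes 1 - (1 - 1/bits_per_error) ** bits in a numerically
    stable way using log1p and expm1.
    """
    bits = bytes_read * 8
    return -math.expm1(bits * math.log1p(-1.0 / bits_per_error))


one_terabyte = 1e12  # bytes
print(f"{probability_of_ure(one_terabyte):.4%}")
```

Under these assumptions, reading a full terabyte carries a little under a 1% chance of hitting a single URE, so one isolated error over the life of a drive is within expectations.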

However, if we see a sudden spike in "Other Error Count", it may be a good idea to replace the drive.

A disk that may be failing and should be replaced:

RAID 0's Physical Disk 3's "Other Error Count" has changed!

0 -> 4

In this case, we see that four errors occurred at almost the same time. This causes concern and warrants a pre-failure replacement.
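The difference between the two alerts can be expressed as a simple check on the size of the jump. The sketch below is illustrative only: the threshold of 3 is an arbitrary assumption chosen to separate the two examples in this guide, and both function names are hypothetical.

```python
import re
from typing import Tuple

# Matches the change line of an alert, e.g. "0 -> 4".
CHANGE = re.compile(r"^\s*(\d+)\s*->\s*(\d+)\s*$")


def parse_change(line: str) -> Tuple[int, int]:
    """Extract the old and new counter values from a change line."""
    match = CHANGE.match(line)
    if match is None:
        raise ValueError(f"not a change line: {line!r}")
    return int(match.group(1)), int(match.group(2))


def is_sudden_spike(old: int, new: int, threshold: int = 3) -> bool:
    """True when the counter jumped by at least `threshold` in one alert."""
    return new - old >= threshold


for line in ("0 -> 1", "0 -> 4"):
    old, new = parse_change(line)
    print(line, "spike" if is_sudden_spike(old, new) else "probably fine")
```

A real policy would likely also consider how quickly repeated small changes arrive; that judgment is left to the administrator.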

In this case, you would leave the questionable disk in the logical disk until the replacement drive is in-hand. Once we're ready, we will remove the drive from the logical disk, which will leave it ready to eject, and then insert the replacement physical disk.

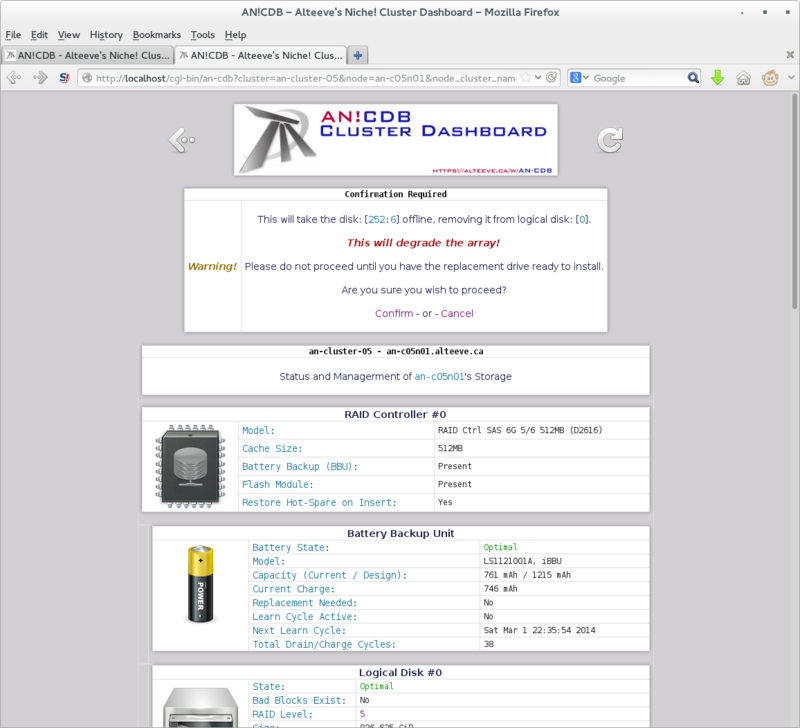

When you click on 'Remove from Logical Disk', you will be asked to confirm the action. You will also be warned that this action will degrade the logical disk. In this case, the replacement drive is ready to insert, so we are safe to proceed.

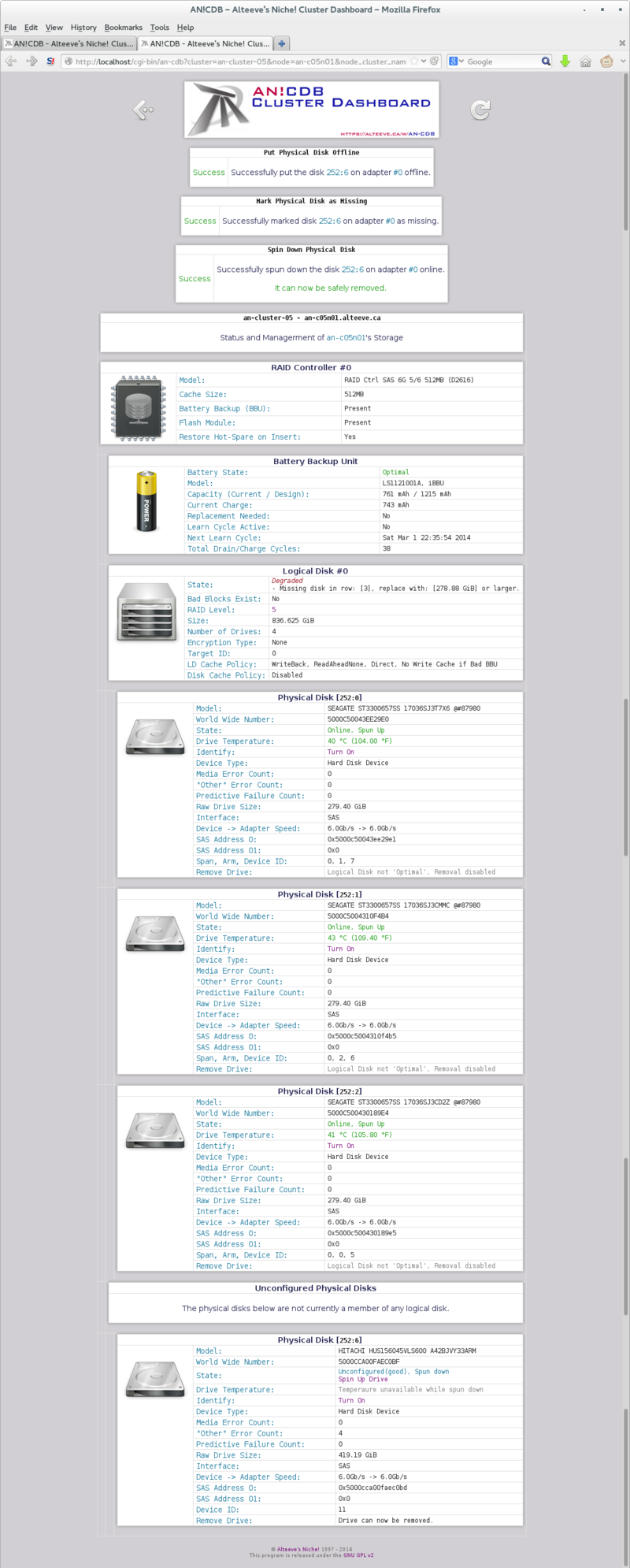

Click on 'Confirm' and the physical disk will be taken offline, marked as missing and then spun down. Once completed, you will be able to safely eject the physical disk and install the replacement drive.

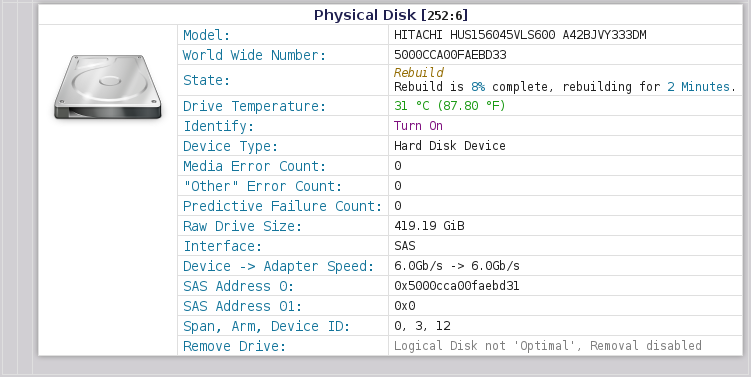

When you insert the replacement disk, it should automatically be added to the logical disk and the rebuild should begin.

| Note: If the freshly inserted drive is not automatically added to the logical disk, please see the "Inserting a Replacement Physical Disk" section above. |

Done!

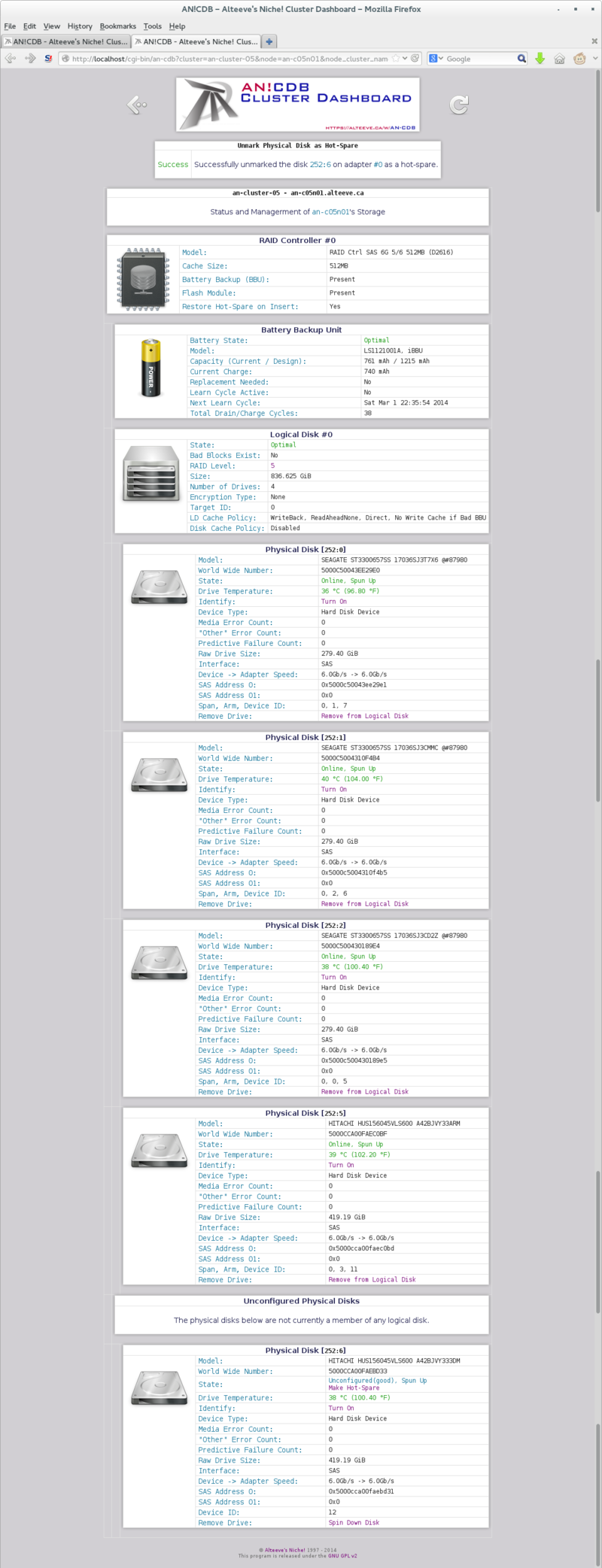

Managing Hot-Spares

In this final section, we will add a "hot-spare" drive to our node.

A "Hot-Spare" is an unused drive that the RAID controller knows can be used to immediately replace a failed drive. This is a popular option for people who want to return to a fully redundant state as soon after a failure as possible.

We will configure a hot-spare, show how it replaces a failed drive, and show how to unmark a drive as a hot-spare, in case the hot-spare itself goes bad.

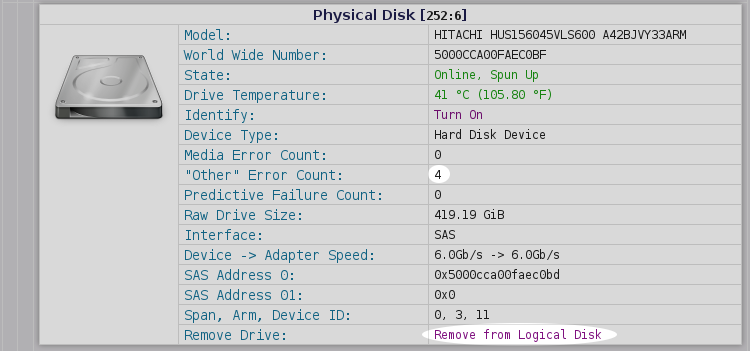

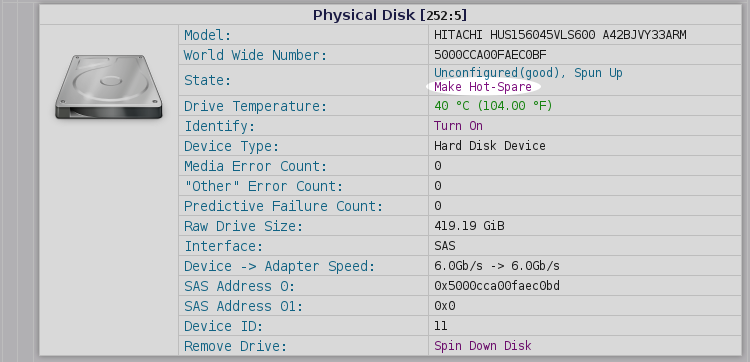

Creating a Hot-Spare

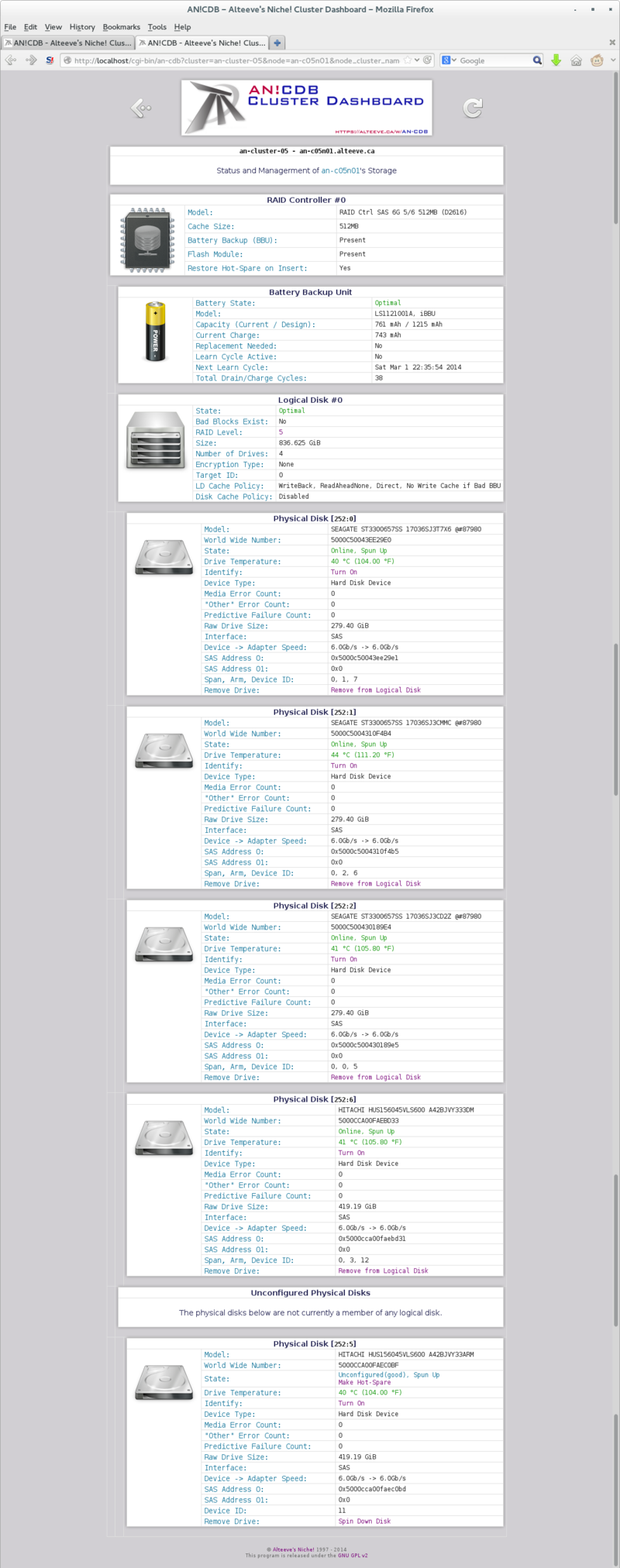

Up until this point, we've had four physical disks in our system. Here, we've inserted a fifth healthy disk. The logical disk was 'Optimal', so no default action was taken. Likewise, no option to add the physical disk to a logical disk is presented.

The one option that is presented, however, is 'Make Hot-Spare'.

Once marked as a hot-spare, the disk will be automatically used to replace a physical drive, should one of an equal or smaller size fail.

Done!

Now any disk failure will recover as fast as technically possible.
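The size rule for hot-spares (the spare covers a failed drive of equal or smaller size) amounts to a single comparison. The sketch below states it in Python; the function name and the drive sizes are hypothetical.

```python
def spare_can_replace(spare_size_gb: float, failed_size_gb: float) -> bool:
    """A spare is usable when the failed drive is the same size or smaller."""
    return failed_size_gb <= spare_size_gb


print(spare_can_replace(600, 600))  # same size
print(spare_can_replace(600, 300))  # failed drive is smaller
print(spare_can_replace(300, 600))  # failed drive is larger
```

In practice this means a hot-spare should be at least as large as the largest physical disk it is expected to cover.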

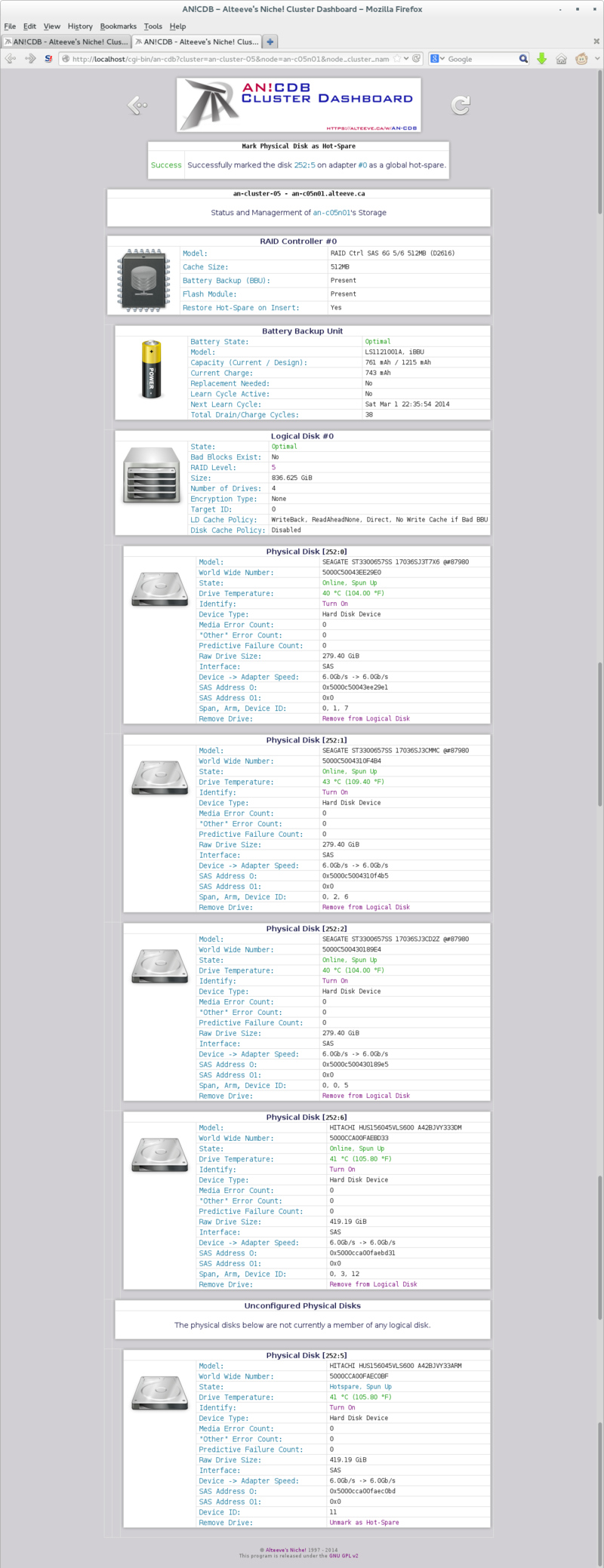

Example of a Hot-Spare Working

As we saw earlier, there are many ways for a logical disk to degrade. The hot-spare will be used automatically as soon as the array is degraded, regardless of the cause.

For this reason, we'll simply remove a physical disk currently in the logical disk. We should see immediately that the hot-spare has entered the array and rebuild has begun.

I will remove physical disk '[252:6]' from the array.

At this point, we could eject [252:6] or spin it back up. I know it's healthy, so I will spin it up and mark it as a hot spare in preparation for the next section.

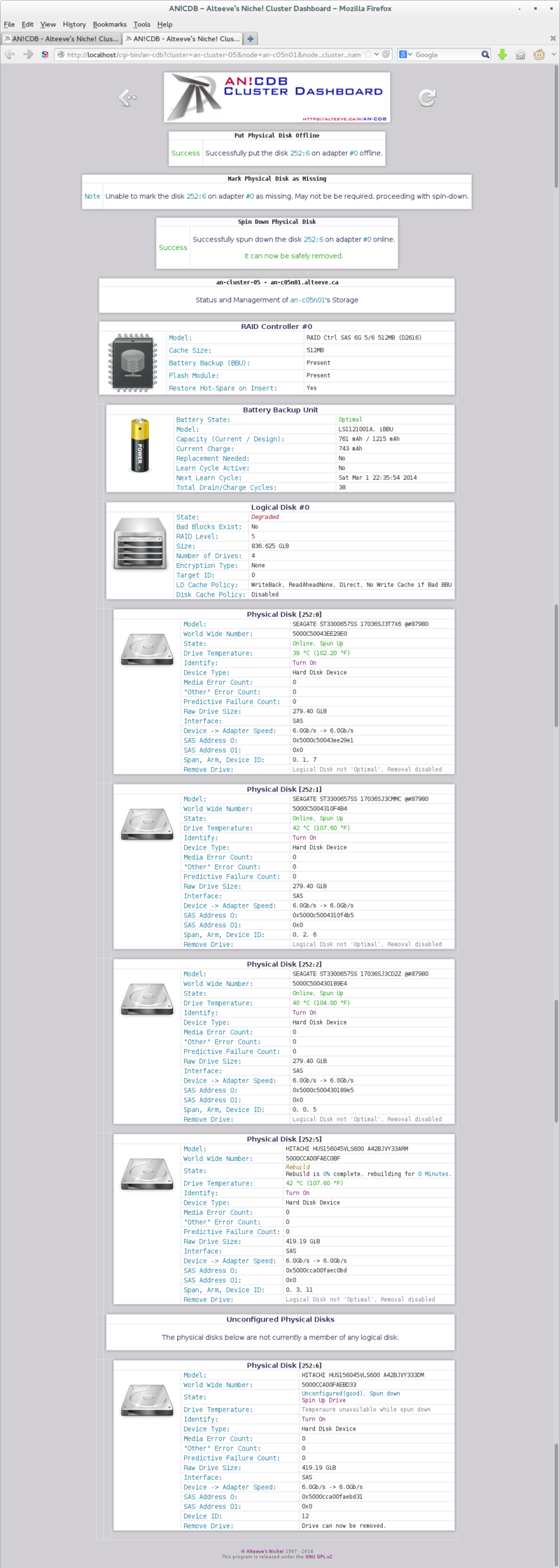

Replacing a Failing Hot-Spare

As we discussed earlier, a drive may show signs of failing before it's actually failed. Of course, it could just as well die and get marked as 'Unconfigured(bad)' or vanish entirely.

In this case, we'll pretend the drive is predicted to fail.

Before we can spin down and remove a hot-spare disk, we must first click on 'Unmark as Hot-Spare'.

Once you click it, the physical disk reverts to being 'Unconfigured(good)'. From here, we can click on 'Spin Down Disk' to prepare it for removal.

Once spun down, the disk can safely be ejected and replaced.

Done!


© 2025 Alteeve. Intelligent Availability® is a registered trademark of Alteeve's Niche! Inc. 1997-2025

Legal stuff: All info is provided "As-Is". Do not use anything here unless you are willing and able to take responsibility for your own actions.