Build an M3 Anvil! Cluster - v3.2


| Note: This is the documentation for the v3.2 release of the Anvil! software. The latest version is Build an M3 Anvil! Cluster. |

What is an Anvil!?

In short, it's a system designed to keep (virtual) servers running through an array of failures, without need for an internet connection or human interaction.

Think about ship-board computer systems, remote research facilities, factories without dedicated IT staff, un-staffed branch offices and so forth. Where most hosted solutions expect technical staff to be available on short notice, the Anvil! is designed to continue functioning properly for weeks or months with faulty components.

In these cases, the Anvil! system will predict component failure and mitigate automatically. It will adapt to changing threat conditions, like loss of cooling, loss of power, and component failure, including automatic recovery from full power loss.

It is designed around the understanding that a fault condition may not be repaired for weeks or months, and no one is around to resolve issues.

That is an Anvil! cluster!

An Anvil! cluster is designed so that any component in the cluster can fail, be removed and a replacement installed without needing a maintenance window. There are no single critical components, full stop. This includes power, network, compute and management systems.

Components

| Note: An Anvil! cluster will function with less than these minimum requirements. However, removal of any component will introduce some single points of failure that will require human intervention to recover. These are referred to as Micro-Anvil! clusters. |

The minimum configuration needed to host servers on an Anvil! is this;

| Management Layer | |
|---|---|
| Striker Dashboard 1 | Striker Dashboard 2 |
| Anvil! Node | |
| Node 1 | |
| Subnode 1 | Subnode 2 |
| Foundation Pack 1 | |
| Ethernet Switch 1 | Ethernet Switch 2 |
| Switched PDU 1 | Switched PDU 2 |
| UPS 1 | UPS 2 |

With this configuration, you can host as many servers as you would like, limited only by the resources of Node 1 (itself made of a pair of physical subnodes with your choice of processing, RAM and storage resources). Capacity is increased by adding more nodes.

Scaling

To add capacity for hosted servers, individual nodes can be upgraded (online!), and/or additional nodes can be added. There is no hard limit on how many nodes can be in a given cluster.

Each 'Foundation Pack' can handle as many nodes as you'd like, though for reasons we'll explain in more detail later, it is recommended to run two to four nodes per foundation pack.

Management Layer; Striker Dashboards

The management layer, made up of the Striker dashboards, has no hard limit on how many node blocks it can manage. All node blocks record their data to the Strikers (to offload processing and storage loads). There is a practical limit to how many node blocks can use the Strikers, but this can be accounted for in the hardware selected for the dashboards.

Nodes

An Anvil! cluster uses one or more nodes, with each node being a pair of matched physical subnodes configured as a single logical unit. The power of a given node block is set by your hardware selection and based on the loads you expect to place on it.

There is no hard limit on how many nodes exist in an Anvil! cluster. Your servers will be deployed across the nodes and, when you want to add more servers than you currently have resources for, you simply add another node.

Foundation Packs

A foundation pack is the power and ethernet layer that feeds into one or more node blocks. At its most basic, it consists of three pairs of equipment;

- Two stacked (or VLT-domain'ed) ethernet switches.

- Two switched PDUs (network-switched power bars).

- Two UPSes.

Each UPS feeds one PDU, forming two separate "power rails". Ethernet switches and all sub-nodes are generally equipped with redundant PSUs, with one PSU fed by each power rail.

In this way, any component in the foundation pack can fault, and all equipment will continue to have power and ethernet resources available. How many Anvil! nodes can be run on a given foundation pack is limited only by the sizing of the selected foundation pack equipment.

Configuration

| Note: This is SUPER basic and minimal at this stage. |

Striker Dashboards

Striker dashboards are often described as "cheap and cheerful", generally being a fairly small and inexpensive device, like a Dell Optiplex 3090, Intel NUC, or similar.

You can choose any vendor you wish, but when selecting hardware, be mindful that all Scancore data is stored in PostgreSQL databases running on each dashboard. As such, we recommend an Intel Core i5 or AMD Ryzen 5 class CPU, 8 GiB or more of RAM, ~250 GiB of SSD storage (mixed use, decent IOPS) and two ethernet ports.

Striker Dashboards host the Striker web interface, and act as a bridge between your IFN network and the Anvil! cluster's BCN management network. As such, they must have a minimum of two ethernet ports.

Node Pairs

An Anvil! Node Pair is made up of two identical physical machines. These two machines act as a single logical unit, providing fault tolerance and automated live migrations of hosted servers to mitigate against predicted hardware faults.

Each sub-node (a single hardware node) must have;

- Redundant PSUs

- Six ethernet ports (eight recommended). If six, use 3x dual-port. If eight, 2x quad port will do.

- Redundant storage (RAID level 1 (mirroring) or level 5 or 6 (striping with parity)). Sufficient capacity and IOPS to host the servers that will run on each pair.

- IPMI (out-of-band) management ports. A dedicated network interface is strongly recommended.

- Sufficient CPU core count and core speed for expected hosted servers.

- Sufficient RAM for the expected servers (note that the Anvil! reserves 8 GiB).
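As a rough sizing aid, the RAM note above can be turned into a small calculation. This is a minimal sketch, not part of the Anvil! tools; the function name `ram_for_servers_gib` is hypothetical, and it assumes the 8 GiB reservation applies per subnode as stated in the list.

```shell
# GiB of RAM the Anvil! reserves on a subnode, per the list above.
ANVIL_RESERVED_GIB=8

# Hypothetical helper: print the RAM (in GiB) left over for hosted servers.
#   $1 = RAM installed in the subnode, in whole GiB
ram_for_servers_gib() {
    case $1 in
        ''|*[!0-9]*) echo "installed RAM must be a whole number of GiB" >&2; return 1 ;;
    esac
    if [ "$1" -le "$ANVIL_RESERVED_GIB" ]; then
        echo "no RAM would be left for hosted servers" >&2
        return 1
    fi
    echo $(( $1 - $ANVIL_RESERVED_GIB ))
}

ram_for_servers_gib 64    # prints 56
```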

Disaster Recovery (DR) Host

Optionally, a "third node" of a sort can be added to a node-pair. This is called a DR Host, and should be (but doesn't have to be) identical to the node pair hardware it is extending.

A DR (disaster recovery) Host acts as a remotely hosted "third node" that can be manually pressed into service in a situation where both nodes in a node pair are destroyed. A common example would be a DR Host being in another building on a campus installation, or on the far side of the building / vessel.

A DR host can in theory be in another city, but storage replication speeds and latency need to be considered. Storage replication between the subnodes in a node pair is synchronous, whereas replication to DR can be asynchronous. However, storage loads must be considered to ensure that replication can keep up with the rate of data change.
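To make the "keep up with the rate of data change" point concrete, here is a back-of-the-envelope check. It is only a sketch under simplifying assumptions: the helper name `dr_link_keeps_up` is hypothetical, the inputs are whole numbers you measure or estimate yourself, and protocol overhead, latency and bursts are ignored.

```shell
# Hypothetical helper: can a DR link carry the sustained rate of data change?
#   $1 = sustained rate of data change, in MiB/s
#   $2 = usable link speed, in Mbit/s
dr_link_keeps_up() {
    change_bytes=$(( $1 * 1048576 ))   # MiB/s  -> bytes/s
    link_bytes=$(( $2 * 125000 ))      # Mbit/s -> bytes/s (1,000,000 / 8)
    [ "$link_bytes" -ge "$change_bytes" ]
}

if dr_link_keeps_up 10 100; then
    echo "100 Mbit/s can carry 10 MiB/s of changes"
fi
if ! dr_link_keeps_up 12 100; then
    echo "100 Mbit/s cannot carry 12 MiB/s of changes"
fi
```

In practice you would leave generous headroom on top of this, since the calculation only compares raw rates.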

After your first node is up and running, you can follow this article to learn how to use DR;

Foundation Pack Equipment

The Anvil! is, fundamentally, hardware agnostic. That said, the hardware you select must be configured to meet the Anvil! requirements.

As we are hardware agnostic, we've created three linked pages. As we validate hardware ourselves, we will expand hardware-specific configuration guides. If you've configured foundation pack equipment not in the pages below, and you are willing, we would love to add your configuration instructions to our list.

Striker, Node and DR Host Configuration

In UEFI (BIOS), configure;

- Striker Dashboards to power on after power loss in all cases.

- Subnodes to stay powered off after power loss in all cases.

- Any machines with redundant PSUs to balance the load across PSUs (don't use "hot spare" mode, where only one PSU is active and carries the full load).

If using RAID

- If you have two drives, configure RAID level 1 (mirroring)

- If using 3 to 8 drives, configure RAID level 5 (striping with N-1 parity)

- If using 9+ drives, configure RAID level 6 (striping with N-2 parity)

Note that a server on a given node-pair will have its data mirrored, effectively creating a sort of RAID level 11 (mirror of mirrors), 15 (mirror of N-1 stripes) or 16 (mirror of N-2 stripes). This is why we're comfortable pushing RAID level 5 to 8 disks.
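The drive-count guidance above can be written down as a small lookup. This is a minimal sketch; the function name `raid_level_for_drives` is hypothetical and it encodes only the thresholds given in this section (2 drives, 3 to 8 drives, 9 or more drives).

```shell
# Hypothetical helper: print the RAID level suggested above for a drive count.
#   $1 = number of drives in the array
raid_level_for_drives() {
    case $1 in
        ''|*[!0-9]*) echo "drive count must be a whole number" >&2; return 1 ;;
    esac
    if [ "$1" -lt 2 ]; then
        echo "at least two drives are needed for redundancy" >&2
        return 1
    elif [ "$1" -eq 2 ]; then
        echo 1    # mirroring
    elif [ "$1" -le 8 ]; then
        echo 5    # striping with N-1 parity
    else
        echo 6    # striping with N-2 parity
    fi
}

for drives in 2 6 12; do
    echo "$drives drives: RAID level $(raid_level_for_drives "$drives")"
done
```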

A Note on Secure Boot

Secure boot must be disabled on subnodes and DR hosts. This is because the replicated storage kernel driver is compiled in-place, and so doesn't have a signed binary.

If you forget to do this, you will see the following error when trying to start replicated storage;

modprobe drbd

modprobe: ERROR: could not insert 'drbd': Key was rejected by service
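If you would rather check before hitting that error, the Secure Boot state can usually be read from the running OS. The sketch below assumes the `mokutil` tool is installed (it is not mentioned elsewhere in this tutorial), and the helper name `secure_boot_verdict` is hypothetical; it only interprets the text that `mokutil --sb-state` prints.

```shell
# Hypothetical helper: interpret the output of 'mokutil --sb-state'.
#   $1 = the text printed by mokutil
secure_boot_verdict() {
    case $1 in
        *"SecureBoot enabled"*)  echo "enabled: disable Secure Boot in UEFI before loading drbd" ;;
        *"SecureBoot disabled"*) echo "disabled: the drbd module can be loaded" ;;
        *)                       echo "unknown: check the UEFI setup screen" ;;
    esac
}

# Only query the firmware when mokutil is actually available (assumption).
if command -v mokutil >/dev/null 2>&1; then
    secure_boot_verdict "$(mokutil --sb-state 2>&1)"
fi
```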

Installation of Base OS

For all three machine types (Striker dashboards, subnodes and DR hosts), begin with a minimal RHEL 9 or AlmaLinux 9 install.

| Note: This tutorial assumes an existing understanding of installing RHEL 9. If you are new to RHEL, you can set up a free Red Hat account, and then follow their installation guide. |

| Warning: It is important that you set the host name during the installation of the OSes. It can be changed later, but the default 'localhost.localdomain' will cause conflicts later. |
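Once the OS is installed, a quick check along these lines can confirm the host name is no longer the installer default. This is a sketch only; the helper name `host_name_is_set` is hypothetical, and treating any name starting with 'localhost.' as a default is an assumption.

```shell
# Hypothetical helper: succeed only if the host name is not an installer default.
#   $1 = host name to check
host_name_is_set() {
    case $1 in
        ''|localhost|localhost.*) return 1 ;;
        *)                        return 0 ;;
    esac
}

current=$(uname -n)    # POSIX way to read the node (host) name
if host_name_is_set "$current"; then
    echo "host name OK: $current"
else
    # On RHEL 9 the name can be changed with 'hostnamectl set-hostname <name>'.
    echo "host name is still a default ($current); set it before continuing"
fi
```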

Base OS Install

| Note: Every effort has been made in the development of the Anvil! to ensure it will work with localisations. However, parsing of command output has been tested with Canadian and American English. As such, it is recommended that you install using one of these localisations. If you use a different localisation, and run into any problems, please let us know and we will try to add support. |

Localisation

Choose your localisation;

Main Install Menu

Once the localisation is selected, you will see the main installation screen.

The order in which things are configured generally doesn't matter, though it's a good habit to configure the network before configuring storage. Doing it in this order means that the volume group name will be based on the host name, making it unique within the cluster. This is a minor thing, but in the case of a future data recovery, can be helpful to identify the source of the data.

Disable kdump

Disable kdump; This prevents kernel dumps if the OS crashes, but it means the host will recover faster. If you want to leave kdump enabled, that is fine, but be aware of the slower recovery times. Note that a subnode getting fenced will be forced off, and so kernel dumps won't be collected regardless of this configuration.

Network & Host Name

| Note: Networking in the Anvil! cluster can be a little complex at first. If you haven't already, please review Anvil! Networking. |

Set the host name for the machine. It's useful to do this before configuring storage, so that the volume group name includes the host's short host name. This doesn't affect the operation of the Anvil! system, but it can assist with debugging down the road.

This configuration is to make the machine accessible for the next stage of the install. The network will be fully reconfigured later during the Striker, subnode or DR host configuration stage. As such, you can configure only one interface if you prefer. If you leave it on DHCP, make a note of the IP address it was assigned for later access.

| Note: If you want to configure the BCN IP as well, be sure to click on Routes and click to check Use this connection only for resources on its network. |

Time & Date

| Note: If your site restricts access to NTP time servers, you will be able to configure which NTP servers to use in Striker. It is very important that all machines in the Anvil! cluster have the same concept of time! |

Setting the timezone is very much specific to you and your install. The most important part is that the time zone is set consistently across all machines in the Anvil! cluster.

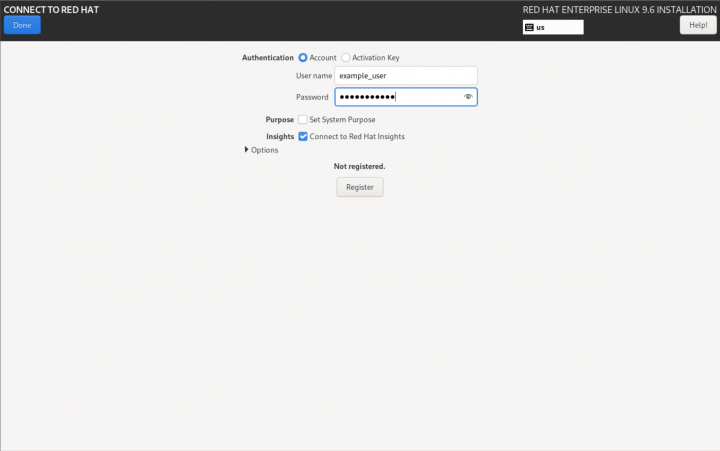

Optional; Connect to Red Hat

If you are installing Red Hat Enterprise Linux, you can register the server during installation. If you don't do this, the Anvil! will give you a chance to register the server during the installation process later.

| Warning: If you already selected the Software Selection, you will need to select it again after registering with Red Hat. |

Software Selection

All machines can start with a Minimal Install. On Strikers, if you'd prefer to use Server With GUI, that is fine, but it is not needed at this step. The anvil-striker RPM will pull in the graphical interface.

| Note: If you select a graphical install on a Striker Dashboard, create a user called admin and set a password for that user. |

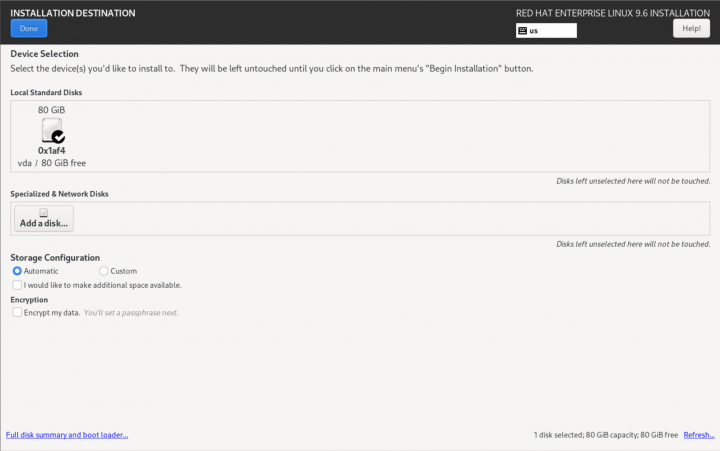

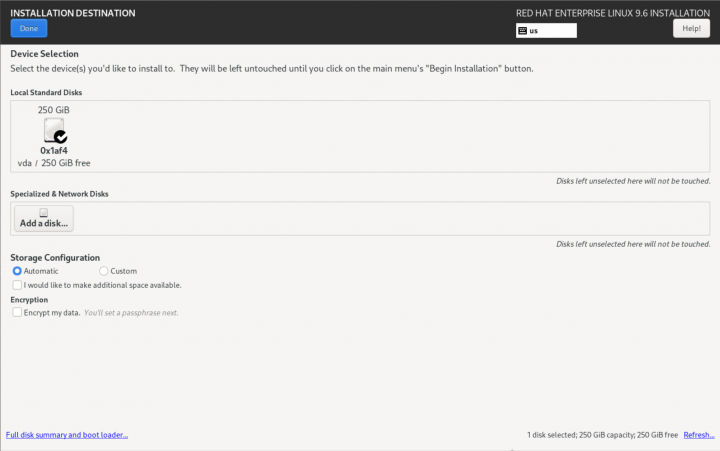

Installation Destination

| Note: It is strongly suggested to set the host name before configuring storage. |

| Note: This is where the installation of a Striker dashboard will differ from an Anvil! Node's subnode or DR host |

In this example, there is a single hard drive that will be configured. It's entirely valid to have a dedicated OS drive and to use a second drive for hosting servers. If you're planning to use a different storage plan, then you can ignore this stage. The key requirement is that there is unused space sufficiently large to host the servers you plan to run on a given node or DR host.

| Striker Dashboards | Anvil! Subnodes and DR Hosts |

|---|---|


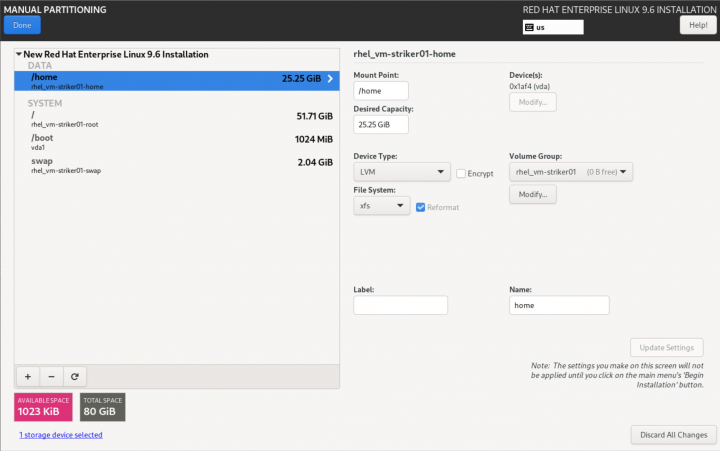

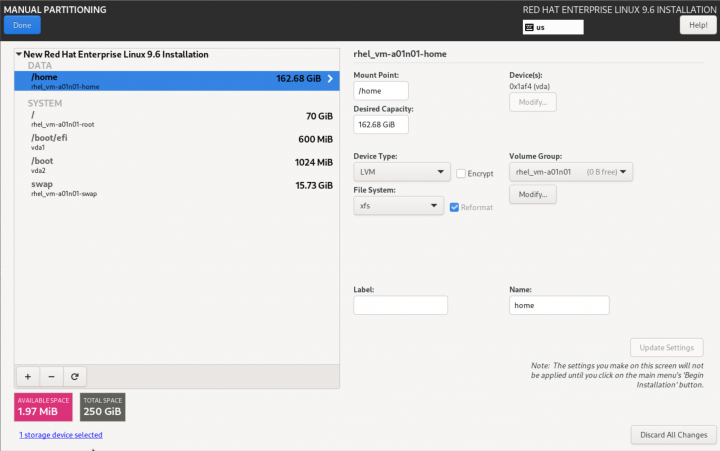

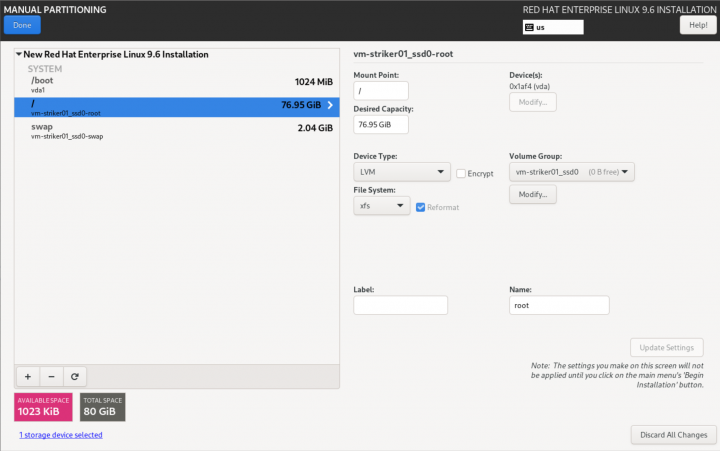

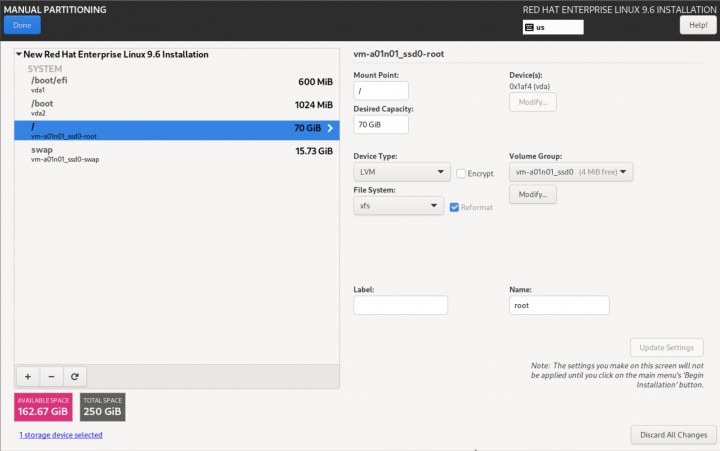

Click on "Click here to create them automatically". This will create the base storage configuration, which we will adapt.


In all cases, the auto-created /home logical volume will be deleted.

- For Striker dashboards, after deleting /home, assign the freed space to the / partition. To do this, select the / partition, set the Desired Capacity to a size much larger than is available (like 1 TiB), and click on Update Settings. The size will change to the largest valid value.

- For Anvil! subnodes and DR hosts, simply delete the /home partition, and do not give the free space to /. The space freed up by deleting /home will be used later for hosting servers.

- Optionally, if you plan to have two or more volume groups, you may want to change the name of the VG to something more descriptive. For example, if the default is 'rhel_vm-a01n01' and you plan to have a bulk platter array and a higher speed solid state array, you may want to use a name like 'vm-a01n01_hdd0' and 'vm-a01n01_ssd0'. The Anvil! does not care what you use, so this is entirely up to you.

| Note: The default "/" partition will generally be 70 GiB. You might want to increase this, especially if you plan to have a lot of different install ISOs for various hosted OSes. You might want to consider 100 GiB or more, depending on your free space and the expected server requirements. |


From this point forward, the rest of the OS install is the same for all systems.

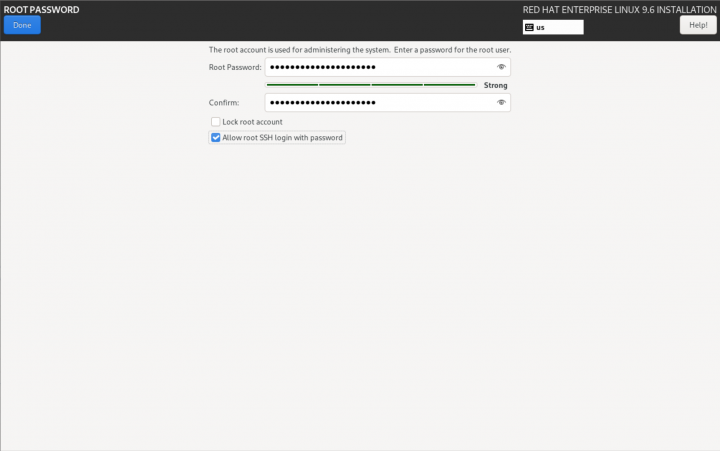

Root Password

Set the 'root' user password. Be sure to also check Allow root SSH login with password.

Begin Installation

With everything selected, click on Begin Installation. When the install has completed, reboot into the minimal install.

Post OS Install Configuration

Setting up the Alteeve Intelligent Availability® repos is the same on all machines, but after that, the steps start to diverge depending on which machine type we're setting up in the Anvil! cluster.

Installing the Alteeve Repo

| Note: Our repo pulls in a bunch of other packages that will be needed shortly. |

There are two Alteeve repositories that you can install; Community and Enterprise. Which is used is selected after the repository RPM is installed. Let's install the repo RPM, and then we will discuss the differences before we select one.

dnf install https://alteeve.com/an-repo/m3/alteeve-release-latest.noarch.rpm

Updating Subscription Management repositories.

Last metadata expiration check: 0:01:00 ago on Mon 16 Jun 2025 10:38:18 PM.

alteeve-release-latest.noarch.rpm 32 kB/s | 13 kB 00:00

Dependencies resolved.

============================================================================================================================================

Package Architecture Version Repository Size

============================================================================================================================================

Installing:

alteeve-release noarch 0.1-5 @commandline 13 k

Installing dependencies:

annobin x86_64 12.92-1.el9 rhel-9-for-x86_64-appstream-rpms 1.1 M

cpp x86_64 11.5.0-5.el9_5 rhel-9-for-x86_64-appstream-rpms 11 M

dwz x86_64 0.14-3.el9 rhel-9-for-x86_64-appstream-rpms 130 k

efi-srpm-macros noarch 6-2.el9_0 rhel-9-for-x86_64-appstream-rpms 24 k

fonts-srpm-macros noarch 1:2.0.5-7.el9.1 rhel-9-for-x86_64-appstream-rpms 29 k

gcc x86_64 11.5.0-5.el9_5 rhel-9-for-x86_64-appstream-rpms 32 M

<...snip...>

rust-srpm-macros noarch 17-4.el9 rhel-9-for-x86_64-appstream-rpms 11 k

sombok x86_64 2.4.0-16.el9 rhel-9-for-x86_64-appstream-rpms 51 k

systemtap-sdt-devel x86_64 5.2-2.el9 rhel-9-for-x86_64-appstream-rpms 77 k

unzip x86_64 6.0-58.el9_5 rhel-9-for-x86_64-baseos-rpms 186 k

zip x86_64 3.0-35.el9 rhel-9-for-x86_64-baseos-rpms 270 k

Installing weak dependencies:

perl-CPAN-DistnameInfo noarch 0.12-23.el9 rhel-9-for-x86_64-appstream-rpms 17 k

perl-Encode-Locale noarch 1.05-21.el9 rhel-9-for-x86_64-appstream-rpms 21 k

perl-Term-Size-Any noarch 0.002-35.el9 rhel-9-for-x86_64-appstream-rpms 16 k

perl-TermReadKey x86_64 2.38-11.el9 rhel-9-for-x86_64-appstream-rpms 40 k

perl-Unicode-LineBreak x86_64 2019.001-11.el9 rhel-9-for-x86_64-appstream-rpms 129 k

Transaction Summary

============================================================================================================================================

Install 266 Packages

Total size: 117 M

Total download size: 117 M

Installed size: 355 M

Is this ok [y/N]:

Downloading Packages:

(1/265): ghc-srpm-macros-1.5.0-6.el9.noarch.rpm 25 kB/s | 9.0 kB 00:00

(2/265): lua-srpm-macros-1-6.el9.noarch.rpm 28 kB/s | 10 kB 00:00

(3/265): libthai-0.1.28-8.el9.x86_64.rpm 384 kB/s | 211 kB 00:00

(4/265): perl-Algorithm-Diff-1.2010-4.el9.noarch.rpm 257 kB/s | 51 kB 00:00

(5/265): perl-Archive-Zip-1.68-6.el9.noarch.rpm 525 kB/s | 116 kB 00:00

<...snip...>

(262/265): zip-3.0-35.el9.x86_64.rpm 963 kB/s | 270 kB 00:00

(263/265): make-4.3-8.el9.x86_64.rpm 972 kB/s | 541 kB 00:00

(264/265): unzip-6.0-58.el9_5.x86_64.rpm 478 kB/s | 186 kB 00:00

(265/265): pkgconf-pkg-config-1.7.3-10.el9.x86_64.rpm 6.0 kB/s | 12 kB 00:02

--------------------------------------------------------------------------------------------------------------------------------------------

Total 3.8 MB/s | 117 MB 00:31

Red Hat Enterprise Linux 9 for x86_64 - AppStream (RPMs) 3.5 MB/s | 3.6 kB 00:00

Importing GPG key 0xFD431D51:

Userid : "Red Hat, Inc. (release key 2) <security@redhat.com>"

Fingerprint: 567E 347A D004 4ADE 55BA 8A5F 199E 2F91 FD43 1D51

From : /etc/pki/rpm-gpg/RPM-GPG-KEY-redhat-release

Is this ok [y/N]:

Key imported successfully

Importing GPG key 0x5A6340B3:

Userid : "Red Hat, Inc. (auxiliary key 3) <security@redhat.com>"

Fingerprint: 7E46 2425 8C40 6535 D56D 6F13 5054 E4A4 5A63 40B3

From : /etc/pki/rpm-gpg/RPM-GPG-KEY-redhat-release

Is this ok [y/N]:

Key imported successfully

Running transaction check

Transaction check succeeded.

Running transaction test

Transaction test succeeded.

Running transaction

Preparing : 1/1

Installing : perl-Digest-1.19-4.el9.noarch 1/266

Installing : perl-Digest-MD5-2.58-4.el9.x86_64 2/266

Installing : perl-FileHandle-2.03-481.el9.noarch 3/266

Installing : perl-B-1.80-481.el9.x86_64 4/266

<...snip...>

redhat-rpm-config-209-1.el9.noarch rust-srpm-macros-17-4.el9.noarch

sombok-2.4.0-16.el9.x86_64 systemtap-sdt-devel-5.2-2.el9.x86_64

unzip-6.0-58.el9_5.x86_64 zip-3.0-35.el9.x86_64

Complete!

Selecting a Repository

There are two released versions of the Anvil! cluster. There are pros and cons to both options;

Community Repo

The Community repository is the free repo that anyone can use. As new builds pass our integration and automated test infrastructure, the versions in this repository are automatically built.

This repository always has the latest and greatest from Alteeve. We use Jenkins and a proprietary test suite to ensure that the quality of the releases is excellent. Of course, Alteeve is a company of humans, and there's always a small chance that a bug could get through. Our free community repository is community supported, and it's our wonderful users who help us improve and refine our Anvil! platform.

Enterprise Repo

The Enterprise repository is the paid-access repository. The releases in the enterprise repo are "cherry picked" by Alteeve, and subjected to more extensive testing and QA. This repo is designed for businesses who want the most stable releases.

Using this repo also opens up the option of active monitoring of your Anvil! cluster by Alteeve!

If you choose to get the Enterprise repo, please contact us and we will provide you with a custom repository key.

Configuring the Alteeve Repo

To configure the repo, we will use the alteeve-repo-setup program that was just installed.

You can see a full list of options, including the use of --key <uuid> to enable the Enterprise repo. For this tutorial, we will configure the community repo.

alteeve-repo-setup

You have not specified an Enterprise repo key. This will enable the community

repository. We work quite hard to make it as stable as we possibly can, but it

does lead Enterprise.

Proceed? [y/N]:

Writing: [/etc/yum.repos.d/alteeve-anvil.repo]...

Repo: [rhel-9] created successfuly.

RHEL 9 Additional Repos

If you are using RHEL 9 proper, you will now need to enable additional repositories.

On all systems;

subscription-manager repos --enable codeready-builder-for-rhel-9-x86_64-rpms

Repository 'codeready-builder-for-rhel-9-x86_64-rpms' is enabled for this system.

This is needed for fencing to work, which Striker uses to reboot subnodes after a power or thermal event.

subscription-manager repos --enable rhel-9-for-x86_64-highavailability-rpms

Repository 'rhel-9-for-x86_64-highavailability-rpms' is enabled for this system.
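If you are preparing several RHEL 9 machines, the two commands above can be wrapped in a small loop. This is a sketch, not an Alteeve tool; the function name `enable_rhel9_extra_repos` and its `--dry-run` argument are hypothetical, while the repository IDs are the ones shown above.

```shell
# The two repositories named above, one per line.
RHEL9_EXTRA_REPOS="codeready-builder-for-rhel-9-x86_64-rpms
rhel-9-for-x86_64-highavailability-rpms"

# Hypothetical helper: enable each repository in turn.
#   $1 = optional '--dry-run' to print the commands instead of running them
enable_rhel9_extra_repos() {
    for repo in $RHEL9_EXTRA_REPOS; do
        if [ "${1:-}" = "--dry-run" ]; then
            echo "subscription-manager repos --enable $repo"
        else
            subscription-manager repos --enable "$repo" || return 1
        fi
    done
}

# Show what would be run without touching the system.
enable_rhel9_extra_repos --dry-run
```

Calling the function without `--dry-run` runs the real commands, so do that only as root on a registered RHEL 9 system.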

Installing Anvil! Packages

This is the step where, from a software perspective, Anvil! cluster systems differentiate to become Striker Dashboards, Anvil! subnodes, and DR hosts. Which a given machine becomes depends on which RPM is installed. The three RPMs that set a machine's role are;

| Machine type | RPM |
|---|---|
| Striker Dashboards | anvil-striker |
| Anvil! Subnodes | anvil-node |
| DR Hosts | anvil-dr |

| Note: You only need to manually install the Alteeve repo on the Striker dashboards. Once set up, you can use the Striker UI to "initialise" the subnodes and DR hosts, which handles the Alteeve repo setup and package installation for you. |

If for some reason you want to do a manual install on a subnode or DR host, follow the steps below, simply replacing the 'anvil-striker' package with 'anvil-node' or 'anvil-dr'.
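For scripted manual installs, the role-to-RPM mapping in the table above can be expressed as a lookup. This is a minimal sketch; the function name `anvil_package_for_role` and the short role names (striker, node, dr) are hypothetical labels chosen for illustration.

```shell
# Hypothetical helper: map a machine role to the RPM that sets that role.
#   $1 = one of: striker, node, dr
anvil_package_for_role() {
    case $1 in
        striker) echo anvil-striker ;;
        node)    echo anvil-node ;;
        dr)      echo anvil-dr ;;
        *)       echo "unknown role: $1" >&2; return 1 ;;
    esac
}

# Print (not run) the install command for a DR host.
echo "dnf install $(anvil_package_for_role dr)"
```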

Striker Dashboards; Installing anvil-striker

Now we're ready to install!

| Note: Given the install of the OS was minimal, these RPMs pull in a lot of RPMs. The output below is truncated. |

Thus, let's install the RPMs on our systems.

dnf install anvil-striker

Updating Subscription Management repositories.

Red Hat Enterprise Linux 9 for x86_64 - High Availability (RPMs) 568 kB/s | 3.2 MB 00:05

Red Hat CodeReady Linux Builder for RHEL 9 x86_64 (RPMs) 9.6 MB/s | 13 MB 00:01

Dependencies resolved.

============================================================================================================================================

Package Arch Version Repository Size

============================================================================================================================================

Installing:

anvil-striker noarch 3.1.80-1.235.901a.el9 anvil-community-rhel-9 3.4 M

Installing dependencies:

ModemManager-glib x86_64 1.20.2-1.el9 rhel-9-for-x86_64-baseos-rpms 337 k

accountsservice x86_64 0.6.55-10.el9 rhel-9-for-x86_64-appstream-rpms 134 k

accountsservice-libs x86_64 0.6.55-10.el9 rhel-9-for-x86_64-appstream-rpms 96 k

<...snip...>

redhat-backgrounds noarch 90.4-2.el9 rhel-9-for-x86_64-appstream-rpms 5.2 M

telnet x86_64 1:0.17-85.el9 rhel-9-for-x86_64-appstream-rpms 66 k

tracker-miners x86_64 3.1.2-4.el9_3 rhel-9-for-x86_64-appstream-rpms 942 k

Transaction Summary

============================================================================================================================================

Install 711 Packages

Total download size: 571 M

Installed size: 2.1 G

Is this ok [y/N]:

Downloading Packages:

(1/711): bpg-dejavu-sans-fonts-2017.2.005-25.el9.noarch.rpm 699 kB/s | 180 kB 00:00

(2/711): bpg-fonts-common-20120413-25.el9.noarch.rpm 509 kB/s | 20 kB 00:00

(3/711): htop-3.3.0-3.el9.x86_64.rpm 1.0 MB/s | 198 kB 00:00

(4/711): anvil-core-3.1.80-1.235.901a.el9.noarch.rpm 2.0 MB/s | 1.0 MB 00:00

(5/711): anvil-striker-3.1.80-1.235.901a.el9.noarch.rpm 6.8 MB/s | 3.4 MB 00:00

<...snip...>

(708/711): perl-Module-Runtime-0.016-13.el9.noarch.rpm 129 kB/s | 25 kB 00:00

(709/711): perl-PadWalker-2.5-4.el9.x86_64.rpm 202 kB/s | 30 kB 00:00

(710/711): perl-Params-ValidationCompiler-0.30-12.el9.noarch.rpm 322 kB/s | 43 kB 00:00

(711/711): perl-namespace-clean-0.27-18.el9.noarch.rpm 306 kB/s | 38 kB 00:00

--------------------------------------------------------------------------------------------------------------------------------------------

Total 15 MB/s | 571 MB 00:39

Anvil Community Repository (rhel-9) 1.6 MB/s | 1.6 kB 00:00

Importing GPG key 0xD548C925:

Userid : "Alteeve's Niche! Inc. repository <support@alteeve.ca>"

Fingerprint: 3082 E979 518A 78DD 9569 CD2E 9D42 AA76 D548 C925

From : /etc/pki/rpm-gpg/RPM-GPG-KEY-Alteeve-Official

Is this ok [y/N]: y

Key imported successfully

Running transaction check

Transaction check succeeded.

Running transaction test

Transaction test succeeded.

Running transaction

Running scriptlet: npm-1:8.19.4-1.16.20.2.8.el9_4.x86_64 1/1

Preparing : 1/1

Installing : atk-2.36.0-5.el9.x86_64 1/711

Installing : libtirpc-1.3.3-9.el9.x86_64 2/711

Installing : libwayland-client-1.21.0-1.el9.x86_64 3/711

<...snip...>

xorriso-1.5.4-5.el9_5.x86_64 yum-utils-4.3.0-20.el9.noarch

zenity-3.32.0-8.el9.x86_64 zlib-devel-1.2.11-40.el9.x86_64

zstd-1.5.5-1.el9.x86_64

Complete!

Done!

Configuring the Striker Dashboards

When the 'anvil-striker' package is installed, it will begin to initialise the Striker dashboard automatically. After a few moments, you should be able to access the Striker WebUI from your favourite browser. Simply point to 'http://192.168.4.1' (using the IP you set or the IP assigned by the DHCP server) in a browser on the same network.

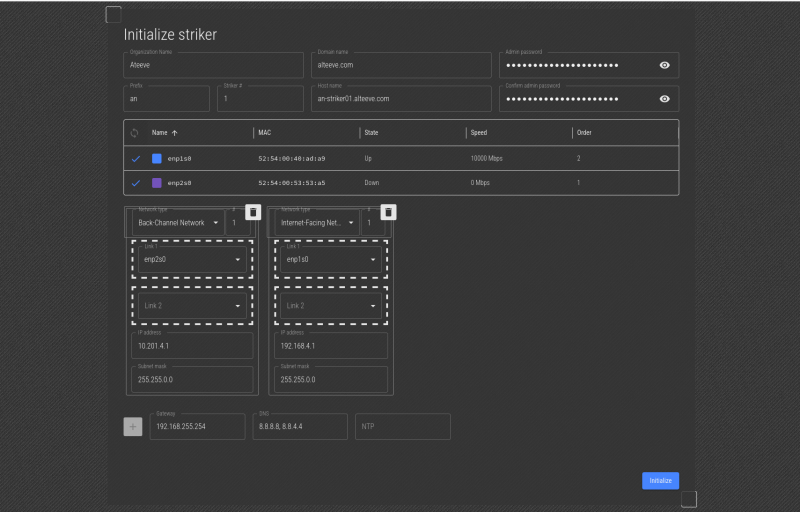

Striker Dashboard

Using a machine connected to the same network as the Striker dashboard, enter the URL http://<striker_ip_address>. This will load the initial Striker configuration page where we'll start configuring the dashboard.

| Note: At this time, https:// is not yet supported, so please use http:// from a machine on the local network. |

Fields;

| Field | Description |
|---|---|
| Organization name | This is a descriptive name for the given Anvil! cluster. Generally this is a company, organization or site name. |
| Domain name | This is the domain name that will be used when setting host names in the cluster. Generally this is the domain of the organization that uses the Anvil! cluster. |
| Prefix | This is a short, generally 2~5 characters, descriptive prefix for a given Anvil! cluster. It is used as the leading part of a machine's short host name. |
| Striker # | Most Anvil! clusters have two Striker dashboards. This sets the sequence number, so the first is '1' and the second is '2'. If you have multiple Anvil! clusters, the first Striker on the second Anvil! cluster would be '3', etc. |
| Host name | This is the host name for this striker. Generally it's in the format of <prefix>-striker0<sequence>.<domain>. |
| Admin Password | This is the cluster's password, and should generally be the same password you set for the 'admin' user. |
| Confirm Password | Re-enter the password to confirm the password you entered doesn't have a typo. |

| Note: It is strongly recommended that you keep the "Organization Name", "Domain Name", "Prefix" and "Admin Passwords" the same on Strikers that will be peered together. |
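To illustrate how the Prefix, Striker # and Domain name fields combine into the Host name, here is a small sketch. The function name `striker_host_name` is hypothetical, 'example.com' is a placeholder domain, and padding the sequence to two digits is an assumption based on the <prefix>-striker0<sequence>.<domain> format.

```shell
# Hypothetical helper: build a Striker host name from the form fields.
#   $1 = prefix, $2 = Striker sequence number (1-99), $3 = domain name
striker_host_name() {
    case $2 in
        [1-9]|[1-9][0-9]) ;;
        *) echo "sequence must be a number from 1 to 99" >&2; return 1 ;;
    esac
    # Assumption: the sequence is zero-padded to two digits.
    printf '%s-striker%02d.%s\n' "$1" "$2" "$3"
}

striker_host_name an 1 example.com    # prints an-striker01.example.com
striker_host_name an 2 example.com    # prints an-striker02.example.com
```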

Network Configuration

One of the "trickier" parts of configuring an Anvil! machine is figuring out which physical network interfaces are to be used for which job.

There are four networks used in Anvil! clusters. If you're not familiar with the networks, you may want to read this first;

The middle section shows the existing network interfaces, enp1s0 and enp2s0 in the example above. In the screenshot above, both interfaces show their State as "On". This shows that both interfaces are physically connected to a network switch.

Which physical interface you want to use for a given task will be up to you, but above is an article explaining how Alteeve does it, and why.

In this example, the Striker has two interfaces, one plugged into the BCN, and one plugged into the IFN. To find which one is which, we'll unplug the cable going to the interface connected to the BCN, and we'll watch to see which interface state changes to "Down".

| Note: Striker tries to auto-select the interface with the DHCP IP as IFN 1, link 1. As this example only has two interfaces, in these screenshots it is obvious which interface is which. However, the unplugging of the interface to identify it is shown in case the auto-detection didn't work on your system. |

When we unplug the network cable going to the BCN interface, we can see that the enp2s0 interface has changed to show that it is "Down". Now that we know which interface is used to connect to the BCN, we can reconnect the network cable.

To configure the enp2s0 interface for use as the "Back-Channel Network 1" interface, click or press on the lines to the left of the interface name, and drag it into the Link 1 box.

In this example, enp1s0 was already selected, so we're done. If you didn't have an IP on either interface though, we would know that obviously enp1s0 must be the IFN interface.

Once you're sure that the IPs you want are set, click on Initialize to send the new configuration.

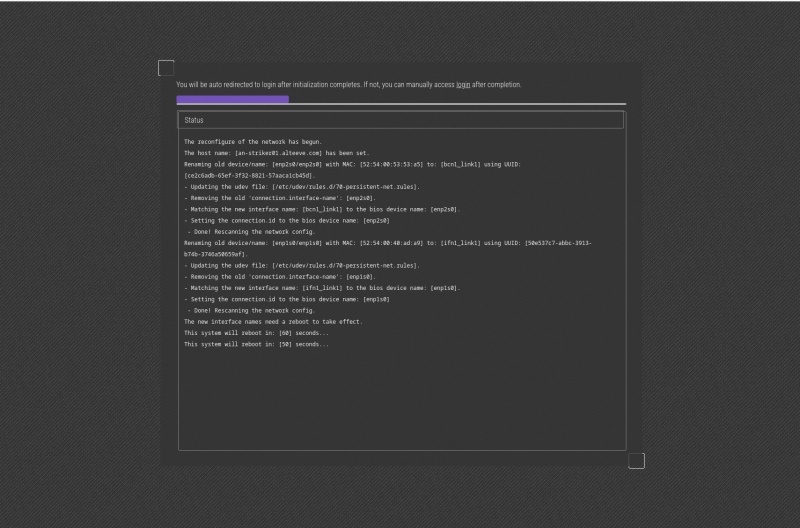

If you're happy with the values set, click Initialize again, and it will start to initialize the Striker.

| Note: Please be patient, the system will take a minute to pick up the job, reconfigure the network and reboot to apply the new config. |

After a moment, the Striker will reboot!

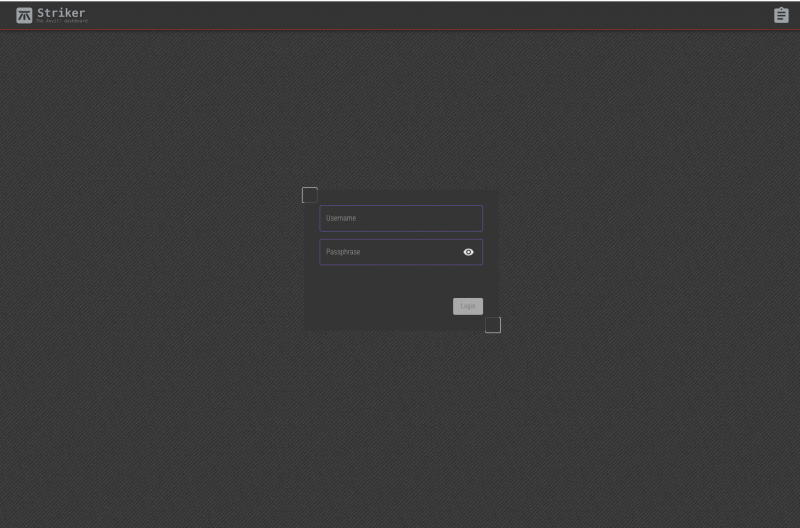

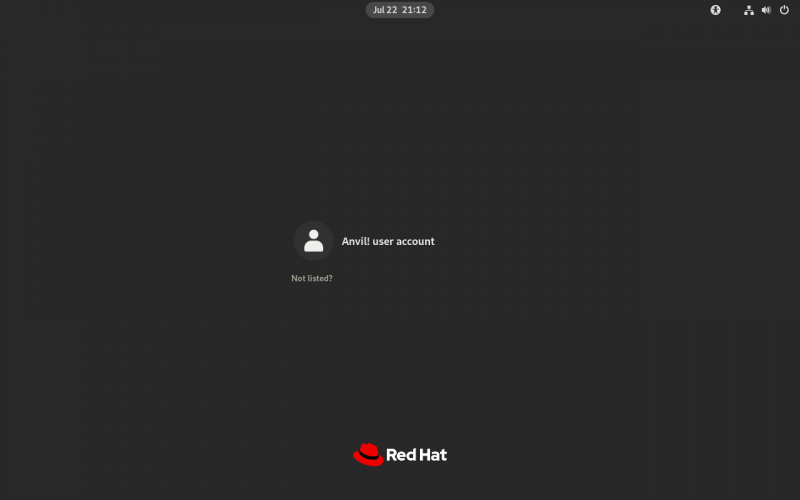

After it reboots, you will be presented with the log in screen. The user name is 'admin' and the password is the one you set above.

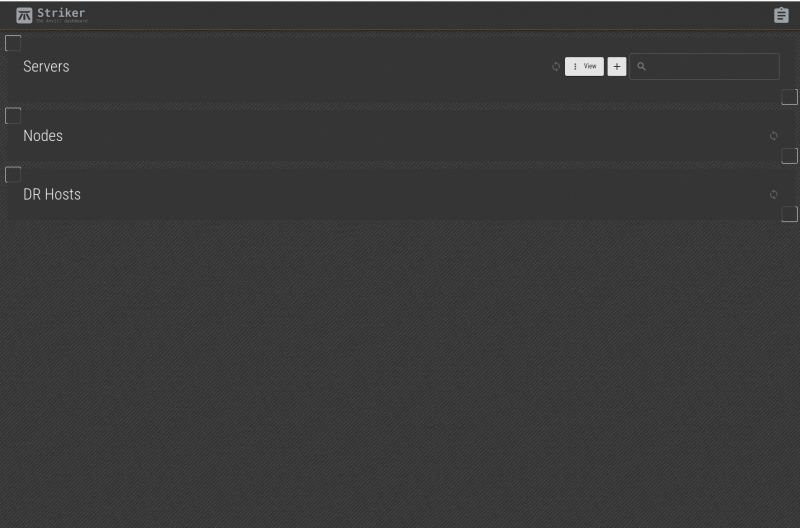

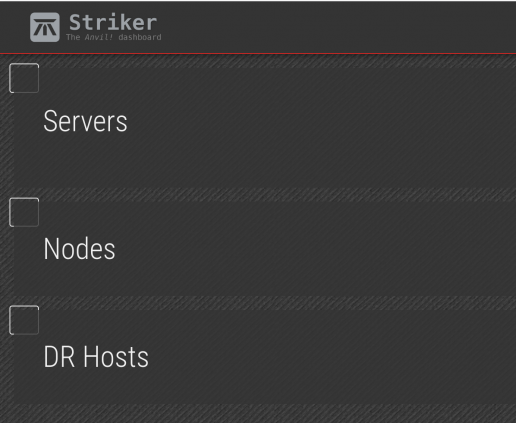

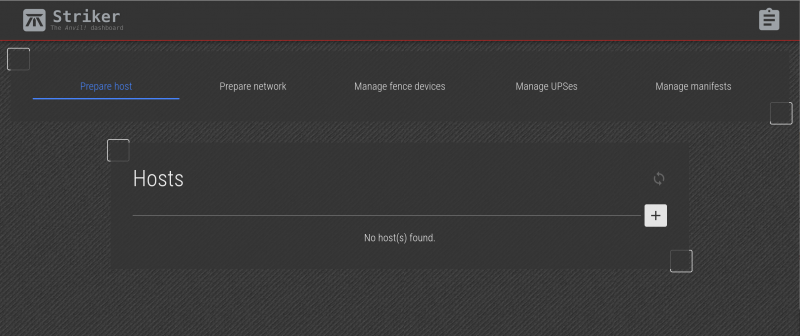

Once logged in, we'll see the fresh, initial Anvil! dashboard!

Optional; First Login

After the Striker dashboard reboots, you will now be able to log into the Striker dashboard's desktop if you wish. There is no need to use the desktop though, as all tasks can be done using the web interface or the command line tools via an ssh session for advanced functions.

Once the Striker dashboard has rebooted, reload http://<striker_ip_address> to get the login page.

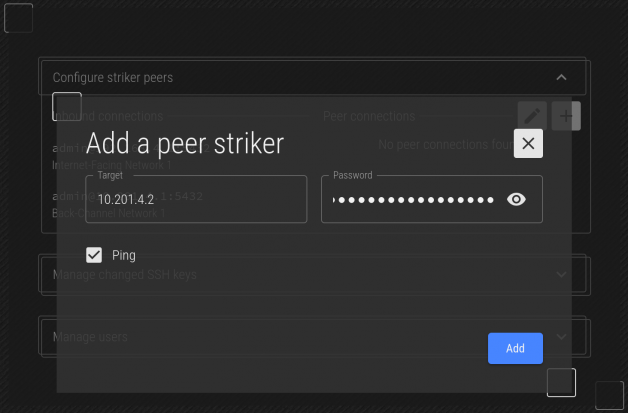

Peering Striker Dashboards

With two configured Strikers ready, let's peer them. For this example, we'll be working on an-striker01, and peering with an-striker02 which has the BCN IP address 10.201.4.2.

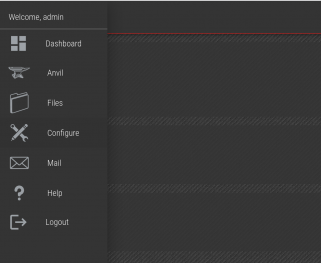

Click on the Striker logo at the top left, which opens the Striker menu.

Click on the Configure menu item.

Here we see the menu for configuring (or reconfiguring) the Striker dashboard.

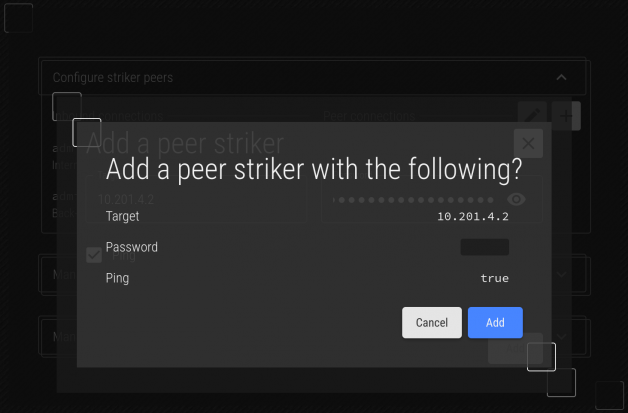

Click on the title of Configure Striker Peers to expand the peer menu. Then click on the "+" icon on the top-right to open the new peer menu.

In almost all cases, the Striker being peered is on the same BCN as the other. As such, we'll connect to the peer striker's BCN IP address, 10.201.4.2 in this example.

Confirm that you've entered the right IP and password.

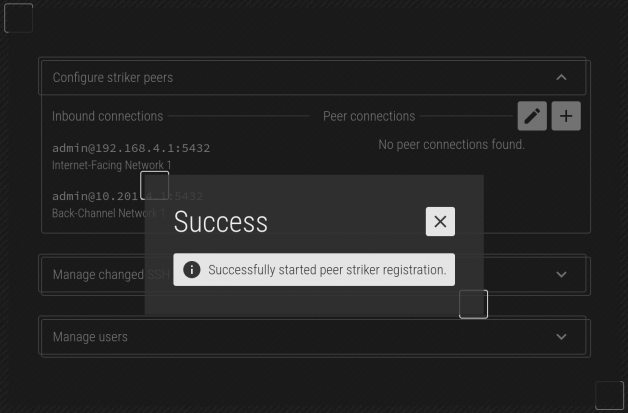

Once confirmed, click Add and the job to peer will be saved. Click on the red 'X' at the top-right to return to the main form.

| Note: Give it a minute or two, and the peering should be complete. |

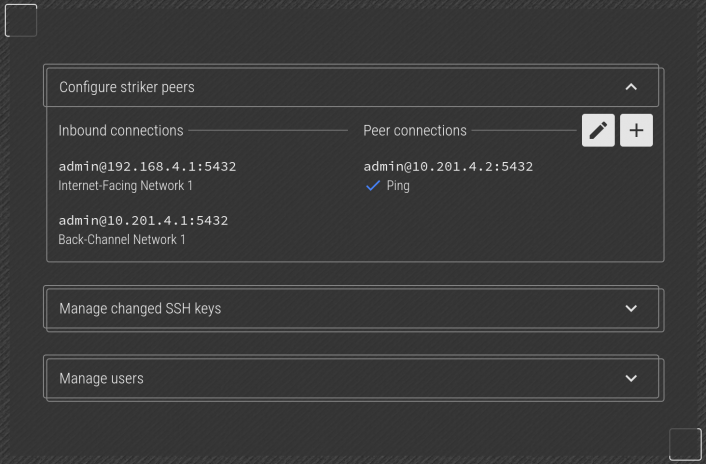

After a couple minutes, you will see the peer striker appear in the list. At this point, the two Strikers now operate as one. Should one ever fail, the other can be used in its place.

Configuring the First Node

An Anvil! node is a pair of subnodes acting as one machine, providing full redundancy to hosted servers.

The process of configuring a node is;

- Initialize each subnode

- Configure backing UPSes/PDUs

- Create an "Install Manifest"

- Run the Manifest

Initializing Subnodes

To initialize a node (or DR host), we need to enter its current IP address and current root password. This allows Striker to log into it and update the subnode's configuration to use the databases on the Striker dashboards. Once this is done, all further interaction with the subnodes will be via the database.

Click on the Striker logo to open the menu, and then click on Anvil.

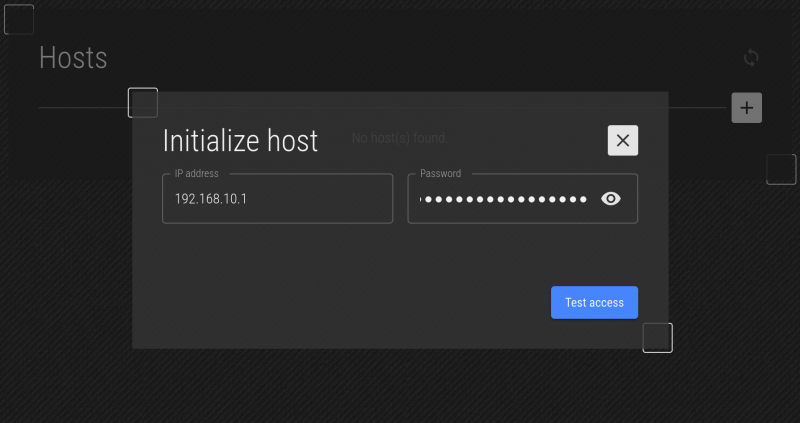

Enter the IP address you set (or that was set by DHCP) when installing an-a01n01, and enter the root user password you set. There is no default password. Then click on "Test access".

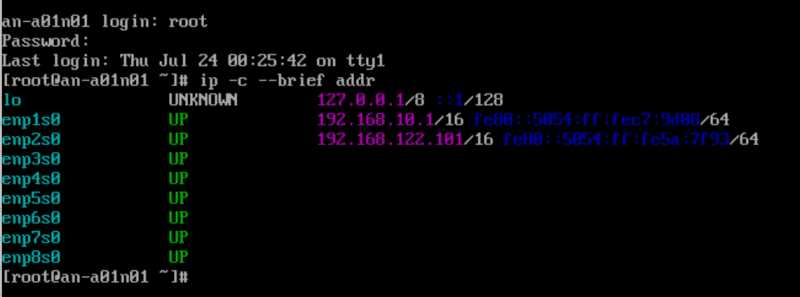

| Note: If you're not sure what the IP of the subnode is, you can run the command "ip -c --brief addr" to get a list of the current IPs. |

The IP address is the one that you assigned during the initial install of the OS on the subnode. If you left it as DHCP, you can check to see what the IP address is using the ip addr list command on the target subnode. In the example above, we see the IP is set to 192.168.10.1.
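If you would rather script this check than read the output by eye, the following is a minimal sketch that parses text in the layout printed by `ip --brief addr` (interface name, state, then zero or more addresses). The sample text is typed by hand for illustration, not captured from a real subnode, and the function name is hypothetical. When capturing output for a script, it is probably best to drop the `-c` switch so that colour escape codes don't end up in the text.

```python
def parse_brief_addr(output):
    """Parse `ip --brief addr` style text into a dict keyed by interface name."""
    interfaces = {}
    for line in output.splitlines():
        fields = line.split()
        if len(fields) < 2:
            continue  # skip blank or malformed lines
        name, state, *addresses = fields
        interfaces[name] = {"state": state, "addresses": addresses}
    return interfaces


# Hand-written sample in the same column layout (an assumption, not real output).
SAMPLE = """\
lo               UNKNOWN        127.0.0.1/8 ::1/128
enp1s0           UP             192.168.10.1/16
enp2s0           DOWN
"""

parsed = parse_brief_addr(SAMPLE)
for name, info in parsed.items():
    print(name, info["state"], info["addresses"])
```

An interface with no address, like enp2s0 in the sample, simply ends up with an empty address list.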

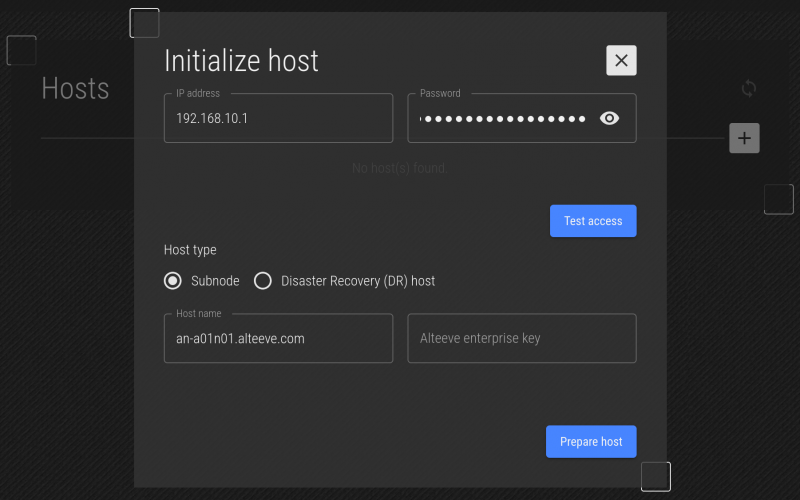

The current host name of the target is shown, to help you confirm that you connected to the host you suspected. You can change it here if you want, but generally it's not recommended unless you're correcting a bad host name from the initial OS install.

Lastly, if you want to setup an Alteeve Enterprise key and didn't do so during the OS install, you can set it here.

| Note: If you're installing on RHEL host that has not yet been activated, you will have the option to enter your RHEL login credentials. This will register the system before installing the packages. |

We're happy with this, click on Prepare Host.

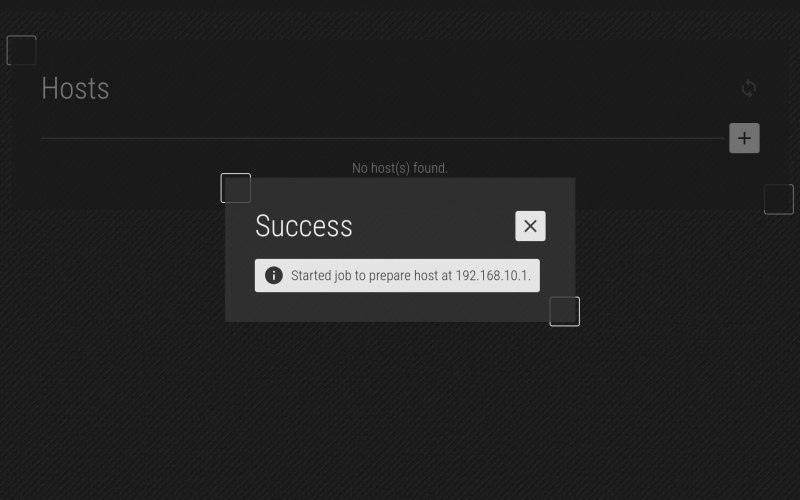

The plan to initialize is presented. If you're happy, click on Prepare. The job will be saved, and you can then repeat until your subnodes are initialized.

You'll see that the job has been saved! Click on the "X" to close this and return to the Hosts menu.

| Note: It could take a minute or three for the subnode to connect to the database. Please be patient. |

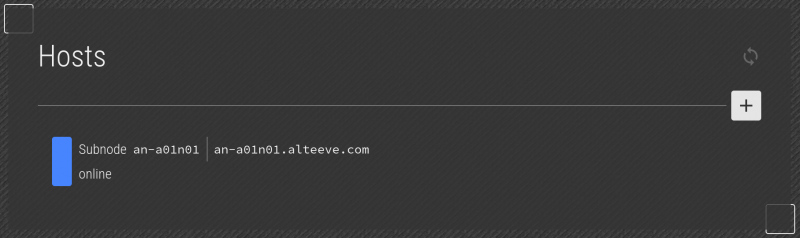

After a few moments, the subnode will appear.

Repeat this process to initialize the remaining subnode(s) and DR host(s).

The Anvil! uses a jobs processor to handle requests from the user interface. In most cases, this is not a concern for the user of Striker, but it is important for debugging issues.

In the screenshot above, the "hamburger menu" at the top right shows a short summary of any running or recently completed jobs.

When there is a problem, the job progress pie goes red. If you click on the job summary, you will be able to see the full details, including log output.
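To make the idea of a jobs processor more concrete, here is a small, purely illustrative sketch. It is not how the Anvil! daemon is implemented; the `Job` class, its fields and the status names are all assumptions made for this example. It only shows the general pattern: the interface saves a job, something picks it up later, and the outcome (including log output) is recorded on the job.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Job:
    name: str
    task: Callable[[], None]      # the work to do; raises an exception on failure
    progress: int = 0             # percent complete, 0 to 100
    status: str = "queued"        # queued, running, complete or failed
    log: List[str] = field(default_factory=list)


def run_pending(jobs):
    """Run every queued job once, recording the outcome on the job itself."""
    for job in jobs:
        if job.status != "queued":
            continue
        job.status = "running"
        try:
            job.task()
        except Exception as error:
            # A failed job keeps its log so the problem can be reviewed later.
            job.status = "failed"
            job.log.append(f"{job.name} failed: {error}")
        else:
            job.status = "complete"
            job.progress = 100
            job.log.append(f"{job.name} finished")


def missing_entitlement():
    # Stand-in for a task that cannot complete.
    raise RuntimeError("HA entitlement not available")


queue = [
    Job("initialize an-a01n01", lambda: None),
    Job("initialize an-a01n02", missing_entitlement),
]
run_pending(queue)
for job in queue:
    print(job.name, job.status, job.log)
```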

In this example, we're trying to initialise a RHEL subnode and, for whatever reason, the "High-Availability Add-on" entitlement failed. Without it, anvil-node was unable to install, as the HA dependencies were not met. To fix this, we purchase (or release a no-longer needed) entitlement. Re-initialize the subnode once that's done, and the install will complete.

Here we see the new job running with the previous failed job below it.

Here we see the details of the second job after it completed successfully.

Once you've got the subnodes (and DR host, if applicable) initialized, we can move on to mapping the network.

Network Mapping

With the two subnodes and a DR host initialized, we can now configure their networks!

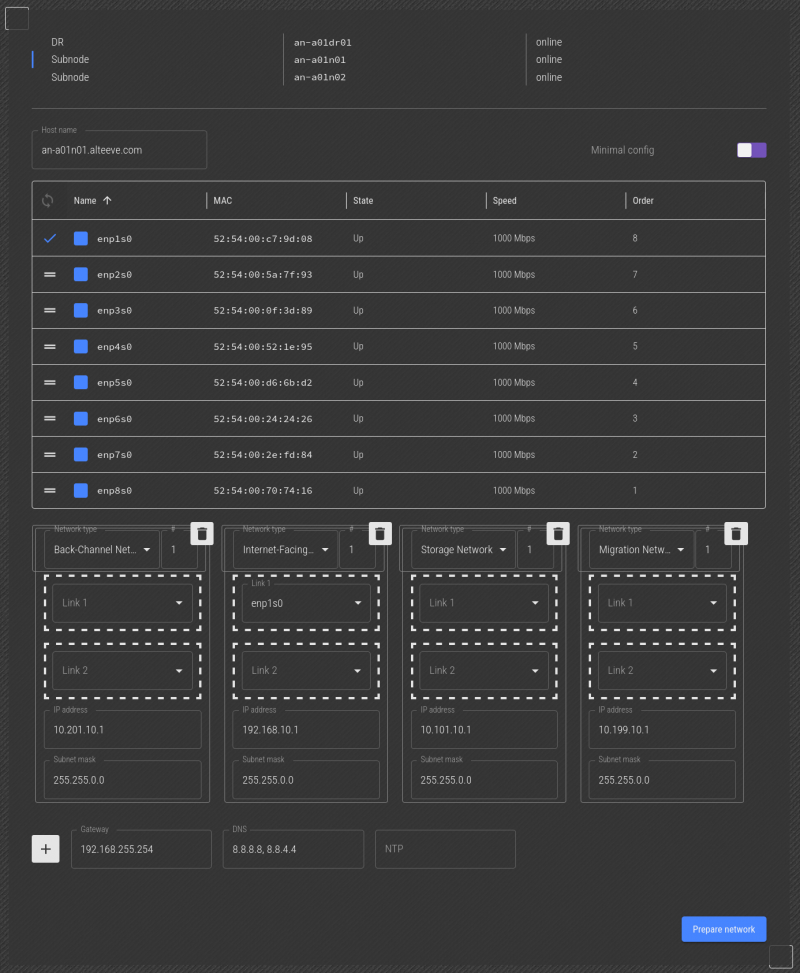

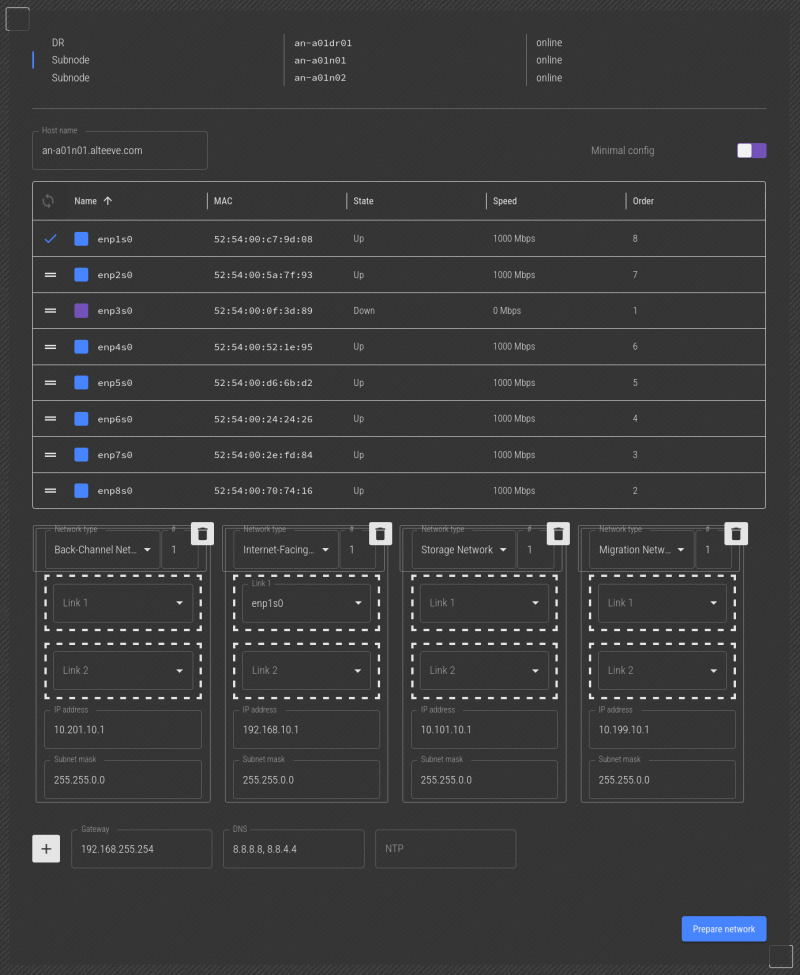

Full Anvil! subnodes must use redundant networks, specifically, active-backup bonds. They must have network connections in the Back-Channel, Internet-Facing and Storage Networks. In our example here, we're also going to create a connection in the Migration Network, as we've got 8 interfaces to work with.

| Note: The network mapping function provides a way to map physical network ports to MAC addresses. This works by unplugging a cable, and seeing the corresponding network interface name go offline. This works over a network, and can sometimes not work when the interface being unplugged was in use. Another way to do this is to run 'anvil-monitor-network' at the console of the node while unplugging and plugging in network interfaces. |

With our subnodes initialized, we can now click on Prepare Network. This opens up a list of subnodes and DR hosts. In our case, there are three unconfigured hosts; an-a01n01, an-a01n02 and the DR host an-a01dr01.

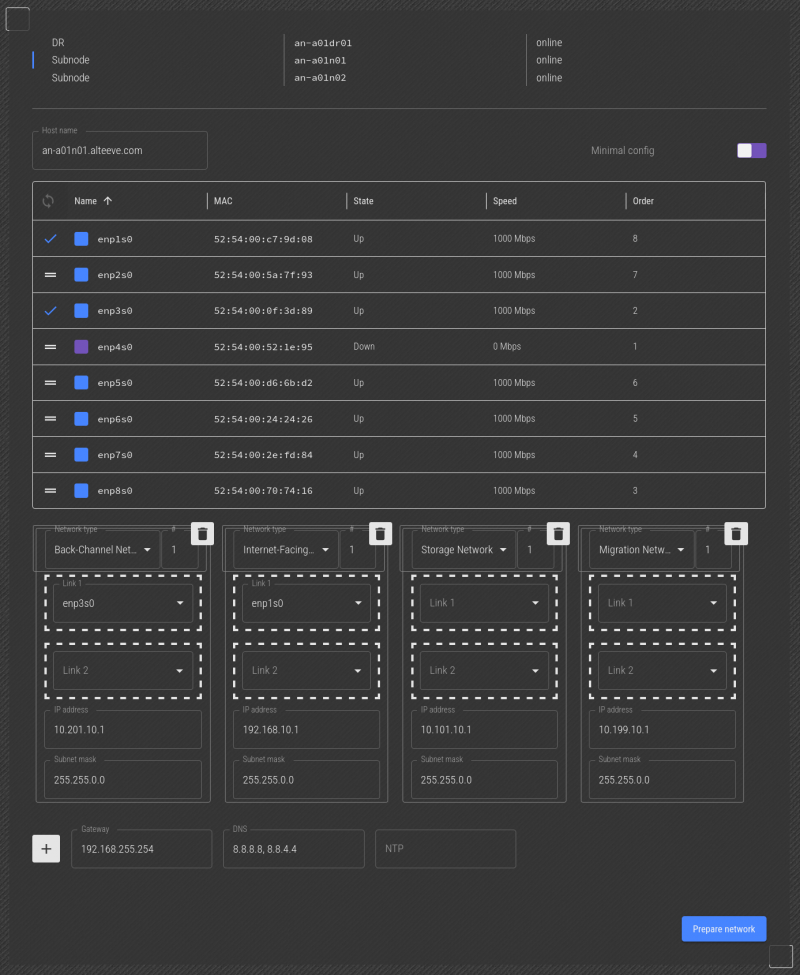

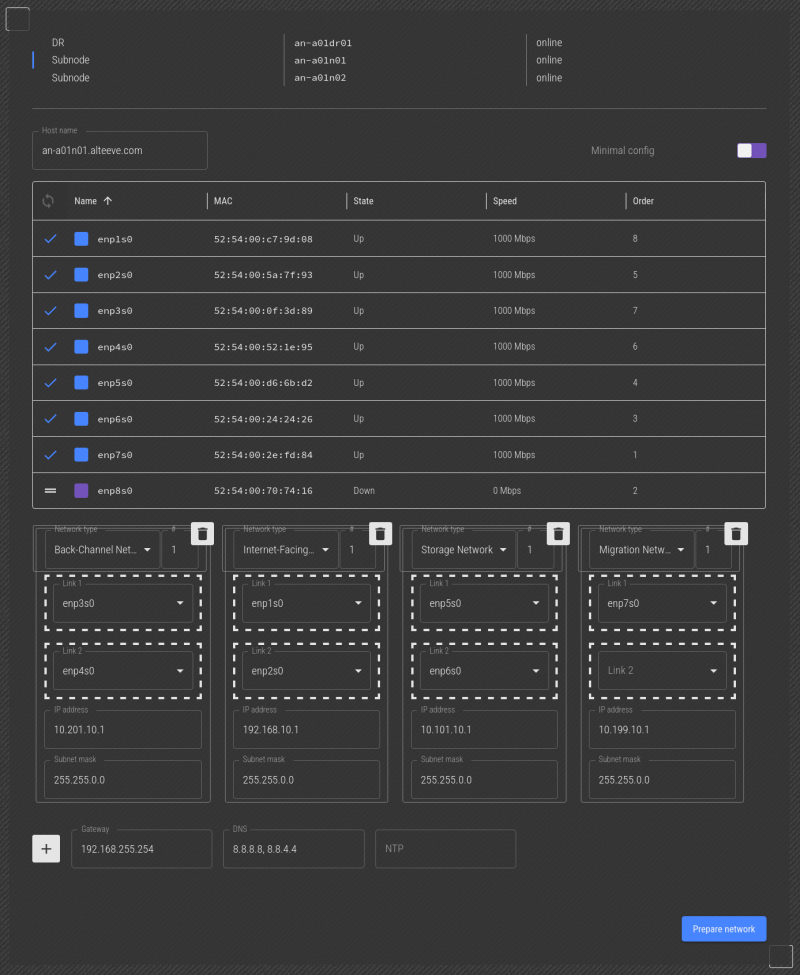

We'll start with the subnode an-a01n01. Click on its name on the left to select it for configuration.

This works the same way as how we configured the Striker, except for two things. First, and most obvious, there are a lot more interfaces to work with.

Second, and more importantly, when we unplug the cable that the Striker dashboard is using to talk to the node, we will lose communication for a while. If that happens, you will not see the interface go down. When this happens, plug the interface back in, and move on to the next interface. When you're done mapping the network, only one interface and one slot will be left.
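The unplug-and-watch approach described here can be sketched in a few lines. This is only an illustration of the logic, under the assumption that link states are available as simple "up"/"down" strings; the function names are hypothetical and are not part of the Anvil! tools.

```python
def find_unplugged(before, after):
    """Return interfaces that were up in `before` and are down in `after`."""
    return sorted(
        name for name, state in before.items()
        if state == "up" and after.get(name) == "down"
    )


def map_link(before, after):
    """Return the one interface that went down, or None if unclear."""
    changed = find_unplugged(before, after)
    if len(changed) == 1:
        return changed[0]
    return None  # nothing seen (for example, connection lost) or ambiguous


def last_unmapped(all_interfaces, mapped):
    """By elimination, return the single interface not yet mapped, if any."""
    remaining = sorted(set(all_interfaces) - set(mapped))
    return remaining[0] if len(remaining) == 1 else None


before = {"enp1s0": "up", "enp2s0": "up", "enp3s0": "up", "enp4s0": "up"}
after = dict(before, enp3s0="down")   # the cable to enp3s0 was pulled
bcn1_link1 = map_link(before, after)
print(bcn1_link1)
```

When the unplugged cable is the one carrying the connection to the subnode, no change is seen and `map_link` returns `None`. That interface is skipped and identified at the end by elimination, which is what `last_unmapped` does.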

Let's start! Here, the network interface we want to make "BCN 1 - Link 1" is unplugged. With the cable unplugged, we can see that the enp3s0 interface is down. So we know that is the link we want. Click and drag it to the "Back-Channel Network 1" -> "Link 1" slot.

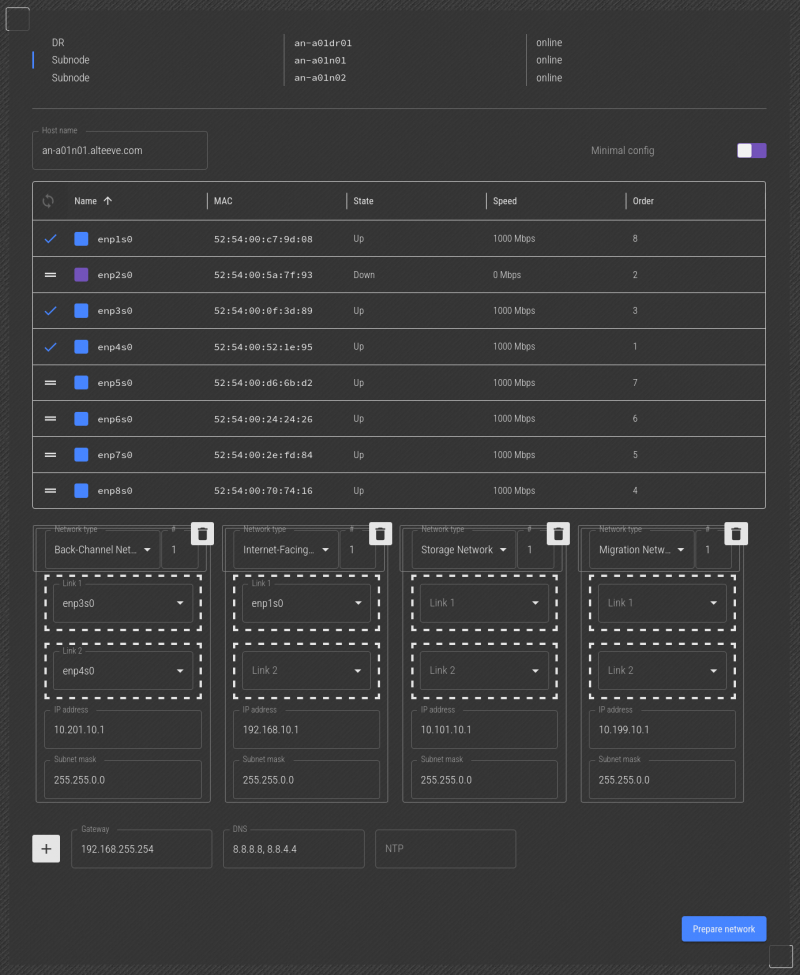

Next we see that enp4s0 is unplugged. So now we can drag that to the "Back-Channel Network 1" -> "Link 2" slot.

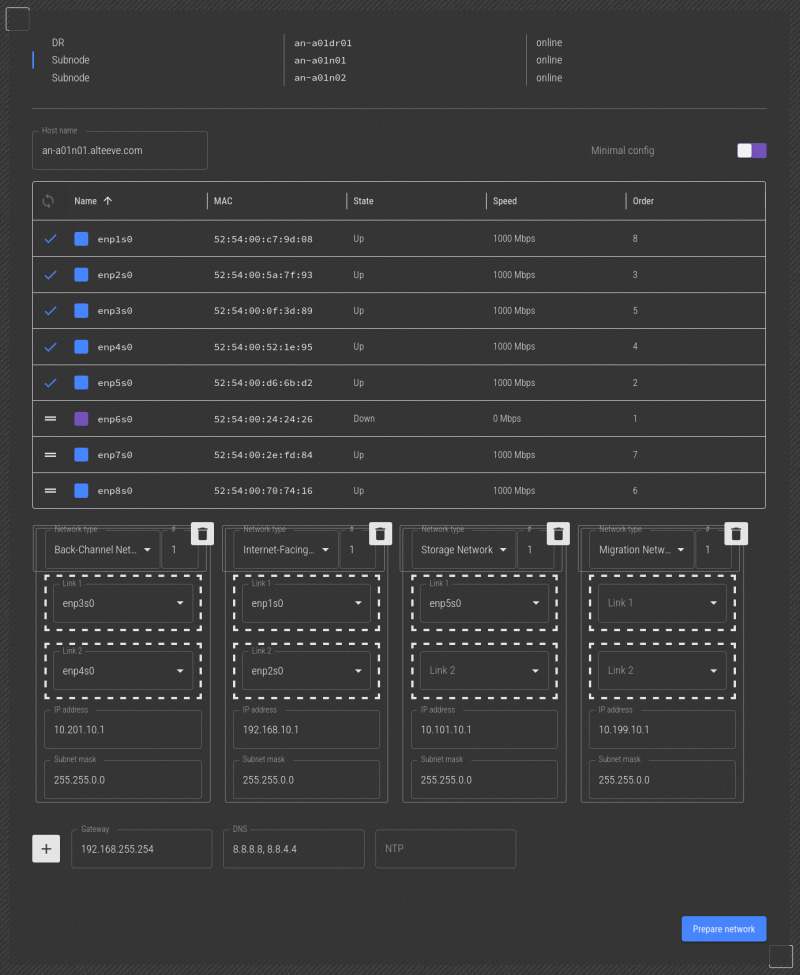

Now, we ran into the issue mentioned above. When we unplugged the cable to the interface we want to make "Internet-Facing Network, Link 1", we lost connection to the subnode, and could not see the interface go offline. So we'll skip it, plug it back in, and unplug the interface we want to be "Internet-Facing Network, Link 2".

Next we see that enp2s0 is unplugged. So now we can drag that to the "Internet-Facing Network 1" -> "Link 2" slot. This is a pretty good indication that the program's guess of enp1s0 as Link 1 was correct.

Next, we'll unplug the cable going to the network interface we want to use as "Storage Network, Link 1". We see that enp5s0 is down, so we'll drag that to the "Storage Network 1" -> "Link 1" slot.

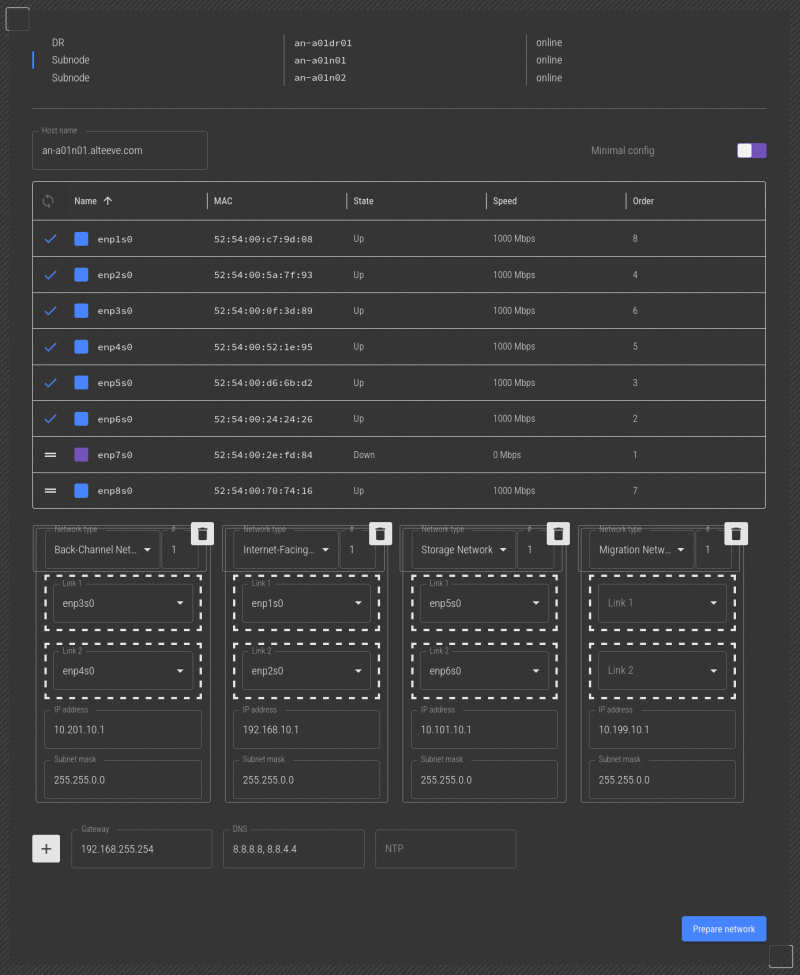

Next, we'll unplug the cable going to the network interface we want to use as "Storage Network, Link 2". We see that enp6s0 is down, so we'll drag that to the "Storage Network 1" -> "Link 2" slot.

| Note: If you only have six interfaces, this is where you'll be finished. In our example, we have 8, so the migration network is available. |

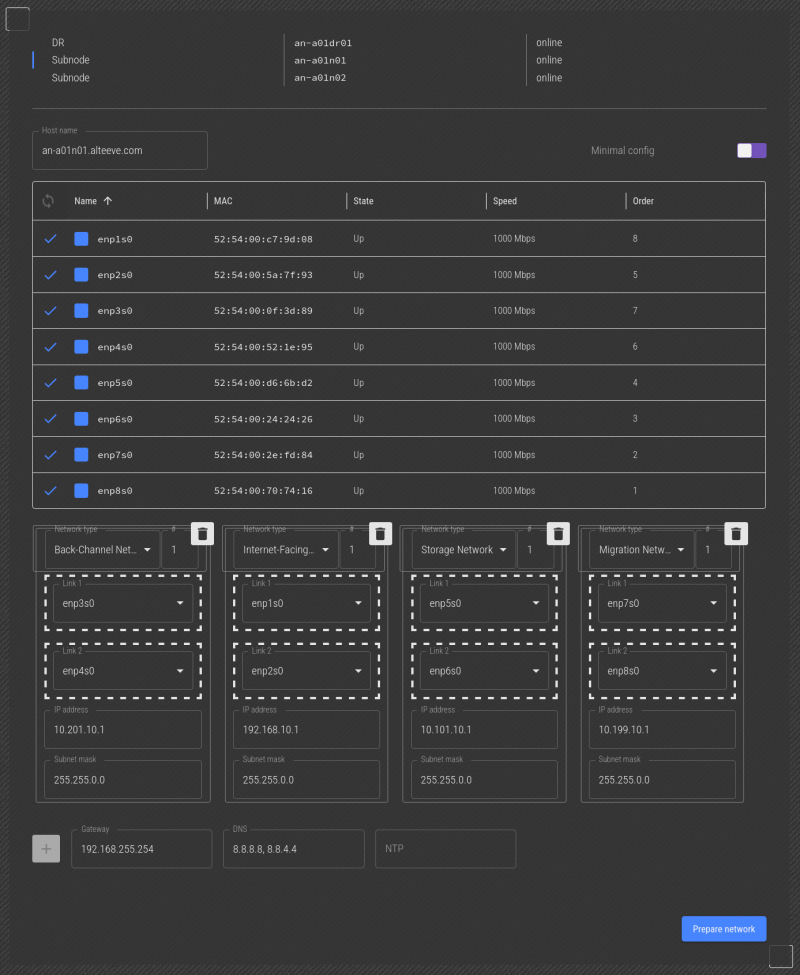

For the last network, we've unplugged the network cable going to the "Migration Network, Link 1" and we see enp7s0 is down. So we can drag that into the "Migration Network 1" -> "Link 1" slot.

Lastly, we unplugged the network cable going to the "Migration Network, Link 2" and we see enp8s0 is down. So we can drag that into the "Migration Network 1" -> "Link 2" slot.

With that, the only interface we didn't actually see disconnect was enp1s0, so we can confirm that the guess that it was "Internet-Facing Network" -> "Link 1" was correct.

That's it, all our network interfaces have now been identified!

Assigning IP Addresses

The last step is to verify/assign IP addresses. The subnet prefixes for all but the IFN are set, so you can add the IPs you want. Given this is Anvil! node 1, the IPs we want to set are:

| host | Back-Channel Network 1 | Internet-Facing Network 1 | Storage Network 1 | Migration Network 1 |

|---|---|---|---|---|

| an-a01n01 | 10.201.10.1/16 | 192.168.10.1/16 | 10.101.10.1/16 | 10.199.10.1/16 |

| an-a01n02 | 10.201.10.2/16 | 192.168.10.2/16 | 10.101.10.2/16 | 10.199.10.2/16 |

| an-a01dr01 | 10.201.10.3/16 | 192.168.10.3/16 | 10.101.10.3/16 | n/a |

| Note: You don't need to specify which network the default gateway is on. The gateway IP you enter will be matched against the IPs and subnet masks automatically to determine which network the gateway belongs to. |

Enter all the IP addresses and their subnet masks, the gateway and the DNS servers to use. You can specify multiple DNS servers using a comma to separate them, like 8.8.8.8,8.8.4.4. The order they're written will become the order they're searched.
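As a rough illustration of how a gateway can be matched to a network from the IPs and subnet masks alone, here is a minimal sketch using Python's standard `ipaddress` module. It is not the Anvil! code. The network labels (`bcn1`, `ifn1` and so on) and the gateway address 192.168.255.254 are assumptions chosen for the example.

```python
import ipaddress


def network_for_gateway(gateway, interfaces):
    """Return the name of the network whose subnet contains the gateway IP."""
    gateway_ip = ipaddress.ip_address(gateway)
    for name, cidr in interfaces.items():
        if gateway_ip in ipaddress.ip_interface(cidr).network:
            return name
    return None  # the gateway is not reachable on any configured network


def parse_dns(value):
    """Split a comma-separated DNS list, keeping the order as written."""
    return [str(ipaddress.ip_address(item.strip())) for item in value.split(",")]


# The addresses planned for an-a01n01 in the table above.
an_a01n01 = {
    "bcn1": "10.201.10.1/16",
    "ifn1": "192.168.10.1/16",
    "sn1": "10.101.10.1/16",
    "mn1": "10.199.10.1/16",
}

gateway_network = network_for_gateway("192.168.255.254", an_a01n01)
dns_servers = parse_dns("8.8.8.8,8.8.4.4")
print(gateway_network, dns_servers)
```

Because the example gateway falls inside 192.168.0.0/16, it is matched to the Internet-Facing Network without the user having to say so.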

In our example above, all the guessed IPs were correct. So there are no changes needed.

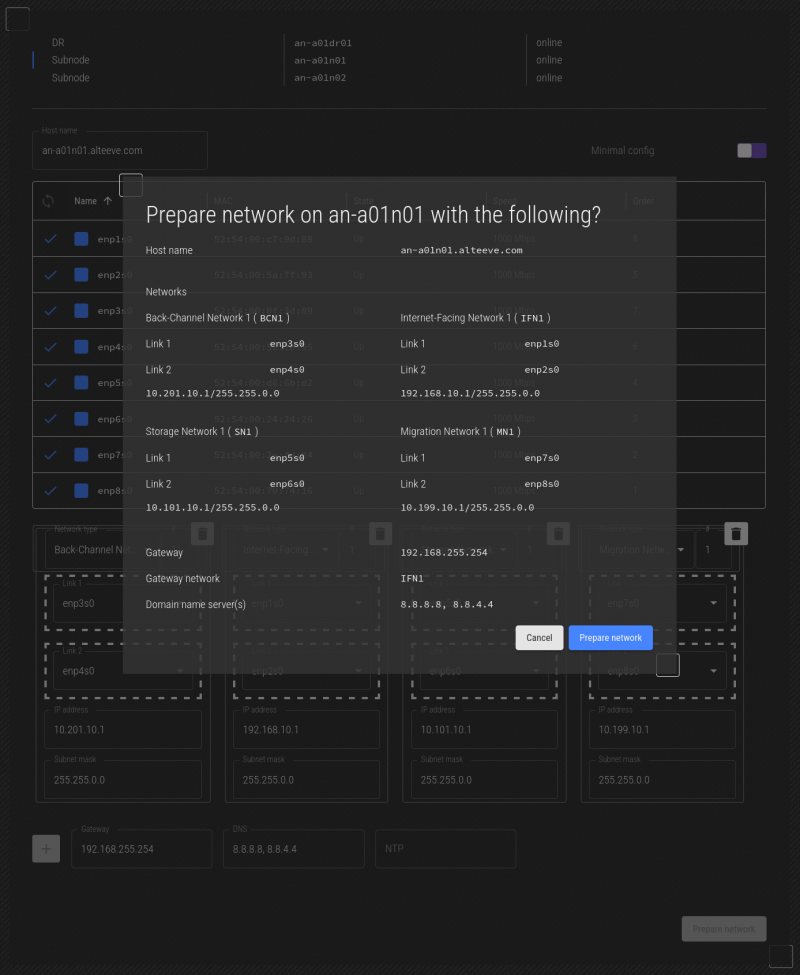

Double-check everything, and when you're happy, click on Prepare Network. You will be given a summary of what will be done.

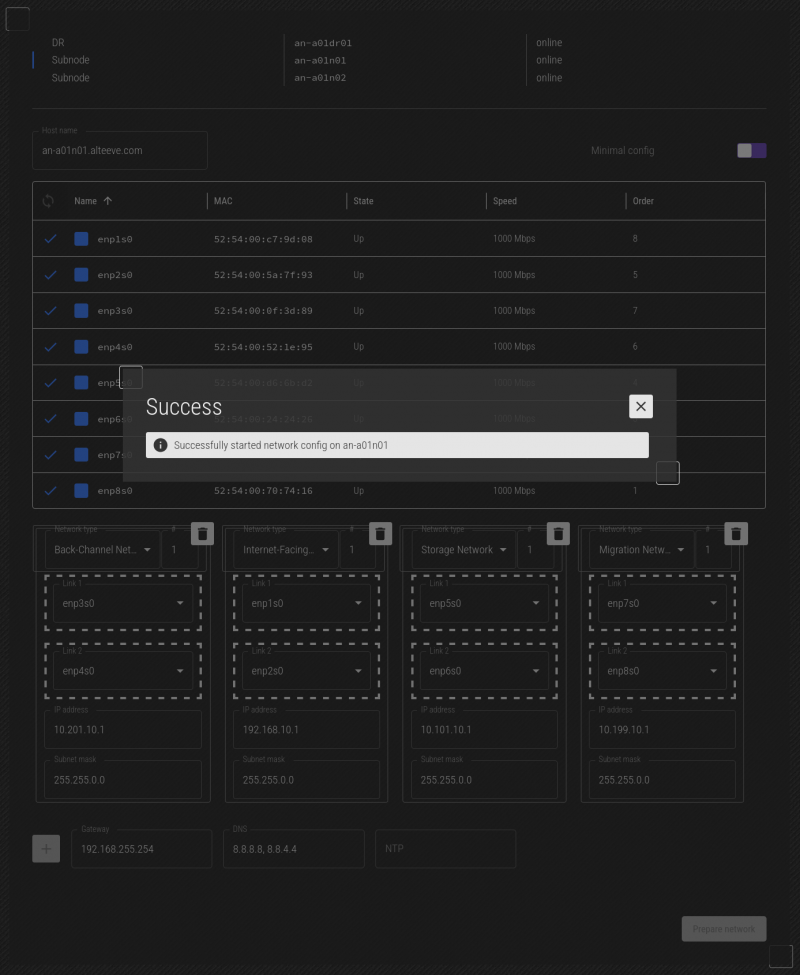

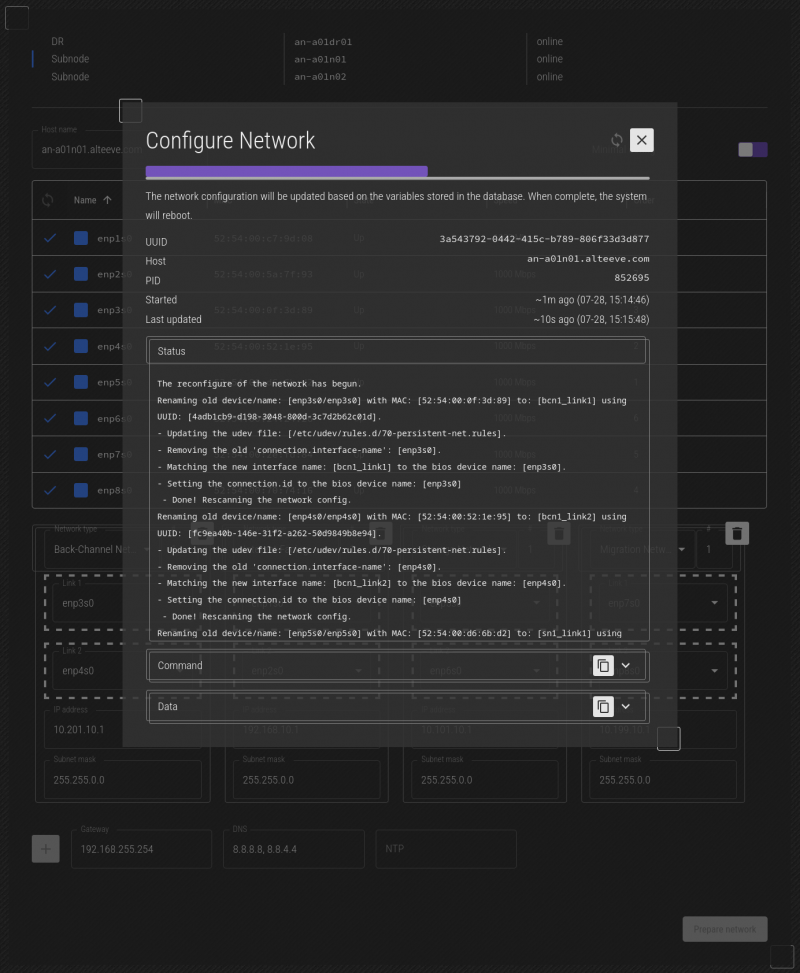

You can monitor the progress of the job by clicking on the "Jobs" icon at the top right of the screen.

If you click on the job itself, you can monitor the details of the job while it runs.

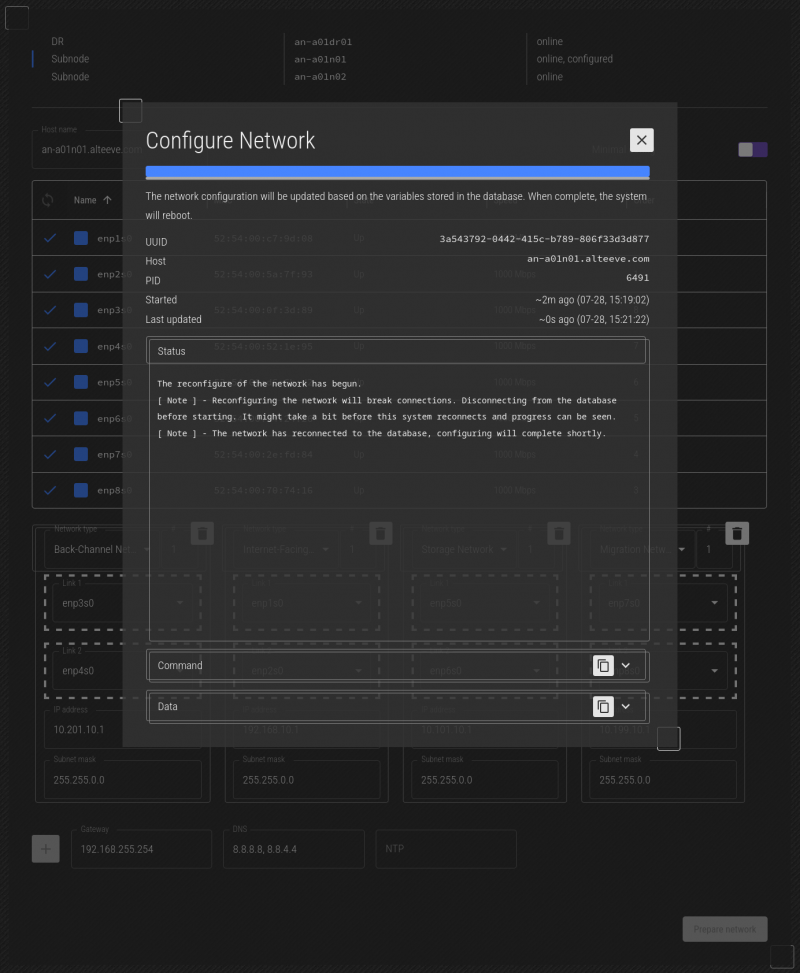

After a few moments, you should see the mapped machine, an-a01n01 in this case, reboot.

After reboot, the job "restarts", so if you watch carefully, you'll see the progress bar roll back, before completing. This is normal with network jobs.

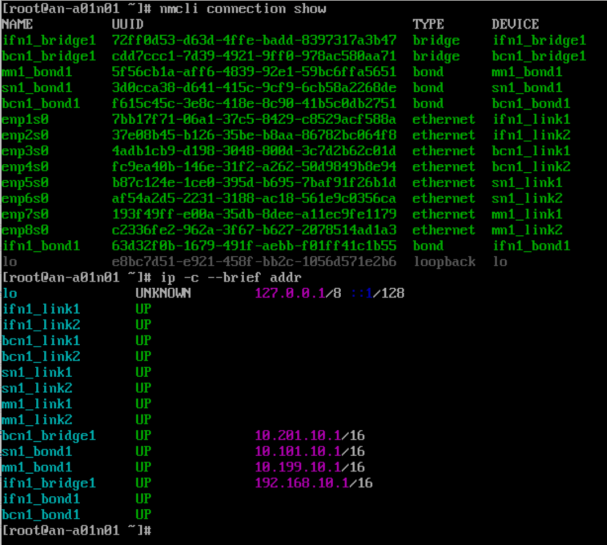

You do not need to log into it or do anything else at this point. If you're curious or want to confirm though, you can log into an-a01n01 and run nmcli connection show and / or ip -c --brief addr to see the new configuration.

| Note: Map the network on the other subnode(s) and DR host(s). Once all are mapped, we're ready to move on! |

Adding Fence Devices

Before we begin, let's review why fence devices are so important. Here's an article talking about fencing and why it's important;

If you've worked with High-Availability Clusters before, you may have heard that you need to have a third "quorum" node for vote tie breaking. We humbly argue this is wrong, and explain why here;

In our example Anvil! cluster, the subnodes have IPMI BMCs, and those will be the primary fence method. IPMI BMCs are special in that the Anvil! cluster can auto-detect and auto-configure them. So even though we're going to configure fence devices here, we do not need to configure the BMCs manually.

So our secondary method of fencing is using APC branded PDUs. These are basically smart power bars which, via a network connection, can be logged into and have individual outlets (ports) powered down or up on command. So, if for some reason the IPMI fence failed, the backup would be to log into a pair of PDUs (one per PSU on the target subnode) and cut the power. This way, we can sever all power to the target node, forcing it off.
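The ordering described here (IPMI first, then both PDUs as a backup) can be sketched as a simple fallback loop. This is an illustration only. The functions below are stand-ins that simulate success or failure; they do not talk to real hardware and are not the fence agents themselves.

```python
def fence(target, methods):
    """Try each fence method in order; return the name of the first that works."""
    for name, action in methods:
        if action(target):
            return name
    return None  # every method failed, so the target's state is still unknown


def pdu_off(target, pdus):
    """Cut power on every PDU feeding the target; succeed only if all confirm."""
    results = [pdu(target) for pdu in pdus]  # always ask every PDU
    return all(results)


# Simulated devices (assumptions for this example).
def failed_ipmi(target):
    return False  # pretend the BMC is unreachable


def pdu_ok(target):
    return True   # pretend the outlet was switched off and confirmed


methods = [
    ("ipmi", failed_ipmi),
    ("pdu", lambda target: pdu_off(target, [pdu_ok, pdu_ok])),
]
used = fence("an-a01n02", methods)
print(used)
```

The PDU method only counts as a success when both PDUs confirm the outlet is off, since a subnode with two power supplies stays up if either feed is still live.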

At this point, we're not going to configure nodes and ports. The purpose of this stage of the configuration is simply to define which PDUs exist. So let's add a pair.

We're going to be configuring a pair of APC AP7900B PDUs.

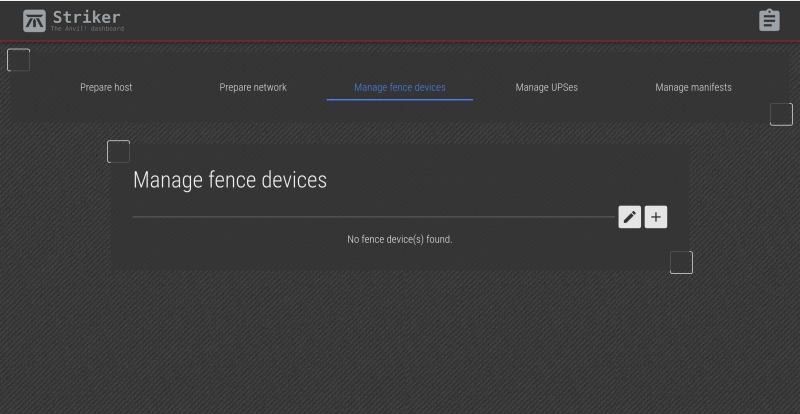

Clicking on the Manage Fence Devices section of the top bar will open the Fence menu.

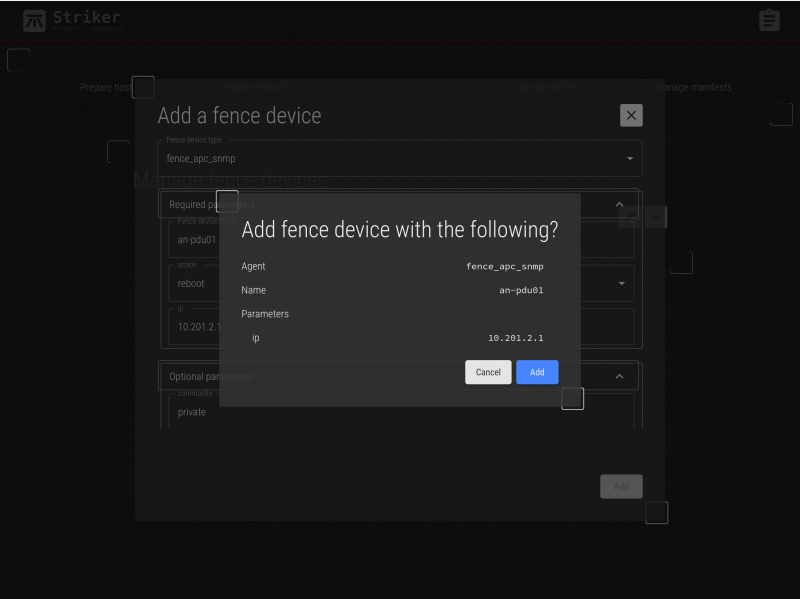

Click on the + icon on the right to open the new fence device menu.

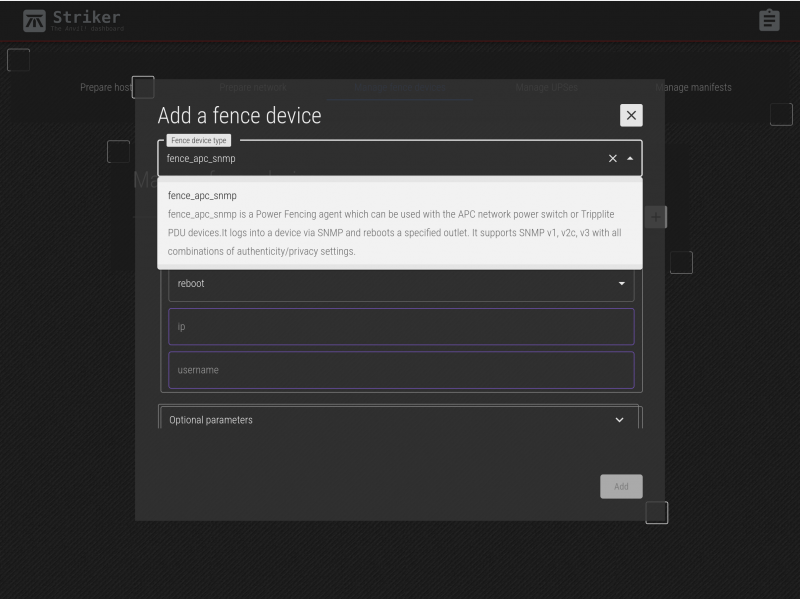

| Note: There are two fence agents for APC PDUs. Be sure to select 'fence_apc_snmp'! |

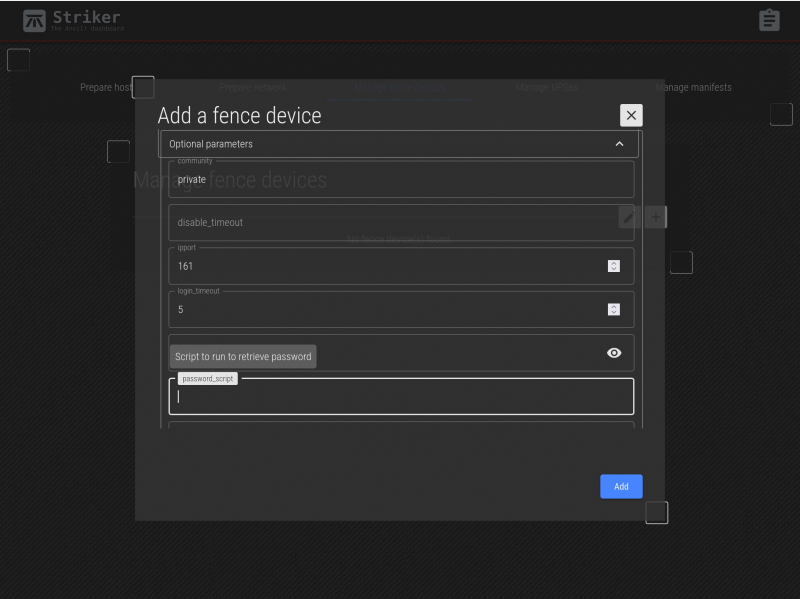

Every fence agent has a series of parameters that are required, and some that are optional. The required fields are displayed first, and the optional fields can be displayed by clicking on Optional Parameters to expand the list of those parameters.

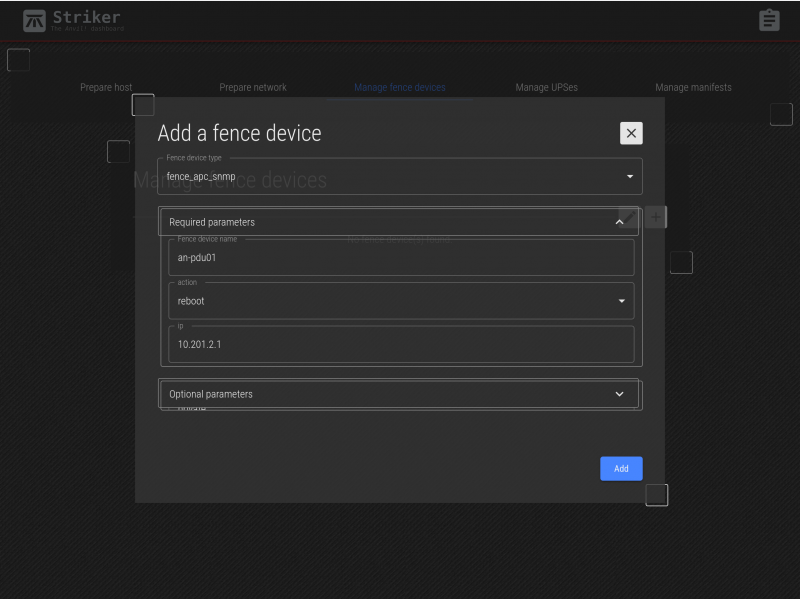

In our example, we'll first add an-pdu01 as the name, we will leave action as reboot, and we'll set the IP address to 10.201.2.1.

We do not need to set any of the optional parameters, but above we show what the menu looks like. Note how mousing over a field will display its help (as provided by the fence agent itself).

Click on Add and you will see the summary of what you entered. Check it over, and if you're happy, click on Add to save it.

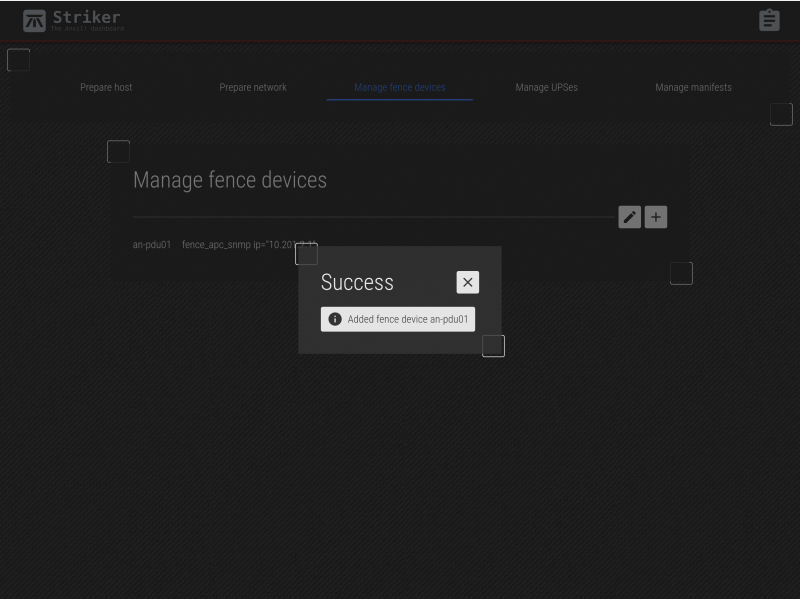

The new device is saved!

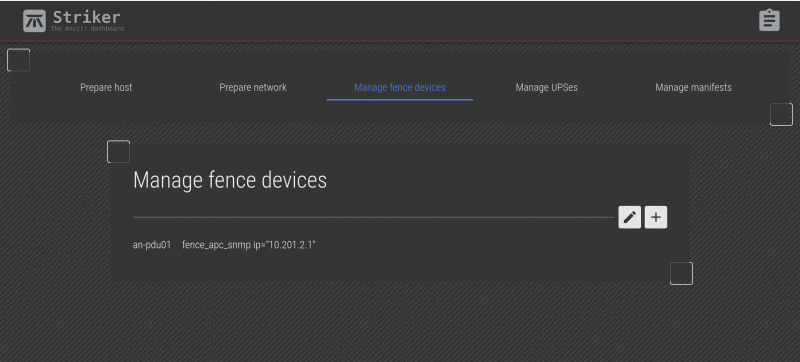

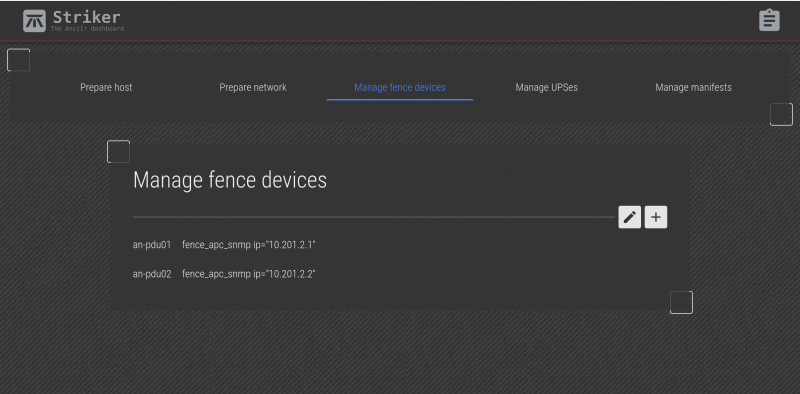

You can now see the PDU you created. Repeat the process to add additional fence devices.

Now we can see the two new fence devices that we added!

Adding UPSes

The UPSes provide emergency power in case mains power is lost. Just as important, Scancore uses the power status to monitor for power problems before they turn into power outages.

We're going to be configuring a pair of APC SMT1500RM2U UPSes.

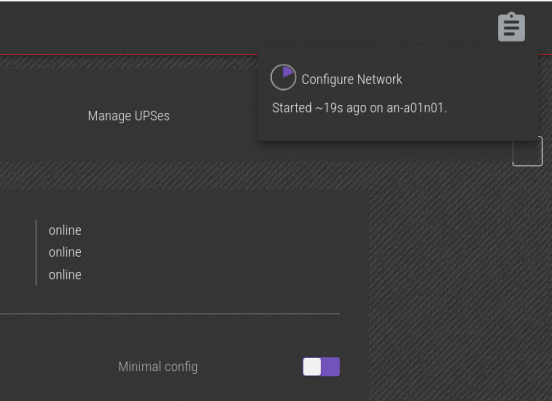

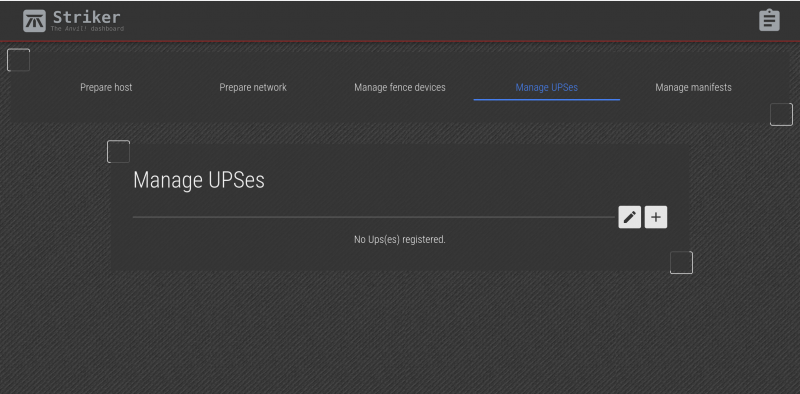

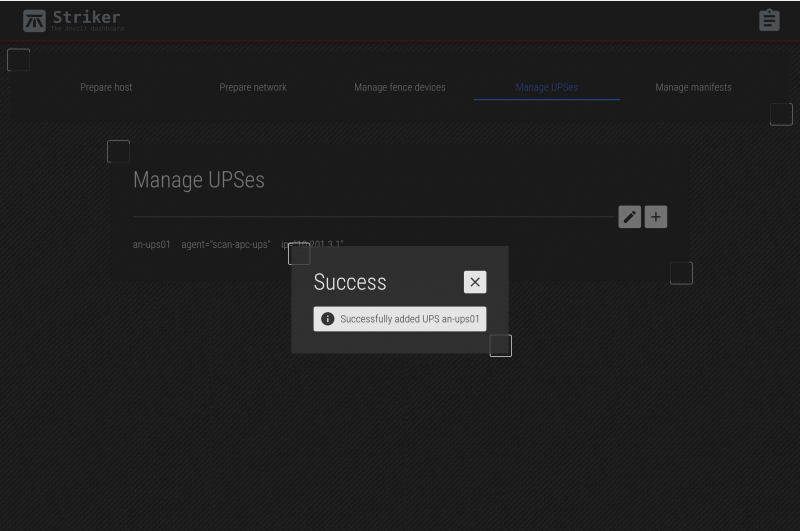

Clicking on the Manage UPSes tab brings up the UPSes menu.

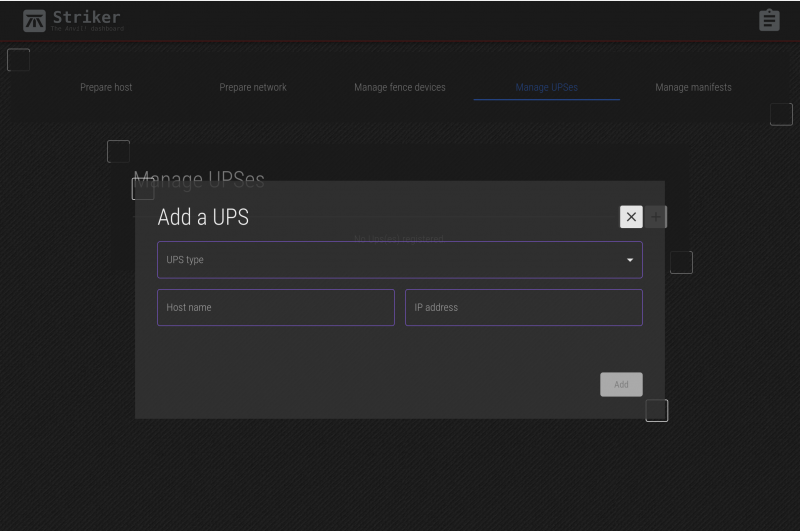

Click on + to add a new UPS.

| Note: At this time, only APC brand UPSes are supported. If you would like a certain brand to be supported, please contact us and we'll try to add support. |

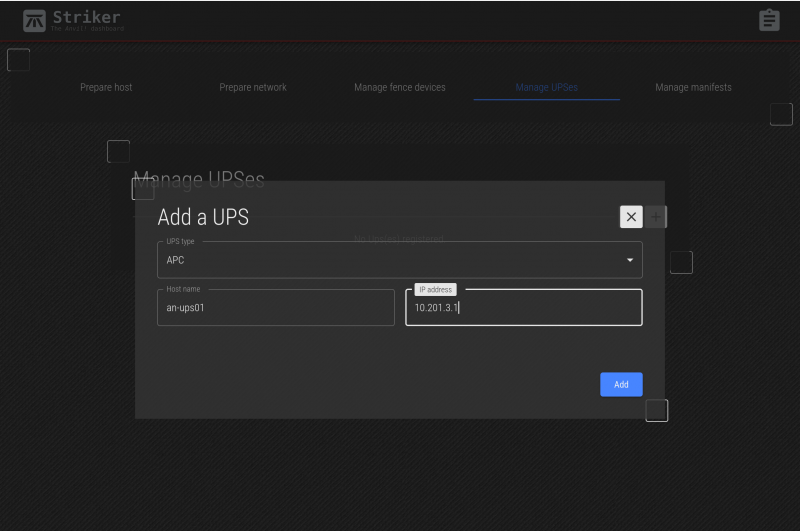

Select the APC and then enter the name and IP address. For our first UPS, we'll use the name an-ups01 and the IP address 10.201.3.1.

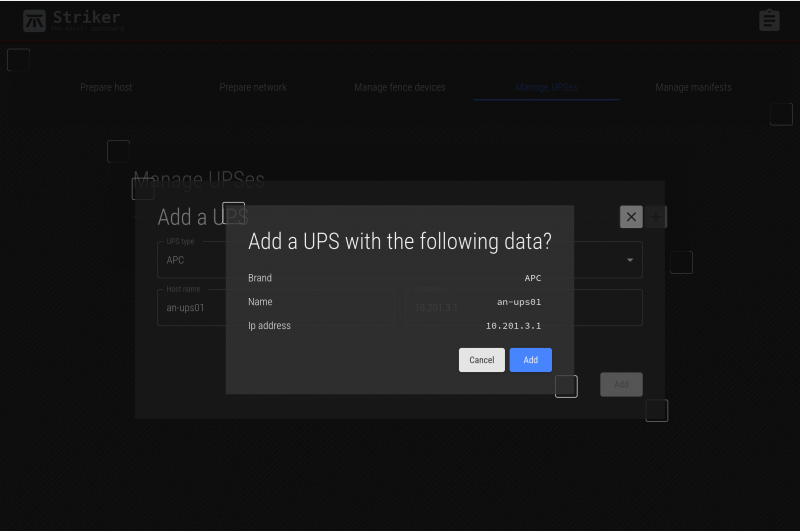

Click on Add and confirm that what you entered is accurate.

Click on Add again to save the new UPS.

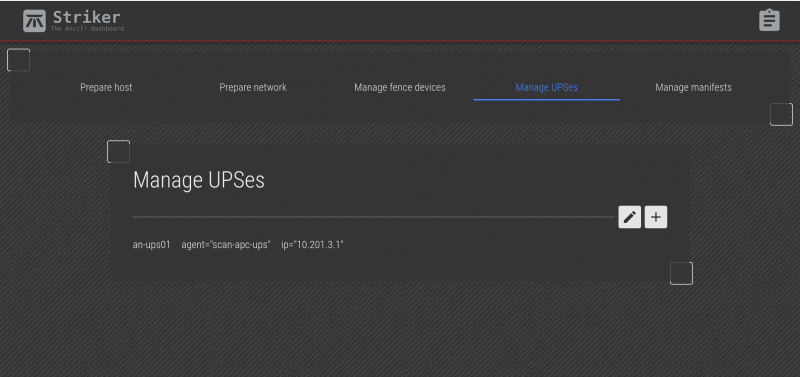

Close the confirmation, and you will see the new UPS.

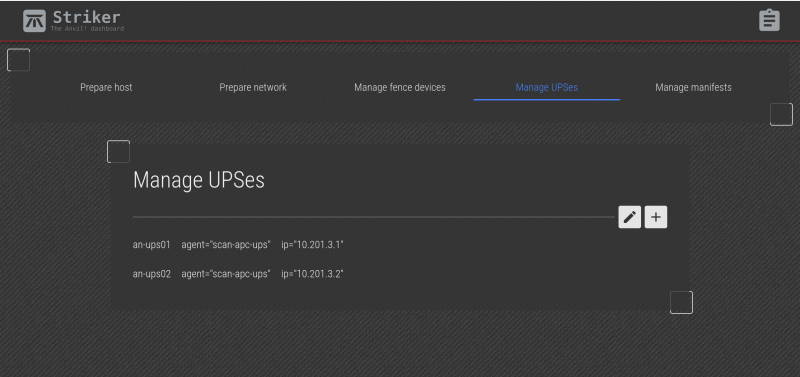

Repeat the process to add 'an-ups02'.

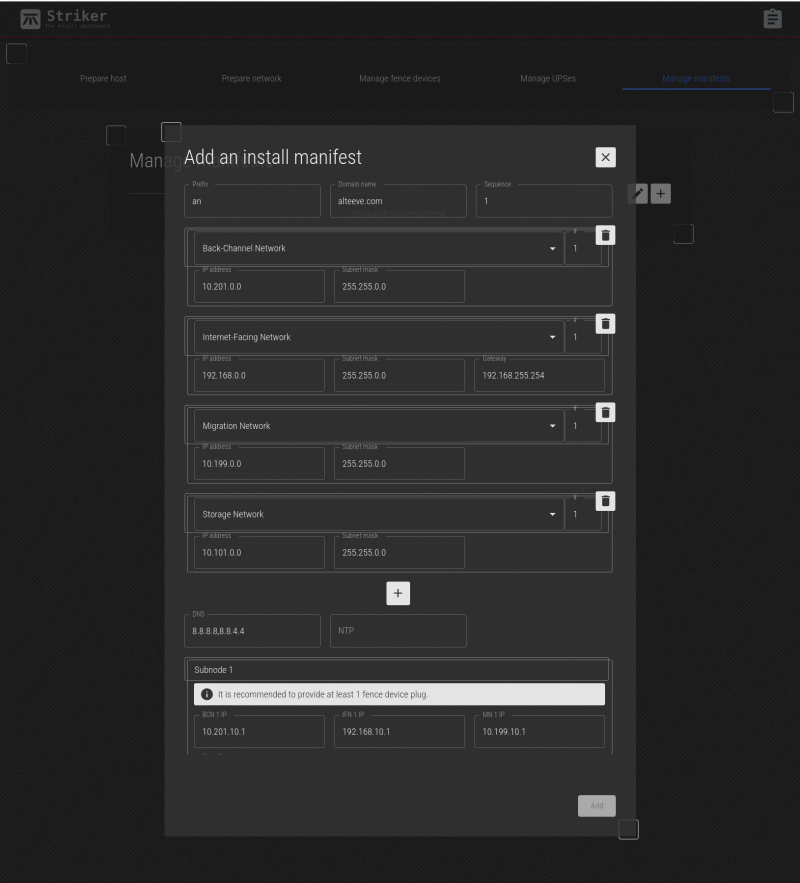

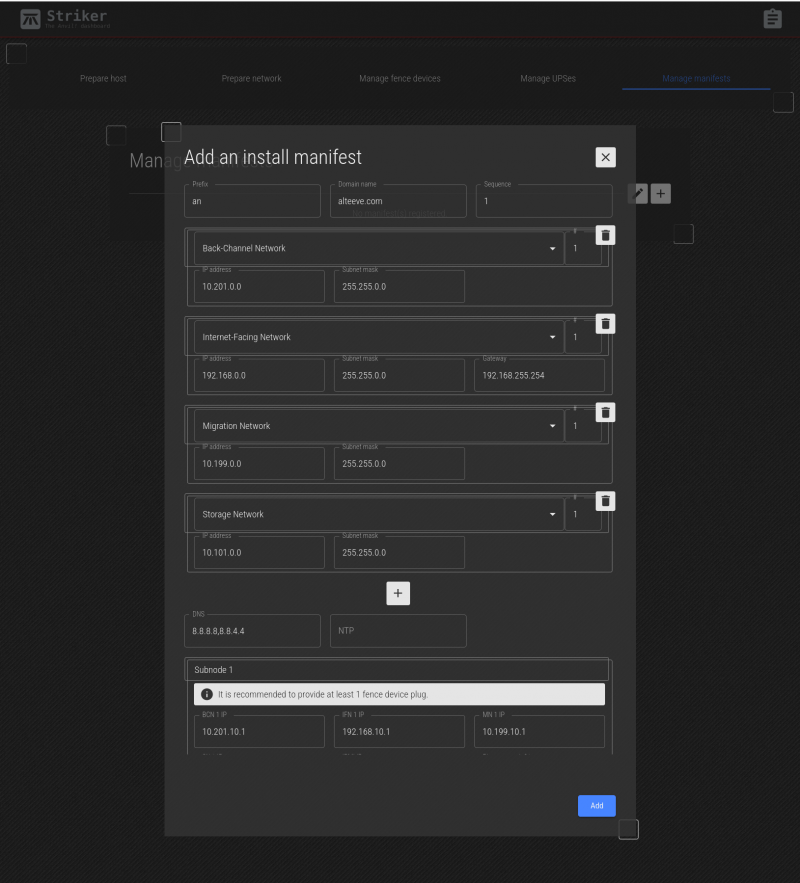

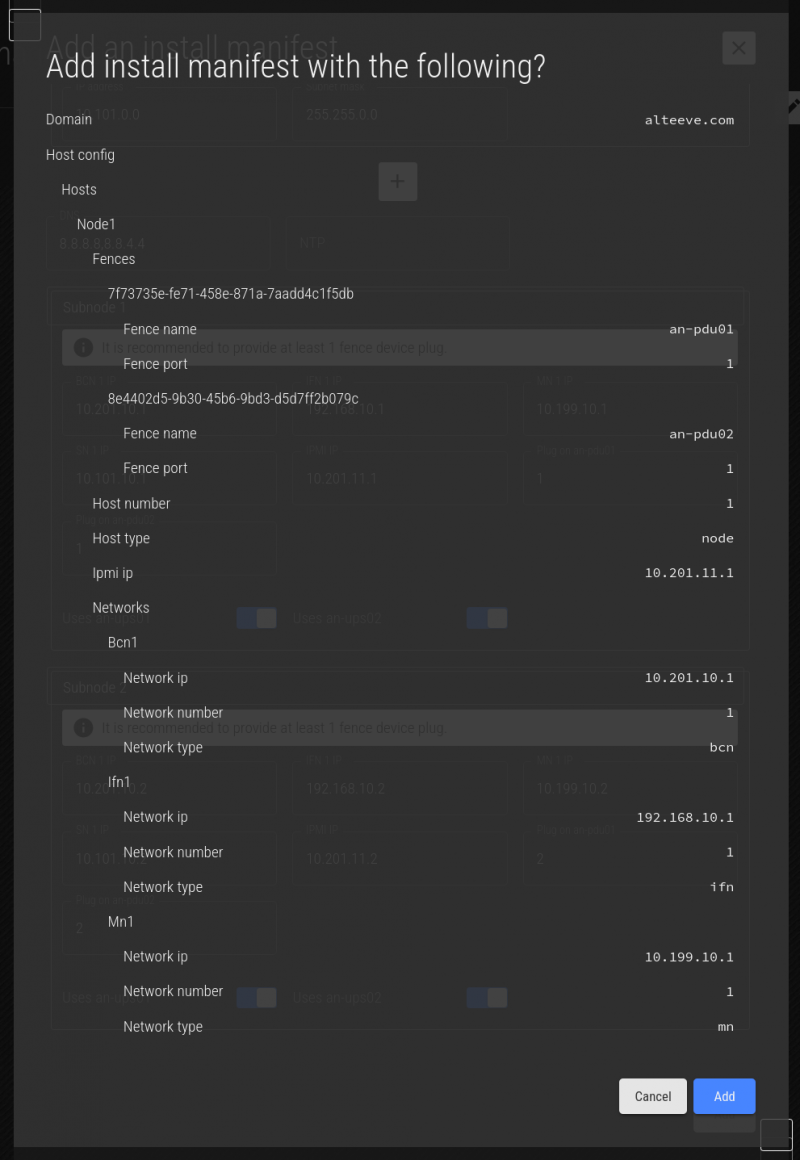

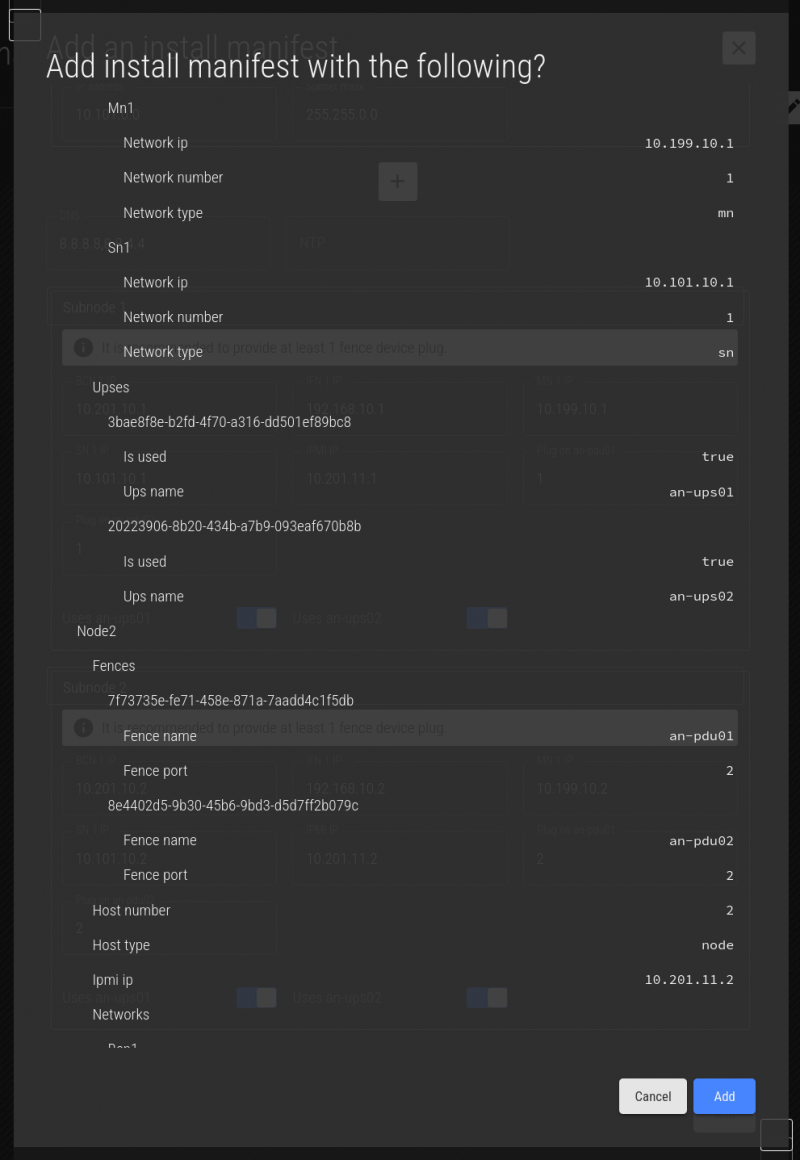

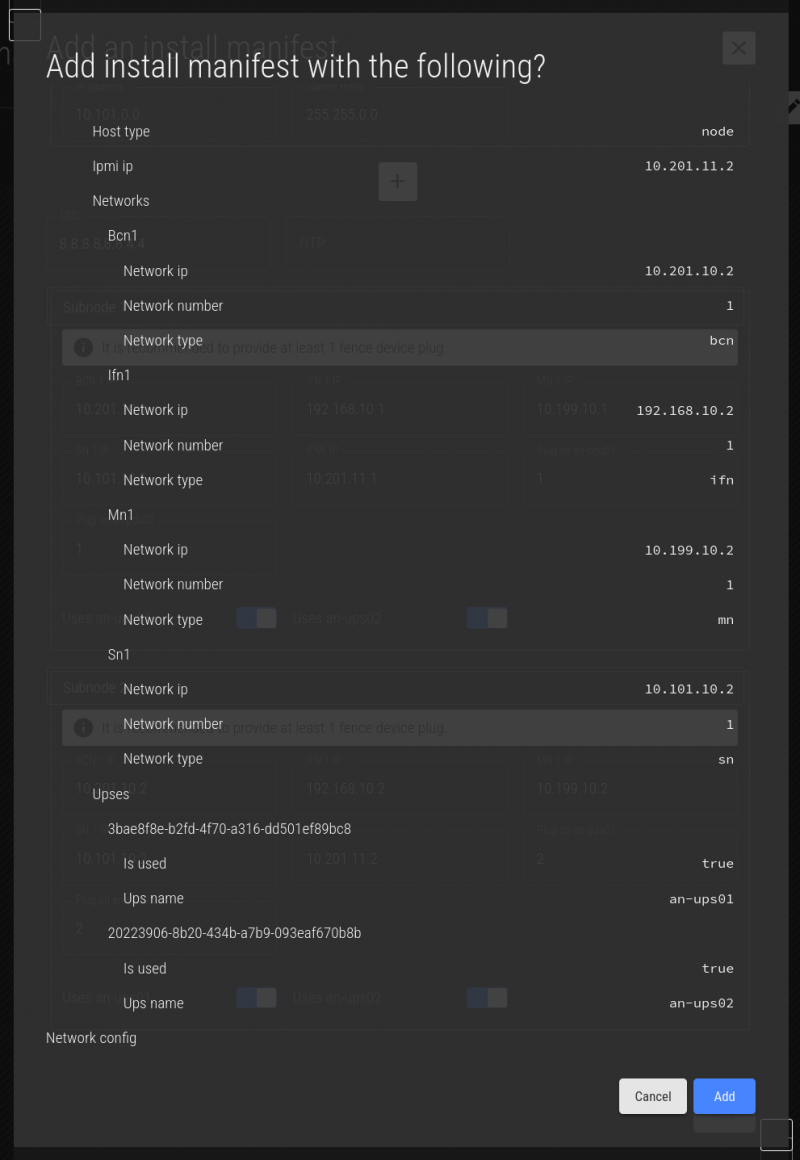

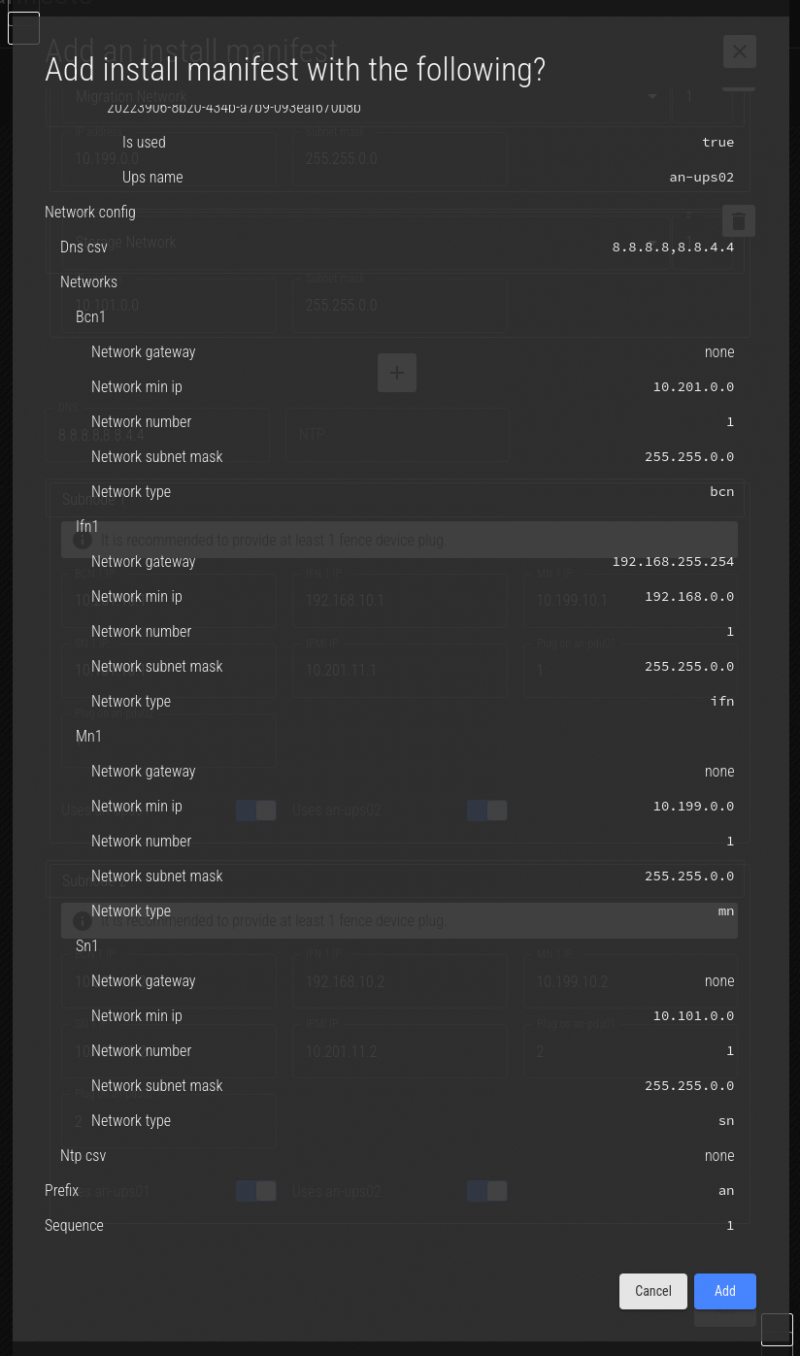

Creating an 'Install Manifest'

Before we start, we need to discuss what an "Install Manifest" is.

In the simplest form, an Install Manifest is a "recipe" that defines how a node is to be configured. It links specific machines to be subnodes, sets their host names and IPs, and links subnodes to fence devices and UPSes.

So let's start.

Click on the "Install Manifest" tab to open the manifest menu.

The manifest menu has several fields. Let's look at them;

Fields;

| Prefix | This is a prefix, 1 to 5 characters long, used to identify where the node will be used. It's a short, descriptive string attached to the start of short host names. |

|---|---|

| Domain Name | This is the domain name used to describe the owner / operator of the node. |

| Sequence | Starting at '1', this indicates the node's sequence number. This will be used to determine the IP addresses assigned to the subnodes. See Anvil! Networking for how this works in detail. |

| Networks Group | The first four network fields define the subnets, and are not where actual IPs are assigned. Below the default three networks is a + button to add additional networks. If you do not plan to use a given network, you can leave the default values and simply ignore the network. |

| DNS | This is where the DNS IP(s) can be assigned. You can specify multiple by using a comma-separated list, with no spaces. (ie: 8.8.8.8,8.8.4.4). |

| NTP | If you run your own NTP server, particularly if you restrict access to outside time servers, define your NTP servers here, as a comma-separated list if there are multiple. |

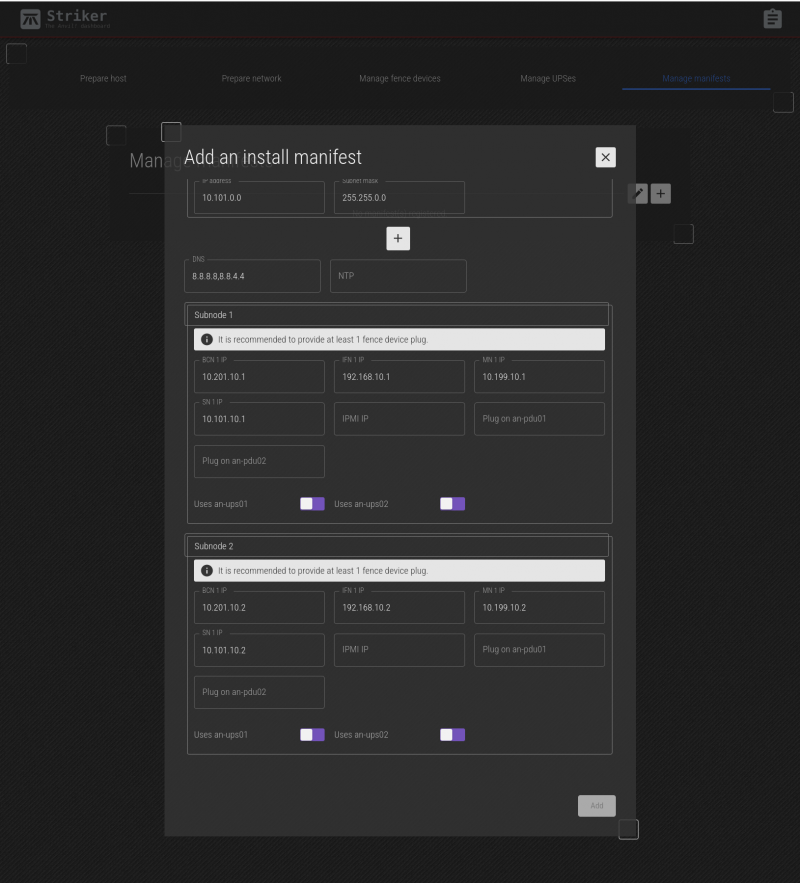

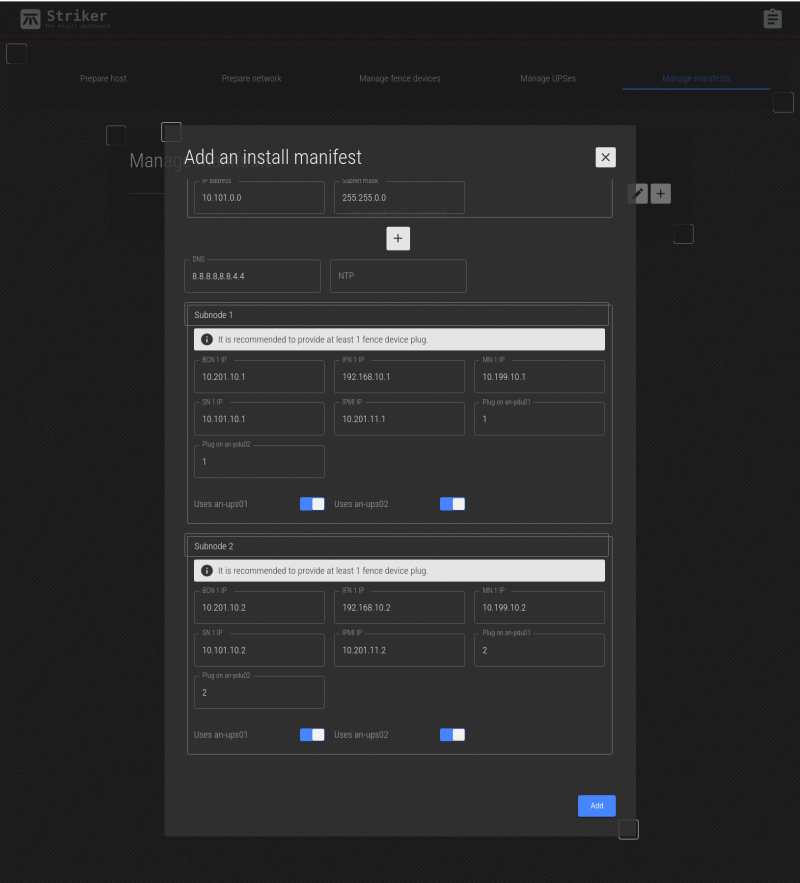

| Subnode 1 and 2 | This section is where you can set the IP address, a selection of which UPSes power the subnodes, and where needed, fence device ports used to isolate the subnode in an emergency. |

Below this are the networks being used on this Anvil! node. The BCN, SN and IFN are always defined. In our case, we also have a MN, so we'll click on the + to add it.
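To picture the manifest as a "recipe", here is a small sketch of the top-level fields as a data structure with a few sanity checks taken from the field descriptions above (prefix length, sequence starting at 1, DNS as a comma-separated list with no spaces). The class, its attribute names, the domain `example.com` and the `prefix-anvil-NN` naming shown by `name()` are assumptions for illustration. They are inferred from the example name an-anvil-01 and are not the actual Anvil! data model.

```python
import ipaddress
from dataclasses import dataclass
from typing import List


@dataclass
class InstallManifest:
    prefix: str                     # 1 to 5 characters
    domain: str                     # owner / operator domain name
    sequence: int                   # node sequence number, starting at 1
    dns: str = "8.8.8.8,8.8.4.4"    # comma-separated, no spaces
    ntp: str = ""                   # optional, comma-separated

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the fields look sane."""
        problems = []
        if not 1 <= len(self.prefix) <= 5:
            problems.append("prefix must be 1 to 5 characters long")
        if self.sequence < 1:
            problems.append("sequence starts at 1")
        if " " in self.dns:
            problems.append("DNS list must not contain spaces")
        else:
            for entry in self.dns.split(","):
                try:
                    ipaddress.ip_address(entry)
                except ValueError:
                    problems.append(f"invalid DNS IP: {entry!r}")
        return problems

    def name(self) -> str:
        # Assumed naming pattern, inferred from the example 'an-anvil-01'.
        return f"{self.prefix}-anvil-{self.sequence:02d}"


manifest = InstallManifest(prefix="an", domain="example.com", sequence=1)
print(manifest.name(), manifest.validate())
```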

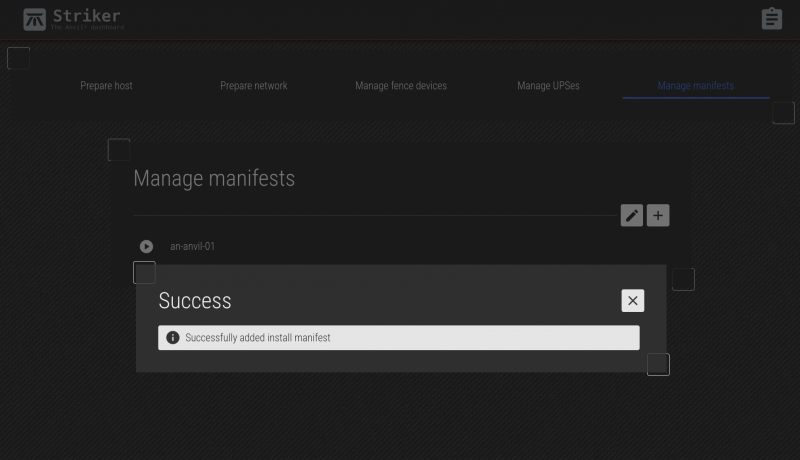

Click Add, and you'll see a summary of the manifest. Review the summary carefully.

If you're happy, click Add. You'll see the saved message, and then you can click on the red X to close the menu.

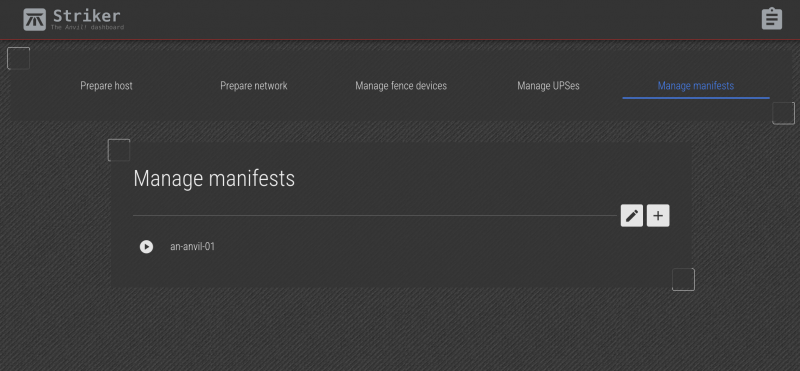

Now we see the new an-anvil-01 install manifest!

Running an Install Manifest

Now we're ready to create our first Anvil! node!

To build an Anvil! node, we use the manifest to take the config we want, and apply it to the physical subnodes we prepared earlier. This will take those two unassigned subnodes and tie them together into an Anvil! node.

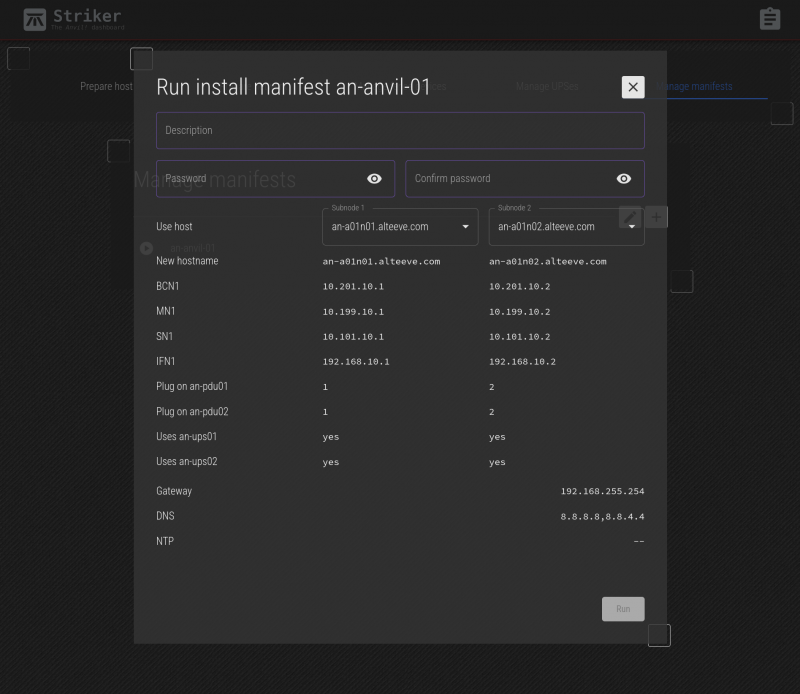

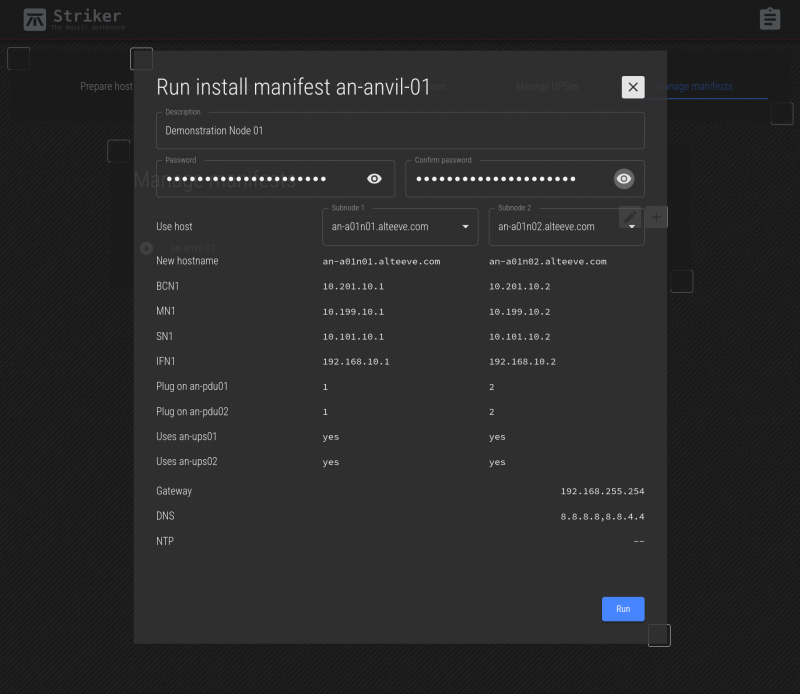

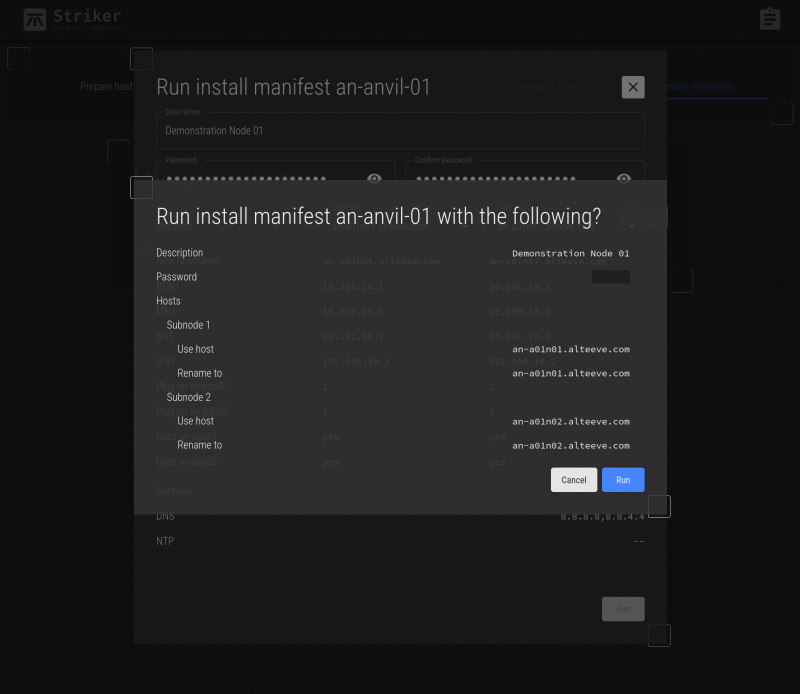

Click on the play icon to the left of the manifest you want to run. In our case, there's only an-anvil-01. This will open the manifest menu.

Fields;

| Description | This is a free-form field where you can describe this node. |

|---|---|

| (Confirm) Password | Enter the password you want to set for this node. In general, you should use the same password on all nodes. |

| Subnode 1/2 | There are two drop-down boxes where you can choose which subnodes will be in this node. All machines initialized as "nodes" (that is, have anvil-node installed) will be available. If you are building a new node, as we are here, select the two newly initialized nodes. If you're rebuilding a node, select the two subnodes already in the node to rebuild. If you're replacing a subnode, select the surviving subnode and the replacement subnode. |

This form is pretty simple, because most of our work was done in previous steps. Simply enter a description, the password to set, and select the two subnodes we prepared earlier.

Verify that you're about to run the right manifest against the right subnodes. When done, click Run.

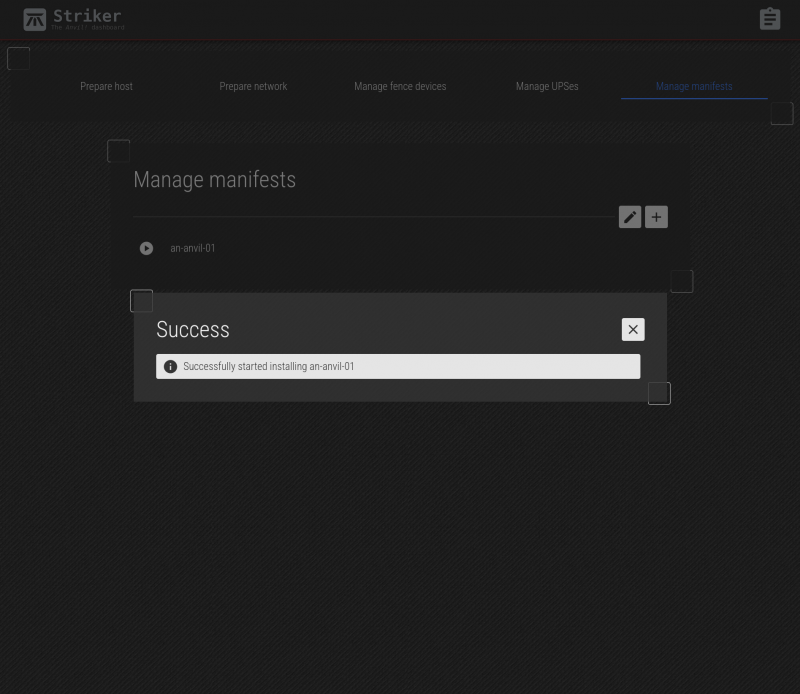

The job to run the manifest and assemble the two subnodes into a node is now saved. It will take a few minutes for the job to complete, but it should now be underway.

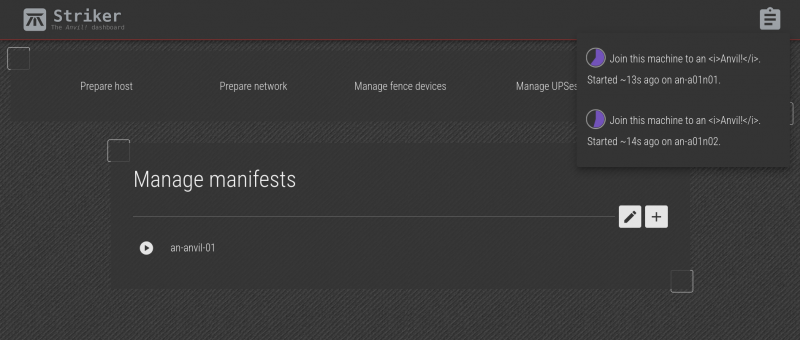

Something we've not looked at yet, but that is useful here, is the job progress icon. At the top-right, there's a clipboard icon with a blue dot. Clicking on it shows the progress of running jobs. This shows all jobs that are running, or that finished in the last five minutes.
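The rule for which jobs are listed (still running, or finished within the last five minutes) is easy to express in code. The sketch below is only an illustration. The job records are plain dictionaries with made-up timestamps, and the field names are hypothetical.

```python
from datetime import datetime, timedelta

RECENT = timedelta(minutes=5)


def visible_jobs(jobs, now):
    """Keep jobs that are still running or that finished within five minutes."""
    return [
        job for job in jobs
        if job["finished"] is None or now - job["finished"] <= RECENT
    ]


now = datetime(2025, 1, 1, 12, 0, 0)
jobs = [
    {"name": "join anvil node 1", "finished": now - timedelta(minutes=2)},
    {"name": "join anvil node 2", "finished": None},  # still running
    {"name": "prepare network", "finished": now - timedelta(minutes=30)},
]

shown = [job["name"] for job in visible_jobs(jobs, now)]
print(shown)
```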

In the example above, the join anvil node 1 and join anvil node 2 are already complete!

We're now ready to provision servers!

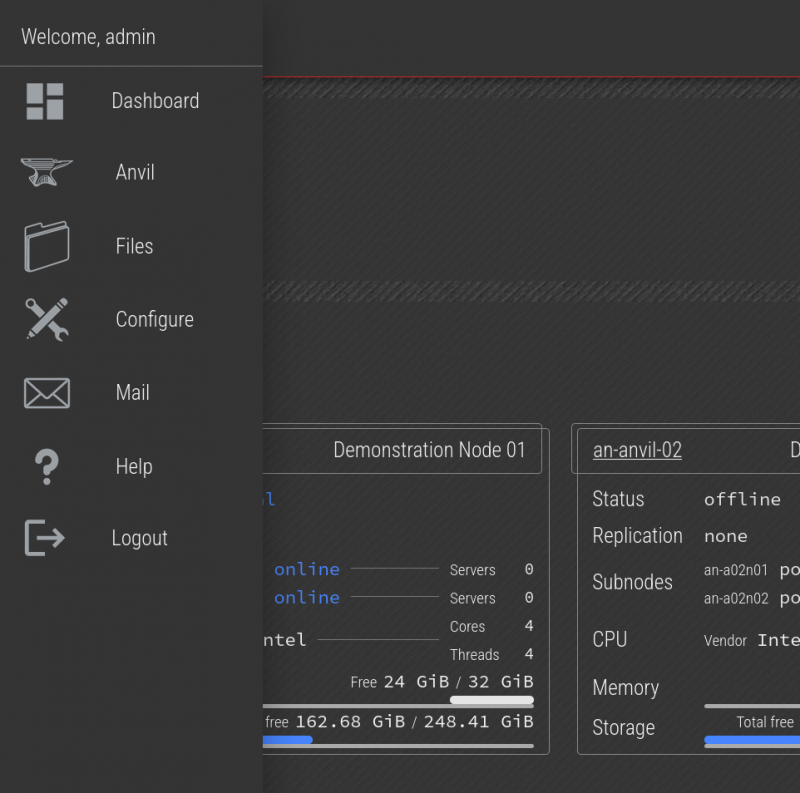

The New Anvil! Node Tile

When the manifest completes the new node build, it will show up as a tile on the dashboard. Return to the Dashboard and within a few minutes, the new node tile will appear.

You only need one node to start provisioning servers. If you add more nodes in the future, they will appear as additional tiles. Generally you do not need to interact with the nodes, as they should simply add resources to those available for new or existing servers.

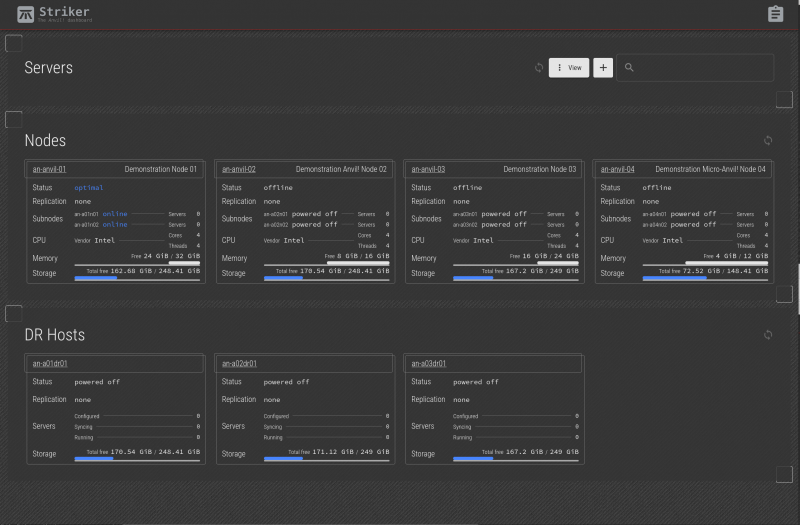

Here we see what a larger Anvil! cluster looks like. This one shows four nodes and three DR hosts. You certainly don't need more than one node to get started, but having a multi-node cluster will show how some of the features and prompts work.

A Note on Server Allocation

If you have two or more nodes, where a new server will run depends on a few criteria;

- If the requested RAM or disk space for the new server is more than what is available on any given node, it won't be run there.

- If your new server could run on two or more nodes, you can manually choose which it will run on.

- If you do not choose which node to run a server on, the node with the least existing servers will be chosen to spread the load out.
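The three criteria above can be sketched as a small selection function. This is a simplified illustration and not the actual Anvil! placement logic. The node records, field names and resource figures are invented for the example, and how ties are broken here (first match wins) is an assumption.

```python
def choose_node(nodes, ram_gib, disk_gib, preferred=None):
    """Pick a node for a new server, or return None if none can host it."""
    # 1. Drop any node without enough free RAM or disk.
    candidates = [
        node for node in nodes
        if node["free_ram_gib"] >= ram_gib and node["free_disk_gib"] >= disk_gib
    ]
    # 2. A manual choice is honoured only if that node is still a candidate.
    if preferred is not None:
        for node in candidates:
            if node["name"] == preferred:
                return node["name"]
        return None
    # 3. Otherwise, spread the load: the node with the fewest servers wins.
    if not candidates:
        return None
    return min(candidates, key=lambda node: node["servers"])["name"]


nodes = [
    {"name": "an-anvil-01", "free_ram_gib": 32, "free_disk_gib": 500, "servers": 4},
    {"name": "an-anvil-02", "free_ram_gib": 64, "free_disk_gib": 250, "servers": 1},
    {"name": "an-anvil-03", "free_ram_gib": 4, "free_disk_gib": 900, "servers": 0},
]

automatic = choose_node(nodes, ram_gib=8, disk_gib=100)
manual = choose_node(nodes, ram_gib=8, disk_gib=100, preferred="an-anvil-01")
print(automatic, manual)
```

In this example an-anvil-03 has the fewest servers but too little free RAM, so the automatic choice falls to an-anvil-02.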

Provision Servers

The fundamental goal has been to build servers, and now with our first node built, we're ready to do so!

Uploading Install Media

Before we can build our first server, we need to upload the install media the OS will install from.

The vast majority of Anvil! users run Windows, so we're going to install a Windows Server 2025 machine. For this, we'll also need the latest virtio drivers.

Getting the Windows 2025 ISO (DVD image) will require purchasing or getting an evaluation version from Microsoft at the link above.

The latest stable virtio drivers can be downloaded from here; this is version 0.1.271 at the time of writing.

With these two files downloaded on your local computer, we're ready to upload them to the cluster.

Click on the Striker logo to open the menu, and then click on the Files option.

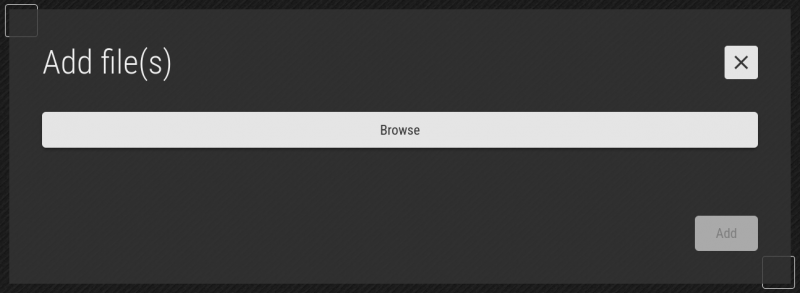

Click on the + on the right to upload the first file.

We're also going to want the latest virtio drivers. To improve performance of Windows servers, the hypervisor will emulate hardware that is designed for the best performance in a virtual environment. Specifically, it emulates a storage controller (like a SCSI or RAID controller) called virtio-block, and a network controller called virtio-net.

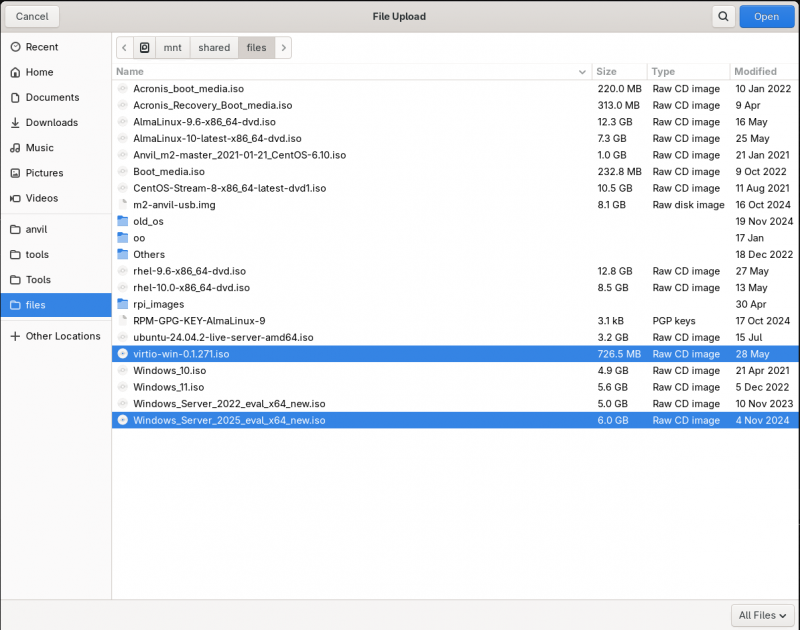

Given the storage controller is emulated, and that the virtio drivers are not on the install ISO, we'll need to provide the driver during the OS install. So we'll also upload the virtio-win-0.1.271.iso file at the same time.

Click on 'Browse' to open your operating system's file browser. Use it to find the Windows ISO you will upload. Click on the first (or only) file you want to upload. Then press and hold the 'ctrl' key, and click to select any additional files. Once all are selected, click on 'open' (or your OS's selection button) to begin the upload.

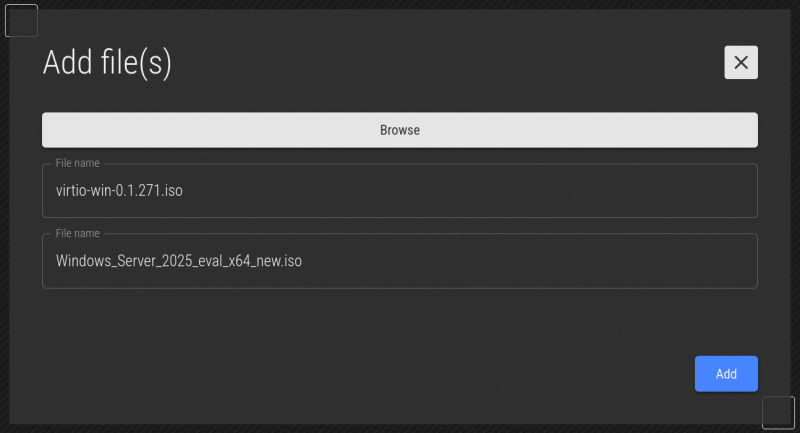

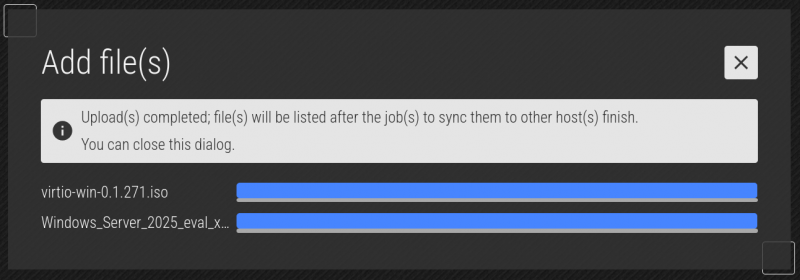

Once you select the files to upload, you will see what's going to be uploaded. If you're happy, click Upload.

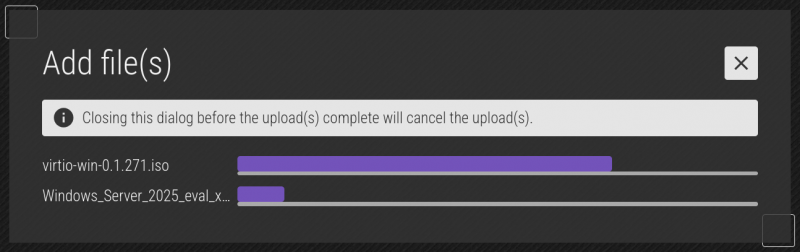

As the file uploads, you'll see the progress. How long this takes depends on the file size and the bandwidth between your computer and the Striker dashboard.

The progress bar changes colour when the file is finished uploading. When the files are done uploading, click on the 'X' to close the menu.

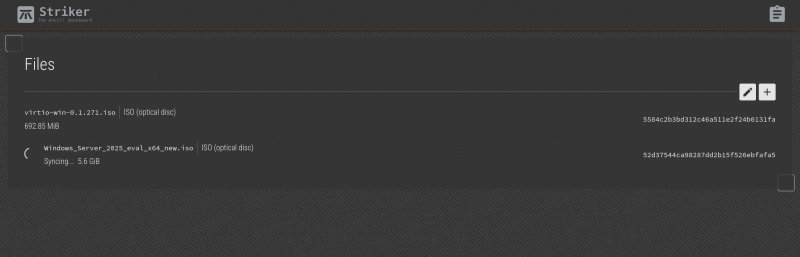

| Note: After the upload completes, it could take a minute or three for the file to appear. |

This is because the md5sum of each uploaded file is calculated first. How long this takes depends on the size of the file and the speed of the dashboard's processor. Once calculated, the file is added to the database and it will start sync'ing around the Anvil! cluster.
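To see why a large ISO takes noticeably longer than a small one, here is a minimal sketch of computing an MD5 sum by reading a file in chunks, using Python's standard `hashlib`. This only illustrates the general technique; it is not the code the Striker dashboard runs, and the temporary file stands in for an uploaded ISO.

```python
import hashlib
import os
import tempfile


def md5sum(path, chunk_size=1024 * 1024):
    """Return the hex MD5 digest of a file, reading it one chunk at a time."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        # Reading in chunks keeps memory use flat, even for multi-GiB ISOs.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# Stand-in for an uploaded file (the contents are arbitrary example bytes).
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"example ISO contents")

checksum = md5sum(tmp.name)
os.remove(tmp.name)
print(checksum)
```

Every byte of the file has to be read once, so the time taken grows with the file size.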

The virtio driver ISO is small, and shows up quickly. The Windows ISO is large, and takes longer to be processed.

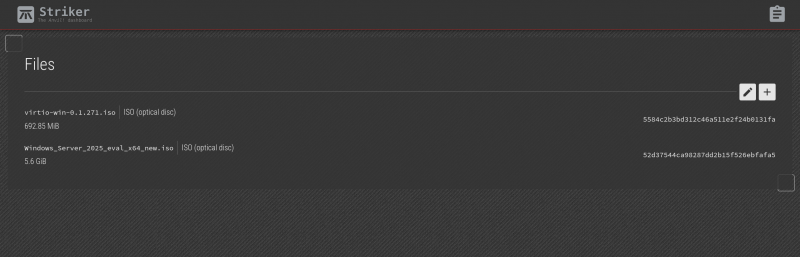

After the files are processed, they then start getting copied out to all other machines in the cluster. Depending on how many Strikers, subnodes and DR hosts you have, and the speed of the network connection, the sync'ing could take a while.

| Note: If any systems in the cluster are offline, the file will show as sync'ing until it boots and gets the file. |

So please be patient.

After the file is actually uploaded, it needs to be added to the cluster's shared storage. This starts by calculating an md5sum of the file and adding it to the database, and then the file is sent out to the various machines in the cluster.

Once the sums are calculated, you will see the files appear in the file list. Repeat if needed to upload the ISOs of other OSes you plan to install.

Provisioning Servers

Now, finally, we're at the most fun part!

Building 'srv01-primary-dc'

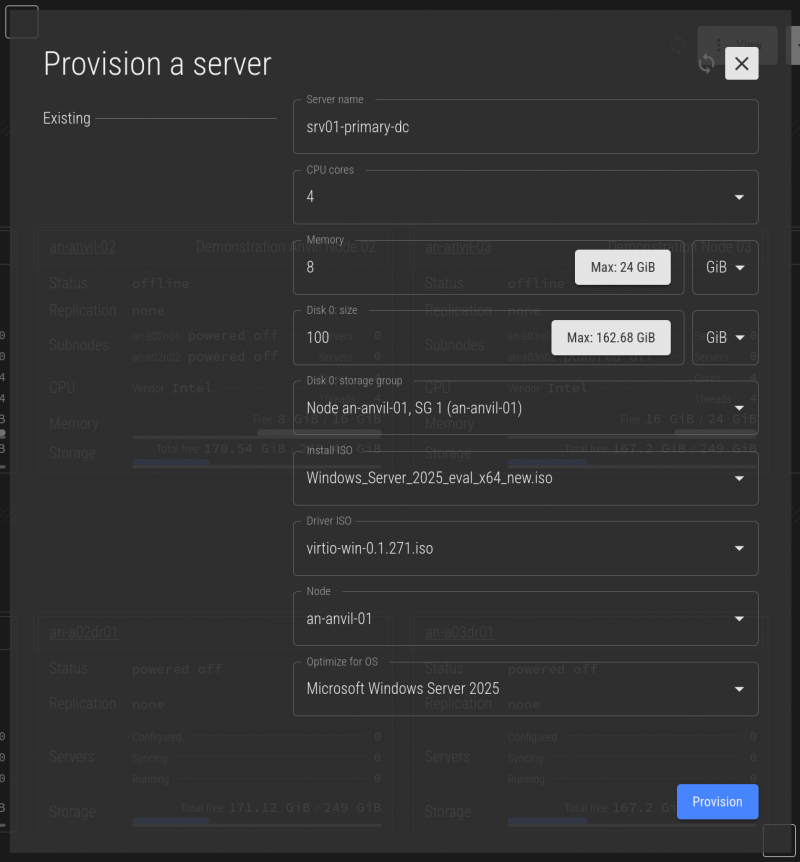

Let's build our first server. It won't actually do anything, but let's pretend it's going to be an Active Directory server.

Click on the Striker logo and select Dashboard.

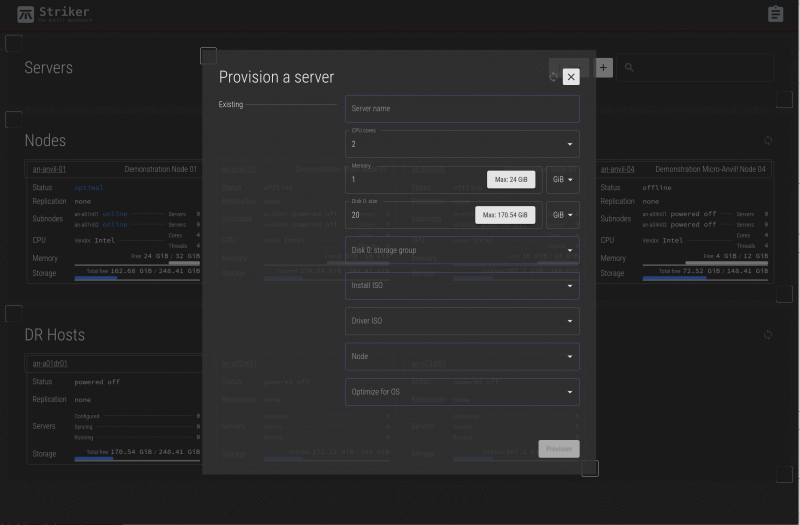

At this point, there's nothing to see yet, so the dashboard is pretty bare. Click on the + on the right of the search bar to start provisioning (building) the first server.

This is where you get to decide what resources are allocated to your new server.

Fields;

| Server name | This is the name of the server. It can be any string of letters, numbers, hyphens and underscore characters. It's not at all required, but we generally use the format 'srvXX-<description>', and we will use that here. This works for us because the srvXX- prefix is incremented constantly, which means that if a new server is replacing an old server, the descriptive part can be the same without conflict. |

|---|---|

| CPU Cores | This is how many CPU cores you want to allocate to this server. Please see the note below! |

| Memory | This is the amount of RAM to allocate to this server |

| Disk Size | This is the size of the (first) drive on the server. If you want a second drive, you can add it after the server has been created. |

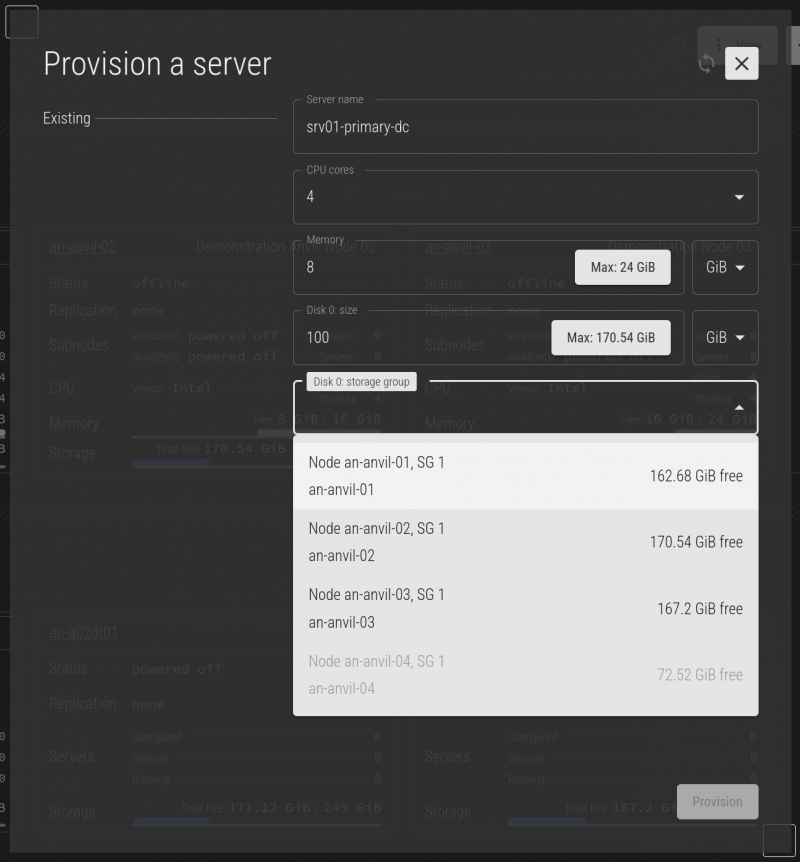

| Storage Group | This allows you to choose which storage group to use when provisioning this new server. In most cases, there is only one storage group. In some cases, a node might have two storage arrays, for example, one that is smaller but higher performance and another which is large, but slower. |

| Install ISO | This is the ISO uploaded earlier to use for the OS installation. |

| Driver ISO | This is the optional driver disk needed to complete the OS install. Generally this is not needed for Linux servers, and is required for the virtio drivers for Windows servers. |

| Anvil Node | If your Anvil! cluster has multiple nodes, this allows you to manually choose which node will host the new server. |

| Optimize for OS | Important: This gives you the chance to optimize the hypervisor, that is the component that emulates the hardware the server will run on, for the OS you plan to install. Selecting this properly can significantly help (or hurt) the performance of your server. |
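The naming rule from the "Server name" field (letters, numbers, hyphens and underscores) and the optional 'srvXX-<description>' convention can be sketched as follows. The helper names are hypothetical, and the two-digit zero padding is an assumption based on the example name srv01-primary-dc.

```python
import re

NAME_PATTERN = re.compile(r"[A-Za-z0-9_-]+")


def is_valid_server_name(name):
    """True if the name uses only letters, numbers, hyphens and underscores."""
    return NAME_PATTERN.fullmatch(name) is not None


def next_server_name(existing, description):
    """Build the next 'srvXX-<description>' name after the highest in use."""
    highest = 0
    for name in existing:
        match = re.match(r"srv(\d+)-", name)
        if match:
            highest = max(highest, int(match.group(1)))
    return f"srv{highest + 1:02d}-{description}"


first = next_server_name([], "primary-dc")
replacement = next_server_name(["srv01-primary-dc"], "primary-dc")
print(first, replacement)
```

Because the number always increases, a replacement server can reuse the descriptive part of the old server's name without conflicting with it.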

In this demo, our cluster has four nodes. We requested a 100 GiB hard drive, which is more than an-anvil-04 has available. This means that node is no longer an install candidate, so its storage group is disabled (as is the node itself).

When the form is first presented, it shows the maximum available CPU cores, RAM, and disk size across all nodes. As you fill out the form, the list of available nodes is re-evaluated. If you ask for more CPU cores, RAM, or disk space than any given node can provide, it drops off the list and the new maximum values are shown. For this reason, you may see the maximum values change as you fill out more and more of the form.
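The way the maximum values shift as the form is filled out can be illustrated with a short sketch. The resource numbers below are invented for the example (only the 100 GiB request and an-anvil-04 running short of disk come from the text), and the function names are hypothetical.

```python
def candidates(nodes, cores=0, ram_gib=0, disk_gib=0):
    """Return the nodes that can still satisfy everything requested so far."""
    return [
        node for node in nodes
        if node["cores"] >= cores
        and node["ram_gib"] >= ram_gib
        and node["disk_gib"] >= disk_gib
    ]


def maximums(nodes, **requested):
    """Largest value of each resource among the remaining candidate nodes."""
    remaining = candidates(nodes, **requested)
    if not remaining:
        return None
    return {
        key: max(node[key] for node in remaining)
        for key in ("cores", "ram_gib", "disk_gib")
    }


nodes = [
    {"name": "an-anvil-01", "cores": 8, "ram_gib": 32, "disk_gib": 500},
    {"name": "an-anvil-02", "cores": 16, "ram_gib": 64, "disk_gib": 250},
    {"name": "an-anvil-03", "cores": 8, "ram_gib": 16, "disk_gib": 1000},
    {"name": "an-anvil-04", "cores": 32, "ram_gib": 128, "disk_gib": 80},
]

before_request = maximums(nodes)
after_request = maximums(nodes, disk_gib=100)
print(before_request)
print(after_request)
```

In this made-up data, an-anvil-04 offers the most cores and RAM, so once the 100 GiB disk request rules it out, the maximum cores and RAM shown drop to what the remaining nodes can provide.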

| Note: The 'Optimize for OS' field is quite long. You can type to search / refine the list to quickly find your desired OS. |

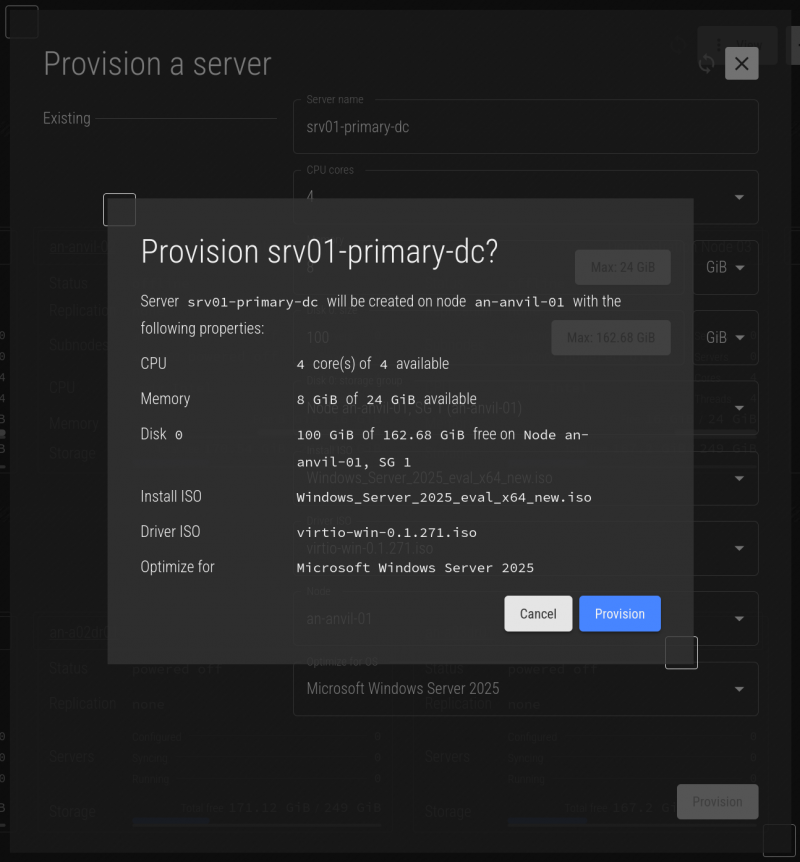

For our first server, we're going to create a server called srv01-primary-dc. We'll give it 4 cores, 8 GiB of RAM and 100 GiB of disk space. Once you're happy with your selection, press Provision.

You will see a summary of the server that is about to be created. Confirm that all is well, and then click on Provision to confirm.

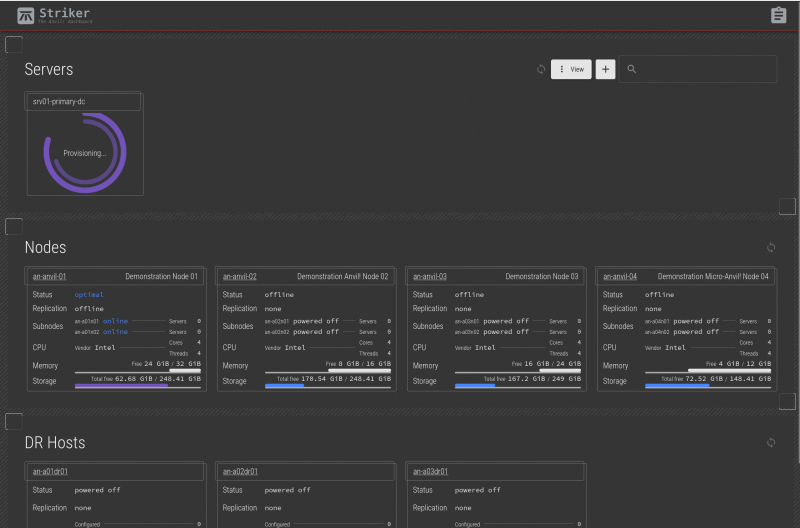

When the job is saved, it will soon show the new server tile with a double-ring showing the provision progress on the two sub-nodes that belong to the target node. It will take a minute or two for the OS to boot and start the install.

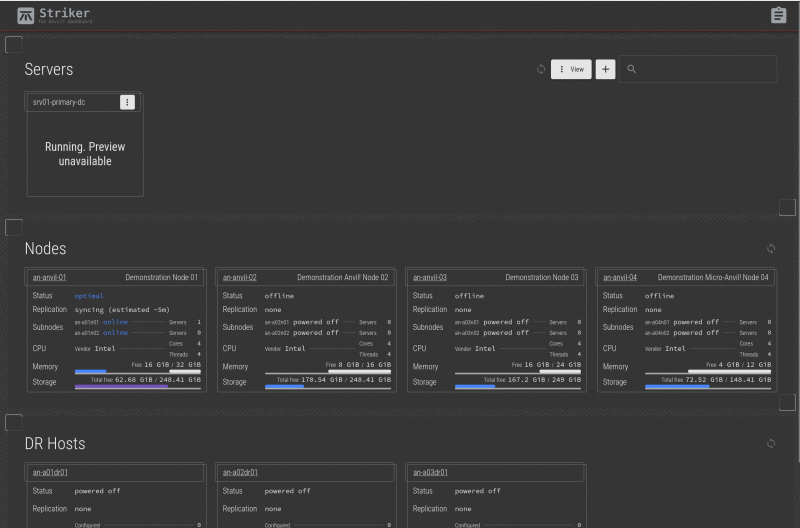

The Striker dashboards take screenshots about once per minute. So it's normal for the server to momentarily show "Running. Preview unavailable".

Shortly after the server boots, the first screenshot will be taken. Note that you don't have to wait for the screenshot to appear. You can click on the message or the screenshot at any time to connect to the server.

"Ding!"

The server is ready!

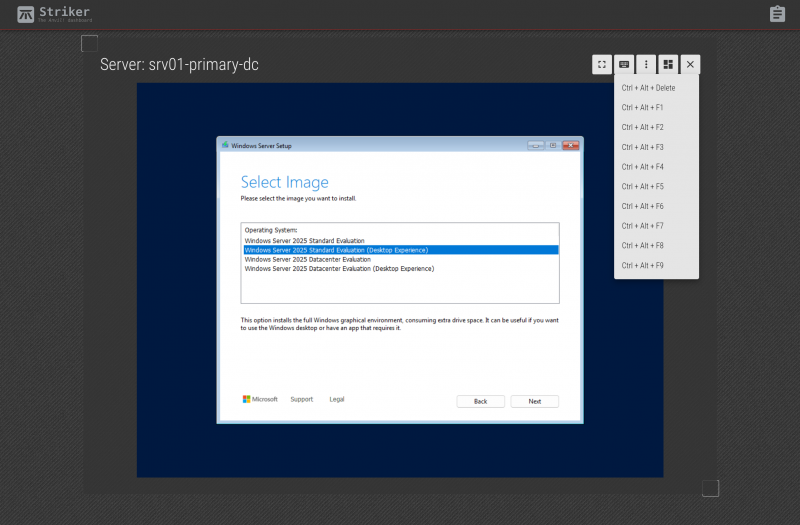

Click on the server and it will open in-browser terminal access to the new server.

This access is just like having a monitor plugged into a real physical machine. You are essentially using a keyboard and mouse plugged into the server. No network connection is required on the guest (as there can't be at this stage of the OS install), and you will be able to watch the full boot up and shutdown sequence of the server, without any client being installed.

It's not needed yet, but it's important to show that you can click on the keyboard icon on the top-right to send special key combinations, like "<ctrl> + <alt> + <del>", to the guest. This will be needed later to log in after the OS is installed.

The button bar has five buttons. From left to right, they are;

| Button | Function |

|---|---|

| Full Screen | This makes the server's display full screen. Press <esc> to exit full screen. |

| Keyboard | Send special key commands. |

| Additional Control | This provides the last scanned IP, if any, a link to the server management menu, and the ability to control the server's power. |

| Dashboard | Return to the dashboard. |

| Server Manager | This will close the desktop connection and take you to the server manager. |

| Note: From this point on, we're doing a standard Windows Server OS install. We're not showing all the steps, but we will show key steps. |

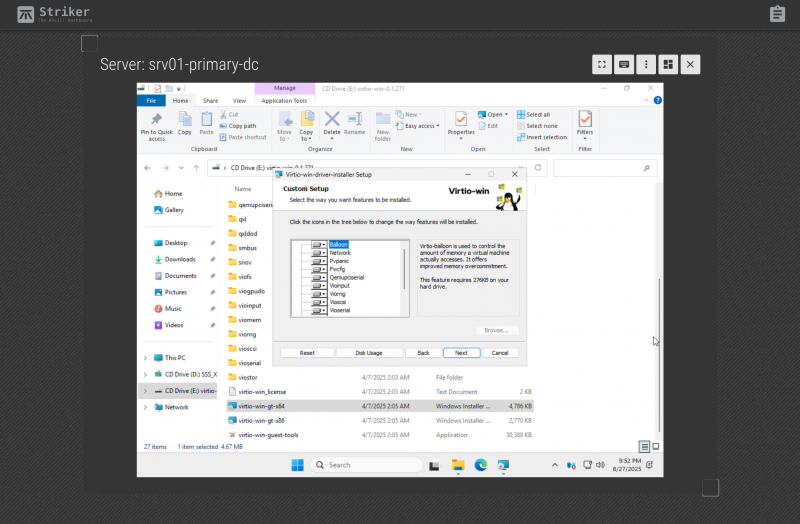

Recall that we included the "virtio" driver disk? This is the step where that comes in. For optimal performance, the hypervisor creates a "virtio-block" storage controller that is optimized for performance in a virtual environment. Microsoft doesn't include the driver for this natively, so when we get to the storage section, it initially sees no hard drive.

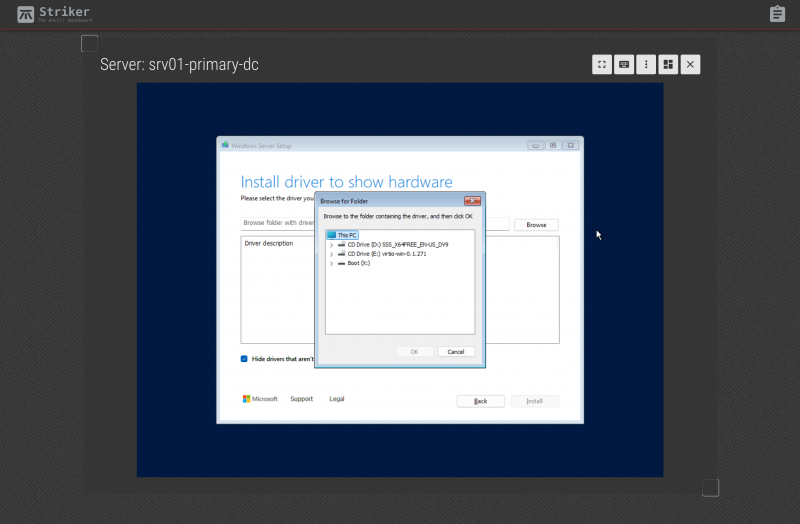

Click on the "Load Driver" button on the lower left.

Click on "Browse".

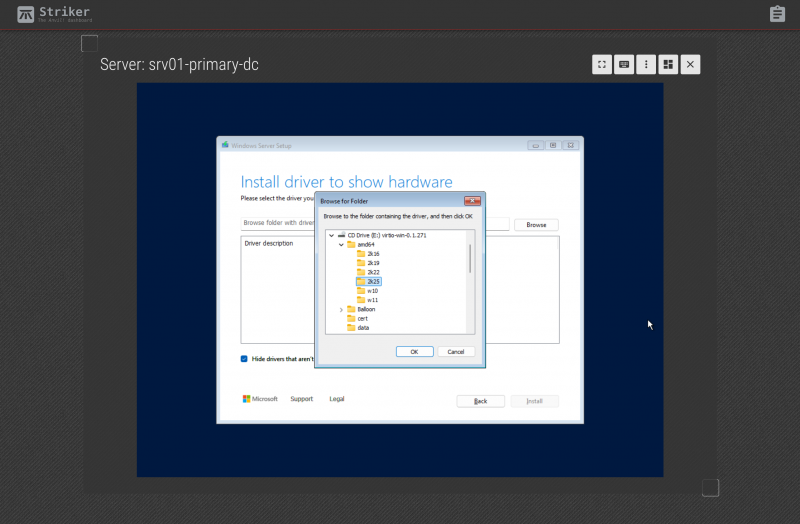

Click to expand "CD Drive (E:) virtio-win-0.1.271" drive and browse to "E:\amd64\2k25".

| Note: This example is for Windows 2025 server, if you're installing a different version, choose the directory that best applies. Note that "amd64" really just means "64-bit". Use that regardless of the make of your physical CPU if you're installing a 64-bit Windows OS, as most are these days. |

Select the "E:\amd64\2k25" directory and then click "OK".

The "Red Hat VirtIO SCSI controller (E:\amd64\2k25\viostor.inf)" option will appear, and this is the storage controller driver we want. Select it, and click on "Next" on the lower-right corner.

The driver will load, and after a moment, the "Drive 0 Unallocated Space" will appear. At this point, you can proceed with the OS install as you normally would.

Now we're at the login screen! Click on the keyboard icon we mentioned earlier, and choose ctrl + alt + del to send that key combination and bring up the login prompt.

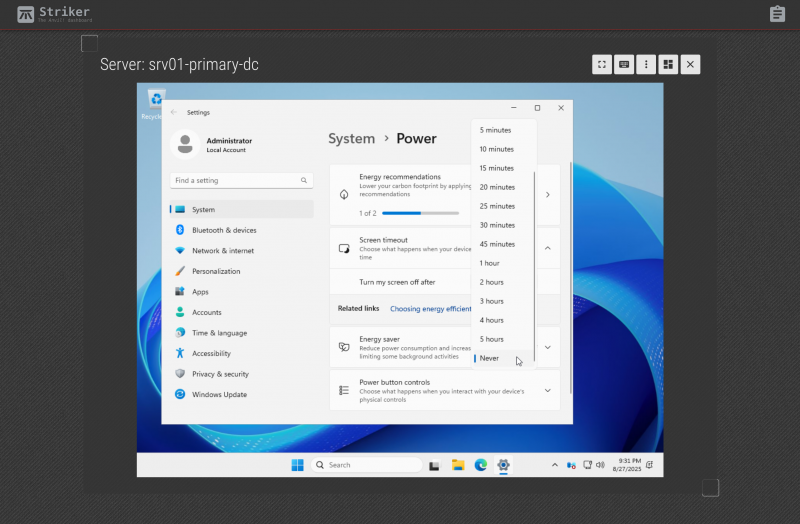

| Warning: This step is very important! Windows will ignore power button events when it thinks it has powered down the display. It does this to prevent someone accidentally powering off a computer that the user thinks is already off. This blocks Scancore's ability to gracefully shut down the server when needed! |

Windows, by default, turns off the power to the display (monitor) after ten minutes. This is a problem as Windows will then ignore power button events. The Anvil! has no agents that run inside the guest (we treat your servers as black boxes), so the only way we can request a power off is by (virtually) pressing the power button.

It is very important that Windows shuts down when the power button is pressed!

Imagine the scenario where power has been lost, and the UPSes powering the node the server is on are about to die. Scancore will press the power button to gracefully shut the server down. If Windows ignores this, there's nothing more the Anvil! can do, and the node will stay up and running until the power runs out, resulting in a hard shut down.

Additional Drivers and Tools

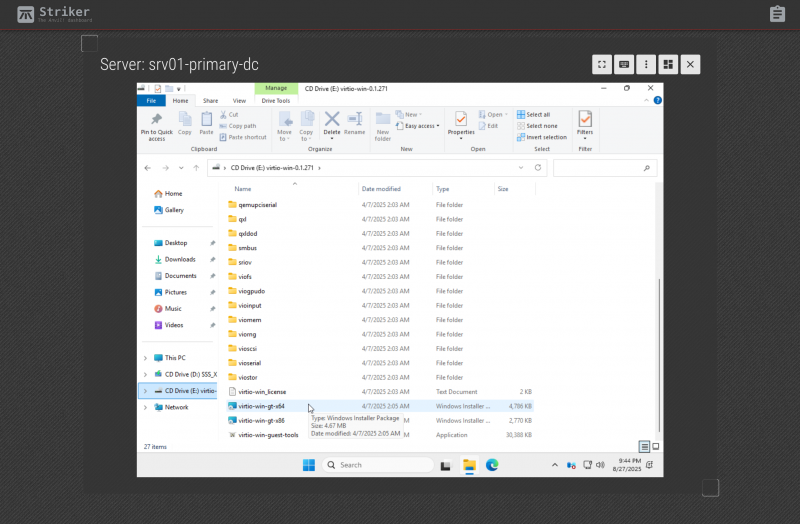

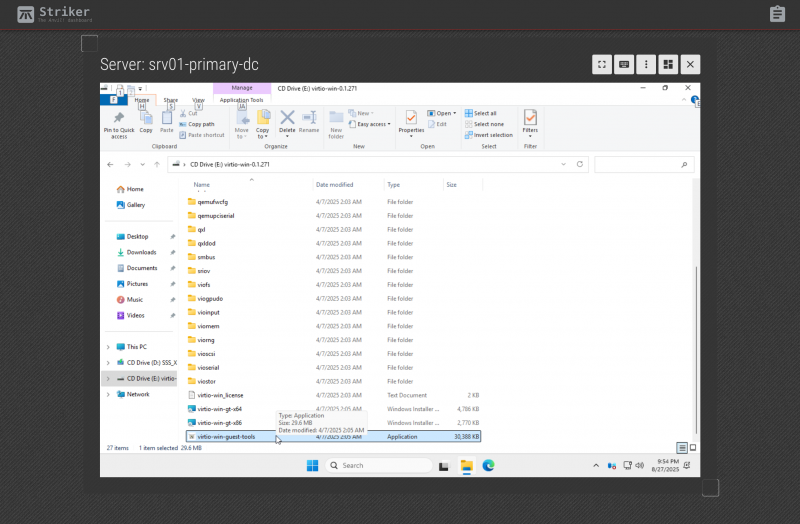

Some additional hardware, like the video card, requires additional drivers for optimal performance. The virtio driver disk has easy tools for installing these drivers and optional utilities.

If you browse to E:\virtio-win-gt-x64 and run it, you will be able to install additional drivers. This will allow you to change the desktop resolution to a higher resolution, in particular.

Installing all the additional virtio driver pack.

If you browse to E:\virtio-win-guest-tools and run it, you will be able to install the Windows guest tools.

And that's it!

From this point on, proceed with your Windows server setup as you normally would.

Administrative Tasks

This section covers common tasks.

- Configuring a Disaster Recovery Host

- Configure and Manage Alerts in an M3 Anvil! Cluster

- Replacing a Failed Machine in an M3 Anvil! Cluster

- Upgrading the Base OS in an M3 Anvil! Cluster

Command Line Tools

The Anvil! cluster is built around a large collection of command line tools. Most of the Striker UI functions work by creating jobs, which in turn run these command line tools for you behind the scenes.

This was a conscious decision to allow experienced administrators the ability to work on their Anvil! clusters, even over high latency, low bandwidth connections.


| © 2025 Alteeve. Intelligent Availability® is a registered trademark of Alteeve's Niche! Inc. 1997-2025 | |||

| legal stuff: All info is provided "As-Is". Do not use anything here unless you are willing and able to take responsibility for your own actions. | |||