2-Node Red Hat KVM Cluster Tutorial - Archive: Difference between revisions


== Configuring DRBD ==

DRBD is configured in two parts:

* Global and common configuration options
* Resource configurations

We will be creating three separate DRBD resources, so we will create three separate resource configuration files. More on that in a moment.

=== Configuring DRBD Global and Common Options ===

The first file to edit is <span class="code">/etc/drbd.d/global_common.conf</span>. In this file, we will set global configuration options and default resource configuration options. These defaults can be overridden in the actual resource files, which we'll create once we're done here.

<source lang="bash">
cp /etc/drbd.d/global_common.conf /etc/drbd.d/global_common.conf.orig
vim /etc/drbd.d/global_common.conf
diff -u /etc/drbd.d/global_common.conf.orig /etc/drbd.d/global_common.conf
</source>

<source lang="diff">
--- /etc/drbd.d/global_common.conf.orig 2011-09-14 14:03:56.364566109 -0400
+++ /etc/drbd.d/global_common.conf 2011-09-14 14:23:37.287566400 -0400
@@ -15,24 +15,81 @@
         # out-of-sync "/usr/lib/drbd/notify-out-of-sync.sh root";
         # before-resync-target "/usr/lib/drbd/snapshot-resync-target-lvm.sh -p 15 -- -c 16k";
         # after-resync-target /usr/lib/drbd/unsnapshot-resync-target-lvm.sh;
+
+        # This script is a wrapper for RHCS's 'fence_node' command line
+        # tool. It will call a fence against the other node and return
+        # the appropriate exit code to DRBD.
+        fence-peer "/sbin/obliterate-peer.sh";
     }

     startup {
         # wfc-timeout degr-wfc-timeout outdated-wfc-timeout wait-after-sb
+
+        # This tells DRBD to promote both nodes to Primary on start.
+        become-primary-on both;
+
+        # This tells DRBD to wait five minutes for the other node to
+        # connect. This should be longer than it takes for cman to
+        # timeout and fence the other node *plus* the amount of time it
+        # takes the other node to reboot. If you set this too short,
+        # you could corrupt your data. If you want to be extra safe, do
+        # not use this at all and DRBD will wait for the other node
+        # forever.
+        wfc-timeout 300;
+
+        # This tells DRBD to wait for the other node for two minutes
+        # if the other node was degraded the last time it was seen by
+        # this node. This is a way to speed up the boot process when
+        # the other node is out of commission for an extended duration.
+        degr-wfc-timeout 120;
     }

     disk {
         # on-io-error fencing use-bmbv no-disk-barrier no-disk-flushes
         # no-disk-drain no-md-flushes max-bio-bvecs
+
+        # This tells DRBD to block IO and fence the remote node (using
+        # the 'fence-peer' helper) when connection with the other node
+        # is unexpectedly lost. This is what helps prevent a split-brain
+        # condition, and it is incredibly important in dual-primary
+        # setups!
+        fencing resource-and-stonith;
     }

     net {
         # sndbuf-size rcvbuf-size timeout connect-int ping-int ping-timeout max-buffers
         # max-epoch-size ko-count allow-two-primaries cram-hmac-alg shared-secret
         # after-sb-0pri after-sb-1pri after-sb-2pri data-integrity-alg no-tcp-cork
+
+        # This tells DRBD to allow two nodes to be Primary at the same
+        # time. It is needed when 'become-primary-on both' is set.
+        allow-two-primaries;
+
+        # The following three options tell DRBD how to react should
+        # our best efforts fail and a split brain occur. You can learn
+        # more about these options by reading the drbd.conf man page.
+        # NOTE! It is not possible to safely recover from a split brain
+        # where both nodes were primary. This case requires human
+        # intervention, so 'disconnect' is the only safe policy.
+        after-sb-0pri discard-zero-changes;
+        after-sb-1pri discard-secondary;
+        after-sb-2pri disconnect;
     }

     syncer {
         # rate after al-extents use-rle cpu-mask verify-alg csums-alg
+
+        # This alters DRBD's default syncer rate. Note that it is
+        # *very* important that you do *not* configure the syncer rate
+        # to be too fast. If it is too fast, it can significantly
+        # impact applications using the DRBD resource. If it's set to a
+        # rate higher than the underlying network and storage can
+        # handle, the sync can stall completely.
+        # This should be set to ~30% of the *tested* sustainable read
+        # or write speed of the raw /dev/drbdX device (whichever is
+        # slower). In this example, the underlying resource was tested
+        # as being able to sustain roughly 60 MB/sec, so this is set to
+        # one third of that rate, 20M.
+        rate 20M;
     }
 }
</source>
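The two rules of thumb in the comments above (a syncer rate of roughly one third of the slower tested speed, and a wfc-timeout longer than the time needed to detect, fence and reboot the peer) can be expressed as plain shell arithmetic. This is a minimal sketch: the helper names are hypothetical, they do not read or change any DRBD setting, and the timing figures in the example calls are placeholders that you would replace with values measured on your own cluster.

```bash
#!/bin/bash
# Hypothetical helpers illustrating the two rules of thumb in the
# comments above. They only do arithmetic; they do not touch DRBD.

# Suggest a syncer rate of one third of the slower of the tested
# sustainable read and write speeds (MB/sec) of the raw /dev/drbdX device.
suggest_syncer_rate() {
    local read_mbps=$1 write_mbps=$2 slowest
    if [ "$read_mbps" -lt "$write_mbps" ]; then
        slowest=$read_mbps
    else
        slowest=$write_mbps
    fi
    echo "$(( slowest / 3 ))M"
}

# Check that wfc-timeout (seconds) exceeds the time it takes cman to time
# out, plus the time to fence the peer, plus the time for the peer to reboot.
check_wfc_timeout() {
    local wfc=$1 cman_timeout=$2 fence_time=$3 reboot_time=$4 needed
    needed=$(( cman_timeout + fence_time + reboot_time ))
    if [ "$wfc" -gt "$needed" ]; then
        echo "ok: wfc-timeout ${wfc}s > ${needed}s"
    else
        echo "too short: wfc-timeout ${wfc}s <= ${needed}s" >&2
        return 1
    fi
}

suggest_syncer_rate 60 75         # prints 20M
check_wfc_timeout 300 10 30 180   # prints "ok: wfc-timeout 300s > 220s"
```

With the 60 MB/sec figure from the example above, the suggestion matches the `rate 20M;` setting.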

Revision as of 02:26, 15 September 2011

Alteeve Wiki :: How To :: 2-Node Red Hat KVM Cluster Tutorial - Archive

This paper has one goal:

- Creating a 2-node, high-availability cluster hosting KVM virtual machines using RHCS "stable 3" with DRBD and clustered LVM for synchronizing storage data. This is an updated version of the earlier Red Hat Cluster Service 2 Tutorial. You will find much in common with that tutorial if you've previously followed that document. Please don't skip large sections, though; there are some differences that are subtle but important.

Grab a coffee, a comfy chair, put on some nice music and settle in for some geekly fun.

The Task Ahead

Before we start, let's take a few minutes to discuss clustering and its complexities.

Technologies We Will Use

- Enterprise Linux 6; You can use a derivative like CentOS v6.

- Red Hat Cluster Services "Stable" version 3. This describes the following core components:

  - Corosync; Provides cluster communications using the totem protocol.

  - Cluster Manager (cman); Starts, stops and manages the cluster.

  - Resource Manager (rgmanager); Manages cluster resources and services. Handles service recovery during failures.

  - Clustered Logical Volume Manager (clvm); Cluster-aware (disk) volume manager. Backs GFS2 filesystems and KVM virtual machines.

  - Global File System version 2 (gfs2); Cluster-aware, concurrently mountable file system.

- Distributed Replicated Block Device (DRBD); Keeps shared data synchronized across cluster nodes.

- KVM; Hypervisor that controls and supports virtual machines.

A Note on Patience

There is nothing inherently hard about clustering. However, there are many components that you need to understand before you can begin. The result is that clustering has an inherently steep learning curve.

You must have patience. Lots of it.

Many technologies can be learned by creating a very simple base and then building on it. The classic "Hello, World!" script created when first learning a programming language is an example of this. Unfortunately, there is no real analogue to this in clustering. Even the most basic cluster requires several pieces to be in place and working together. If you try to rush by ignoring pieces you think are not important, you will almost certainly waste time. A good example is setting aside fencing, thinking that your test cluster's data isn't important. The cluster software has no concept of "test". It treats everything as critical all the time and will shut down if anything goes wrong.

Take your time, work through these steps, and you will have the foundation cluster sooner than you realize. Clustering is fun because it is a challenge.

Network

The cluster will use three /16 networks, broken down as:

| Purpose | Subnet | Notes |
|---|---|---|
| Internet-Facing Network (IFN) | 10.255.0.0/16 | |
| Storage Network (SN) | 10.10.0.0/16 | |
| Back-Channel Network (BCN) | 10.20.0.0/16 | Miscellaneous equipment in the cluster, like managed switches, will use 10.20.3.z where z is a simple sequence. |

We will be using six interfaces, bonded into three pairs of two NICs in Active/Passive (mode 1). Each link of each bond will be on alternate, unstacked switches. This configuration is the only configuration supported by Red Hat in clusters.

If you cannot install six interfaces in your server, then four interfaces will do, with the SN and BCN networks merged.

In this tutorial, we will use two D-Link DGS-3100-24 switches, unstacked, using three VLANs to isolate the three networks.

You could just as easily use four or six unmanaged 5-port or 8-port switches. What matters is that the three subnets are isolated and that each link of each bond is on a separate switch. Lastly, for security reasons, connect only the IFN switches or VLANs to the Internet.

The actual mapping of interfaces to bonds to networks will be:

| Subnet | Link 1 | Link 2 | Bond | IP |
|---|---|---|---|---|
| BCN | eth0 | eth3 | bond0 | 10.20.0.x |
| SN | eth1 | eth4 | bond1 | 10.10.0.x |
| IFN | eth2 | eth5 | bond2 | 10.255.0.x |

Setting Up the Network

| Warning: The following steps can easily get confusing, given how many files we need to edit. Losing access to your server's network is a very real possibility! Do not continue without direct access to your servers! If you have out-of-band access via iKVM, console redirection or similar, be sure to test that it is working before proceeding. |

Making Sure We Know Our Interfaces

When you install the operating system, the network interface names are assigned somewhat randomly to the physical network interfaces. It is more than likely that you will want to re-order them.

Before you start moving interface names around, you will want to consider which physical interfaces you will want to use on which networks. At the end of the day, the names themselves have no meaning. At the very least though, make them consistent across nodes.

Some things to consider, in order of importance:

- If you have a shared interface for your out-of-band management interface, like IPMI or iLO, you will want that interface to be on the Back-Channel Network.

- For redundancy, you want to spread out which interfaces are paired up. In my case, I have three interfaces on my mainboard and three additional add-in cards. I will pair each onboard interface with an add-in interface. My IPMI interface physically piggy-backs on one of the onboard interfaces, so this interface will need to be part of the BCN bond.

- Your interfaces with the lowest latency should be used for the back-channel network.

- Your two fastest interfaces should be used for your storage network.

- The remaining two slowest interfaces should be used for the Internet-Facing Network bond.

In my case, all six interfaces are identical, so there is little to consider. The left-most interface on my system has IPMI, so its paired network interface will be eth0. I simply work my way to the right, incrementing as I go. What you do will be whatever makes the most sense to you.
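Before renaming anything, it helps to record which MAC address currently belongs to which name, since those addresses are what the HWADDR= lines in the interface files refer to. The following is a minimal sketch, assuming the kernel exposes each interface's MAC address in /sys/class/net/<name>/address; the function name list_eth_macs is hypothetical, and the directory is a parameter so the function can be pointed at a copy for testing.

```bash
#!/bin/bash
# Hypothetical helper: print "<interface> <MAC>" for every eth* interface
# found under a sysfs-style directory (defaults to /sys/class/net).
list_eth_macs() {
    local base=${1:-/sys/class/net}
    local dir
    for dir in "$base"/eth*; do
        # Skip the unexpanded pattern (no eth* entries) and entries
        # without a readable address file.
        [ -r "$dir/address" ] || continue
        printf '%s %s\n' "${dir##*/}" "$(cat "$dir/address")"
    done
}
```

Running `list_eth_macs` on each node and keeping the output next to the interface tables makes it easier to keep the naming consistent across nodes.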

There is a separate, short tutorial on re-ordering network interfaces.

Once you have the physical interfaces named the way you like, proceed to the next step.

Planning Our Network

To set up our network, we will need to edit the ifcfg-ethX, ifcfg-bondX and ifcfg-vbrX scripts. The last of these create bridges, which will be used to connect the virtual machines to the network. We won't be creating a vbr1 bridge, though, as bond1 will be dedicated to storage and never used by a VM. On the BCN and IFN, the bridges, not the bonded interfaces, will have the IP addresses; those bonds will instead be slaved to their respective bridges.

We're going to be editing a lot of files. It's best to lay out what we'll be doing in a chart. So our setup will be:

| Node | BCN IP and Device | SN IP and Device | IFN IP and Device |
|---|---|---|---|
| an-node01 | 10.20.0.1 on vbr0 (bond0 slaved) | 10.10.0.1 on bond1 | 10.255.0.1 on vbr2 (bond2 slaved) |
| an-node02 | 10.20.0.2 on vbr0 (bond0 slaved) | 10.10.0.2 on bond1 | 10.255.0.2 on vbr2 (bond2 slaved) |

Creating Some Network Configuration Files

Bridge configuration files must have a file name that sorts after the interface and bond files. The actual device name can be whatever you want, though. If the system tries to start the bridge before its interface is up, it will fail. I personally like to use the name vbrX, for "virtual machine bridge". You can use whatever makes sense to you, with the above concern in mind.
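The naming rule can be checked by listing the file names the way a byte-wise sort orders them. This is a sketch that assumes the network scripts bring interfaces up in the C-locale lexical order of their ifcfg file names, as the advice above implies; the function name show_ifcfg_order is hypothetical, and it only prints names without starting anything.

```bash
#!/bin/bash
# Hypothetical helper: print ifcfg file names in C-locale (byte-wise)
# sort order, to confirm that bridges sort after bonds and interfaces.
show_ifcfg_order() {
    printf '%s\n' "$@" | LC_ALL=C sort
}

show_ifcfg_order ifcfg-vbr0 ifcfg-eth0 ifcfg-bond0 ifcfg-vbr2 ifcfg-bond2
```

With this tutorial's names, the output is ifcfg-bond0, ifcfg-bond2, ifcfg-eth0, ifcfg-vbr0, ifcfg-vbr2, so both bridges come last.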

Start by touching the configuration files we will need.

touch /etc/sysconfig/network-scripts/ifcfg-bond{0,1,2}

touch /etc/sysconfig/network-scripts/ifcfg-vbr{0,2}

Now make a backup of your configuration files, in case something goes wrong and you want to start over.

mkdir /root/backups/

rsync -av /etc/sysconfig/network-scripts/ifcfg-eth* /root/backups/

sending incremental file list

ifcfg-eth0

ifcfg-eth1

ifcfg-eth2

ifcfg-eth3

ifcfg-eth4

ifcfg-eth5

sent 1467 bytes received 126 bytes 3186.00 bytes/sec

total size is 1119 speedup is 0.70

Configuring Our Bridges

Now let's start in reverse order. We'll write the bridge configuration, then the bond interfaces, and finally alter the interface configuration files. The reason for doing this in reverse is to minimize the amount of time during which a sudden restart would leave us without network access.

an-node01 BCN Bridge:

vim /etc/sysconfig/network-scripts/ifcfg-vbr0

# Back-Channel Network - Bridge

DEVICE="vbr0"

TYPE="Bridge"

BOOTPROTO="static"

IPADDR="10.20.0.1"

NETMASK="255.255.0.0"

an-node01 IFN Bridge:

vim /etc/sysconfig/network-scripts/ifcfg-vbr2

# Internet-Facing Network - Bridge

DEVICE="vbr2"

TYPE="Bridge"

BOOTPROTO="static"

IPADDR="10.255.0.1"

NETMASK="255.255.0.0"

GATEWAY="10.255.255.254"

DNS1="192.139.81.117"

DNS2="192.139.81.1"

DEFROUTE="yes"

Creating the Bonded Interfaces

Now we can create the actual bond configuration files.

To explain the BONDING_OPTS options:

- mode=1 sets the bonding mode to active-backup.

- miimon=100 tells the bonding driver to check every 100 milliseconds whether the network cable has been unplugged or plugged in.

- use_carrier=1 tells the bonding driver to rely on the network interface's own driver to report the link (carrier) state. Some drivers don't support that. If you run into trouble, try changing this to 0.

- updelay=0 and downdelay=0 tell the driver not to wait before changing the state of an interface when the link goes up or down. There are some cases where the link will report that it is ready before the switch can push data. Other times, the switch might drop the link for a very brief time. Altering these values gives you a chance to either delay the use of an interface until the switch is ready, or prevent dropping a link when it is just bouncing.
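Once a bond is up, the bonding driver exposes the live value of each of these options through sysfs, under /sys/class/net/<bond>/bonding/. The sketch below reads them back so you can confirm that BONDING_OPTS was applied as intended. The function name show_bond_opts is hypothetical, and the sysfs root is a parameter so the function can be exercised against a copy of the tree.

```bash
#!/bin/bash
# Hypothetical helper: show the live values of the bonding options
# discussed above for one bond, e.g. "show_bond_opts bond0".
show_bond_opts() {
    local bond=$1 base=${2:-/sys/class/net}
    local opt
    for opt in mode miimon use_carrier updelay downdelay; do
        printf '%s=%s\n' "$opt" "$(cat "$base/$bond/bonding/$opt")"
    done
}
```

For example, run `show_bond_opts bond0` on an-node01. The mode file normally reports both the mode name and its number (for example, `active-backup 1`), so do not expect a bare `1` there.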

an-node01 BCN Bond:

vim /etc/sysconfig/network-scripts/ifcfg-bond0

# Back-Channel Network - Bond

DEVICE="bond0"

BRIDGE="vbr0"

BOOTPROTO="none"

NM_CONTROLLED="no"

ONBOOT="yes"

BONDING_OPTS="mode=1 miimon=100 use_carrier=1 updelay=0 downdelay=0"

an-node01 SN Bond:

vim /etc/sysconfig/network-scripts/ifcfg-bond1

# Storage Network - Bond

DEVICE="bond1"

BOOTPROTO="static"

NM_CONTROLLED="no"

ONBOOT="yes"

BONDING_OPTS="mode=1 miimon=100 use_carrier=1 updelay=0 downdelay=0"

IPADDR="10.10.0.1"

NETMASK="255.255.0.0"

an-node01 IFN Bond:

vim /etc/sysconfig/network-scripts/ifcfg-bond2

# Internet-Facing Network - Bond

DEVICE="bond2"

BRIDGE="vbr2"

BOOTPROTO="none"

NM_CONTROLLED="no"

ONBOOT="yes"

BONDING_OPTS="mode=1 miimon=100 use_carrier=1 updelay=0 downdelay=0"

Alter The Interface Configurations

Now, finally, alter the interfaces themselves to join their respective bonds.

an-node01's eth0, the BCN bond0, Link 1:

vim /etc/sysconfig/network-scripts/ifcfg-eth0

# Back-Channel Network - Link 1

HWADDR="00:E0:81:C7:EC:49"

DEVICE="eth0"

NM_CONTROLLED="no"

ONBOOT="yes"

BOOTPROTO="none"

MASTER="bond0"

SLAVE="yes"

an-node01's eth1, the SN bond1, Link 1:

vim /etc/sysconfig/network-scripts/ifcfg-eth1

# Storage Network - Link 1

HWADDR="00:E0:81:C7:EC:48"

DEVICE="eth1"

NM_CONTROLLED="no"

ONBOOT="yes"

BOOTPROTO="none"

MASTER="bond1"

SLAVE="yes"

an-node01's eth2, the IFN bond2, Link 1:

vim /etc/sysconfig/network-scripts/ifcfg-eth2

# Internet-Facing Network - Link 1

HWADDR="00:E0:81:C7:EC:47"

DEVICE="eth2"

NM_CONTROLLED="no"

ONBOOT="yes"

BOOTPROTO="none"

MASTER="bond2"

SLAVE="yes"

an-node01's eth3, the BCN bond0, Link 2:

vim /etc/sysconfig/network-scripts/ifcfg-eth3

# Back-Channel Network - Link 2

DEVICE="eth3"

NM_CONTROLLED="no"

ONBOOT="yes"

BOOTPROTO="none"

MASTER="bond0"

SLAVE="yes"

an-node01's eth4, the SN bond1, Link 2:

vim /etc/sysconfig/network-scripts/ifcfg-eth4

# Storage Network - Link 2

HWADDR="00:1B:21:BF:6F:FE"

DEVICE="eth4"

NM_CONTROLLED="no"

ONBOOT="yes"

BOOTPROTO="none"

MASTER="bond1"

SLAVE="yes"

an-node01's eth5, the IFN bond2, Link 2:

vim /etc/sysconfig/network-scripts/ifcfg-eth5

# Internet-Facing Network - Link 2

HWADDR="00:1B:21:BF:70:02"

DEVICE="eth5"

NM_CONTROLLED="no"

ONBOOT="yes"

BOOTPROTO="none"

MASTER="bond2"

SLAVE="yes"
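Because the six slave files differ only in the device, the bond and the link number, they can also be generated from the interface mapping table. This is a minimal sketch, not part of the original procedure: the function name write_slave_cfgs is hypothetical, it writes into whatever directory you pass it (use a scratch directory first), and it deliberately leaves out HWADDR, which you should copy from your own hardware.

```bash
#!/bin/bash
# Hypothetical generator for the slave interface files shown above.
# Mapping (from the interface table): BCN eth0/eth3 -> bond0,
# SN eth1/eth4 -> bond1, IFN eth2/eth5 -> bond2.
write_slave_cfgs() {
    local outdir=$1
    local entry dev bond net link
    for entry in \
        "eth0 bond0 Back-Channel 1"    "eth3 bond0 Back-Channel 2" \
        "eth1 bond1 Storage 1"         "eth4 bond1 Storage 2" \
        "eth2 bond2 Internet-Facing 1" "eth5 bond2 Internet-Facing 2"
    do
        # Split the entry into its four fields.
        set -- $entry
        dev=$1 bond=$2 net=$3 link=$4
        # HWADDR is omitted on purpose; add your own MAC address.
        cat > "$outdir/ifcfg-$dev" <<EOF
# $net Network - Link $link
DEVICE="$dev"
NM_CONTROLLED="no"
ONBOOT="yes"
BOOTPROTO="none"
MASTER="$bond"
SLAVE="yes"
EOF
    done
}
```

For example, `mkdir /tmp/ifcfg-test && write_slave_cfgs /tmp/ifcfg-test`, then `diff -u /etc/sysconfig/network-scripts/ifcfg-eth1 /tmp/ifcfg-test/ifcfg-eth1`; the only expected difference is the HWADDR line.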

Loading The New Network Configuration

Simply restart the network service.

/etc/init.d/network restart
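After the restart, it is worth confirming that each bond came up with both of its links. The bonding driver reports its state in /proc/net/bonding/<bond>; the sketch below extracts the mode, the currently active slave and each slave's MII status from that report. The function name bond_summary is hypothetical, and the parsing assumes the usual "Key: value" layout of that file.

```bash
#!/bin/bash
# Hypothetical helper: summarise /proc/net/bonding/<bond> (or any file
# with the same "Key: value" layout, passed as the second argument).
bond_summary() {
    local bond=$1 file=${2:-/proc/net/bonding/$1}
    awk -v bond="$bond" -F': ' '
        /^Bonding Mode:/           { print bond " mode: " $2 }
        /^Currently Active Slave:/ { print bond " active: " $2 }
        /^Slave Interface:/        { slave = $2 }
        # The bond-level "MII Status" appears before any slave, so it is
        # skipped until a "Slave Interface" line has been seen.
        /^MII Status:/ && slave    { print bond " " slave ": " $2 }
    ' "$file"
}
```

For example, `for b in bond0 bond1 bond2; do bond_summary "$b"; done` should show an active slave for each bond and both of its links as up.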

Updating /etc/hosts

On both nodes, update the /etc/hosts file to reflect your network configuration. Remember to add entries for your IPMI, switched PDUs and other devices.

vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

# an-node01

10.20.0.1 an-node01 an-node01.bcn an-node01.alteeve.com

10.20.1.1 an-node01.ipmi

10.10.0.1 an-node01.sn

10.255.0.1 an-node01.ifn

# an-node02

10.20.0.2 an-node02 an-node02.bcn an-node02.alteeve.com

10.20.1.2 an-node02.ipmi

10.10.0.2 an-node02.sn

10.255.0.2 an-node02.ifn

# Fence devices

10.20.2.1 pdu1 pdu1.alteeve.com

10.20.2.2 pdu2 pdu2.alteeve.com
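Because the cluster derives its interfaces from these names, it is worth checking that each one maps to the address you expect. On the nodes themselves, `getent hosts an-node01.alteeve.com` asks the system resolver the same question. The sketch below does a simpler, file-only lookup so it can be run against a copy of the file; the function name hosts_lookup is hypothetical.

```bash
#!/bin/bash
# Hypothetical helper: print the address that a hosts-format file maps
# NAME to (first match wins, comments ignored); fail if there is none.
hosts_lookup() {
    local name=$1 file=${2:-/etc/hosts}
    awk -v name="$name" '
        { sub(/#.*/, "") }    # strip comments before looking at fields
        {
            for (i = 2; i <= NF; i++)
                if ($i == name) { print $1; found = 1; exit }
        }
        END { exit !found }
    ' "$file"
}
```

For example: `for n in an-node01.alteeve.com an-node02.alteeve.com an-node01.ipmi pdu2.alteeve.com; do echo "$n $(hosts_lookup "$n")"; done`.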

Configuring The Cluster Foundation

We need to configure the cluster in two stages. This is because we have something of a chicken-and-egg problem.

- We need clustered storage for our virtual machines.

- Our clustered storage needs the cluster for fencing.

Conveniently, clustering has two logical parts:

- Cluster communication and membership.

- Cluster resource management.

The first, communication and membership, covers tracking which nodes are part of the cluster and ejecting faulty nodes from it, among other tasks. The second part, resource management, is provided by a separate tool called rgmanager. It's this second part that we will set aside for later.

Installing Required Programs

Installing the cluster software is pretty simple:

yum install cman corosync rgmanager ricci gfs2-utils

Configuration Methods

In Red Hat Cluster Services, the heart of the cluster is found in the /etc/cluster/cluster.conf XML configuration file.

There are three main ways of editing this file. Two are already well documented, so I won't bother discussing them beyond introducing them. The third way is by directly hand-crafting the cluster.conf file. This method is not very well documented, and directly manipulating configuration files is my preferred method. As my boss loves to say: "The more computers do for you, the more they do to you".

The first two, well documented, graphical tools are:

- system-config-cluster, older GUI tool run directly from one of the cluster nodes.

- Conga, composed of the ricci node-side client and the luci web-based server (which can be run on machines outside the cluster).

I do like the tools above, but I often run into issues that send me back to the command line, so I'd recommend setting them aside for now. Once you feel comfortable with cluster.conf syntax, by all means go back and use them. Just avoid coming to rely on them, which can easily happen if you start using them too early in your studies.

The First cluster.conf Foundation Configuration

The very first stage of building the cluster is to create a configuration file that is as minimal as possible. To do that, we need to define a few things:

- The name of the cluster and the cluster file version.

- Define cman options

- The nodes in the cluster

- The fence method for each node

- Define fence devices

- Define fenced options

That's it. Once we've defined this minimal amount, we will be able to start the cluster for the first time! So let's finally get to it.

Name the Cluster and Set The Configuration Version

The cluster tag is the parent tag for the entire cluster configuration file.

vim /etc/cluster/cluster.conf

<?xml version="1.0"?>

<cluster name="an-clusterA" config_version="1">

</cluster>

The cluster tag has two attributes that we need to set: name="" and config_version="".

The name="" attribute defines the name of the cluster. It must be unique amongst the clusters on your network. It should be descriptive, but you will not want to make it too long, either. You will see this name in the various cluster tools, and you will enter it, for example, when creating a GFS2 partition later on. This tutorial uses the cluster name an-clusterA. The reason for the A is to help differentiate it from the nodes, which use sequence numbers.

The config_version="" attribute is an integer marking the version of the configuration file. Whenever you make a change to the cluster.conf file, you will need to increment this version number by 1. If you don't increment this number, then the cluster tools will not know that the file needs to be reloaded. As this is the first version of this configuration file, it will start with 1. Note that this tutorial will increment the version after every change, regardless of whether it is explicitly pushed out to the other nodes and reloaded. The reason is to help get into the habit of always increasing this value.
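Since forgetting to increment config_version means the cluster tools will not know the file changed, the bump can be scripted. The sketch below does it with GNU sed; the function name bump_config_version is hypothetical, and it assumes the file contains a single config_version attribute, as the examples in this tutorial do. You would still review and distribute the file as described in this tutorial.

```bash
#!/bin/bash
# Hypothetical helper: increment config_version="N" in a cluster.conf
# file by one and print the new version. Requires GNU sed (for -i).
bump_config_version() {
    local conf=${1:-/etc/cluster/cluster.conf}
    local cur
    cur=$(sed -n 's/.*config_version="\([0-9][0-9]*\)".*/\1/p' "$conf" | head -n 1)
    if [ -z "$cur" ]; then
        echo "no config_version found in $conf" >&2
        return 1
    fi
    sed -i "s/config_version=\"$cur\"/config_version=\"$((cur + 1))\"/" "$conf"
    echo "$((cur + 1))"
}
```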

Configuring cman Options

We are going to set up a special case for our cluster: a 2-node cluster.

This is a special case because traditional quorum will not be useful. With only two nodes, each having a vote of 1, the total is 2 votes. Quorum needs more than half of the expected votes (50% + 1), so a single node failure would shut down the cluster, as the remaining node's vote is exactly 50%. That kind of defeats the purpose of having a cluster at all.

To account for this special case, there is a special attribute called two_node="1". This tells the cluster manager to continue operating with only one vote. This option requires that the expected_votes="" attribute be set to 1. Normally, expected_votes is set automatically to the sum of the defined cluster nodes' votes (each of which defaults to 1). This is the other half of the "trick", as a single node's vote of 1 now always provides quorum (that is, 1 vote is more than half of the 1 expected vote).
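The vote arithmetic above can be checked directly. This sketch assumes the simple-majority rule described in the text, floor(expected_votes / 2) + 1; it ignores quorum disks and anything else cman can also take into account, and the function name quorum is hypothetical.

```bash
#!/bin/bash
# Votes needed for quorum under a simple majority rule:
# floor(expected_votes / 2) + 1 (shell integer division truncates).
quorum() {
    echo $(( $1 / 2 + 1 ))
}

quorum 2   # prints 2: a lone surviving node (1 vote) is not quorate
quorum 1   # prints 1: with expected_votes="1", a single node is quorate
```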

<?xml version="1.0"?>

<cluster name="an-clusterA" config_version="2">

<cman expected_votes="1" two_node="1"/>

</cluster>

Take note of the self-closing <... /> tag. This XML syntax tells the parser not to look for any child elements or a closing tag.

Defining Cluster Nodes

This example is a little artificial; please don't load it into your cluster yet, as we will need to add a few child tags. One thing at a time, though.

This actually introduces two tags.

The first is the parent clusternodes tag, which takes no attributes of its own. Its sole purpose is to contain the clusternode child tags.

<?xml version="1.0"?>

<cluster name="an-clusterA" config_version="3">

<cman expected_votes="1" two_node="1"/>

<clusternodes>

<clusternode name="an-node01.alteeve.com" nodeid="1" />

<clusternode name="an-node02.alteeve.com" nodeid="2" />

</clusternodes>

</cluster>

The clusternode tag defines each cluster node. There are many attributes available, but we will look at just the two required ones.

The first is the name="" attribute. This should match the name given by uname -n ($HOSTNAME) when run on each node. The IP address that the name resolves to also sets the interface and subnet that the totem ring will run on; that is, the main cluster communications, which we are calling the Back-Channel Network. This is why it is so important to set up our /etc/hosts file correctly. Please see the clusternode's name attribute documentation for details on how the name-to-interface mapping is resolved.

The second attribute is nodeid="". This must be a unique integer amongst the <clusternode ...> tags. It is used by the cluster to identify the node.
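A quick way to catch a mismatch between a node's `uname -n` and its clusternode entry, or a duplicated nodeid, is to pull both attributes out of cluster.conf. The sketch below does this with sed and awk; the function name check_clusternodes is hypothetical, and it assumes each clusternode tag sits on one line with name before nodeid, as in this tutorial's examples. A real XML parser would be more robust.

```bash
#!/bin/bash
# Hypothetical helper: print "name nodeid" for every <clusternode> tag,
# then fail if a nodeid is duplicated or this host is not listed.
check_clusternodes() {
    local conf=${1:-/etc/cluster/cluster.conf}
    local me=${2:-$(uname -n)}
    local pairs dups
    pairs=$(sed -n 's/.*<clusternode name="\([^"]*\)" nodeid="\([0-9]*\)".*/\1 \2/p' "$conf")
    printf '%s\n' "$pairs"
    dups=$(printf '%s\n' "$pairs" | awk '{ print $2 }' | sort | uniq -d)
    if [ -n "$dups" ]; then
        echo "duplicate nodeid(s): $dups" >&2
        return 1
    fi
    printf '%s\n' "$pairs" | awk -v me="$me" '$1 == me { ok = 1 } END { exit !ok }' \
        || { echo "$me is not a clusternode in $conf" >&2; return 1; }
}
```

Run on each node, `check_clusternodes` with no arguments compares `uname -n` against /etc/cluster/cluster.conf.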

Defining Fence Devices

Fence devices are designed to forcibly eject a node from a cluster. This is generally done by forcing it to power off or reboot. Some SAN switches can logically disconnect a node from the shared storage device, which has the same effect of guaranteeing that the defective node cannot alter the shared storage. A common, third type of fence device is one that cuts the mains power to the server.

In this tutorial, our nodes support IPMI, which we will use as the primary fence device. We also have an APC-brand switched PDU, which will act as a backup in case a fault in the node disables the IPMI BMC.

| Note: Not all brands of switched PDUs are supported as fence devices. Before you purchase a fence device, confirm that it is supported. |

All fence devices are contained within the parent fencedevices tag. This parent tag has no attributes. Within this parent tag are one or more fencedevice child tags.

<?xml version="1.0"?>

<cluster name="an-clusterA" config_version="4">

<cman expected_votes="1" two_node="1"/>

<clusternodes>

<clusternode name="an-node01.alteeve.com" nodeid="1" />

<clusternode name="an-node02.alteeve.com" nodeid="2" />

</clusternodes>

<fencedevices>

<fencedevice name="ipmi_an01" agent="fence_ipmilan" ipaddr="an-node01.ipmi" login="root" passwd="secret" />

<fencedevice name="ipmi_an02" agent="fence_ipmilan" ipaddr="an-node02.ipmi" login="root" passwd="secret" />

<fencedevice name="pdu2" agent="fence_apc" ipaddr="pdu2.alteeve.com" login="apc" passwd="secret" />

</fencedevices>

</cluster>

Every fence device used in your cluster will have its own fencedevice tag. If you are using IPMI, this means you will have a fencedevice entry for each node, as each physical IPMI BMC is a unique fence device. On the other hand, fence devices that support multiple nodes, like switched PDUs, will have just one entry. In our case, we're using both types, so we have three fence devices: the two IPMI BMCs plus the switched PDU.

All fencedevice tags share two basic attributes; name="" and agent="".

- The name attribute must be unique among all the fence devices in your cluster. As we will see in the next step, this name will be used within the <clusternode...> tag.

- The agent attribute tells the cluster which fence agent to use when the fenced daemon needs to communicate with the physical fence device. A fence agent is simply a script that acts as a glue layer between the fenced daemon and the fence hardware. The agent takes the arguments from the daemon, like which port to act on and which action to take, and carries out that action against the node. The agent is responsible for ensuring that the execution succeeded and for returning an appropriate success or failure exit code. For those curious, the full details are described in the FenceAgentAPI. If you have two or more of the same fence device, like IPMI, then you will use the same agent value a corresponding number of times.
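To make the "glue layer" idea concrete, here is a hypothetical, do-nothing agent written as a shell function. It assumes the FenceAgentAPI convention that the daemon passes the merged device and fencedevice attributes on standard input as one name=value pair per line, and that exit code 0 means success. It performs no real fencing and must never be configured as a fence device.

```bash
#!/bin/bash
# fence_sketch: a hypothetical, NON-FUNCTIONAL fence agent that only
# shows the calling convention. It reads name=value lines from stdin,
# reports what a real agent would do, and returns 0 (success) or 1.
fence_sketch() {
    local line key value action="" ipaddr="" port=""
    while IFS= read -r line; do
        case $line in
            ''|'#'*) continue ;;     # skip blank and comment lines
        esac
        key=${line%%=*}
        value=${line#*=}
        case $key in
            action) action=$value ;;
            ipaddr) ipaddr=$value ;;
            port)   port=$value ;;
        esac
    done
    case $action in
        reboot|off|on)
            echo "would send '$action' to ${ipaddr:-?} port ${port:-none}"
            return 0 ;;
        *)
            echo "unsupported action: ${action:-none}" >&2
            return 1 ;;
    esac
}
```

For the PDU method of an-node02, the input would resemble `printf 'agent=fence_apc\nipaddr=pdu2.alteeve.com\nport=2\naction=reboot\n' | fence_sketch`.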

Beyond these two attributes, each fence agent will have its own subset of attributes, the full scope of which is outside this tutorial, though we will see examples for IPMI and a switched PDU. Most, if not all, fence agents have a corresponding man page that will show you what attributes they accept and how they are used. The two fence agents we will see here have their attributes defined in the following man pages.

- man fence_ipmilan - IPMI fence agent.

- man fence_apc - APC-brand switched PDU.

The example above is what this tutorial will use.

Example <fencedevice...> Tag For IPMI

Here we will show what IPMI <fencedevice...> tags look like. IPMI is the primary fence device in this tutorial, and it is quite popular as a fence device in general, so it is worth showing an example of its use.

...

<fence>

<method name="ipmi">

<device name="ipmi_an01" action="reboot"/>

</method>

</fence>

...

<fencedevices>

<fencedevice name="ipmi_an01" agent="fence_ipmilan" ipaddr="an-node01.ipmi" login="root" passwd="secret" />

<fencedevice name="ipmi_an02" agent="fence_ipmilan" ipaddr="an-node02.ipmi" login="root" passwd="secret" />

</fencedevices>

- ipaddr; This is the resolvable name or IP address of the device. If you use a resolvable name, it is strongly advised that you put the name in /etc/hosts as DNS is another layer of abstraction which could fail.

- login; This is the login name to use when the fenced daemon connects to the device.

- passwd; This is the login password to use when the fenced daemon connects to the device.

- name; This is the name of this particular fence device within the cluster which, as we will see shortly, is matched in the <clusternode...> element where appropriate.

| Note: We will see shortly that, unlike switched PDUs or other network fence devices, IPMI does not have ports. This is because each IPMI BMC supports just its host system. More on that later. |

Example <fencedevice...> Tag For APC Switched PDUs

Here we will show how to configure APC switched PDU <fencedevice...> tags. In this tutorial, the switched PDU serves as the backup fence device, and in the real world it is highly recommended as a backup for IPMI and similar primary fence devices.

...

<fence>

<method name="pdu">

<device name="pdu2" port="1" action="reboot"/>

</method>

</fence>

...

<fencedevices>

<fencedevice name="pdu2" agent="fence_apc" ipaddr="pdu2.alteeve.com" login="apc" passwd="secret" />

</fencedevices>

- ipaddr; This is the resolvable name or IP address of the device. If you use a resolvable name, it is strongly advised that you put the name in /etc/hosts as DNS is another layer of abstraction which could fail.

- login; This is the login name to use when the fenced daemon connects to the device.

- passwd; This is the login password to use when the fenced daemon connects to the device.

- name; This is the name of this particular fence device within the cluster which, as we will see shortly, is matched in the <clusternode...> element where appropriate.

Using the Fence Devices

Now that we have nodes and fence devices defined, we will go back and tie them together. This is done by:

- Defining a fence tag containing all fence methods and devices.

Here is how we implement IPMI as the primary fence device with the switched PDU as the backup method.

<?xml version="1.0"?>

<cluster name="an-clusterA" config_version="5">

<cman expected_votes="1" two_node="1"/>

<clusternodes>

<clusternode name="an-node01.alteeve.com" nodeid="1">

<fence>

<method name="ipmi">

<device name="ipmi_an01" action="reboot"/>

</method>

<method name="pdu">

<device name="pdu2" port="1" action="reboot"/>

</method>

</fence>

</clusternode>

<clusternode name="an-node02.alteeve.com" nodeid="2">

<fence>

<method name="ipmi">

<device name="ipmi_an02" action="reboot"/>

</method>

<method name="pdu">

<device name="pdu2" port="2" action="reboot"/>

</method>

</fence>

</clusternode>

</clusternodes>

<fencedevices>

<fencedevice name="ipmi_an01" agent="fence_ipmilan" ipaddr="an-node01.ipmi" login="root" passwd="secret" />

<fencedevice name="ipmi_an02" agent="fence_ipmilan" ipaddr="an-node02.ipmi" login="root" passwd="secret" />

<fencedevice name="pdu2" agent="fence_apc" ipaddr="pdu2.alteeve.com" login="apc" passwd="secret" />

</fencedevices>

</cluster>

First, notice that the fence tag has no attributes. It's merely a container for the method(s).

The next level is the method named ipmi. This name is merely a description and can be whatever you feel is most appropriate. Its purpose is simply to help you distinguish this method from other methods. The reason for method tags is that some fence device calls will have two or more steps. A classic example would be a node with a redundant power supply on a switched PDU acting as the fence device. In such a case, you will need to define multiple device tags, one for each power cable feeding the node, and the cluster will not consider the fence a success unless and until all contained device calls execute successfully.

The same pair of method and device tags is supplied a second time. The first pair defines the IPMI interface, and the second pair defines the switched PDU. Note that the PDU definition needs a port="" attribute, whereas the IPMI fence device does not. When a fence call is needed, the methods are tried in the order they are found here. If both methods fail, the cluster goes back to the start and tries again, looping indefinitely until one method succeeds.
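The ordering rules above (methods are tried in order, every device inside a method must succeed, and the cluster loops until something works) can be sketched in a few lines of bash. This is an illustration only, not how fenced is implemented: fence_device is a hypothetical stand-in for "call the agent for one device tag", each method is passed as one space-separated list of device names, and MAX_ROUNDS is a testing knob we added (the real cluster loops forever).

```bash
# Sketch of the fence method/device logic described above; NOT fenced's code.
# fence_device is a hypothetical stand-in you must define: it receives one
# device name and returns 0 if that fence call succeeded.

# A method succeeds only if every device inside it succeeds.
run_method() {
  local dev
  for dev in "$@"; do
    fence_device "$dev" || return 1
  done
  return 0
}

# Try each method in cluster.conf order; if all fail, start over.
# Each argument is one method: a space-separated list of device names.
# MAX_ROUNDS is a hypothetical knob for testing; unset or 0 loops forever,
# which is what the cluster itself does.
fence_victim() {
  local round=0 method
  while :; do
    for method in "$@"; do
      # Word splitting is intentional here: one word per device.
      # shellcheck disable=SC2086
      if run_method $method; then
        echo "fenced using: $method"
        return 0
      fi
    done
    round=$((round + 1))
    if [ "${MAX_ROUNDS:-0}" -gt 0 ] && [ "$round" -ge "$MAX_ROUNDS" ]; then
      return 1
    fi
  done
}
```

For example, `fence_victim ipmi_an02 pdu2` models an-node02's two methods, while a node with redundant power supplies would list both outlets inside one method, such as `fence_victim ipmi_an02 'pdu2_psu1 pdu2_psu2'` (hypothetical device names).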

The device tag itself is the final piece of the puzzle. It is here that you specify per-node configuration options and link these attributes to a given fencedevice. The link to the fencedevice is made via the name attribute, ipmi_an01 in an-node01's first method, for example.

Let's step through an example fence call to help show how the cluster-wide fencedevice attributes and the per-node device attributes are combined during a fence call.

- The cluster manager decides that a node needs to be fenced. Let's say that the victim is an-node02.

- The fence section under an-node02 is consulted. Within it there are two method entries, named ipmi and pdu, and the first one, ipmi, is tried first. Apart from name, the IPMI method's device has one attribute while the PDU method's device has two:

  - port; only found in the PDU method, this tells the cluster that an-node02 is connected to the switched PDU's port number 2.

  - action; found on both devices, this tells the cluster that the fence action to take is reboot. How this action is actually interpreted depends on the fence device in use, though the name certainly implies that the node will be forced off and then restarted.

- The cluster searches fencedevices for a fencedevice matching the name ipmi_an02. Apart from name, this fence device has four attributes:

  - agent; this tells the cluster to call the fence_ipmilan fence agent script, as we discussed earlier.

  - ipaddr; this tells the fence agent where on the network to find this particular IPMI BMC. This is how multiple fence devices of the same type can be used in the cluster.

  - login; this is the login user name to use when authenticating against the fence device.

  - passwd; this is the password to supply along with the login name when authenticating against the fence device.

- Should the IPMI fence call fail for some reason, the cluster will move on to the second method, pdu, repeating the steps above but using the PDU values.

When the cluster calls the fence agent, it does so by initially calling the fence agent script with no command-line arguments.

```bash
/usr/sbin/fence_ipmilan
```

Then it passes the following arguments to the agent, one name=value pair per line, on the agent's standard input (STDIN):

```text
ipaddr=an-node02.ipmi
login=root
passwd=secret
action=reboot
```

As you can see, the first three arguments come from the fencedevice attributes and the last one comes from the device attributes under an-node02's clusternode fence tag.

If this method fails, the PDU agent will be called in a very similar way, but with an extra argument from the device attributes.

```bash
/usr/sbin/fence_apc
```

Then it will pass the following arguments to that agent:

```text
ipaddr=pdu2.alteeve.com
login=apc
passwd=secret
port=2
action=reboot
```

Should this fail, the cluster will go back and try the IPMI interface again. It will loop through the fence device methods forever until one of the methods succeeds.
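The way the two sets of attributes are merged into the STDIN lines shown above can be sketched in bash. fence_stdin is a hypothetical helper of our own, not part of RHCS: it mirrors the lists above, which omit name and agent, and prints the fencedevice attributes first, then the device attributes. It assumes no value contains spaces.

```bash
# Sketch: merge <fencedevice> (cluster-wide) and <device> (per-node)
# attributes into the name=value lines a fence agent reads on STDIN.
# $1: fencedevice attributes, space-separated name=value pairs
# $2: device attributes, space-separated name=value pairs
# Assumption: no value contains whitespace.
fence_stdin() {
  local kv
  local -a pairs
  # read -a splits on whitespace without glob expansion.
  read -r -a pairs <<< "$1 $2"
  for kv in "${pairs[@]}"; do
    case "$kv" in
      # 'name' only links <device> to <fencedevice> and 'agent' picks the
      # script to run, so neither appears in the lists above.
      name=*|agent=*) ;;
      *) printf '%s\n' "$kv" ;;
    esac
  done
}
```

To check the PDU credentials by hand without power-cycling anything, you could pipe the output into the agent with action=status instead of action=reboot, for example `fence_stdin 'ipaddr=pdu2.alteeve.com login=apc passwd=secret' 'port=2 action=status' | /usr/sbin/fence_apc`, assuming your agent supports the status action (check its man page first).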

Give Nodes More Time To Start

Clusters with three or more nodes have to gain quorum before they can fence other nodes. As we saw earlier though, this is not really the case when using the two_node="1" attribute in the cman tag. What this means in practice is that if you start the cluster on one node and then wait too long to start the cluster on the second node, the first will fence the second.

The logic behind this is as follows. When the cluster starts, it will try to talk to its fellow node and fail. With the special two_node="1" attribute set, the cluster knows that it is allowed to start clustered services, but it has no way to say for sure what state the other node is in. For all it knows, the other node could well be online and hosting services. So it has to proceed on the assumption that the other node is alive and using shared resources. Given that, and given that it cannot talk to the other node, its only safe option is to fence the other node. Only then can it be confident that it is safe to start providing clustered services.

```xml
<?xml version="1.0"?>
<cluster name="an-clusterA" config_version="7">
	<cman expected_votes="1" two_node="1"/>
	<clusternodes>
		<clusternode name="an-node01.alteeve.com" nodeid="1">
			<fence>
				<method name="ipmi">
					<device name="ipmi_an01" action="reboot"/>
				</method>
				<method name="pdu">
					<device name="pdu2" port="1" action="reboot"/>
				</method>
			</fence>
		</clusternode>
		<clusternode name="an-node02.alteeve.com" nodeid="2">
			<fence>
				<method name="ipmi">
					<device name="ipmi_an02" action="reboot"/>
				</method>
				<method name="pdu">
					<device name="pdu2" port="2" action="reboot"/>
				</method>
			</fence>
		</clusternode>
	</clusternodes>
	<fencedevices>
		<fencedevice name="ipmi_an01" agent="fence_ipmilan" ipaddr="an-node01.ipmi" login="root" passwd="secret" />
		<fencedevice name="ipmi_an02" agent="fence_ipmilan" ipaddr="an-node02.ipmi" login="root" passwd="secret" />
		<fencedevice name="pdu2" agent="fence_apc" ipaddr="pdu2.alteeve.com" login="apc" passwd="secret" />
	</fencedevices>
	<fence_daemon post_join_delay="30"/>
</cluster>
```

The new tag is fence_daemon, seen near the bottom of the file above. The change is made using the post_join_delay="30" attribute, which gives the other node 30 seconds to join before it is fenced. By default, the cluster will declare the other node dead after just 6 seconds. The default is short because the larger this value, the slower the start-up of the cluster services will be. During testing and development, though, I find this value far too short; it frequently led to unnecessary fencing. Once your cluster is set up and working, it's not a bad idea to reduce this value to the lowest value that you are comfortable with.
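To check which delay a given cluster.conf will actually use, a small helper like the one below can be handy. It is a sketch with a hypothetical name (post_join_delay): it pulls the attribute out with sed, assumes the fence_daemon tag sits on a single line as it does in this tutorial, and falls back to the 6-second default described above when the attribute is missing. A real XML parser would be more robust.

```bash
# Sketch: print the post_join_delay (seconds) configured in a cluster.conf.
# $1: path to the file (defaults to /etc/cluster/cluster.conf)
# Assumes <fence_daemon .../> is on one line; prints 6 if no value is set.
post_join_delay() {
  local conf=${1:-/etc/cluster/cluster.conf} value
  value=$(sed -n 's/.*<fence_daemon[^>]*post_join_delay="\([0-9]*\)".*/\1/p' "$conf" | head -n 1)
  echo "${value:-6}"
}
```

On the configuration above, `post_join_delay` should print 30, which is the window you have for starting cman on the second node.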

Configuring Totem

This is almost a misnomer, as we're more or less not configuring the totem protocol in this cluster.

```xml
<?xml version="1.0"?>
<cluster name="an-clusterA" config_version="8">
	<cman expected_votes="1" two_node="1"/>
	<clusternodes>
		<clusternode name="an-node01.alteeve.com" nodeid="1">
			<fence>
				<method name="ipmi">
					<device name="ipmi_an01" action="reboot"/>
				</method>
				<method name="pdu">
					<device name="pdu2" port="1" action="reboot"/>
				</method>
			</fence>
		</clusternode>
		<clusternode name="an-node02.alteeve.com" nodeid="2">
			<fence>
				<method name="ipmi">
					<device name="ipmi_an02" action="reboot"/>
				</method>
				<method name="pdu">
					<device name="pdu2" port="2" action="reboot"/>
				</method>
			</fence>
		</clusternode>
	</clusternodes>
	<fencedevices>
		<fencedevice name="ipmi_an01" agent="fence_ipmilan" ipaddr="an-node01.ipmi" login="root" passwd="secret" />
		<fencedevice name="ipmi_an02" agent="fence_ipmilan" ipaddr="an-node02.ipmi" login="root" passwd="secret" />
		<fencedevice name="pdu2" agent="fence_apc" ipaddr="pdu2.alteeve.com" login="apc" passwd="secret" />
	</fencedevices>
	<fence_daemon post_join_delay="30"/>
	<totem rrp_mode="none" secauth="off"/>
</cluster>
```

| Note: At this time, the redundant ring protocol (RRP) is not supported in RHEL 6.1 and lower, and will be a technology preview in RHEL 6.2 and above. For this reason, we will not be using it. However, we are using bonding, so we have still removed a single point of failure. |

RRP is an optional second ring that can be used for cluster communication in the case of a breakdown in the first ring. If you wish to explore it further, please take a look at the clusternode child element called altname. When altname is used, the rrp_mode attribute will need to be changed to either active or passive (the details of which are outside the scope of this tutorial).

The second option we're looking at here is the secauth="off" attribute. This controls whether the cluster communications are encrypted. We can safely disable this because we're working on a known-private network, which yields two benefits: it's simpler to set up, and it's a lot faster. If you must encrypt the cluster communications, you can do so here, though the details are also outside the scope of this tutorial.

Validating and Pushing the /etc/cluster/cluster.conf File

One of the most noticeable changes in RHCS cluster stable 3 is that we no longer have to make a long, cryptic xmllint call to validate our cluster configuration. Now we can simply call ccs_config_validate.

```bash
ccs_config_validate
```

```text
Configuration validates
```

If there was a problem, you need to go back and fix it. DO NOT proceed until your configuration validates. Once it does, we're ready to move on!

With it validated, we need to push it to the other node. As the cluster is not running yet, we will push it out using rsync.

```bash
rsync -av /etc/cluster/cluster.conf root@an-node02:/etc/cluster/
```

```text
sending incremental file list
cluster.conf

sent 1228 bytes  received 31 bytes  2518.00 bytes/sec
total size is 1148  speedup is 0.91
```
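Since an invalid configuration should never leave the node, it can help to wrap the two steps above so that the push only happens after validation passes. This is a minimal sketch: push_cluster_conf is our own hypothetical function name, it assumes ccs_config_validate exits non-zero when validation fails, and it defaults the peer to an-node02 from this tutorial.

```bash
# Sketch: validate /etc/cluster/cluster.conf and only then rsync it to the peer.
# $1: peer host name (defaults to an-node02)
# Assumes ccs_config_validate returns non-zero on an invalid configuration.
push_cluster_conf() {
  local peer=${1:-an-node02}
  if ! ccs_config_validate; then
    echo "cluster.conf does not validate; not pushing it to ${peer}" >&2
    return 1
  fi
  rsync -av /etc/cluster/cluster.conf "root@${peer}:/etc/cluster/"
}
```

Run it on an-node01 after each edit, while the cluster is still stopped: `push_cluster_conf an-node02`.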

Setting Up ricci

Another change from RHCS stable 2 is how configuration changes are propagated. Previously, after making a change, we would push out the updated cluster configuration by calling ccs_tool update /etc/cluster/cluster.conf. Now this is done with cman_tool version -r. More fundamentally, though, the cluster needs to authenticate against each node, and it does this using the local ricci system user. The user has no password initially, so we need to set one.

On both nodes:

```bash
passwd ricci
```

```text
Changing password for user ricci.
New password:
Retype new password:
passwd: all authentication tokens updated successfully.
```

You will need to enter this password once from each node against the other node. We will see this later.

Now make sure that the ricci daemon is set to start on boot and is running now.

```bash
chkconfig ricci on
/etc/init.d/ricci start
```

```text
Starting ricci:                                            [  OK  ]
```

| Note: If you don't see [ OK ], don't worry, it is probably because it was already running. |
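Once ricci is running, later changes are pushed with cman_tool version -r, as described above. Every change also needs a higher config_version, as you can see in the examples above (5, then 7, then 8). The helper below is a sketch for bumping it; bump_config_version is a hypothetical name, and it assumes config_version appears once, on the cluster tag, on a single line. It edits the file in place with GNU sed's -i option.

```bash
# Sketch: increment config_version on the <cluster> tag and print the new value.
# $1: path to the file (defaults to /etc/cluster/cluster.conf)
# Assumes a single <cluster ... config_version="N"> on one line; uses GNU sed -i.
bump_config_version() {
  local conf=${1:-/etc/cluster/cluster.conf} old new
  old=$(sed -n 's/.*<cluster [^>]*config_version="\([0-9]*\)".*/\1/p' "$conf" | head -n 1)
  if [ -z "$old" ]; then
    echo "no config_version found in ${conf}" >&2
    return 1
  fi
  new=$((old + 1))
  # \1 keeps everything up to and including config_version=" unchanged.
  sed -i "s/\(<cluster [^>]*config_version=\"\)${old}\"/\1${new}\"/" "$conf"
  echo "$new"
}
```

A typical sequence once the cluster is up might be `bump_config_version && ccs_config_validate && cman_tool version -r`.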

Starting the Cluster for the First Time

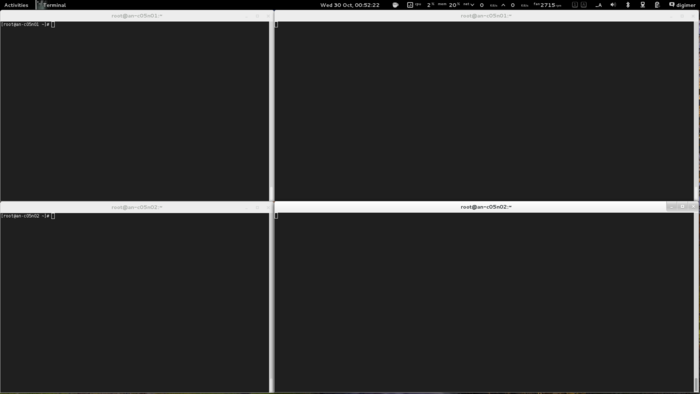

It's a good idea to open a second terminal on either node and tail the /var/log/messages syslog file. All cluster messages will be recorded there, and being able to watch the logs will help you debug problems. To do this, run the following in the new terminal window:

```bash
clear; tail -f -n 0 /var/log/messages
```

This will clear the screen and start watching for new lines to be written to syslog. When you are done watching syslog, press Ctrl+C.
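If the full syslog is too noisy, you can narrow it down to the cluster daemons. This is a sketch: cluster_log_filter is a hypothetical name, and the daemon list (corosync, fenced, dlm_controld and gfs_controld) is taken from the start-up messages shown later in this section, so adjust it if you run other cluster services. It relies on GNU grep's --line-buffered option so that lines appear immediately when following a live log.

```bash
# Sketch: pass through only syslog lines written by the core cluster daemons.
# Matches "daemon[pid]:" style tags, e.g. "fenced[18954]:".
cluster_log_filter() {
  grep --line-buffered -E '(corosync|fenced|dlm_controld|gfs_controld)\['
}
```

Usage: `tail -f -n 0 /var/log/messages | cluster_log_filter`. Note that kernel lines such as the DLM module banner are not matched.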

How you lay out your terminal windows is, obviously, up to your own preferences. The screenshot above shows a layout I have found very useful.

With the terminals set up, let's start the cluster!

| Warning: If you don't start cman on both nodes within 30 seconds, the slower node will be fenced. |

On both nodes, run:

```bash
/etc/init.d/cman start
```

```text
Starting cluster:
   Checking Network Manager...                             [  OK  ]
   Global setup...                                         [  OK  ]
   Loading kernel modules...                               [  OK  ]
   Mounting configfs...                                    [  OK  ]
   Starting cman...                                        [  OK  ]
   Waiting for quorum...                                   [  OK  ]
   Starting fenced...                                      [  OK  ]
   Starting dlm_controld...                                [  OK  ]
   Starting gfs_controld...                                [  OK  ]
   Unfencing self...                                       [  OK  ]
   Joining fence domain...                                 [  OK  ]
```

Here is what you should see in syslog:

```text
Sep 14 13:33:58 an-node01 kernel: DLM (built Jun 27 2011 19:51:46) installed
Sep 14 13:33:58 an-node01 corosync[18897]:   [MAIN  ] Corosync Cluster Engine ('1.2.3'): started and ready to provide service.
Sep 14 13:33:58 an-node01 corosync[18897]:   [MAIN  ] Corosync built-in features: nss rdma
Sep 14 13:33:58 an-node01 corosync[18897]:   [MAIN  ] Successfully read config from /etc/cluster/cluster.conf
Sep 14 13:33:58 an-node01 corosync[18897]:   [MAIN  ] Successfully parsed cman config
Sep 14 13:33:58 an-node01 corosync[18897]:   [TOTEM ] Initializing transport (UDP/IP).
Sep 14 13:33:58 an-node01 corosync[18897]:   [TOTEM ] Initializing transmit/receive security: libtomcrypt SOBER128/SHA1HMAC (mode 0).
Sep 14 13:33:58 an-node01 corosync[18897]:   [TOTEM ] The network interface [10.20.0.1] is now up.
Sep 14 13:33:58 an-node01 corosync[18897]:   [QUORUM] Using quorum provider quorum_cman
Sep 14 13:33:58 an-node01 corosync[18897]:   [SERV  ] Service engine loaded: corosync cluster quorum service v0.1
Sep 14 13:33:58 an-node01 corosync[18897]:   [CMAN  ] CMAN 3.0.12 (built Jul  4 2011 22:35:06) started
Sep 14 13:33:58 an-node01 corosync[18897]:   [SERV  ] Service engine loaded: corosync CMAN membership service 2.90
Sep 14 13:33:58 an-node01 corosync[18897]:   [SERV  ] Service engine loaded: openais checkpoint service B.01.01
Sep 14 13:33:58 an-node01 corosync[18897]:   [SERV  ] Service engine loaded: corosync extended virtual synchrony service
Sep 14 13:33:58 an-node01 corosync[18897]:   [SERV  ] Service engine loaded: corosync configuration service
Sep 14 13:33:58 an-node01 corosync[18897]:   [SERV  ] Service engine loaded: corosync cluster closed process group service v1.01
Sep 14 13:33:58 an-node01 corosync[18897]:   [SERV  ] Service engine loaded: corosync cluster config database access v1.01
Sep 14 13:33:58 an-node01 corosync[18897]:   [SERV  ] Service engine loaded: corosync profile loading service
Sep 14 13:33:58 an-node01 corosync[18897]:   [QUORUM] Using quorum provider quorum_cman
Sep 14 13:33:58 an-node01 corosync[18897]:   [SERV  ] Service engine loaded: corosync cluster quorum service v0.1
Sep 14 13:33:58 an-node01 corosync[18897]:   [MAIN  ] Compatibility mode set to whitetank.  Using V1 and V2 of the synchronization engine.
Sep 14 13:33:58 an-node01 corosync[18897]:   [TOTEM ] A processor joined or left the membership and a new membership was formed.
Sep 14 13:33:58 an-node01 corosync[18897]:   [CMAN  ] quorum regained, resuming activity
Sep 14 13:33:58 an-node01 corosync[18897]:   [QUORUM] This node is within the primary component and will provide service.
Sep 14 13:33:58 an-node01 corosync[18897]:   [QUORUM] Members[1]: 1
Sep 14 13:33:58 an-node01 corosync[18897]:   [QUORUM] Members[1]: 1
Sep 14 13:33:58 an-node01 corosync[18897]:   [CPG   ] downlist received left_list: 0
Sep 14 13:33:58 an-node01 corosync[18897]:   [CPG   ] chosen downlist from node r(0) ip(10.20.0.1)
Sep 14 13:33:58 an-node01 corosync[18897]:   [MAIN  ] Completed service synchronization, ready to provide service.
Sep 14 13:34:02 an-node01 corosync[18897]:   [TOTEM ] A processor joined or left the membership and a new membership was formed.
Sep 14 13:34:02 an-node01 corosync[18897]:   [QUORUM] Members[2]: 1 2
Sep 14 13:34:02 an-node01 corosync[18897]:   [QUORUM] Members[2]: 1 2
Sep 14 13:34:02 an-node01 corosync[18897]:   [CPG   ] downlist received left_list: 0
Sep 14 13:34:02 an-node01 corosync[18897]:   [CPG   ] downlist received left_list: 0
Sep 14 13:34:02 an-node01 corosync[18897]:   [CPG   ] chosen downlist from node r(0) ip(10.20.0.1)
Sep 14 13:34:02 an-node01 corosync[18897]:   [MAIN  ] Completed service synchronization, ready to provide service.
Sep 14 13:34:02 an-node01 fenced[18954]: fenced 3.0.12 started
Sep 14 13:34:02 an-node01 dlm_controld[18978]: dlm_controld 3.0.12 started
Sep 14 13:34:02 an-node01 gfs_controld[19000]: gfs_controld 3.0.12 started
```

Now, to confirm that the cluster is operating properly, run cman_tool status:

```bash
cman_tool status
```

```text
Version: 6.2.0
Config Version: 8
Cluster Name: an-clusterA
Cluster Id: 29382
Cluster Member: Yes
Cluster Generation: 8
Membership state: Cluster-Member
Nodes: 2
Expected votes: 1
Total votes: 2
Node votes: 1
Quorum: 1
Active subsystems: 7
Flags: 2node
Ports Bound: 0
Node name: an-node01.alteeve.com
Node ID: 1
Multicast addresses: 239.192.114.57
Node addresses: 10.20.0.1
```

We can see that both nodes are talking because of the Nodes: 2 entry.
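If you want to script this check, for example before starting clustered services, the Nodes: line can be tested directly. cman_has_nodes is a hypothetical helper that reads cman_tool status output on standard input; it assumes the "Name: value" layout shown above.

```bash
# Sketch: succeed only if 'cman_tool status' (read on STDIN) reports the
# expected number of member nodes in its "Nodes:" line.
# $1: expected node count (defaults to 2 for this two-node cluster)
cman_has_nodes() {
  local want=${1:-2} got
  # Split each line on ": " so $1 is the field name and $2 its value.
  got=$(awk -F': *' '$1 == "Nodes" { print $2; exit }')
  [ "$got" = "$want" ]
}
```

Usage: `cman_tool status | cman_has_nodes 2 && echo "both nodes are members"`.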

If you ever want to see the nitty-gritty configuration, you can run corosync-objctl.

```bash
corosync-objctl
```

```text
cluster.name=an-clusterA
cluster.config_version=8
cluster.cman.expected_votes=1
cluster.cman.two_node=1
cluster.cman.nodename=an-node01.alteeve.com
cluster.cman.cluster_id=29382
cluster.clusternodes.clusternode.name=an-node01.alteeve.com
cluster.clusternodes.clusternode.nodeid=1
cluster.clusternodes.clusternode.fence.method.name=ipmi
cluster.clusternodes.clusternode.fence.method.device.name=ipmi_an01
cluster.clusternodes.clusternode.fence.method.device.action=reboot
cluster.clusternodes.clusternode.fence.method.name=pdu
cluster.clusternodes.clusternode.fence.method.device.name=pdu2
cluster.clusternodes.clusternode.fence.method.device.port=1
cluster.clusternodes.clusternode.fence.method.device.action=reboot
cluster.clusternodes.clusternode.name=an-node02.alteeve.com
cluster.clusternodes.clusternode.nodeid=2
cluster.clusternodes.clusternode.fence.method.name=ipmi
cluster.clusternodes.clusternode.fence.method.device.name=ipmi_an02
cluster.clusternodes.clusternode.fence.method.device.action=reboot
cluster.clusternodes.clusternode.fence.method.name=pdu
cluster.clusternodes.clusternode.fence.method.device.name=pdu2
cluster.clusternodes.clusternode.fence.method.device.port=2
cluster.clusternodes.clusternode.fence.method.device.action=reboot
cluster.fencedevices.fencedevice.name=ipmi_an01
cluster.fencedevices.fencedevice.agent=fence_ipmilan
cluster.fencedevices.fencedevice.ipaddr=an-node01.ipmi
cluster.fencedevices.fencedevice.login=root
cluster.fencedevices.fencedevice.passwd=secret
cluster.fencedevices.fencedevice.name=ipmi_an02
cluster.fencedevices.fencedevice.agent=fence_ipmilan
cluster.fencedevices.fencedevice.ipaddr=an-node02.ipmi
cluster.fencedevices.fencedevice.login=root
cluster.fencedevices.fencedevice.passwd=secret
cluster.fencedevices.fencedevice.name=pdu2
cluster.fencedevices.fencedevice.agent=fence_apc
cluster.fencedevices.fencedevice.ipaddr=pdu2.alteeve.com
cluster.fencedevices.fencedevice.login=apc
cluster.fencedevices.fencedevice.passwd=secret
cluster.fence_daemon.post_join_delay=30
cluster.totem.rrp_mode=none
cluster.totem.secauth=off
totem.rrp_mode=none
totem.secauth=off
totem.version=2
totem.nodeid=1
totem.vsftype=none
totem.token=10000
totem.join=60
totem.fail_recv_const=2500
totem.consensus=2000
totem.key=an-clusterA
totem.interface.ringnumber=0
totem.interface.bindnetaddr=10.20.0.1
totem.interface.mcastaddr=239.192.114.57
totem.interface.mcastport=5405
libccs.next_handle=7
libccs.connection.ccs_handle=3
libccs.connection.config_version=8
libccs.connection.fullxpath=0
libccs.connection.ccs_handle=4
libccs.connection.config_version=8
libccs.connection.fullxpath=0
libccs.connection.ccs_handle=5
libccs.connection.config_version=8
libccs.connection.fullxpath=0
logging.timestamp=on
logging.to_logfile=yes
logging.logfile=/var/log/cluster/corosync.log
logging.logfile_priority=info
logging.to_syslog=yes
logging.syslog_facility=local4
logging.syslog_priority=info
aisexec.user=ais
aisexec.group=ais
service.name=corosync_quorum
service.ver=0
service.name=corosync_cman
service.ver=0
quorum.provider=quorum_cman
service.name=openais_ckpt
service.ver=0
runtime.services.quorum.service_id=12
runtime.services.cman.service_id=9
runtime.services.ckpt.service_id=3
runtime.services.ckpt.0.tx=0
runtime.services.ckpt.0.rx=0
runtime.services.ckpt.1.tx=0
runtime.services.ckpt.1.rx=0
runtime.services.ckpt.2.tx=0
runtime.services.ckpt.2.rx=0
runtime.services.ckpt.3.tx=0
runtime.services.ckpt.3.rx=0
runtime.services.ckpt.4.tx=0
runtime.services.ckpt.4.rx=0
runtime.services.ckpt.5.tx=0
runtime.services.ckpt.5.rx=0
runtime.services.ckpt.6.tx=0
runtime.services.ckpt.6.rx=0
runtime.services.ckpt.7.tx=0
runtime.services.ckpt.7.rx=0
runtime.services.ckpt.8.tx=0
runtime.services.ckpt.8.rx=0
runtime.services.ckpt.9.tx=0
runtime.services.ckpt.9.rx=0
runtime.services.ckpt.10.tx=0
runtime.services.ckpt.10.rx=0
runtime.services.ckpt.11.tx=2
runtime.services.ckpt.11.rx=3
runtime.services.ckpt.12.tx=0
runtime.services.ckpt.12.rx=0
runtime.services.ckpt.13.tx=0
runtime.services.ckpt.13.rx=0
runtime.services.evs.service_id=0
runtime.services.evs.0.tx=0
runtime.services.evs.0.rx=0
runtime.services.cfg.service_id=7
runtime.services.cfg.0.tx=0
runtime.services.cfg.0.rx=0
runtime.services.cfg.1.tx=0
runtime.services.cfg.1.rx=0
runtime.services.cfg.2.tx=0
runtime.services.cfg.2.rx=0
runtime.services.cfg.3.tx=0
runtime.services.cfg.3.rx=0
runtime.services.cpg.service_id=8
runtime.services.cpg.0.tx=4
runtime.services.cpg.0.rx=8
runtime.services.cpg.1.tx=0
runtime.services.cpg.1.rx=0
runtime.services.cpg.2.tx=0
runtime.services.cpg.2.rx=0
runtime.services.cpg.3.tx=16
runtime.services.cpg.3.rx=23
runtime.services.cpg.4.tx=0
runtime.services.cpg.4.rx=0
runtime.services.cpg.5.tx=2
runtime.services.cpg.5.rx=3
runtime.services.confdb.service_id=11
runtime.services.pload.service_id=13
runtime.services.pload.0.tx=0
runtime.services.pload.0.rx=0
runtime.services.pload.1.tx=0
runtime.services.pload.1.rx=0
runtime.services.quorum.service_id=12
runtime.connections.active=6
runtime.connections.closed=110
runtime.connections.fenced:18954:16.service_id=8
runtime.connections.fenced:18954:16.client_pid=18954
runtime.connections.fenced:18954:16.responses=5
runtime.connections.fenced:18954:16.dispatched=9
runtime.connections.fenced:18954:16.requests=5
runtime.connections.fenced:18954:16.sem_retry_count=0
runtime.connections.fenced:18954:16.send_retry_count=0
runtime.connections.fenced:18954:16.recv_retry_count=0
runtime.connections.fenced:18954:16.flow_control=0
runtime.connections.fenced:18954:16.flow_control_count=0
runtime.connections.fenced:18954:16.queue_size=0
runtime.connections.dlm_controld:18978:24.service_id=8
runtime.connections.dlm_controld:18978:24.client_pid=18978
runtime.connections.dlm_controld:18978:24.responses=5
runtime.connections.dlm_controld:18978:24.dispatched=8
runtime.connections.dlm_controld:18978:24.requests=5
runtime.connections.dlm_controld:18978:24.sem_retry_count=0
runtime.connections.dlm_controld:18978:24.send_retry_count=0
runtime.connections.dlm_controld:18978:24.recv_retry_count=0
runtime.connections.dlm_controld:18978:24.flow_control=0
runtime.connections.dlm_controld:18978:24.flow_control_count=0
runtime.connections.dlm_controld:18978:24.queue_size=0
runtime.connections.dlm_controld:18978:19.service_id=3
runtime.connections.dlm_controld:18978:19.client_pid=18978
runtime.connections.dlm_controld:18978:19.responses=0
runtime.connections.dlm_controld:18978:19.dispatched=0
runtime.connections.dlm_controld:18978:19.requests=0
runtime.connections.dlm_controld:18978:19.sem_retry_count=0
runtime.connections.dlm_controld:18978:19.send_retry_count=0
runtime.connections.dlm_controld:18978:19.recv_retry_count=0
runtime.connections.dlm_controld:18978:19.flow_control=0
runtime.connections.dlm_controld:18978:19.flow_control_count=0
runtime.connections.dlm_controld:18978:19.queue_size=0
runtime.connections.gfs_controld:19000:22.service_id=8
runtime.connections.gfs_controld:19000:22.client_pid=19000
runtime.connections.gfs_controld:19000:22.responses=5
runtime.connections.gfs_controld:19000:22.dispatched=8
runtime.connections.gfs_controld:19000:22.requests=5
runtime.connections.gfs_controld:19000:22.sem_retry_count=0
runtime.connections.gfs_controld:19000:22.send_retry_count=0
runtime.connections.gfs_controld:19000:22.recv_retry_count=0
runtime.connections.gfs_controld:19000:22.flow_control=0
runtime.connections.gfs_controld:19000:22.flow_control_count=0
runtime.connections.gfs_controld:19000:22.queue_size=0
runtime.connections.fenced:18954:25.service_id=8
runtime.connections.fenced:18954:25.client_pid=18954
runtime.connections.fenced:18954:25.responses=5
runtime.connections.fenced:18954:25.dispatched=8
runtime.connections.fenced:18954:25.requests=5
runtime.connections.fenced:18954:25.sem_retry_count=0
runtime.connections.fenced:18954:25.send_retry_count=0
runtime.connections.fenced:18954:25.recv_retry_count=0
runtime.connections.fenced:18954:25.flow_control=0
runtime.connections.fenced:18954:25.flow_control_count=0
runtime.connections.fenced:18954:25.queue_size=0
runtime.connections.corosync-objctl:19188:23.service_id=11
runtime.connections.corosync-objctl:19188:23.client_pid=19188
runtime.connections.corosync-objctl:19188:23.responses=435
runtime.connections.corosync-objctl:19188:23.dispatched=0
runtime.connections.corosync-objctl:19188:23.requests=438
runtime.connections.corosync-objctl:19188:23.sem_retry_count=0
runtime.connections.corosync-objctl:19188:23.send_retry_count=0
runtime.connections.corosync-objctl:19188:23.recv_retry_count=0
runtime.connections.corosync-objctl:19188:23.flow_control=0
runtime.connections.corosync-objctl:19188:23.flow_control_count=0
runtime.connections.corosync-objctl:19188:23.queue_size=0
runtime.totem.pg.mrp.srp.orf_token_tx=2
runtime.totem.pg.mrp.srp.orf_token_rx=744
runtime.totem.pg.mrp.srp.memb_merge_detect_tx=365
runtime.totem.pg.mrp.srp.memb_merge_detect_rx=365
runtime.totem.pg.mrp.srp.memb_join_tx=3
runtime.totem.pg.mrp.srp.memb_join_rx=5
runtime.totem.pg.mrp.srp.mcast_tx=46
runtime.totem.pg.mrp.srp.mcast_retx=0
runtime.totem.pg.mrp.srp.mcast_rx=57
runtime.totem.pg.mrp.srp.memb_commit_token_tx=4
runtime.totem.pg.mrp.srp.memb_commit_token_rx=4
runtime.totem.pg.mrp.srp.token_hold_cancel_tx=4
runtime.totem.pg.mrp.srp.token_hold_cancel_rx=7
runtime.totem.pg.mrp.srp.operational_entered=2
runtime.totem.pg.mrp.srp.operational_token_lost=0
runtime.totem.pg.mrp.srp.gather_entered=2
runtime.totem.pg.mrp.srp.gather_token_lost=0
runtime.totem.pg.mrp.srp.commit_entered=2
runtime.totem.pg.mrp.srp.commit_token_lost=0
runtime.totem.pg.mrp.srp.recovery_entered=2
runtime.totem.pg.mrp.srp.recovery_token_lost=0
runtime.totem.pg.mrp.srp.consensus_timeouts=0
runtime.totem.pg.mrp.srp.mtt_rx_token=1903
runtime.totem.pg.mrp.srp.avg_token_workload=0
runtime.totem.pg.mrp.srp.avg_backlog_calc=0
runtime.totem.pg.mrp.srp.rx_msg_dropped=0
runtime.blackbox.dump_flight_data=no
runtime.blackbox.dump_state=no
cman_private.COROSYNC_DEFAULT_CONFIG_IFACE=xmlconfig:cmanpreconfig
```
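Because corosync-objctl prints one key=value pair per line, a single setting is easy to pull out of the dump. objctl_get is a hypothetical helper of our own; note that some keys (such as cluster.fencedevices.fencedevice.name) repeat, so it prints every matching value, one per line.

```bash
# Sketch: read a corosync-objctl dump on STDIN and print the value(s) stored
# under the exact key given in $1. Requires "key=" at the start of the line,
# so totem.token does not also match longer keys that merely share a prefix.
objctl_get() {
  awk -v key="$1" 'index($0, key "=") == 1 { print substr($0, length(key) + 2) }'
}
```

Usage: `corosync-objctl | objctl_get totem.token` should print 10000 on the cluster shown above.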

Installing DRBD

DRBD is an open-source application for real-time, block-level disk replication created and maintained by Linbit. We will use this to keep the data on our cluster consistent between the two nodes.

To install it, we have two choices:

- Install from source files.

- Install from ELRepo.

Installing from source ensures that you have full control over the installed software. However, you become solely responsible for installing future patches and bugfixes.

Installing from ELRepo means ceding some control to the ELRepo maintainers, but it also means that future patches and bugfixes are applied as part of a standard update.

Which you choose is, ultimately, a decision you need to make.

Option A - Install From Source

On both nodes, run:

```bash
# Obliterate peer - fence via cman
wget -c https://alteeve.com/files/an-cluster/sbin/obliterate-peer.sh -O /sbin/obliterate-peer.sh
chmod a+x /sbin/obliterate-peer.sh
ls -lah /sbin/obliterate-peer.sh

# Download, compile and install DRBD
wget -c http://oss.linbit.com/drbd/8.3/drbd-8.3.11.tar.gz
tar -xvzf drbd-8.3.11.tar.gz
cd drbd-8.3.11
./configure \
   --prefix=/usr \
   --localstatedir=/var \
   --sysconfdir=/etc \
   --with-utils \
   --with-km \
   --with-udev \
   --with-pacemaker \
   --with-rgmanager \
   --with-bashcompletion
make
make install
chkconfig --add drbd
chkconfig drbd off
```

Option B - Install From ELRepo

On both nodes, run:

```bash
# Obliterate peer - fence via cman
wget -c https://alteeve.com/files/an-cluster/sbin/obliterate-peer.sh -O /sbin/obliterate-peer.sh
chmod a+x /sbin/obliterate-peer.sh
ls -lah /sbin/obliterate-peer.sh

# Install the ELRepo GPG key, add the repo and install DRBD.
rpm --import http://elrepo.org/RPM-GPG-KEY-elrepo.org
rpm -Uvh http://elrepo.org/elrepo-release-6-4.el6.elrepo.noarch.rpm
yum install drbd83-utils kmod-drbd83
```
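Whichever option you chose, it is worth confirming which DRBD kernel module version is loaded. The sketch below assumes the first line of /proc/drbd has the form "version: 8.3.11 (...)", which is how DRBD 8.3 reports itself; /proc/drbd only exists once the drbd module is loaded (for example with modprobe drbd). drbd_version is a hypothetical helper name.

```bash
# Sketch: print the DRBD version from /proc/drbd (or another file given as $1).
# Assumes a line of the form "version: 8.3.11 (...)".
drbd_version() {
  awk '$1 == "version:" { print $2; exit }' "${1:-/proc/drbd}"
}
```

Usage: `modprobe drbd && drbd_version` should print the version you installed, 8.3.11 if you built from the source tarball above.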

Configuring DRBD

DRBD is configured in two parts:

- Global and common configuration options

- Resource configurations

We will be creating three separate DRBD resources, so we will create three separate resource configuration files. More on that in a moment.

Configuring DRBD Global and Common Options

The first file to edit is /etc/drbd.d/global_common.conf. In this file, we will set global configuration options and set default resource configuration options. These default resource options can be overridden in the actual resource files, which we'll create once we're done here.

```bash
cp /etc/drbd.d/global_common.conf /etc/drbd.d/global_common.conf.orig
vim /etc/drbd.d/global_common.conf
diff -u /etc/drbd.d/global_common.conf.orig /etc/drbd.d/global_common.conf
```

```diff
--- /etc/drbd.d/global_common.conf.orig 2011-09-14 14:03:56.364566109 -0400
+++ /etc/drbd.d/global_common.conf 2011-09-14 14:23:37.287566400 -0400
@@ -15,24 +15,81 @@
 		# out-of-sync "/usr/lib/drbd/notify-out-of-sync.sh root";
 		# before-resync-target "/usr/lib/drbd/snapshot-resync-target-lvm.sh -p 15 -- -c 16k";
 		# after-resync-target /usr/lib/drbd/unsnapshot-resync-target-lvm.sh;
+
+		# This script is a wrapper for RHCS's 'fence_node' command line
+		# tool. It will call a fence against the other node and return
+		# the appropriate exit code to DRBD.
+		fence-peer "/sbin/obliterate-peer.sh";
 	}
 
 	startup {
 		# wfc-timeout degr-wfc-timeout outdated-wfc-timeout wait-after-sb
+
+		# This tells DRBD to promote both nodes to Primary on start.
+		become-primary-on both;
+
+		# This tells DRBD to wait five minutes for the other node to
+		# connect. This should be longer than it takes for cman to
+		# timeout and fence the other node *plus* the amount of time it
+		# takes the other node to reboot. If you set this too short,
+		# you could corrupt your data. If you want to be extra safe, do
+		# not use this at all and DRBD will wait for the other node
+		# forever.
+		wfc-timeout 300;
+
+		# This tells DRBD to wait for the other node for two minutes
+		# if the other node was degraded the last time it was seen by
+		# this node. This is a way to speed up the boot process when
+		# the other node is out of commission for an extended duration.
+		degr-wfc-timeout 120;
 	}
 
 	disk {
 		# on-io-error fencing use-bmbv no-disk-barrier no-disk-flushes
 		# no-disk-drain no-md-flushes max-bio-bvecs
+
+		# This tells DRBD to block IO and fence the remote node (using
+		# the 'fence-peer' helper) when connection with the other node
+		# is unexpectedly lost. This is what helps prevent a split-brain
+		# condition and it is incredibly important in dual-primary
+		# setups!
+		fencing resource-and-stonith;
 	}
 
 	net {
 		# sndbuf-size rcvbuf-size timeout connect-int ping-int ping-timeout max-buffers
 		# max-epoch-size ko-count allow-two-primaries cram-hmac-alg shared-secret
 		# after-sb-0pri after-sb-1pri after-sb-2pri data-integrity-alg no-tcp-cork
+
+		# This tells DRBD to allow two nodes to be Primary at the same
+		# time. It is needed when 'become-primary-on both' is set.
+		allow-two-primaries;
+
+		# The following three commands tell DRBD how to react should
+		# our best efforts fail and a split brain occurs. You can learn
+		# more about these options by reading the drbd.conf man page.
+		# NOTE! It is not possible to safely recover from a split brain
+		# where both nodes were primary. This case requires human
+		# intervention, so 'disconnect' is the only safe policy.
+		after-sb-0pri discard-zero-changes;
+		after-sb-1pri discard-secondary;
+		after-sb-2pri disconnect;
 	}
 
 	syncer {
 		# rate after al-extents use-rle cpu-mask verify-alg csums-alg
+
+		# This alters DRBD's default syncer rate. Note that it is
+		# *very* important that you do *not* configure the syncer rate
+		# to be too fast. If it is too fast, it can significantly
+		# impact applications using the DRBD resource. If it's set to a
+		# rate higher than the underlying network and storage can
+		# handle, the sync can stall completely.
+		# This should be set to ~30% of the *tested* sustainable read
+		# or write speed of the raw /dev/drbdX device (whichever is
+		# slower). In this example, the underlying resource was tested
+		# as being able to sustain roughly 60 MB/sec, so this is set to
+		# one third of that rate, 20M.
+		rate 20M;
 	}
 }
```
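The arithmetic behind rate 20M and wfc-timeout 300 in the configuration above can be written down as two small helpers. These are sketches with hypothetical names; the inputs are your own measured throughput and estimated timings, and nothing here queries DRBD or cman. Integer division rounds down, which errs on the slower, safer side for the syncer rate.

```bash
# Back-of-the-envelope helpers; all inputs are your own measurements/estimates.

# Syncer rate: one third of the tested sustainable speed (MB/sec) of the raw
# /dev/drbdX device, printed in the "20M" form used in global_common.conf.
syncer_rate() {
  local tested_mb_per_sec=$1
  echo "$(( tested_mb_per_sec / 3 ))M"
}

# wfc-timeout must exceed the time for cman to time out and fence the peer
# plus the time the peer needs to reboot. All arguments are in seconds.
wfc_timeout_ok() {
  local wfc=$1 cman_timeout=$2 fence_time=$3 reboot_time=$4
  [ "$wfc" -gt $(( cman_timeout + fence_time + reboot_time )) ]
}
```

`syncer_rate 60` prints 20M, matching the example above. For wfc-timeout, you might plug in the 10-second totem token (totem.token=10000, in milliseconds) from the corosync-objctl output plus your own measured fence and reboot times, for example `wfc_timeout_ok 300 10 30 180 && echo ok`, where 30 and 180 are made-up figures.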

Thanks

Any questions, feedback, advice, complaints or meanderings are welcome.


© 2025 Alteeve. Intelligent Availability® is a registered trademark of Alteeve's Niche! Inc. 1997-2025

legal stuff: All info is provided "As-Is". Do not use anything here unless you are willing and able to take responsibility for your own actions.