AN!Cluster Tutorial 2

| Warning: This updated tutorial is not yet complete. Please do not follow this tutorial until this warning has been removed! |

| Note: This is the second release of the 2-Node Red Hat KVM Cluster Tutorial. |

This paper has one goal;

- Create an easy to use, fully redundant platform for virtual servers.

What's New?

In the last two years, we've learned a lot about how to make an even more solid high-availability platform. We've created tools to make monitoring and management of the virtual servers and nodes trivially easy. This updated release of our tutorial brings these advances to you!

- Many refinements to the cluster stack that protect against corner cases seen over the last two years.

- Configuration naming convention changes to support the new AN!CDB dashboard.

- Addition of the AN!CM monitoring and alert system.

A Note on Terminology

In this tutorial, we will use the following terms;

- Anvil!: This is our name for the HA platform as a whole.

- Nodes: The physical hardware servers used as members in the cluster and which host the virtual servers.

- Servers: The virtual servers themselves.

- Compute Pack: This describes a pair of nodes that work together to power highly-available servers.

- Foundation Pack: This describes the switches, PDUs and UPSes used to power and connect the nodes.

- Monitor Pack: This describes the equipment used for the AN!CDB management dashboard.

Why Should I Follow This (Lengthy) Tutorial?

Following this tutorial is not a light undertaking. It is designed to teach you all the inner details of building an HA platform for VMs. When finished, you will have a detailed and deep understanding of what it takes to build a fully redundant platform for high-availability virtual machines. That makes the effort very worthwhile if you want to understand high-availability.

If you want to build a VM cluster as quickly and efficiently as possible, AN! provides an installer script which automates most of the cluster build.

In either case, when finished, you will have the following benefits;

- Totally open source. Everything. This guide and all software used is open!

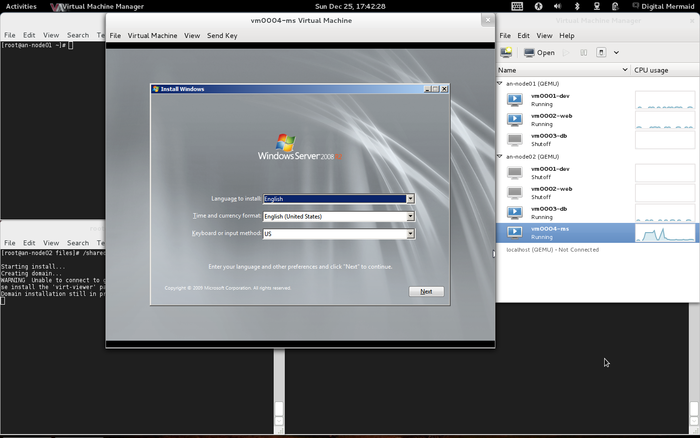

- You can host virtual servers running almost any operating system.

- The HA platform requires no access to the servers and no special software needs to be installed. Your users may well never know that they're on a virtual machine.

- Your servers will be transparently made highly-available. Most hardware failures will be totally transparent. The most core failures will cause an outage of roughly 30 to 90 seconds.

- Storage is synchronously replicated, guaranteeing that the total destruction of a node will cause no more data loss than a traditional server losing power.

- Storage is replicated without the need for a SAN, reducing cost and providing total storage redundancy.

- Live-migration of virtual servers enables upgrading and node maintenance without downtime. No more weekend maintenance!

- With the AN! cluster monitoring, you get total monitoring of the HA stack, from predictive hardware failure detection to simple live migration alerts, in a single application.

Ask your local VMware or Microsoft Hyper-V sales person what they'd charge for all this. :)

High-Level Explanation of How HA Clustering Works

| Note: This section is an adaptation of this post to the Linux-HA mailing list. |

Before digging into the details, it might help to start with a high-level explanation of how HA clustering works.

Corosync uses the totem protocol for "heartbeat"-like monitoring of the other node's health. A token is passed around to each node, the node does some work (like acknowledging old messages and sending new ones), and then it passes the token on to the next node. This goes around and around all the time. Should a node not pass its token on after a short time-out period, the token is declared lost, an error count goes up and a new token is sent. If too many tokens are lost in a row, the node is declared lost.
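The "too many tokens lost in a row" rule can be sketched in a few lines. This is only a toy model of the idea, not corosync's actual implementation; the function name `node_declared_lost` and the threshold value are assumptions made up for illustration.

```python
from typing import Iterable


def node_declared_lost(token_results: Iterable[bool], max_consecutive_losses: int) -> bool:
    """Return True once enough tokens in a row have been lost.

    token_results: True means the token was passed on in time,
                   False means the time-out expired and the token was lost.
    max_consecutive_losses: hypothetical threshold, chosen for illustration.
    """
    consecutive = 0
    for passed in token_results:
        if passed:
            consecutive = 0  # a healthy pass resets the error count
        else:
            consecutive += 1  # token lost; a new token would be sent
            if consecutive >= max_consecutive_losses:
                return True
    return False


# Three losses in a row with a threshold of three: the node is declared lost.
print(node_declared_lost([True, False, False, False], 3))  # True
# Losses interrupted by a successful pass never reach the threshold.
print(node_declared_lost([False, False, True, False, False], 3))  # False
```

Note that only consecutive losses count here; an occasional lost token on a busy network does not, by itself, remove a node.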

Once the node is declared lost, the remaining nodes reform a new cluster. If enough nodes are left to form quorum (simple majority), then the new cluster will continue to provide services. In two-node clusters, like the ones we're building here, quorum is disabled so each node can work on its own.
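To see why quorum has to be disabled with two nodes, here is a minimal sketch of the simple-majority test. The function name `has_quorum` is our own, and one vote per node is assumed.

```python
def has_quorum(votes_present: int, total_votes: int) -> bool:
    """Simple majority: strictly more than half of all votes must be present."""
    return votes_present * 2 > total_votes


# Three-node cluster, one node lost: two of three votes is a majority.
print(has_quorum(2, 3))  # True
# Two-node cluster, one node lost: one of two votes is not a majority.
print(has_quorum(1, 2))  # False
```

With only two votes, the loss of either node always leaves the survivor without a majority, which is why a two-node cluster must run with quorum disabled and lean on fencing instead.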

Corosync itself only cares about cluster membership and message passing. What happens after the cluster reforms is up to the cluster manager, cman, and the cluster resource manager, rgmanager.

The first thing cman does after being notified that a node was lost is initiate a fence against the lost node. This is a process where the lost node is powered off, called power fencing, or cut off from the network/storage, called fabric fencing. In either case, the idea is to make sure that the lost node is in a known state. If this is skipped, the node could recover later and try to provide cluster services, not having realized that it was removed from the cluster. This could cause problems from confusing switches to corrupting data.

When rgmanager is told that membership has changed because a node died, it looks to see what services might have been lost. Once it knows what was lost, it looks at the rules it's been given and decides what to do. These rules are defined in the cluster.conf's <rm> element. We'll go into detail on this later.

In two-node clusters, there is also a chance of a "split-brain". Because quorum has to be disabled, it is possible for both nodes to think the other node is dead and both try to provide the same cluster services. By using fencing, after the nodes break from one another (which could happen with a network failure, for example), neither node will offer services until one of them has fenced the other. The faster node will win and the slower node will shut down (or be isolated). The survivor can then run services safely without risking a split-brain.

Once the dead/slower node has been fenced, rgmanager then decides what to do with the services that had been running on the lost node. Generally, this means "restart the service here that had been running on the dead node". The details of this, though, are decided by you when you configure the resources in rgmanager. As we will see with each node's local storage service, the service is not recovered but instead left stopped.

The Task Ahead

Before we start, let's take a few minutes to discuss clustering and its complexities.

A Note on Patience

When someone wants to become a pilot, they can't jump into a plane and try to take off. It's not that flying is inherently hard, but it requires a foundation of understanding. Clustering is the same in this regard; there are many different pieces that have to work together just to get off the ground.

You must have patience.

Like a pilot on their first flight, seeing a cluster come to life is a fantastic experience. Don't rush it! Do your homework and you'll be on your way before you know it.

Coming back to earth:

Many technologies can be learned by creating a very simple base and then building on it. The classic "Hello, World!" script created when first learning a programming language is an example of this. Unfortunately, there is no real analogue to this in clustering. Even the most basic cluster requires several pieces be in place and working together. If you try to rush by ignoring pieces you think are not important, you will almost certainly waste time. A good example is setting aside fencing, thinking that your test cluster's data isn't important. The cluster software has no concept of "test". It treats everything as critical all the time and will shut down if anything goes wrong.

Take your time, work through these steps, and you will have the foundation cluster sooner than you realize. Clustering is fun because it is a challenge.

Technologies We Will Use

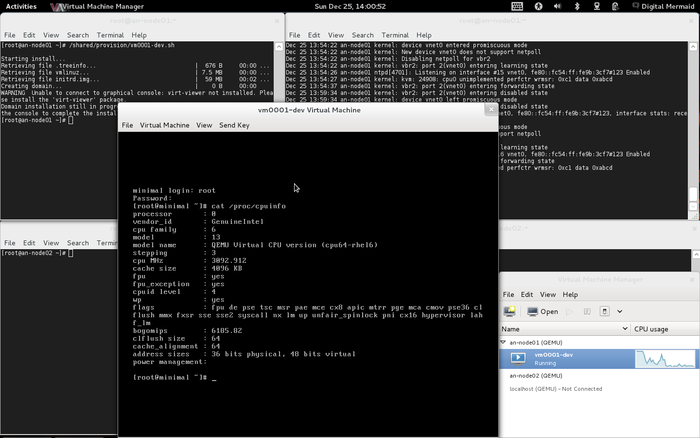

- Red Hat Enterprise Linux 6 (EL6); You can use a derivative like CentOS v6. Specifically, we're using 6.4.

- Red Hat Cluster Services "Stable" version 3. This describes the following core components:

- Corosync; Provides cluster communications using the totem protocol.

- Cluster Manager (cman); Handles starting, stopping and managing the cluster.

- Resource Manager (rgmanager); Manages cluster resources and services. Handles service recovery during failures.

- Clustered Logical Volume Manager (clvm); Cluster-aware (disk) volume manager. Backs GFS2 filesystems and KVM virtual machines.

- Global File System version 2 (gfs2); Cluster-aware, concurrently mountable file system.

- Distributed Replicated Block Device (DRBD); Keeps shared data synchronized across cluster nodes.

- KVM; Hypervisor that controls and supports virtual machines.

- Alteeve's Niche! Cluster DashBoard and Cluster Monitor

A Note on Hardware

Another new change is that Alteeve's Niche!, after years of experimenting with various hardware vendors, has partnered with Fujitsu. We chose them because of the unparalleled quality of their equipment.

This tutorial can be used on any manufacturer's hardware, provided it meets the minimum requirements listed below. That said, we strongly recommend readers give Fujitsu's RX-line of servers a close look. We do not get a discount for this recommendation; we genuinely love the quality of their gear. The only technical argument for using Fujitsu hardware is that we do all our cluster stack monitoring software development on Fujitsu RX200 and RX300 servers, so we can say with confidence that the AN! software components will work well on their kit.

If you use any other hardware vendor and run into any trouble, please don't hesitate to contact us. We want to make sure that our HA stack works on as many systems as possible and will be happy to help out. Of course, all Alteeve code is open source, so contributions are always welcome, too!

System Requirements

The goal of this tutorial is to help you build an HA platform with zero single points of failure. In order to do this, certain minimum technical requirements must be met.

Bare minimum requirements;

- Two servers with the following;

- CPUs with hardware-accelerated virtualization.

- Redundant power supplies

- IPMI or vendor-specific out-of-band management, like Fujitsu's iRMC, HP's iLO, Dell's iDRAC, etc.

- Six network interfaces, 1 Gbit or faster (yes, six!)

- 2 GiB of RAM and 44.5 GiB of storage for the host operating system, plus sufficient RAM and storage for your VMs.

- Two switched PDUs; APC-brand recommended but any with a supported fence agent is fine.

- Two network switches

Recommended Hardware; A Little More Detail

The previous section covers the bare-minimum system requirements for following this tutorial. If you are planning for production, we need to discuss important considerations for selecting hardware.

The Most Important Consideration - Storage

There is probably no single consideration more important than choosing the storage you will use.

In our years of building Anvil! HA platforms, we've found no single issue more important than storage latency. This is true for all virtualized environments, in fact.

The problem is this:

Multiple servers on shared storage can cause particularly random storage access. Traditional hard drives have disks with mechanical read/write heads on the ends of arms that sweep back and forth across the disk surfaces. These platters are broken up into "tracks" and each track is itself cut up into "sectors". So when a server needs to read or write data, the hard drive needs to sweep the arm over the track it wants and then wait there for the sector it wants to pass underneath.

This time taken to get the read/write head onto the track and then wait for the sector to pass underneath is called "seek latency". How long this latency actually takes depends on a few things;

- How fast are the platters rotating? The faster the platter speed, the less time it takes for a sector to pass under the read/write head.

- How fast the read/write arms can move and how far they have to travel between tracks. Highly random read/write requests can cause a lot of head travel.

- How many read/write requests are backed up in the queue. This becomes a problem when requests come in faster than the drive can service existing requests.
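The first point, platter speed, is easy to put numbers on. On average, the sector you want is half a rotation away, so the average rotational wait is half of one revolution. The sketch below covers only that rotational part; arm travel and queue depth add to it and are not modelled. The function name is our own.

```python
def avg_rotational_latency_ms(rpm: float) -> float:
    """Average wait for a sector to pass under the head, in milliseconds.

    One revolution takes 60,000 ms / rpm; on average the wanted sector
    is half a revolution away.
    """
    ms_per_revolution = 60_000.0 / rpm
    return ms_per_revolution / 2.0


for rpm in (7200, 15000):
    print(f"{rpm} rpm: {avg_rotational_latency_ms(rpm):.2f} ms")
# 7200 rpm: 4.17 ms
# 15000 rpm: 2.00 ms
```

So a 15,000rpm drive roughly halves the rotational wait of a 7,200rpm drive before any other factor is considered.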

When many people think about hard drives, they generally worry about maximum write speeds. For environments with many virtual servers, this is actually far less important than it might seem. Reducing latency to ensure that read/write requests don't back up is far more important. If too many requests back up in the cache, storage performance can collapse or stall out entirely.

This is particularly problematic when multiple servers try to boot at the same time. If, for example, a node with multiple servers dies, the surviving node will try to start the lost servers at nearly the same time. This causes a sudden dramatic rise in read requests and can cause all servers to hang entirely, a condition called a "boot storm".

Thankfully, this latency problem can be easily dealt with in one of three ways;

- Use solid-state drives. These have no moving parts, so there is no penalty for highly random read/write requests.

- Use fast platter drives and proper RAID controllers with write-back caching.

- Isolate each server onto dedicated platter drives.

Each of these solutions has benefits and downsides;

| Solution | Pro | Con |
|---|---|---|
| Fast drives + Write-back caching | 15,000rpm SAS drives are extremely reliable and the high rotation speeds minimize latency caused by waiting for sectors to pass under the read/write heads. Using multiple drives in RAID level 5 or level 6 breaks up reads and writes into smaller pieces, allowing requests to be serviced quickly and helping keep the read/write buffer empty. Write-caching allows RAM-like write speeds and the ability to re-order disk access to minimize head movement. | The main con is the number of disks needed to get effective performance gains from striping. AN! always uses a minimum of six disks, and many entry-level servers support a maximum of 4 drives. So you need to account for the number of disks you plan to use when selecting your hardware. |
| SSDs | No moving parts mean that read and write requests do not have to wait for mechanical movements to happen, drastically reducing latency. The minimum number of drives for SSD-based configuration is two. | Solid state drives use NAND flash, which can only be written to a finite number of times. All drives in our Anvil! will be written to roughly the same amount, so hitting this write-limit could mean that all drives in both nodes would fail at nearly the same time. Avoiding this requires careful monitoring of the drives and replacing them before their write limits are hit. |
| Isolated Storage | Dedicating hard drives to virtual servers avoids the highly random read/write issues found when multiple servers share the same storage. This allows for the safe use of inexpensive hard drives. This also means that dedicated hardware RAID controllers with battery-backed cache are not needed. This makes it possible to save a good amount of money in the hardware design. | The obvious down-side to isolated storage is that you significantly limit the number of servers you can host on your Anvil!. If you only need to support one or two servers though, this should not be an issue. |

The last piece to consider is the interface of the drives used, be they SSDs or traditional HDDs. The two common interface types are SATA and SAS.

- SATA HDDs generally have a platter speed of 7,200rpm. The SATA interface has a limited instruction set and provides minimal health reporting. These are "consumer" grade devices that are far less expensive, and far less reliable, than SAS drives.

- SAS drives are generally aimed at the enterprise environment and are built to much higher quality standards. SAS HDDs have rotational speeds of up to 15,000rpm and can handle far more read/write operations per second. Enterprise SSDs using the SAS interface are also much more reliable than their consumer-grade counterparts. The main downside to SAS drives is their cost.

In all production environments, we strongly, strongly recommend SAS-connected drives. For learning, SATA drives are fine.

RAM - Preparing For Degradation

RAM is a far simpler topic than storage, thankfully. Here, all you need to do is add up how much RAM you plan to assign to servers, add at least 2 GiB for the host, and then install that much memory in your nodes.

In production, there are two technologies you will want to consider;

- ECC, error correction code, provides the ability for RAM to recover from single-bit errors. If you are familiar with how parity in RAID arrays works, ECC in RAM is the same idea. This is often included in server-class hardware by default. It is highly recommended.

- Memory Mirroring is, continuing our storage comparison, RAID level 1 for RAM. All writes to memory go to two different chips. Should one fail, the contents of the RAM can still be read from the surviving module.

Never Over Provision!

"Over-provisioning", also called "thin provisioning", is a concept made popular in many "cloud" technologies. It is a concept that has almost no place in HA environments.

A common example is creating virtual disks of a given apparent size, but which only pull space from real storage as needed. So if you created a "thin" virtual disk that was 80 GiB large, but only 20 GiB worth of data was used, only 20 GiB from the real storage would be used.

In essence, over-provisioning is where you allocate more resources to servers than the nodes can actually provide, banking on the hope that most servers will not use all of the resources allocated to them. The danger here, and the reason it has no place in HA, is that if the servers collectively use more resources than the nodes can provide, someone is going to crash.
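A quick sanity check for RAM or storage is to add up everything you plan to allocate, add the host's own share, and compare it against what a node physically has. The sketch below is a minimal illustration; the function name and the example server sizes are assumptions, while the 2 GiB host reserve comes from the RAM requirement mentioned earlier.

```python
from typing import Iterable


def fits_without_over_provisioning(
    allocations_gib: Iterable[float],
    physical_gib: float,
    host_reserve_gib: float = 0.0,
) -> bool:
    """True when every allocation, plus the host's share, fits in real hardware."""
    return sum(allocations_gib) + host_reserve_gib <= physical_gib


# Hypothetical example: three servers with 8, 8 and 4 GiB of RAM, 2 GiB for the host.
server_ram = [8, 8, 4]
print(fits_without_over_provisioning(server_ram, physical_gib=24, host_reserve_gib=2))  # True
print(fits_without_over_provisioning(server_ram, physical_gib=16, host_reserve_gib=2))  # False
```

Remember that each node must pass this check on its own, because after a failure the surviving node hosts every server.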

CPU Cores - Possibly Acceptable Over-Provisioning

Over-provisioning of RAM and storage is never acceptable in an HA environment, as mentioned. Over-allocating CPU cores is possibly acceptable though.

When selecting which CPUs to use in your nodes, the number of cores and the speed of the cores will determine how much computational horse-power you have to allocate to your servers. The main considerations are;

- Core speed; Any given "thread" can be processed by a single CPU core at a time. The faster the given core is, the faster it can process any given request. Many applications do not support multithreading, meaning that the only way to improve performance is to use faster cores, not more cores.

- Core count; Some applications support breaking up jobs into many threads, and passing them to multiple CPU cores at the same time for simultaneous processing. This way, the application feels faster to users because each CPU has to do less work to get a job done. Another benefit of multiple cores is that if one application consumes the processing power of a single core, other cores remain available for other applications, preventing processor congestion.

In processing, each CPU "core" can handle one program "thread" at a time. Since the earliest days of multitasking, operating systems have been able to handle threads waiting for a CPU resource to free up. So the risk of over-provisioning CPUs is restricted to performance issues only.

If you're building an Anvil! to support multiple servers and it's important that, no matter how busy the other servers are, the performance of each server can not degrade, then you need to be sure you have as many real CPU cores as you plan to assign to servers.

So for example, if you plan to have three servers and you plan to allocate each server four virtual CPU cores, you need a minimum of 13 real CPU cores (3 servers x 4 cores each plus at least one core for the node). In this scenario, you will want to choose servers with dual 8-core CPUs, for a total of 16 available real CPU cores. You may choose to buy two 6-core CPUs, for a total of 12 real cores, but you risk congestion still. If all three servers fully utilize their four cores at the same time, the host OS will be left with no available core for its software, which manages the HA stack.
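The arithmetic in this example is simple enough to script when comparing hardware options. This is a small sketch; the function name is our own and the single core reserved for the node is the minimum described above.

```python
def min_real_cores(servers: int, vcpus_per_server: int, host_cores: int = 1) -> int:
    """Real cores needed so that no server ever waits on another."""
    return servers * vcpus_per_server + host_cores


needed = min_real_cores(servers=3, vcpus_per_server=4)
print(needed)  # 13

# Compare the two CPU options from the example above.
for label, real_cores in (("dual 8-core", 16), ("dual 6-core", 12)):
    verdict = "enough" if real_cores >= needed else "over-provisioned"
    print(f"{label}: {real_cores} cores, {verdict}")
# dual 8-core: 16 cores, enough
# dual 6-core: 12 cores, over-provisioned
```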

In many cases, however, risking a performance loss under periods of high CPU load is acceptable. In these cases, allocating more virtual cores than you have real cores is fine. Should the load of the servers climb to a point where all real cores are under 100% utilization, then some applications will slow down as they wait for their turn in the CPU.

In the end, the decision whether to over-provision CPU cores or not, and if so, by how much to over-provision, is up to you, the reader. Remember to consider balancing out faster cores with the number of cores. If your expected load will be short bursts of computationally intense jobs, few-but-faster cores may be the best solution.

A Note on Hyper-Threading

Intel's hyper-threading technology can make a CPU appear to the OS to have twice as many real cores as it actually has. For example, a CPU listed as "4c/8t" (four cores, eight threads) will appear to the node as a simple 8-core CPU. In fact, you only have four cores and the additional four cores are emulated attempts to make more efficient use of the processing of each core.

Simply put, the idea behind this technology is to "slip in" a second thread when the CPU would otherwise be idle. For example, if the CPU core has to wait for memory to be fetched for the currently active thread, instead of sitting idle, a thread in the second core will be worked on.

How much benefit this gives you in the real world is debatable and highly dependent on your applications. For the purposes of HA, it's recommended to not count the "HT cores" as real cores. That is to say, when calculating load, treat "4c/8t" CPUs as simple 4-core CPUs.

Six Network Interfaces, Seriously?

Yes, seriously.

Obviously, you can put everything on a single network card and your HA software will work, but it would be highly ill-advised.

We will go into the network configuration at length later on. For now, here's an overview;

- Each network needs two links in order to be fault-tolerant. One link will go to the first network switch and the second link will go to the second network switch. This way, the failure of a network cable, port or switch will not interrupt traffic.

- There are three main networks in an Anvil!;

- Back-Channel Network; This is used by the cluster stack and is sensitive to latency. Delaying traffic on this network can cause the nodes to "partition", breaking the cluster stack.

- Storage Network; All disk writes will travel over this network. As such, it is easy to saturate this network. Sharing this traffic with other services would mean that it's very possible to significantly impact network performance under high disk write loads. For this reason, it is isolated.

- Internet-Facing Network; This network carries traffic to and from your servers. By isolating this network, users of your servers will never experience performance loss during storage or cluster high loads. Likewise, if your users place a high load on this network, it will not impact the ability of the Anvil! to function properly. It also isolates untrusted network traffic.

So, three networks, each using two links for redundancy, means that we need six network interfaces. Further complicating things, it is strongly recommended that you use three separate dual-port network cards. Using a single network card, as we will discuss in detail later, leaves you vulnerable to losing entire networks should the controller fail.

A Note on Dedicated IPMI Interfaces

Some server manufacturers provide access to IPMI using the same physical interface as one of the on-board network cards. Usually these companies provide optional upgrades to break the IPMI connection out to a dedicated network connector.

Whenever possible, it is recommended that you go with a dedicated IPMI connection.

We've found that it rarely, if ever, is possible for a node to talk to its own IPMI interface using a shared physical port. This is not strictly a problem, but it can certainly make testing and diagnostics easier when the node can ping and query its own IPMI interface over the network.

Network Switches

The ideal switches to use in HA clustering are a stackable, managed pair of switches. At the very least, a pair of switches that support VLANs is recommended. None of this is strictly required, but here are the reasons they're recommended;

- VLAN allows for totally isolating the BCN, SN and IFN traffic. This adds security and reduces broadcast traffic.

- Managed switches provide a unified interface for configuring both switches at the same time. This drastically simplifies complex configurations, like setting up VLANs that span the physical switches.

- Stacking provides a link between the two switches that effectively makes them work like one. Generally, the bandwidth available in the stack cable is much higher than the bandwidth of individual ports. This provides a high-speed link for all three VLANs in one cable and it allows for multiple links to fail without risking performance degradation. We'll talk more about this later.

Beyond these suggested features, there are a few other things to consider when choosing switches;

| Feature | Consideration |

|---|---|

| MTU size | The default packet size on a network is 1500 bytes. If you build your VLANs in software, you need to account for the extra size needed for the VLAN header. If your switch supports "Jumbo Frames", then there should be no problem. However, some cheap switches do not support jumbo frames, requiring you to reduce the MTU size value for the interfaces on your nodes. |

| Packets Per Second | This is a measure of how many packets can be routed per second, and generally is a reflection of the switch's processing power and memory. Cheaper switches will not have the ability to route a high number of packets at the same time, potentially causing congestion. |

| Multicast Groups | Some fancy switches, like some Cisco hardware, don't maintain multicast groups persistently. The cluster software uses multicast for communication, so if your switch drops a multicast group, it will cause your cluster to partition. If you have a managed switch, ensure that persistent multicast groups are enabled. We'll talk more about this later. |

| Port speed and count versus Internal Fabric Bandwidth | A switch that has, say, 48 gigabit ports may not be able to route 48 Gbps. This is a problem similar to the over-provisioning we discussed above. If an inexpensive 48 port switch has an internal switch fabric of only 20 Gbps, then it can handle only up to 20 saturated ports at a time. Be sure to review the internal fabric capacity and make sure it's high enough to handle all connected interfaces running full speed. Note, of course, that only one link in a given bond will be active at a time. |

| Uplink speed | If you have a gigabit switch and you simply link the ports between the two switches, the link speed will be limited to 1 gigabit. Normally, all traffic will be kept on one switch, so this is fine in principle. If a single link fails over to the backup switch, then it will bounce up to the main switch at full speed. However, if a second link fails, both will be sharing the single gigabit uplink, so there is a risk of congestion on the link. If you can't get stacked switches, which generally have 10 Gbps speeds or higher, then look for switches with 10 Gbps dedicated uplink ports and use those for uplinks. Keep in mind that using ports for uplinks, instead of a stacking cable, will mean that each uplink port will be restricted to the VLAN it's a member of (or you need to share the uplink across multiple VLANs, breaking the isolation). |

| Port Trunking | If your existing network supports it, choosing a switch with port trunking provides a backup link from the foundation pack switches to the main network. This extends the network redundancy out to the rest of your network. |

There are numerous other valid considerations when choosing network switches for your Anvil!. These are the most pertinent considerations, though.
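The fabric bandwidth row in the table above boils down to a single division. The sketch below follows the simplified model used there, where one saturated gigabit port consumes one Gbps of fabric; real switch datasheets may count capacity differently, so treat this as an illustration only. The function name is our own.

```python
def max_saturated_ports(fabric_gbps: float, port_gbps: float, port_count: int) -> int:
    """How many ports can run at full speed before the fabric is the bottleneck."""
    return min(port_count, int(fabric_gbps // port_gbps))


# The inexpensive 48 port gigabit switch with a 20 Gbps fabric from the table.
print(max_saturated_ports(fabric_gbps=20, port_gbps=1, port_count=48))  # 20
# A fabric with headroom: every port can be saturated.
print(max_saturated_ports(fabric_gbps=96, port_gbps=1, port_count=48))  # 48
```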

Why Switched PDUs?

We will discuss this in detail later on, but in short, when a node stops responding, we can not simply assume that it is dead. To do so would be to risk a "split-brain" condition which can lead to data divergence, data loss and data corruption.

To deal with this, we need a mechanism for putting a node that is in an unknown state into a known state, a process called "fencing". Many people who build HA platforms use the IPMI interface for this purpose, as will we. The idea here is that, when a node stops responding, the surviving node connects to the lost node's IPMI interface and forces the machine to power off. The IPMI BMC is, effectively, a little computer inside the main computer, so it will work regardless of what state the node itself is in.

Once the node has been confirmed to be off, the services that had been running on it can be restarted on the remaining good node, safe in knowing that the lost peer is not also hosting these services. In our case, these "services" are the shared storage and the virtual servers.

There is a problem with this though. Actually, two.

- The IPMI draws its power from the same power source as the server itself. If the host node loses power entirely, IPMI goes down with the host.

- The IPMI BMC has a single network interface and it is a single device.

Thus, if we relied on IPMI-based fencing alone, we'd have a single point of failure. If the surviving node can not put a lost node into a known state, it will hang, by design. The logic being that, as bad as a hung cluster is, it's better than risking data corruption. So what this means is that, with IPMI-based fencing alone, the loss of power to a single node would not be automatically recoverable.

That just will not do!

To make fencing redundant, we will use switched PDUs. Think of these as network-connected power bars.

Imagine now that one of the nodes blew itself up. The surviving node would try to connect to its IPMI interface and, of course, get no response. Then it would log into both PDUs (one behind either side of the redundant power supplies) and cut the power going to the node. By doing this, we now have a way of putting a lost node into a known state.

So now, no matter how badly things go wrong, we can always recover!
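The "try IPMI first, then fall back to the PDUs" order can be sketched as an ordered list of fence methods. This is only an illustration of the logic; the real work is done by the cluster's fence agents, and every function name below is a hypothetical stand-in.

```python
from typing import Callable, Sequence, Tuple

FenceMethod = Tuple[str, Callable[[], bool]]


def fence_node(methods: Sequence[FenceMethod]) -> str:
    """Try each fence method in order until one confirms the node is off.

    Returns the name of the method that worked. Raises RuntimeError if
    every method fails, mirroring the rule that the cluster stays blocked
    rather than guessing at the lost node's state.
    """
    for name, attempt in methods:
        if attempt():
            return name
    raise RuntimeError("all fence methods failed; refusing to recover services")


# Hypothetical stand-ins for real fence agents.
def ipmi_power_off() -> bool:
    return False  # the node lost all power, so its BMC is unreachable


def pdu_outlets_off() -> bool:
    # Both PDUs must cut power, one per redundant power supply.
    return all([True, True])


used = fence_node([("ipmi", ipmi_power_off), ("pdu", pdu_outlets_off)])
print(used)  # pdu
```

The important design point is the failure case: if no method succeeds, nothing is recovered, because an unknown node state is worse than a blocked cluster.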

Network Managed UPSes Are Worth It

We have found that a surprising number of issues that affect service availability are power related. A network-connected smart UPS allows you to monitor the power coming from the building mains. Thanks to this, we've been able to detect far more than simple "lost power" events. We've been able to detect failing transformers and regulators, and over- and under-voltage events. Catching these ahead of time not only avoids full power outages, but also protects the rest of your gear that isn't protected by UPSes.

So strictly speaking, you don't need network managed UPSes. However, we have found them worth their weight in gold and thus strongly recommend them. We will, of course, be using them in this tutorial.

Dashboard Servers

The Anvil! will be managed by AN!CDB, the Cluster Dashboard, running on a small dedicated server. This can be a virtual machine on a laptop or desktop, or a small dedicated machine. All that matters is that it can run RHEL or CentOS version 6 with a minimal desktop.

Normally, we set up a couple of ASUS EeeBox machines, for redundancy of course, hanging off the back of a monitor. Users can then connect to the dashboard using a browser from any device and easily control the servers and nodes from it. It also provides KVM-like access to the servers on the Anvil!, allowing users to work on the servers when they can't connect over the network. For this reason, you will probably want to pair up the dashboard machines with a monitor that offers a decent resolution to make it easy to see the desktop of the hosted servers.

What You Should Know Before Beginning

It is assumed that you are familiar with Linux systems administration, specifically Red Hat Enterprise Linux and its derivatives. You will need to have somewhat advanced networking experience as well. You should be comfortable working in a terminal (directly or over ssh). Familiarity with XML will help, but is not strictly required, as its use here is pretty self-evident.

If you feel a little out of depth at times, don't hesitate to set this tutorial aside. Browse over to the components you feel the need to study more, then return and continue on. Finally, and perhaps most importantly, you must have patience! If you have a manager asking you to "go live" with a cluster in a month, tell him or her that it simply won't happen. If you rush, you will skip important points and you will fail.

Patience is vastly more important than any pre-existing skill.

A Word On Complexity

Introducing the Fabimer Principle:

Clustering is not inherently hard, but it is inherently complex. Consider:

- Any given program has N bugs.

- RHCS uses; cman, corosync, dlm, fenced, rgmanager, and many more smaller apps.

- We will be adding DRBD, GFS2, clvmd, libvirtd and KVM.

- Right there, we have N^10 possible bugs. We'll call this A.

- A cluster has Y nodes.

- In our case, 2 nodes, each with 3 networks across 6 interfaces bonded into pairs.

- The network infrastructure (Switches, routers, etc). We will be using two managed switches, adding another layer of complexity.

- This gives us another Y^(2*(3*2))+2, the +2 for managed switches. We'll call this B.

- Let's add the human factor. Let's say that a person needs roughly 5 years of cluster experience to be considered proficient. For each year short of this, add an "oops" factor: with Z years of experience, this is (5-Z)^2. We'll call this C.

- So, finally, add up the complexity, using this tutorial's layout, 0-years of experience and managed switches.

- (N^10) * (Y^(2*(3*2))+2) * ((5-0)^2) == (A * B * C) == an-unknown-but-big-number.

This isn't meant to scare you away, but it is meant to be a sobering statement. Obviously, those numbers are somewhat artificial, but the point remains.
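To make the arithmetic above concrete, here is a minimal Python sketch that evaluates the same (A * B * C) expression. The function name and its parameters are hypothetical, and the value used for N is an arbitrary guess chosen only so that a number comes out; the original deliberately leaves N unknown.

```python
def complexity(bugs_per_program: int, nodes: int, years_experience: int) -> int:
    """Evaluate (N^10) * (Y^(2*(3*2))+2) * ((5-Z)^2) from the list above."""
    # A: ten programs (five RHCS daemons plus DRBD, GFS2, clvmd, libvirtd, KVM).
    a = bugs_per_program ** 10
    # B: the exponent and the +2 for managed switches are copied from the text.
    b = nodes ** (2 * (3 * 2)) + 2
    # C: the human "oops" factor.
    c = (5 - years_experience) ** 2
    return a * b * c


# N = 2 is purely illustrative; Y = 2 nodes and Z = 0 years match the text.
print(complexity(bugs_per_program=2, nodes=2, years_experience=0))
```

Even with only two bugs per program, the product is already in the hundreds of millions, which is the point the list is making.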

Any one piece is easy to understand, thus, clustering is inherently easy. However, given the large number of variables, you must really understand all the pieces and how they work together. DO NOT think that you will have this mastered and working in a month. Certainly don't try to sell clusters as a service without a lot of internal testing.

Clustering is kind of like chess. The rules are pretty straightforward, but the complexity can take some time to master.

Overview of Components

When looking at a cluster, there is a tendency to want to dive right into the configuration file. That is not very useful in clustering.

- When you look at the configuration file, it is quite short.

Clustering isn't like most applications or technologies. Most of us learn by taking something such as a configuration file, and tweaking it to see what happens. I tried that with clustering and learned only what it was like to bang my head against the wall.

- Understanding the parts and how they work together is critical.

You will find that the discussion on the components of clustering, and how those components and concepts interact, will be much longer than the initial configuration. It is true that we could talk very briefly about the actual syntax, but it would be a disservice. Please don't rush through the next section, or worse, skip it and go right to the configuration. You will waste far more time than you will save.

- Clustering is easy, but it has a complex web of inter-connectivity. You must grasp this network if you want to be an effective cluster administrator!

Component; cman

The cman portion of the cluster is the cluster manager. In the 3.0 series used in EL6, cman acts mainly as a quorum provider. That is, it adds up the votes from the cluster members and decides if there is a simple majority. If there is, the cluster is "quorate" and is allowed to provide cluster services.

The cman service will be used to start and stop all of the components needed to make the cluster operate.

Component; corosync

Corosync is the heart of the cluster. Almost all other cluster components operate through it.

In Red Hat clusters, corosync is configured via the central cluster.conf file. It can be configured directly in corosync.conf, but given that we will be building an RHCS cluster, we will only use cluster.conf. That said, almost all corosync.conf options are available in cluster.conf. This is important to note as you will see references to both configuration files when searching the Internet.

Corosync sends messages using multicast messaging by default. Recently, unicast support has been added, but due to network latency, it is only recommended for use with small clusters of two to four nodes. We will be using multicast in this tutorial.

A Little History

There were significant changes between the old RHCS version 2 and version 3, available on EL6, which we are using.

In RHCS version 2, there was a component called openais which provided totem. The OpenAIS project was designed to be the heart of the cluster and was based around the Service Availability Forum's Application Interface Specification. AIS is an open API designed to provide inter-operable high availability services.

In 2008, it was decided that the AIS specification was overkill for most clustered applications being developed in the open source community. At that point, OpenAIS was split into two projects: Corosync and OpenAIS. The former, Corosync, provides totem, cluster membership, messaging, and basic APIs for use by clustered applications, while the OpenAIS project became an optional add-on to corosync for users who want the full AIS API.

You will see a lot of references to OpenAIS while searching the web for information on clustering. Understanding its evolution will hopefully help you avoid confusion.

The Future of Corosync

In EL6, corosync is version 1.4. Upstream, however, it's passed version 2. One of the major changes in the 2+ version is that corosync becomes a quorum provider, helping to remove the need for cman. If you experiment with clustering on Fedora, for example, you will find that cman is gone entirely.

Concept; quorum

Quorum is defined as the minimum set of hosts required in order to provide clustered services and is used to prevent split-brain situations.

The quorum algorithm used by the RHCS cluster is called "simple majority quorum", which means that more than half of the hosts must be online and communicating in order to provide service. While simple majority quorum is a very common quorum algorithm, other quorum algorithms exist (grid quorum, YKD Dynamic Linear Voting, etc.).

The idea behind quorum is that, when a cluster splits into two or more partitions, whichever group of machines has quorum can safely start clustered services knowing that no other lost nodes will try to do the same.

Take this scenario;

- You have a cluster of four nodes, each with one vote.

- The cluster's expected_votes is 4. A clear majority, in this case, is 3 because (4/2)+1, rounded down, is 3.

- Now imagine that there is a failure in the network equipment and one of the nodes disconnects from the rest of the cluster.

- You now have two partitions; One partition contains three machines and the other partition has one.

- The three machines will have quorum, and the other machine will lose quorum.

- The partition with quorum will reconfigure and continue to provide cluster services.

- The partition without quorum will withdraw from the cluster and shut down all cluster services.

When the cluster reconfigures and the partition wins quorum, it will fence the node(s) in the partition without quorum. Once the fencing has been confirmed successful, the partition with quorum will begin accessing clustered resources, like shared filesystems.

This also helps explain why an even 50% is not enough to have quorum, a common question for people new to clustering. Using the above scenario, imagine if the split were 2 and 2 nodes. Because neither partition can be sure what the other would do, neither can safely proceed. If we allowed an even 50% to have quorum, both partitions might try to take over the clustered services and disaster would soon follow.

There is one, and only one, exception to this rule.

In the case of a two-node cluster, as we will be building here, any failure results in a 50/50 split. If we enforced quorum in a two-node cluster, there would never be high availability because any failure would cause both nodes to withdraw. The risk with this exception is that we now place the entire safety of the cluster on fencing, a concept we will cover in a second. Fencing is a second line of defense and something we are loath to rely on alone.
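The quorum rule and its two-node exception can be sketched in a few lines of Python. This is only an illustration of the arithmetic described above, not how cman implements it; the function names and the `two_node` parameter are hypothetical names chosen for this sketch.

```python
def votes_needed(expected_votes: int) -> int:
    """Simple majority: (expected_votes / 2) + 1, rounded down."""
    return expected_votes // 2 + 1


def is_quorate(votes_present: int, expected_votes: int, two_node: bool = False) -> bool:
    """Return True if a partition holding votes_present may provide services.

    two_node models the single exception described above: quorum is not
    enforced, so any surviving node may proceed and safety rests on fencing.
    """
    if two_node:
        return votes_present >= 1
    return votes_present >= votes_needed(expected_votes)


# Four-node cluster, one vote each.
print(is_quorate(3, 4))                 # the three-node side of a 3/1 split
print(is_quorate(1, 4))                 # the isolated node
print(is_quorate(2, 4))                 # either half of a 2/2 split
print(is_quorate(1, 2, two_node=True))  # survivor in a two-node cluster
```

Note that an even 2/2 split leaves neither side quorate, which is exactly why the two-node case needs its own exception.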

Even in a two-node cluster though, proper quorum can be maintained by using a quorum disk, called a qdisk. Unfortunately, qdisk on a DRBD resource comes with its own problems, so we will not be able to use it here.

Concept; Virtual Synchrony

Many cluster operations, like distributed locking and so on, have to occur in the same order across all nodes. This concept is called "virtual synchrony".

This is provided by corosync using "closed process groups", CPG. A closed process group is simply a private group of processes in a cluster. Within this closed group, all messages between members are ordered. Delivery, however, is not guaranteed. If a member misses messages, it is up to the member's application to decide what action to take.

Let's look at two scenarios showing how locks are handled using CPG;

- The cluster starts up cleanly with two members.

- Both members are able to start service:foo.

- Both want to start it, but need a lock from DLM to do so.

- The an-c05n01 member has its totem token, and sends its request for the lock.

- DLM issues a lock for that service to an-c05n01.

- The an-c05n02 member requests a lock for the same service.

- DLM rejects the lock request.

- The an-c05n01 member successfully starts service:foo and announces this to the CPG members.

- The an-c05n02 sees that service:foo is now running on an-c05n01 and no longer tries to start the service.

- The two members want to write to a common area of the /shared GFS2 partition.

- The an-c05n02 sends a request for a DLM lock against the FS, gets it.

- The an-c05n01 sends a request for the same lock, but DLM sees that a lock is pending and rejects the request.

- The an-c05n02 member finishes altering the file system, announces the changes over CPG and releases the lock.

- The an-c05n01 member updates its view of the filesystem, requests a lock, receives it and proceeds to update the filesystem.

- It completes the changes, announces the changes over CPG and releases the lock.

Messages can only be sent to the members of the CPG while the node has a totem token from corosync.
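The two scenarios above follow the same grant, reject and release pattern. The toy model below walks through both of them. It is a single-process sketch with a hypothetical `ToyLockManager` class; it does not use or imitate the real DLM API, and it ignores message ordering and the totem token entirely.

```python
class ToyLockManager:
    """Grants each named lock to at most one holder at a time."""

    def __init__(self):
        self._holders = {}  # lock name -> node currently holding it

    def request(self, lock: str, node: str) -> bool:
        """Grant the lock if it is free; reject the request otherwise."""
        if lock in self._holders:
            return False
        self._holders[lock] = node
        return True

    def release(self, lock: str, node: str) -> None:
        """Release the lock, but only if this node is the holder."""
        if self._holders.get(lock) == node:
            del self._holders[lock]


dlm = ToyLockManager()

# Scenario 1: both members want to start service:foo.
assert dlm.request("service:foo", "an-c05n01")       # granted
assert not dlm.request("service:foo", "an-c05n02")   # rejected

# Scenario 2: both members want to write to /shared.
assert dlm.request("/shared", "an-c05n02")           # granted
assert not dlm.request("/shared", "an-c05n01")       # rejected while held
dlm.release("/shared", "an-c05n02")
assert dlm.request("/shared", "an-c05n01")           # granted after release
```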

Concept; Fencing

| Warning: DO NOT BUILD A CLUSTER WITHOUT PROPER, WORKING AND TESTED FENCING. |

Fencing is an absolutely critical part of clustering. Without fully working fence devices, your cluster will fail.

Sorry, I promise that this will be the only time that I speak so strongly. Fencing really is critical, and explaining the need for fencing is nearly a weekly event.

So then, let's discuss fencing.

When a node stops responding, an internal timeout and counter start ticking away. During this time, no DLM locks are allowed to be issued. Anything using DLM, including rgmanager, clvmd and gfs2, is effectively hung. The hung node is detected using a totem token timeout. That is, if a token is not received from a node within a period of time, it is considered lost and a new token is sent. After a certain number of lost tokens, the cluster declares the node dead. The remaining nodes reconfigure into a new cluster and, if they have quorum (or if quorum is ignored), a fence call against the silent node is made.

The fence daemon will look at the cluster configuration and get the fence devices configured for the dead node. Then, one at a time and in the order that they appear in the configuration, the fence daemon will call those fence devices, via their fence agents, passing to the fence agent any configured arguments like username, password, port number and so on. If the first fence agent returns a failure, the next fence agent will be called. If the second fails, the third will be called, then the fourth and so on. Once the last (or perhaps only) fence device fails, the fence daemon will start over from the top of the list. It will do this indefinitely until one of the fence devices succeeds.
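The timeout-and-counter logic can be sketched as a small Python function. It uses the default values quoted in this section (a timeout of roughly 238ms and a limit of 4 errors); the function and constant names are hypothetical, and real corosync timing involves more than this simple model.

```python
TOKEN_TIMEOUT_MS = 238   # default timeout quoted in this section
MAX_LOST_TOKENS = 4      # default error limit quoted in this section


def time_to_declare_dead(responses):
    """Walk a sequence of per-timeout observations for one node.

    responses is an iterable of booleans, one per timeout period: True if
    the node answered in time, False if the token was lost. Returns the
    elapsed milliseconds at which the node is declared dead, or None if
    the error counter never reaches the limit.
    """
    lost = 0
    elapsed_ms = 0
    for answered in responses:
        elapsed_ms += TOKEN_TIMEOUT_MS
        if answered:
            lost = 0            # node recovered in time; reset the counter
            continue
        lost += 1
        if lost >= MAX_LOST_TOKENS:
            return elapsed_ms
    return None


# Four consecutive losses: dead after 4 * 238 ms, a little under a second.
print(time_to_declare_dead([False, False, False, False]))
# The node answers before the limit is reached, so the counter resets.
print(time_to_declare_dead([False, False, True, False]))
```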

Here's the flow, in point form:

- The totem token moves around the cluster members. As each member gets the token, it sends sequenced messages to the CPG members.

- The token is passed from one node to the next, in order and continuously during normal operation.

- Suddenly, one node stops responding.

- A timeout starts (~238ms by default), and each time the timeout is hit, an error counter increments and a replacement token is created.

- The silent node responds before the failure counter reaches the limit.

- The failure counter is reset to 0

- The cluster operates normally again.

- Again, one node stops responding.

- Again, the timeout begins. As each totem token times out, a new packet is sent and the error count increments.

- The error count exceeds the limit (4 errors is the default); roughly one second has passed (238ms * 4 plus some overhead).

- The node is declared dead.

- The cluster checks which members it still has, and if that provides enough votes for quorum.

- If there are too few votes for quorum, the cluster software freezes and the node(s) withdraw from the cluster.

- If there are enough votes for quorum, the silent node is declared dead.

- corosync calls fenced, telling it to fence the node.

- The fenced daemon notifies DLM and locks are blocked.

- Which fence device(s) to use, that is, what fence_agent to call and what arguments to pass, is gathered.

- For each configured fence device:

- The agent is called and fenced waits for the fence_agent to exit.

- The fence_agent's exit code is examined. If it's a success, recovery starts. If it failed, the next configured fence agent is called.

- If all (or the only) configured fence fails, fenced will start over.

- fenced will wait and loop forever until a fence agent succeeds. During this time, the cluster is effectively hung.

- Once a fence_agent succeeds, fenced notifies DLM and lost locks are recovered.

- GFS2 partitions recover using their journal.

- Lost cluster resources are recovered as per rgmanager's configuration (including file system recovery as needed).

- Normal cluster operation is restored, minus the lost node.

This skipped a few key things, but the general flow of logic should be there.

This is why fencing is so important. Without a properly configured and tested fence device or devices, the cluster will never successfully fence and the cluster will remain hung until a human can intervene.
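The ordered fallback and retry behaviour of the fence daemon can be sketched as follows. This is a simplified model with hypothetical names: real fence agents are external programs judged by their exit code, whereas here each one is a Python callable that returns True on success. The `max_passes` argument exists only so the sketch can terminate; as described above, the real daemon keeps looping until an agent succeeds.

```python
import itertools


def fence_node(node, fence_methods, max_passes=None):
    """Try each fence method in configured order until one succeeds.

    fence_methods is an ordered list of (name, callable) pairs. Each
    callable takes the node name and returns True on success. Returns the
    name of the method that succeeded, or None if max_passes ran out.
    """
    passes = itertools.count(1) if max_passes is None else range(1, max_passes + 1)
    for _ in passes:
        for name, agent in fence_methods:
            if agent(node):
                return name      # fence confirmed; recovery can begin
    return None                  # only reachable when max_passes is set


# Hypothetical agents: the node lost all power, so IPMI gets no response
# and the PDU method is what finally confirms the fence.
methods = [
    ("ipmi", lambda node: False),
    ("pdu", lambda node: True),
]
print(fence_node("an-c05n02", methods))
```

If every method keeps failing and `max_passes` is left unset, the call never returns, which mirrors the hung cluster described above.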

Is "Fencing" the same as STONITH?

Yes.

In the old days, there were two distinct open-source HA clustering stacks. The Linux-HA project used the term "STONITH", an acronym for "Shoot The Other Node In The Head", for fencing. Red Hat's cluster stack used the term "fencing" for the same concept.

We prefer the term "fencing" because the fundamental goal is to put the target node into a state where it cannot affect cluster resources or provide clustered services. This can be accomplished by powering it off, called "power fencing", or by disconnecting it from SAN storage and/or network, a process called "fabric fencing".

The term "STONITH", based on its acronym, implies power fencing. This is not a big deal, but it is the reason this tutorial sticks with the term "fencing".

Component; totem

The totem protocol defines message passing within the cluster and it is used by corosync. A token is passed around all the nodes in the cluster, and nodes can only send messages while they have the token. A node will keep its messages in memory until it gets the token back with no "not ack" messages. This way, if a node missed a message, it can request it be resent when it gets its token. If a node isn't up, it will simply miss the messages.
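The rule that a node may only send while it holds the token is what gives every member the same message order. The toy simulation below shows just that one property. The function name is hypothetical, and the sketch leaves out acknowledgements, retransmission and token loss.

```python
from collections import deque


def run_token_ring(nodes, outboxes, rotations=1):
    """Pass a token around nodes; only the current holder may send.

    outboxes maps a node name to a list of messages waiting to be sent
    (it is emptied as messages go out). Returns (sender, message) pairs
    in the single, cluster-wide order in which they were sent.
    """
    ring = deque(nodes)
    delivered = []
    for _ in range(rotations * len(nodes)):
        holder = ring[0]                      # this node holds the token
        for message in outboxes.get(holder, []):
            delivered.append((holder, message))
        outboxes[holder] = []                 # everything queued was sent
        ring.rotate(-1)                       # hand the token to the next node
    return delivered


order = run_token_ring(
    ["an-c05n01", "an-c05n02"],
    {"an-c05n02": ["lock /shared"], "an-c05n01": ["start service:foo"]},
)
print(order)
```

The order of the result depends on the ring order, not on the order in which the messages were queued.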

The totem protocol supports something called 'rrp', Redundant Ring Protocol. Through rrp, you can add a second backup ring on a separate network to take over in the event of a failure in the first ring. In RHCS, these rings are known as "ring 0" and "ring 1". The RRP is being re-introduced in RHCS version 3. Its use is experimental and should only be used with plenty of testing.

Component; rgmanager

When the cluster membership changes, corosync tells the rgmanager that it needs to recheck its services. It will examine what changed and then will start, stop, migrate or recover cluster resources as needed.

Within rgmanager, one or more resources are brought together as a service. This service is then optionally assigned to a failover domain, a subset of nodes that can have preferential ordering.

The rgmanager daemon runs separately from the cluster manager, cman. This means that, to fully start the cluster, we need to start both cman and then rgmanager.
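The idea of a failover domain with preferential ordering can be illustrated with a short sketch. The function below is hypothetical and models only the ordering; it is not rgmanager's actual placement logic, which has more options than are shown here.

```python
def pick_node(failover_domain, online_nodes):
    """Return the most-preferred online member of an ordered failover domain.

    failover_domain is a list of node names, most preferred first.
    Returns None when no member of the domain is online.
    """
    for node in failover_domain:
        if node in online_nodes:
            return node
    return None


domain = ["an-c05n01", "an-c05n02"]
print(pick_node(domain, {"an-c05n01", "an-c05n02"}))  # preferred node is up
print(pick_node(domain, {"an-c05n02"}))               # falls back to node 2
```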

What about Pacemaker?

Pacemaker is also a resource manager, like rgmanager. You cannot use both in the same cluster.

Back prior to 2008, there were two distinct open-source cluster projects;

- Red Hat's Cluster Service

- Linux-HA's Heartbeat

Pacemaker was born out of the Linux-HA project as an advanced resource manager that could use either heartbeat or openais for cluster membership and communication. Unlike RHCS and heartbeat, its sole focus was resource management.

In 2008, plans were made to begin the slow process of merging the two independent stacks into one. As mentioned in the corosync overview, it replaced openais and became the default cluster membership and communication layer for both RHCS and Pacemaker. Development of heartbeat was ended, though Linbit continues to maintain the heartbeat code to this day.

The fence and resource agents, software that acts as a glue between the cluster and the devices and resource they manage, were merged next. You can now use the same set of agents on both pacemaker and RHCS.

Red Hat introduced pacemaker as "Tech Preview" in RHEL 6.0. It has been available beside RHCS ever since, though support is not offered yet*.

Red Hat has a strict policy of not saying what will happen in the future. That said, the speculation is that Pacemaker will become supported soon and will replace rgmanager entirely in RHEL 7, given that cman and rgmanager no longer exist upstream in Fedora.

So why don't we use pacemaker here?

We believe that, no matter how promising software looks, stability is king. Pacemaker on other distributions has been stable and supported for a long time. However, on RHEL, it's a recent addition and the developers have been doing a tremendous amount of work on pacemaker and associated tools. For this reason, we feel that on RHEL 6, pacemaker is too much of a moving target at this time. That said, we do intend to switch to pacemaker some time in the next year or two, depending on how the Red Hat stack evolves.

Component; qdisk

| Note: qdisk does not work reliably on a DRBD resource, so we will not be using it in this tutorial. |

A quorum disk, known as a qdisk, is a small partition on SAN storage used to enhance quorum. It generally carries enough votes to allow even a single node to take quorum during a cluster partition. It does this by using configured heuristics, that is, custom tests, to decide which node or partition is best suited for providing clustered services during a cluster reconfiguration. These heuristics can be simple, like testing which partition has access to a given router, or they can be as complex as the administrator wishes using custom scripts.

Though we won't be using it here, it is well worth knowing about when you move to a cluster with SAN storage.

Component; DRBD

DRBD, the Distributed Replicated Block Device, is a technology that takes raw storage from two nodes and keeps their data synchronized in real time. It is sometimes described as "network RAID Level 1", and that is conceptually accurate. In this tutorial's cluster, DRBD will be used to provide the back-end storage as a cost-effective alternative to a traditional SAN device.

DRBD is, fundamentally, a raw block device. If you've ever used mdadm to create a software RAID array, then you will be familiar with this.

Think of it this way;

With traditional software raid, you would take;

- /dev/sda5 + /dev/sdb5 -> /dev/md0

With DRBD, you have this;

- node1:/dev/sda5 + node2:/dev/sda5 -> both:/dev/drbd0

In both cases, as soon as you create the new md0 or drbd0 device, you pretend like the member devices no longer exist. You format a filesystem onto /dev/md0, use /dev/drbd0 as an LVM physical volume, and so on.

The main difference with DRBD is that the /dev/drbd0 will always be the same on both nodes. If you write something to node 1, it's instantly available on node 2, and vice versa. Of course, this means that whatever you put on top of DRBD has to be "cluster aware". That is to say, the program or file system using the new /dev/drbd0 device has to understand that the contents of the disk might change because of another node.

Component; Clustered LVM

With DRBD providing the raw storage for the cluster, we must next consider partitions. This is where Clustered LVM, known as CLVM, comes into play.

CLVM is ideal in that, by using DLM, the distributed lock manager, it won't allow access to cluster members outside of corosync's closed process group, which, in turn, requires quorum.

It is also ideal because it can take one or more raw devices, known as "physical volumes", or simply as PVs, and combine their raw space into one or more "volume groups", known as VGs. These volume groups then act just like a typical hard drive and can be "partitioned" into one or more "logical volumes", known as LVs. These LVs are where KVM's virtual machine guests will exist and where we will create our GFS2 clustered file system.

LVM is particularly attractive because of how flexible it is. We can easily add new physical volumes later, and then grow an existing volume group to use the new space. This new space can then be given to existing logical volumes, or entirely new logical volumes can be created. This can all be done while the cluster is online offering an upgrade path with no down time.

Component; GFS2

With DRBD providing the cluster's raw storage space, and Clustered LVM providing the logical partitions, we can now look at the clustered file system. This is the role of the Global File System version 2, known simply as GFS2.

It works much like a standard filesystem, with user-land tools like mkfs.gfs2, fsck.gfs2 and so on. The major difference is that it and clvmd use the cluster's distributed locking mechanism provided by the dlm_controld daemon. Once formatted, the GFS2-formatted partition can be mounted and used by any node in the cluster's closed process group. All nodes can then safely read from and write to the data on the partition simultaneously.

| Note: GFS2 is only supported when run on top of Clustered LVM LVs. This is because, in certain failure states, gfs2_controld will call dmsetup to disconnect the GFS2 partition from its storage. |

Component; DLM

One of the major roles of a cluster is to provide distributed locking for clustered storage and resource management.

Whenever a resource, GFS2 filesystem or clustered LVM LV needs a lock, it sends a request to dlm_controld, which runs in userspace. This communicates with DLM in the kernel. If the lockspace does not yet exist, DLM will create it and then give the lock to the requester. Should a subsequent lock request come in for the same lockspace, it will be rejected. Once the application using the lock is finished with it, it will release the lock. After this, another node may request and receive a lock for the lockspace.

If a node fails, fenced will alert dlm_controld that a fence is pending and new lock requests will block. After a successful fence, fenced will alert DLM that the node is gone and any locks the victim node held are released. At this time, other nodes may request a lock on the lockspaces the lost node held and can perform recovery, like replaying a GFS2 filesystem journal, prior to resuming normal operation.

Note that DLM locks are not used for actually locking the file system. That job is still handled by plock() calls (POSIX locks).

Component; KVM

Two of the most popular open-source virtualization platforms available in the Linux world today are Xen and KVM. The former is maintained by Citrix and the latter by Red Hat. It would be difficult to say which is "better", as they're both very good. Xen can be argued to be more mature, whereas KVM is the "official" solution supported by Red Hat in EL6.

We will be using the KVM hypervisor, within which our highly-available virtual machine guests will reside. KVM is generally described as a type-1 hypervisor: it is part of the Linux kernel, so the host operating system runs directly on the bare hardware. Xen is also a type-1 hypervisor, but one that runs beneath every operating system, so even the installed OS is itself just another virtual machine.

Node Installation

This section is going to be intentionally vague, as I don't want to influence too heavily what hardware you buy or how you install your operating systems. However, we need a baseline, a minimum system requirement of sorts. Also, I will refer fairly frequently to my setup, so I will share with you the details of what I bought. Please don't take this as an endorsement though... Every cluster will have its own needs, and you should plan and purchase for your particular needs.

In my case, my goal was to have a low-power consumption setup and I knew that I would never put my cluster into production as it's strictly a research and design cluster. As such, I can afford to be quite modest.

Node Host Names

Before we begin, we need to decide what naming convention and IP ranges to use for our nodes and their networks.

The IP addresses and subnets you decide to use are completely up to you. The host names though need to follow a certain standard, if you wish to use the AN!CDB dashboard, as we will do here. Specifically, the node names on your nodes must end in n01 for node #1 and n02 for node #2. The reason for this will be discussed later.

The node host name convention that we've created is this:

- xx-cYYn0{1,2}

- xx is a two or three letter prefix used to denote the company, group or person who owns the Anvil!

- cYY is a simple zero-padded sequence number.

- n0{1,2} indicates the node in the cluster.

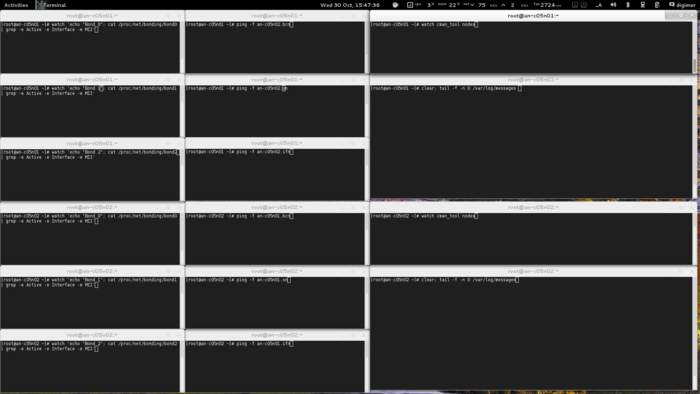

In this tutorial, the Anvil! is owned and operated by "Alteeve's Niche!", so the prefix "an" is used. This is our fifth cluster, so the cluster name is an-cluster-05 and the host name's cluster number is c05. Thus, node #1 is named an-c05n01 and node #2 is named an-c05n02.

As we have three distinct networks, we have three network-specific suffixes we apply to these host names which we will map to subnets in /etc/hosts later.

- <hostname>.bcn; Back-Channel Network host name.

- <hostname>.sn; Storage Network host name.

- <hostname>.ifn; Internet-Facing Network host name.

Again, what you use is entirely up to you. Just remember that the node's host name must end in n01 and n02 for AN!CDB to work.
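As a quick way to check names against this convention, here is a minimal Python sketch. The function names and the regular expression are assumptions made for illustration (in particular, the lowercase-only prefix); they are not part of AN!CDB.

```python
import re

NETWORK_SUFFIXES = ("bcn", "sn", "ifn")
# Assumed pattern: two or three lowercase letters, cYY, then n01 or n02.
NODE_NAME = re.compile(r"^[a-z]{2,3}-c\d{2}n0[12]$")


def node_name(prefix: str, cluster: int, node: int) -> str:
    """Build a node host name such as an-c05n01."""
    if node not in (1, 2):
        raise ValueError("node must be 1 or 2")
    name = f"{prefix}-c{cluster:02d}n0{node}"
    if not NODE_NAME.match(name):
        raise ValueError(f"{name!r} does not follow the xx-cYYn0{{1,2}} convention")
    return name


def network_names(host: str) -> list:
    """Return the per-network names that will later be mapped in /etc/hosts."""
    return [f"{host}.{suffix}" for suffix in NETWORK_SUFFIXES]


host = node_name("an", 5, 1)
print(host)
print(network_names(host))
```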

Foundation Pack Host Names

The foundation pack devices (switches, PDUs and UPSes) can support multiple Anvil! platforms. Likewise, the dashboard servers support multiple Anvil!s as well. For this reason, the cYY portion of the host name does not make sense when choosing host names for these devices.

As always, you are free to choose host names that make sense to you. For this tutorial, the following host names are used;

| Device | Host name | Examples | Note |

|---|---|---|---|

| Network Switches | xx-sYY | | The xx prefix is the owner's prefix and YY is a simple sequence number. |

| Switched PDUs | xx-pYY | | The xx prefix is the owner's prefix and YY is a simple sequence number. |

| Network Managed UPSes | xx-uYY | | The xx prefix is the owner's prefix and YY is a simple sequence number. |

| Dashboard Servers | xx-mYY | | The xx prefix is the owner's prefix and YY is a simple sequence number. Note that the m letter was chosen for historical reasons. The dashboard used to be called "monitoring servers". For consistency with existing dashboards, m has remained. Note also that the dashboards will connect to both the BCN and IFN, so like the nodes, host names with the .bcn and .ifn suffixes will be used. |

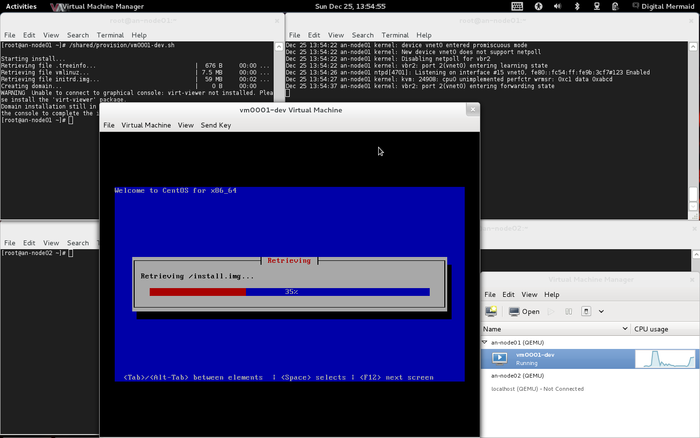

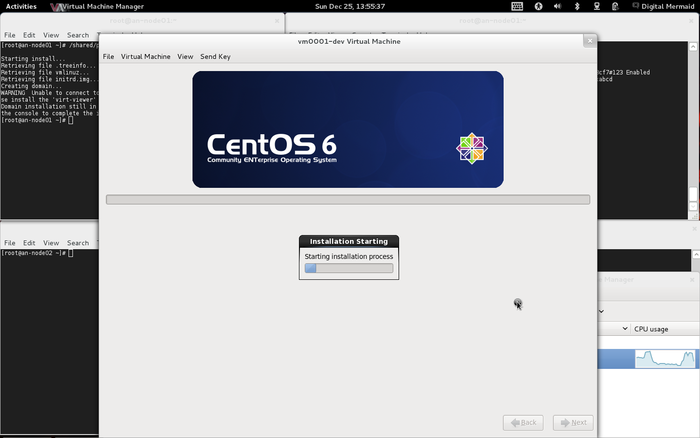

OS Installation

| Warning: EL6.1 shipped with a version of corosync that had a token retransmit bug. On slower systems, there would be a form of race condition which would cause totem tokens to be retransmitted and cause significant performance problems. This has been resolved in EL6.2 so please be sure to upgrade. |

Beyond being based on RHEL 6, there are no requirements for how the operating system is installed. This tutorial is written using "minimal" installs, and as such, installation instructions will be provided that will install all needed packages if they aren't already installed on your nodes.

A few notes about the installation used for this tutorial;

- RHCS stable 3 supports selinux, but it is disabled in this tutorial.

- Both iptables and ip6tables firewalls are disabled.

Obviously, this significantly reduces the security of your nodes. For learning, which is the goal here, this helps keep a focus on the clustering and simplifies debugging when things go wrong. In production clusters though, these steps are ill advised. It is strongly suggested that you first enable the firewall and then, when that is working, enable selinux. Leaving selinux for last is intentional, as it generally takes the most work to get right.

Network Security

When building production clusters, you will want to consider two options with regard to network security.

First, the interfaces connected to an untrusted network, like the Internet, should not have an IP address, though the interfaces themselves will need to be up so that virtual machines can route through them to the outside world. Alternatively, anything inbound from the virtual machines or inbound from the untrusted network should be DROPped by the firewall.

Second, if you cannot run the cluster communications or storage traffic on dedicated network connections over isolated subnets, you will need to configure the firewall to block everything except the ports needed by storage and cluster traffic. The default ports are below.

| Component | Protocol | Port | Note |

|---|---|---|---|

| dlm | TCP | 21064 | |

| drbd | TCP | 7788+ | Each DRBD resource will use an additional port, generally counting up (ie: r0 will use 7788, r1 will use 7789, r2 will use 7790 and so on). |

| luci | TCP | 8084 | Optional web-based configuration tool, not used in this tutorial. |

| modclusterd | TCP | 16851 | |

| ricci | TCP | 11111 | |

| totem | UDP/multicast | 5404, 5405 | Uses a multicast group for cluster communications |

| Note: As of EL6.2, you can now use unicast for totem communication instead of multicast. This is not advised, and should only be used for clusters of two or three nodes on networks where unresolvable multicast issues exist. If using gfs2, as we do here, using unicast for totem is strongly discouraged. |
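The port table above, together with the "counting up" rule for DRBD resources, can be captured in a small Python sketch that lists what a firewall would need to permit. The names used here are hypothetical, and the DRBD rule assumes resources are named r0, r1, r2 and so on, starting at port 7788 as in the table.

```python
DRBD_BASE_PORT = 7788

# Default ports from the table above: component -> (protocol, ports).
CLUSTER_PORTS = {
    "dlm": ("tcp", [21064]),
    "luci": ("tcp", [8084]),
    "modclusterd": ("tcp", [16851]),
    "ricci": ("tcp", [11111]),
    "totem": ("udp", [5404, 5405]),
}


def drbd_port(resource: str) -> int:
    """Return the conventional TCP port for a DRBD resource named rN."""
    if not (resource.startswith("r") and resource[1:].isdigit()):
        raise ValueError(f"expected a name like 'r0', got {resource!r}")
    return DRBD_BASE_PORT + int(resource[1:])


def ports_to_allow(drbd_resources):
    """List (component, protocol, port) tuples to permit through the firewall."""
    rules = [
        (name, proto, port)
        for name, (proto, ports) in sorted(CLUSTER_PORTS.items())
        for port in ports
    ]
    rules += [("drbd", "tcp", drbd_port(r)) for r in drbd_resources]
    return rules


for rule in ports_to_allow(["r0", "r1"]):
    print(rule)
```

Everything not in the resulting list would be blocked on a network that is shared with other traffic.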

Network

Before we begin, let's take a look at a block diagram of what we're going to build. This will help when trying to see what we'll be talking about.

A Map!

Nodes \_/

____________________________________________________________________________ _____|____ ____________________________________________________________________________

| an-c05n01.alteeve.ca | /--------{_Internet_}---------\ | an-c05n02.alteeve.ca |

| Network: | | | | Network: |

| _________________ _____________________| | _________________________ | |_____________________ _________________ |

| Servers: | vbr2 |---| bond2 | | | an-s01 Switch 1 | | | bond2 |---| vbr2 | Servers: |

| _______________________ | 10.255.50.1 | | ____________________| | |____ Internet-Facing ____| | |____________________ | | 10.255.50.2 | ......................... |

| | [ vm01-win2008 ] | |_________________| || eth2 =----=_01_] Network [_02_=----= eth2 || |_________________| : [ vm01-win2008 ] : |

| | ____________________| | : | | : : | | || 00:1B:21:81:C3:34 || | |____________________[_24_=-/ || 00:1B:21:81:C2:EA || : : | | : : | : :____________________ : |

| | | NIC 1 =----/ : | | : : | | ||___________________|| | | an-s02 Switch 2 | ||___________________|| : : | | : : | :----= NIC 1 | : |

| | | 10.255.1.1 || : | | : : | | | ____________________| | |____ ____| |____________________ | : : | | : : | :| 10.255.1.1 | : |

| | | ..:..:..:..:..:.. || : | | : : | | || eth5 =----=_01_] VLAN ID 101 [_02_=----= eth5 || : : | | : : | :| ..:..:..:..:..:.. | : |

| | |___________________|| : | | : : | | || A0:36:9F:02:E0:05 || | |____________________[_24_=-\ || A0:36:9F:07:D6:2F || : : | | : : | :|___________________| : |

| | ____ | : | | : : | | ||___________________|| | | ||___________________|| : : | | : : | : ____ : |

| /--=--[_c:_] | : | | : : | | |_____________________| \-----------------------------/ |_____________________| : : | | : : | : [_c:_]--=--\ |

| | |_______________________| : | | : : | | _____________________| |_____________________ : : | | : : | :.......................: | |

| | : | | : : | | | bond1 | _________________________ | bond1 | : : | | : : | | |

| | ......................... : | | : : | | | 10.10.50.1 | | an-s01 Switch 1 | | 10.10.50.2 | : : | | : : | _______________________ | |

| | : [ vm02-win2012 ] : : | | : : | | | ____________________| |____ Storage ____| |____________________ | : : | | : : | | [ vm02-win2012 ] | | |

| | : ____________________: : | | : : | | || eth1 =----=_09_] Network [_10_=----= eth1 || : : | | : : | |____________________ | | |

| | : | NIC 1 =---: | | : : | | || 00:19:99:9C:9B:9F || |_________________________| || 00:19:99:9C:A0:6D || : : | | : : \---= NIC 1 | | | |

| | : | 10.255.1.2 |: | | : : | | ||___________________|| | an-s02 Switch 2 | ||___________________|| : : | | : : || 10.255.1.2 | | | |

| | : | ..:..:..:..:..:.. |: | | : : | | | ____________________| |____ ____| |____________________ | : : | | : : || ..:..:..:..:..:.. | | | |

| | : |___________________|: | | : : | | || eth4 =----=_09_] VLAN ID 100 [_10_=----= eth4 || : : | | : : ||___________________| | | |

| | : ____ : | | : : | | || A0:36:9F:02:E0:04 || |_________________________| || A0:36:9F:07:D6:2E || : : | | : : | ____ | | |

| | /--=--[_c:_] : | | : : | | ||___________________|| ||___________________|| : : | | : : | [_c:_]--=--\ | |

| | | :.......................: | | : : | | /--|_____________________| |_____________________|--\ : : | | : : |_______________________| | | |

| | | | | : : | | | _____________________| |_____________________ | : : | | : : | | |

| | | _______________________ | | : : | | | | bond0 | _________________________ | bond0 | | : : | | : : ......................... | | |

| | | | [ vm03-win7 ] | | | : : | | | | 10.20.50.1 | | an-s01 Switch 1 | | 10.20.50.2 | | : : | | : : : [ vm03-win7 ] : | | |

| | | | ____________________| | | : : | | | | ____________________| |____ Back-Channel ____| |____________________ | | : : | | : : :____________________ : | | |

| | | | | NIC 1 =-----/ | : : | | | || eth0 =----=_13_] Network [_14_=----= eth0 || | : : | | : :-----= NIC 1 | : | | |

| | | | | 10.255.1.3 || | : : | | | || 00:19:99:9C:9B:9E || |_________________________| || 00:19:99:9C:A0:6C || | : : | | : :| 10.255.1.3 | : | | |

| | | | | ..:..:..:..:..:.. || | : : | | | ||___________________|| | an-s02 Switch 2 | ||___________________|| | : : | | : :| ..:..:..:..:..:.. | : | | |

| | | | |___________________|| | : : | | | || eth3 =----=_13_] VLAN ID 1 [_14_=----= eth3 || | : : | | : :|___________________| : | | |

| | | | ____ | | : : | | | || 00:1B:21:81:C3:35 || |_________________________| || 00:1B:21:81:C2:EB || | : : | | : : ____ : | | |

| +--|-=--[_c:_] | | : : | | | ||___________________|| ||___________________|| | : : | | : : [_c:_]--=--|--+ |

| | | |_______________________| | : : | | | |_____________________| |_____________________| | : : | | : :.......................: | | |

| | | | : : | | | | | | : : | | : | | |

| | | _______________________ | : : | | | | | | : : | | : ......................... | | |

| | | | [ vm04-win8 ] | | : : | | \ | | / : : | | : : [ vm04-win8 ] : | | |

| | | | ____________________| | : : | | \ | | / : : | | : :____________________ : | | |

| | | | | NIC 1 =-------/ : : | | | | | | : : | | :-------= NIC 1 | : | | |

| | | | | 10.255.1.4 || : : | | | | | | : : | | :| 10.255.1.4 | : | | |

| | | | | ..:..:..:..:..:.. || : : | | | | | | : : | | :| ..:..:..:..:..:.. | : | | |

| | | | |___________________|| : : | | | | | | : : | | :|___________________| : | | |

| | | | ____ | : : | | | | | | : : | | : ____ : | | |

| +--|-=--[_c:_] | : : | | | | | | : : | | : [_c:_]--=--|--+ |

| | | |_______________________| : : | | | | | | : : | | :.......................: | | |

| | | : : | | | | | | : : | | | | |

| | | ......................... : : | | | | | | : : | | _______________________ | | |

| | | : [ vm05-freebsd9 ] : : : | | | | | | : : | | | [ vm05-freebsd9 ] | | | |

| | | : ____________________: : : | | | | | | : : | | |____________________ | | | |

| | | : | em0 =---------: : | | | | | | : : | \---------= em0 | | | | |

| | | : | 10.255.1.5 |: : | | | | | | : : | || 10.255.1.5 | | | | |

| | | : | ..:..:..:..:..:.. |: : | | | | | | : : | || ..:..:..:..:..:.. | | | | |

| | | : |___________________|: : | | | | | | : : | ||___________________| | | | |

| | | : ______ : : | | | | | | : : | | ______ | | | |

| | +--=--[_ada0_] : : | | | | | | : : | | [_ada0_]--=--+ | |

| | | :.......................: : | | | | | | : : | |_______________________| | | |

| | | : | | | | | | : : | | | |

| | | ......................... : | | | | | | : : | _______________________ | | |

| | | : [ vm06-solaris11 ] : : | | | | | | : : | | [ vm06-solaris11 ] | | | |

| | | : ____________________: : | | | | | | : : | |____________________ | | | |

| | | : | net0 =-----------: | | | | | | : : \-----------= net0 | | | | |

| | | : | 10.255.1.6 |: | | | | | | : : || 10.255.1.6 | | | | |

| | | : | ..:..:..:..:..:.. |: | | | | | | : : || ..:..:..:..:..:.. | | | | |

| | | : |___________________|: | | | | | | : : ||___________________| | | | |

| | | : ______ : | | | | | | : : | ______ | | | |

| | +--=--[_c3d0_] : | | | | | | : : | [_c3d0_]--=--+ | |

| | | :.......................: | | | | | | : : |_______________________| | | |

| | | | | | | | | : : | | |

| | | _______________________ | | | | | | : : ......................... | | |

| | | | [ vm07-rhel6 ] | | | | | | | : : : [ vm07-rhel6 ] : | | |

| | | | ____________________| | | | | | | : : :____________________ : | | |

| | | | | eth0 =-------------/ | | | | | : :-------------= eth0 | : | | |

| | | | | 10.255.1.7 || | | | | | : :| 10.255.1.7 | : | | |

| | | | | ..:..:..:..:..:.. || | | | | | : :| ..:..:..:..:..:.. | : | | |

| | | | |___________________|| | | | | | : :|___________________| : | | |

| | | | _____ | | | | | | : : _____ : | | |

| +--|--=--[_vda_] | | | | | | : : [_vda_]--=--|--+ |

| | | |_______________________| | | | | | : :.......................: | | |

| | | | | | | | : | | |

| | | _______________________ | | | | | : ......................... | | |

| | | | [ vm08-sles11 ] | | | | | | : : [ vm08-sles11 ] : | | |

| | | | ____________________| | | | | | : :____________________ : | | |

| | | | | eth0 =---------------/ | | | | :---------------= eth0 | : | | |

| | | | | 10.255.1.8 || | | | | :| 10.255.1.8 | : | | |

| | | | | ..:..:..:..:..:.. || | | | | :| ..:..:..:..:..:.. | : | | |

| | | | |___________________|| | | | | :|___________________| : | | |

| | | | _____ | | | | | : _____ : | | |

| +--|--=--[_vda_] | | | | | : [_vda_]--=--|--+ |

| | | |_______________________| | | | | :.......................: | | |

| | | | | | | | | |

| | | | | | | | | |

| | | | | | | | | |

| | | Storage: | | | | Storage: | | |

| | | __________ | | | | __________ | | |

| | | [_/dev/sda_] | | | | [_/dev/sda_] | | |

| | | | ___________ _______ | | | | _______ ___________ | | | |

| | | +--[_/dev/sda1_]--[_/boot_] | | | | [_/boot_]--[_/dev/sda1_]--+ | | |

| | | | ___________ ________ | | | | ________ ___________ | | | |

| | | +--[_/dev/sda2_]--[_<swap>_] | | | | [_<swap>_]--[_/dev/sda2_]--+ | | |

| | | | ___________ ___ | | | | ___ ___________ | | | |

| | | +--[_/dev/sda3_]--[_/_] | | | | [_/_]--[_/dev/sda3_]--+ | | |

| | | | ___________ ____ ____________ | | | | ____________ ____ ___________ | | | |

| | | +--[_/dev/sda5_]--[_r0_]--[_/dev/drbd0_]--+ | | +--[_/dev/drbd0_]--[_r0_]--[_/dev/sda5_]--+ | | |

| | | | | | | | | | | | | |

| | | | \----|--\ | | /--|----/ | | | |

| | | | ___________ ____ ____________ | | | | | | ____________ ____ ___________ | | | |

| | | \--[_/dev/sda6_]--[_r1_]--[_/dev/drbd1_]--/ | | | | \--[_/dev/drbd1_]--[_r1_]--[_/dev/sda6_]--/ | | |

| | | | | | | | | | | |

| | | Clustered LVM: | | | | | | Clustered LVM: | | |

| | | _________________________________ | | | | | | _________________________________ | | |

| | +--[_/dev/an-c05n01_vg0/vm02-win2012_]-----+ | | | | +--[_/dev/an-c05n01_vg0/vm02-win2012_]-----+ | |

| | | __________________________________ | | | | | | __________________________________ | | |

| | +--[_/dev/an-c05n01_vg0/vm05-freebsd9_]----+ | | | | +--[_/dev/an-c05n01_vg0/vm05-freebsd9_]----+ | |

| | | ___________________________________ | | | | | | ___________________________________ | | |

| | \--[_/dev/an-c05n01_vg0/vm06-solaris11_]---/ | | | | \--[_/dev/an-c05n01_vg0/vm06-solaris11_]---/ | |

| | | | | | | |

| | _________________________________ | | | | _________________________________ | |

| +-----[_/dev/an-c05n02_vg0/vm01-win2008_]-------------+ | | +----------[_/dev/an-c05n02_vg0/vm01-win2008_]--------+ |

| | ______________________________ | | | | ______________________________ | |

| +-----[_/dev/an-c05n02_vg0/vm03-win7_]----------------+ | | +----------[_/dev/an-c05n02_vg0/vm03-win7_]-----------+ |

| | ______________________________ | | | | ______________________________ | |

| +-----[_/dev/an-c05n02_vg0/vm04-win8_]----------------+ | | +----------[_/dev/an-c05n02_vg0/vm04-win8_]-----------+ |

| | _______________________________ | | | | _______________________________ | |

| +-----[_/dev/an-c05n02_vg0/vm07-rhel6_]---------------+ | | +----------[_/dev/an-c05n02_vg0/vm07-rhel6_]----------+ |

| | ________________________________ | | | | ________________________________ | |

| \-----[_/dev/an-c05n02_vg0/vm08-sles11_]--------------+ | | +----------[_/dev/an-c05n02_vg0/vm08-sles11_]---------/ |

| ___________________________ | | | | ___________________________ |

| /--[_/dev/an-c05n01_vg0/shared_]-------------------/ | | \----------[_/dev/an-c05n01_vg0/shared_]--\ |

| | _________ | _________________________ | ________ | |

| \--[_/shared_] | | an-s01 Switch 1 | | [_shared_]--/ |

| ____________________| |____ Back-Channel ____| |____________________ |

| | IPMI =----=_03_] Network [_04_=----= IPMI | |

| | 10.20.51.1 || |_________________________| || 10.20.51.2 | |

| _________ _____ | 00:19:99:9C:9B:9E || | an-s02 Switch 2 | || 00:19:99:9A:D8:E8 | _____ _________ |

| {_sensors_}--[_BMC_]--|___________________|| | | ||___________________|--[_BMC_]--{_sensors_} |

| ______ ______ | | VLAN ID 101 | | ______ ______ |

| | PSU1 | PSU2 | | |____ ____ ____ ____| | | PSU1 | PSU2 | |

|____________________________________________________________|______|______|_| |_03_]_[_07_]_[_08_]_[_04_| |_|______|______|____________________________________________________________|

|| || | | | | || ||

/---------------------------||-||-------------|------/ \-------|-------------||-||---------------------------\

| || || | | || || |

_______________|___ || || __________|________ ________|__________ || || ___|_______________

| UPS 1 | || || | PDU 1 | | PDU 2 | || || | UPS 2 |

| an-u01 | || || | an-p01 | | an-p02 | || || | an-u02 |

_______ | 10.20.3.1 | || || | 10.20.2.1 | | 10.20.2.2 | || || | 10.20.3.2 | _______

{_Mains_}==| 00:C0:B7:58:3A:5A |=======================||=||==| 00:C0:B7:56:2D:AC | | 00:C0:B7:59:55:7C |==||=||=======================| 00:C0:B7:C8:1C:B4 |=={_Mains_}

|___________________| || || |___________________| |___________________| || || |___________________|

|| || || || || || || ||